Data Compression Crushing Data Using Entropy Kinematicsoup

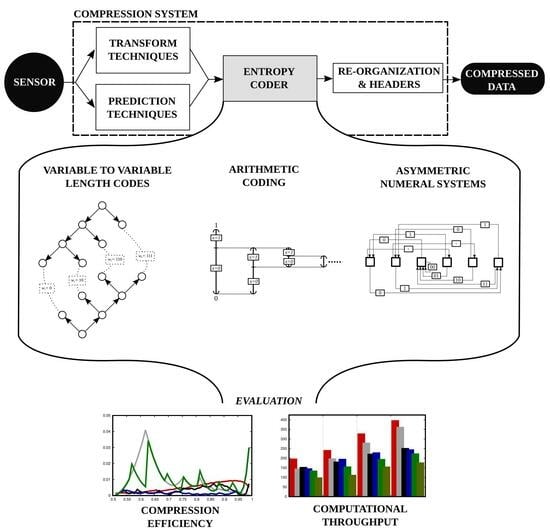

It has been quite some time since our first installment on simple data compression, and this second installment is long overdue! Compression is ubiquitous in our everyday lives. By providing insight into the complexity of a data source, the entropy metric provides a tool for evaluating the effectiveness of a data compression technique.

Shannon's discovery of the fundamental laws of data compression and transmission marks the birth of information theory. The concept of entropy in information theory describes how much information there is in a signal or event.

Suppose that the models are generated by kids using modeling software on the web, where each model must fit in a single ten-kilobyte message. Consider the probability distribution over the images rendered in this process.

Thus, in this and the next chapter, we assume that we already have digital data, and we discuss theory and techniques for further compressing this digital data.

In this thesis, I contribute to this trend by investigating relative entropy coding, a mathematical framework that generalises classical source coding theory. Concretely, relative entropy coding deals with the efficient communication of uncertain or randomised information.
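The entropy mentioned above, which measures how much information a signal or event carries, has a standard definition for a discrete source. The notation below ($X$ for the source, $p(x)$ for the probability of symbol $x$) is an assumption for illustration and does not come from the quoted texts:

```latex
H(X) = -\sum_{x} p(x)\,\log_2 p(x) \quad \text{(bits per symbol)}
```

As a worked example, a fair coin gives $H = -(0.5\log_2 0.5 + 0.5\log_2 0.5) = 1$ bit per toss, while a coin that lands heads 90% of the time gives $H = -(0.9\log_2 0.9 + 0.1\log_2 0.1) \approx 0.469$ bits per toss. The more predictable source carries less information per symbol, which is why it can be compressed further.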

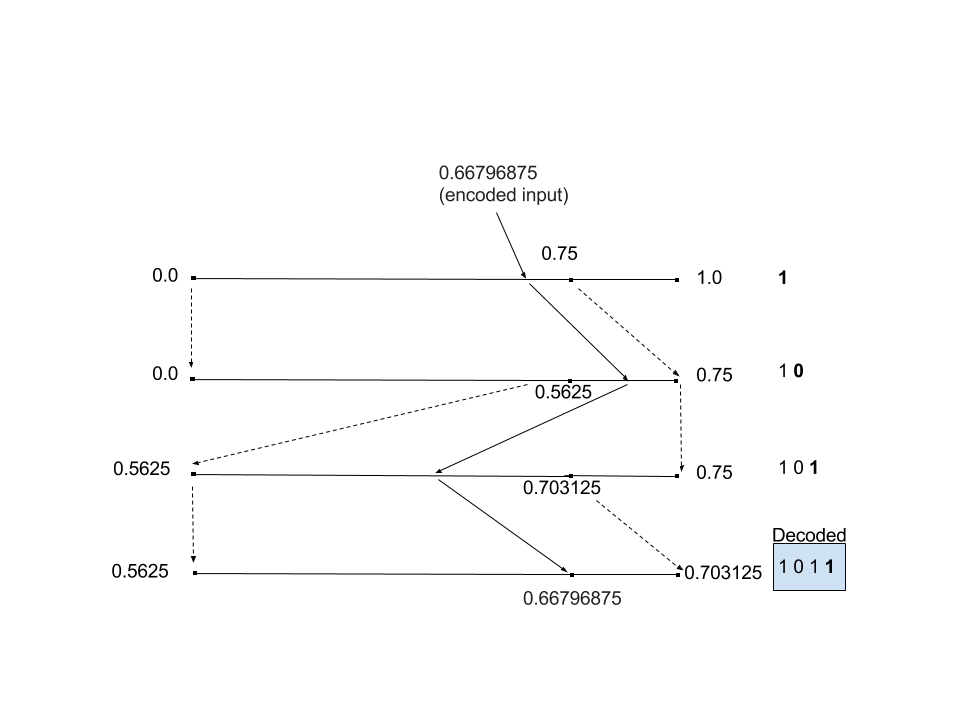

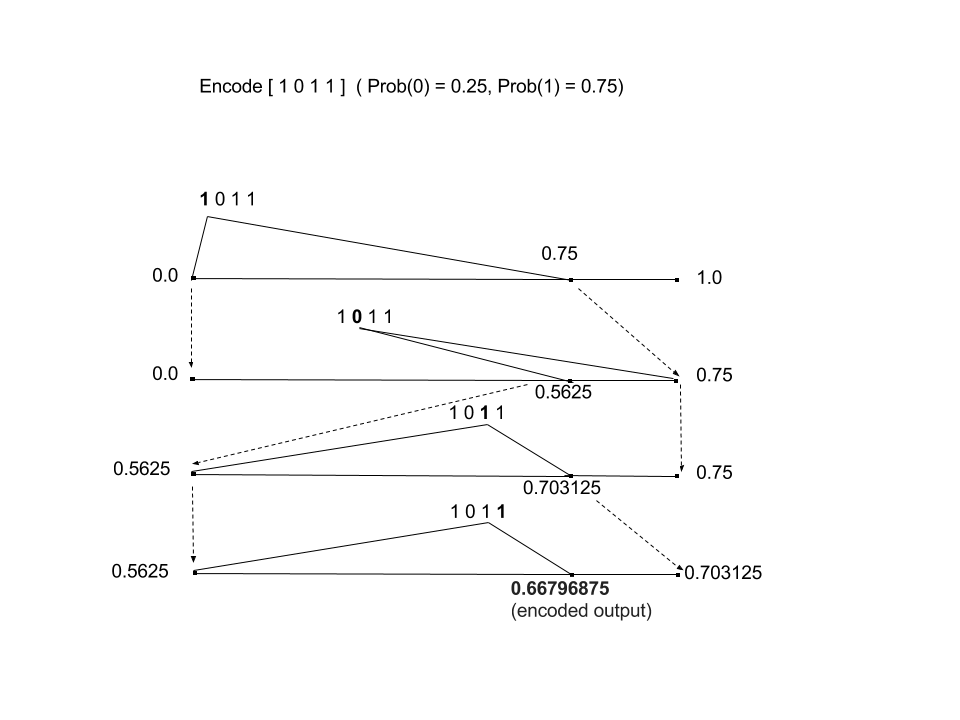

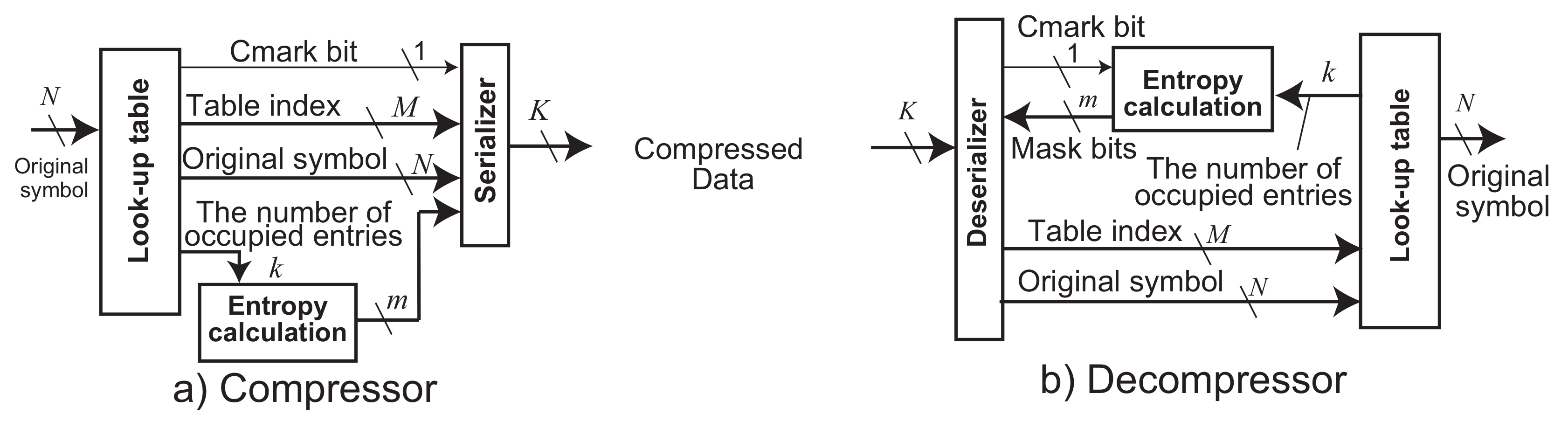

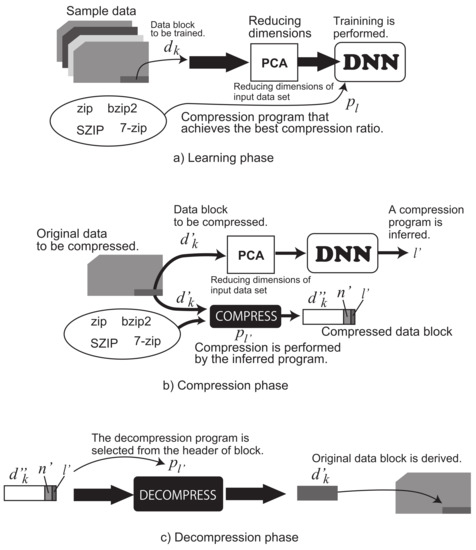

Stream Based Lossless Data Compression Applying Adaptive Entropy Coding

While calculating entropy is well understood for fully specified data, this paper explores the use of entropy for incompletely specified test data and shows how theoretical bounds on the maximum amount of test data compression can be calculated.

In this article, we give an overview of data compression, illustrate its methods, and introduce entropy, taking each in turn.

Let us now focus on an important use of the Shannon entropy, which involves the notion of a compression scheme. This will allow us to attach a concrete meaning to the Shannon entropy.

You can use entropy to find the theoretical maximum lossless compression ratio, but no, you can't use it to determine your expected compression ratio for any given compression algorithm.
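The gap between an entropy-based bound and what a particular algorithm achieves can be seen in a small experiment. The following is a minimal sketch, not a method from any of the quoted sources. It assumes an order-0 model (each byte treated as an independent draw from the byte histogram) and uses Python's standard `zlib` module as the example compressor. The function names `byte_entropy`, `order0_ratio_bound`, and `zlib_ratio` are hypothetical.

```python
import math
import zlib
from collections import Counter


def byte_entropy(data: bytes) -> float:
    """Shannon entropy of the byte histogram, in bits per byte (order-0 model)."""
    if not data:
        return 0.0
    n = len(data)
    return -sum((c / n) * math.log2(c / n) for c in Counter(data).values())


def order0_ratio_bound(data: bytes) -> float:
    """Best ratio for a coder that treats every byte as an independent draw.

    Assumption: this is a bound under the order-0 model only, not the
    true entropy of whatever process produced the data.
    """
    h = byte_entropy(data)
    return math.inf if h == 0 else 8.0 / h


def zlib_ratio(data: bytes) -> float:
    """Ratio actually achieved by zlib (DEFLATE) at its highest level."""
    return len(data) / len(zlib.compress(data, 9))


if __name__ == "__main__":
    sample = b"abcd" * 1000  # four equally likely symbols, highly repetitive
    print("entropy (bits/byte):", byte_entropy(sample))       # 2.0
    print("order-0 ratio bound:", order0_ratio_bound(sample))  # 4.0
    print("zlib ratio:", zlib_ratio(sample))
```

On this sample, zlib does far better than the order-0 figure of 4:1, because DEFLATE exploits repeated substrings that a byte histogram cannot see. The entropy estimate is only as good as the model behind it: the true entropy of the source limits every lossless scheme, but an estimate computed under one model does not predict the ratio a specific algorithm will reach.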

Adaptive Lossless Image Data Compression Method Inferring Data Entropy

Fast And Efficient Entropy Coding Architectures For Massive Data
