Cypress Programming MPI HPC

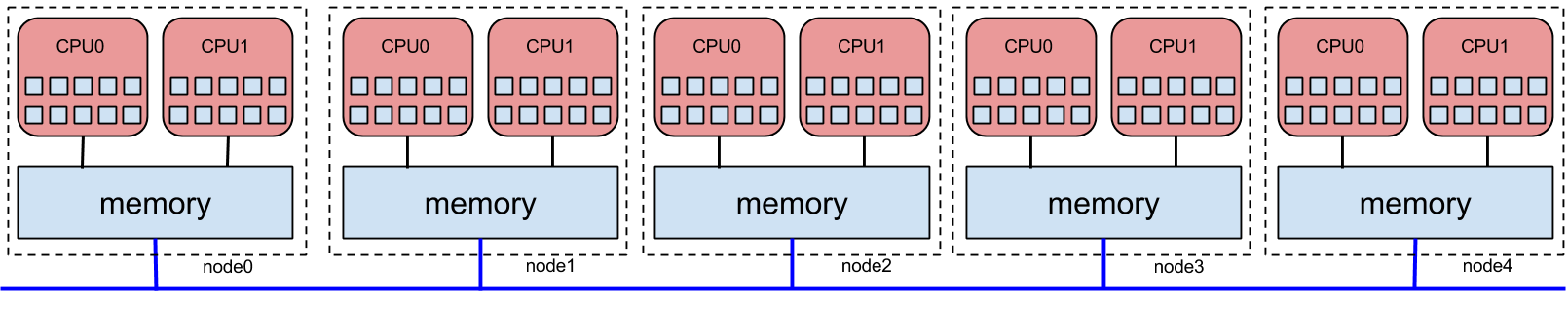

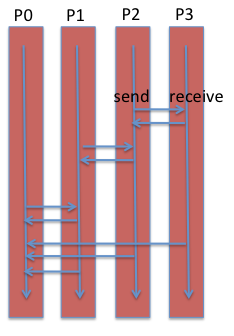

In MPI's execution model, all processors execute the same program, but with different data (there is no fork and join). The variables on each processor are all private, and data that must be shared is explicitly sent between processors.

MPI has become the de facto standard for programming HPC cluster systems and is often the only option available. Many implementations exist, both open source and proprietary. Version 3.1 of the standard was released in 2015 and has since been superseded by MPI 4.0 (2021) and MPI 4.1 (2023).

The MPI modules provide compiler wrappers that wrap around the C, C++, and Fortran compilers to set up the compiler and linking options that the MPI library needs. As a general rule, it is best to use these wrappers for compiling.

We have provided a set of working MPI examples, ranging from a simple "hello world" MPI program to an implementation of the "halo exchange" message-passing pattern you need for the MPI assignment.

This workshop is intended to give C and Fortran programmers a hands-on introduction to MPI programming. Both days are compact, to accommodate multiple time zones, but packed with useful information and lab exercises.

Regular C instructions that are to be run locally by each process, e.g. preprocessing that is the same for all processes, can be run outside the MPI context. A simple first program makes each process print its name and rank as well as the total number of processes.

Abstract: This paper presents a comprehensive comparison of three dominant parallel programming models in high-performance computing (HPC): Message Passing Interface (MPI), Open Multi-Processing (OpenMP), and Compute Unified Device Architecture (CUDA).

MPI supports both C and Fortran. Names begin with MPI_ to avoid clashes with other libraries. Fortran is case-insensitive, but C is case-sensitive. Fortran routines have an ierror parameter to return the error code; MPI_SUCCESS (zero) indicates success. Numeric codes are non-portable, so use the MPI_Error_string routine to translate them into text.

Unlike shared-memory models, MPI requires programs to explicitly send and receive data between tasks. Most applications must be written with MPI support from the outset, so standard serial code won't benefit from MPI unless rewritten accordingly.


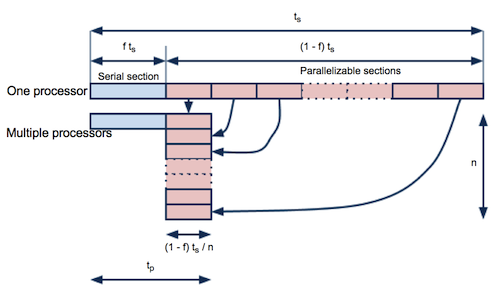


Cypress Programming Speedupscaling Hpc
