CVPR Paper: Disentangling Adversarial Robustness and Generalization

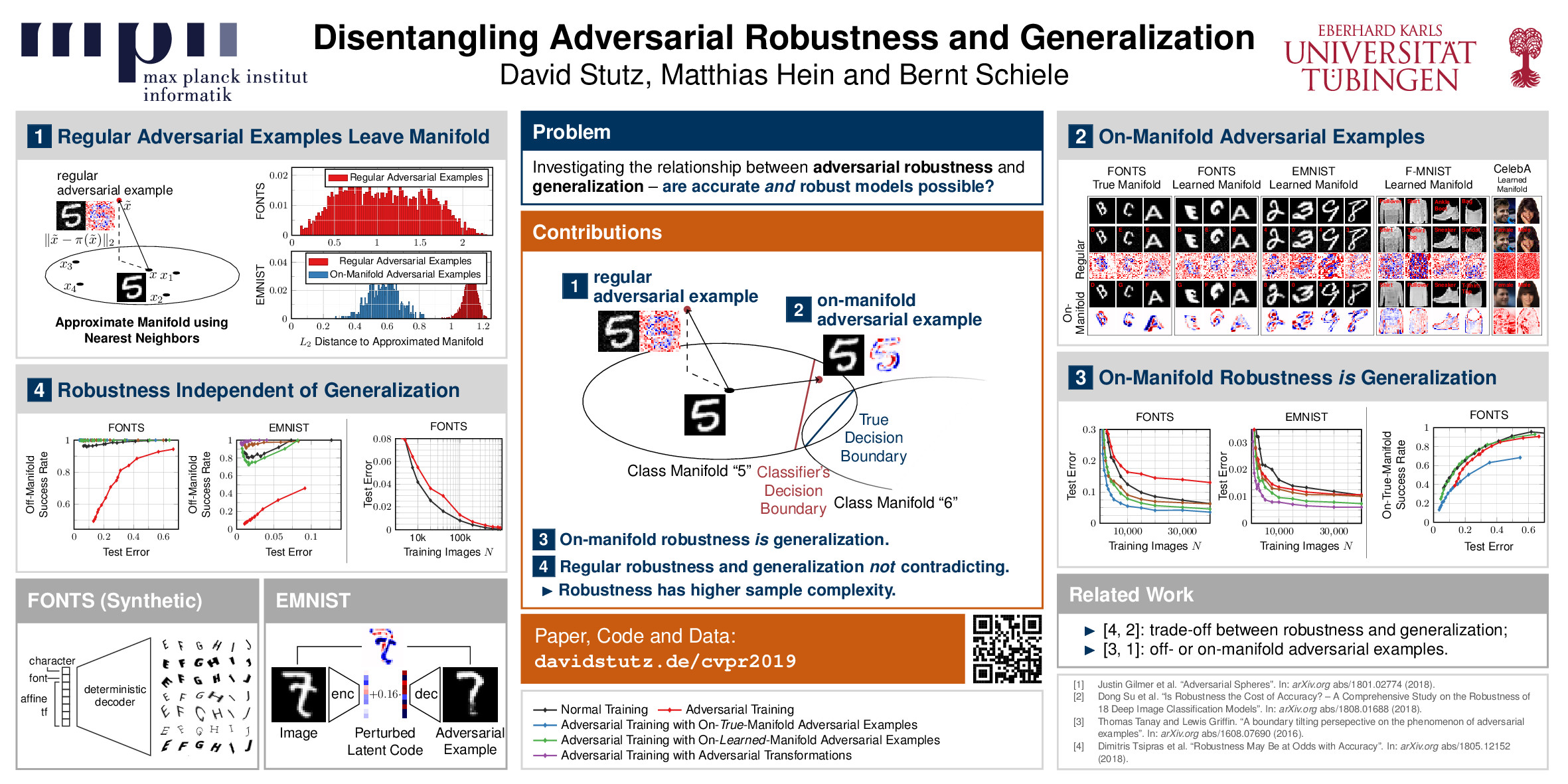

The paper is by David Stutz and two co-authors. For adversarial training on regular adversarial examples, the commonly observed trade-off between robustness and generalization is explained by the tendency of adversarial examples to leave the data manifold.
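To make "leaving the manifold" concrete, here is a minimal sketch under toy assumptions that are not taken from the paper: the data manifold is the x1-axis in R^2, the classifier is a hypothetical linear model with weights `w = [1.0, 5.0]`, and the attack is the standard one-step L-infinity FGSM, swapped in purely for illustration. The perturbation that flips the prediction also pushes the sample off the manifold.

```python
# Toy setting (hypothetical, not from the paper): the data manifold is the
# x1-axis in R^2, so every clean sample has the form (t, 0) and label sign(t).


def sign(v):
    """Sign of v as a float: 1.0, -1.0, or 0.0."""
    return 1.0 if v > 0 else (-1.0 if v < 0 else 0.0)


def score(w, b, x):
    """Linear classifier score w.x + b."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def fgsm_linear(w, x, y, eps):
    """One-step L-infinity attack (FGSM) on a linear model.

    The gradient of the margin y * (w.x + b) w.r.t. x is y * w, so stepping
    by -eps * sign(y * w) gives the steepest margin decrease in the eps-ball.
    """
    return [xi - eps * sign(y * wi) for xi, wi in zip(x, w)]


def distance_to_manifold(x):
    """Euclidean distance from x to the x1-axis, i.e. |x2|."""
    return abs(x[1])


w, b = [1.0, 5.0], 0.0   # hypothetical model: correct on every (t, 0), t != 0
x, y = [1.0, 0.0], 1.0   # clean on-manifold sample with label +1
x_adv = fgsm_linear(w, x, y, eps=0.3)

print("adversarial point:", x_adv)
print("prediction clean / adversarial:", sign(score(w, b, x)), sign(score(w, b, x_adv)))
print("distance to manifold:", distance_to_manifold(x_adv))
```

The clean point is classified +1 and the perturbed point -1, and the perturbed point sits 0.3 away from the manifold along x2, a direction in which the toy data never varies.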

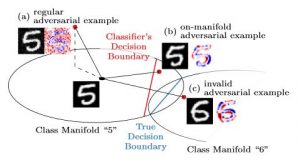

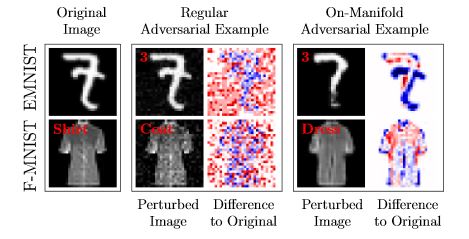

On June 1, 2019, David Stutz and others published "Disentangling Adversarial Robustness and Generalization" at the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2019. Note that the source code and/or data is based on other projects for which separate licenses apply.

We argue that on-manifold robustness is nothing different from generalization: as on-manifold adversarial examples have non-zero probability under the data distribution, they are merely generalization errors. This work assumes an underlying, low-dimensional data manifold and shows that regular robustness and generalization are not necessarily conflicting goals, which implies that both robust and accurate models are possible.
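The claim that on-manifold adversarial examples are merely generalization errors can be illustrated in a toy setting. The sketch below assumes a known, hypothetical `decoder(z)` that maps a 1-D latent code onto a parabola in R^2, and a hypothetical `classifier` whose decision boundary is slightly misplaced. The attack perturbs the latent code instead of the input, so every candidate lies on the manifold by construction; a plain grid search stands in for the gradient-based latent-space optimization that a learned generative model would typically call for. Any point it returns keeps its true label yet is misclassified, i.e., it is an ordinary error on the data distribution.

```python
# Toy setting (hypothetical): a known decoder maps a 1-D latent code z onto a
# curved manifold in R^2; the true label of decoder(z) is +1 if z > 0 else -1.


def decoder(z):
    """Hypothetical generative model g: R -> R^2 (a parabola)."""
    return (z, 0.5 * z * z)


def true_label(z):
    return 1 if z > 0 else -1


def classifier(x):
    """Hypothetical classifier with a misplaced boundary: it predicts -1
    for on-manifold points with 0 < x1 <= 0.2, which are truly +1."""
    return 1 if x[0] > 0.2 else -1


def on_manifold_attack(z, eta, steps=100):
    """Grid search over latent perturbations |delta| <= eta.

    Returns a latent code z + delta whose decoded point keeps the true label
    of z but is misclassified, or None if the grid contains no such code.
    """
    y = true_label(z)
    for i in range(steps + 1):
        delta = -eta + 2.0 * eta * i / steps
        z_adv = z + delta
        if true_label(z_adv) == y and classifier(decoder(z_adv)) != y:
            return z_adv
    return None


z = 0.3                                           # clean latent code, label +1
assert classifier(decoder(z)) == true_label(z)    # the clean point is correct
z_adv = on_manifold_attack(z, eta=0.2)
if z_adv is not None:
    print("latent code:", z_adv, "point on manifold:", decoder(z_adv))
```

Because the returned point is `decoder(z_adv)` and keeps its true label, it is a sample the data distribution can actually produce; robustness to such points therefore amounts to classifying more of the manifold correctly.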

CVPR '19 Poster: Disentangling Adversarial Robustness and Generalization

Author: Stutz, David et al.; genre: conference paper; published online: 2019; title: Disentangling Adversarial Robustness and Generalization.

Obtaining deep networks that are robust against adversarial examples and generalize well is an open problem. A recent hypothesis even states that both robust and accurate models are impossible, i.e., adversarial robustness and generalization are conflicting goals.
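Against the hypothesis that robust and accurate models are impossible, a toy counterexample may help; it is an illustration, not an experiment from the paper. Clean samples lie on the x1-axis with a margin of 1 around the boundary. Two hypothetical linear classifiers, `aligned` and `tilted`, classify every clean sample correctly, but only `tilted` puts weight on the off-manifold direction x2. For a linear model, the worst-case L-infinity perturbation of size `eps` lowers the margin by exactly `eps * ||w||_1`, so robust accuracy can be computed in closed form.

```python
# Toy setting (hypothetical): clean samples lie on the x1-axis in R^2 and are
# labeled by the sign of x1, with no sample closer than 1 to the boundary.
samples = [((t, 0.0), 1 if t > 0 else -1) for t in (-2.0, -1.5, -1.0, 1.0, 1.5, 2.0)]


def margin(w, b, x, y):
    """Signed margin y * (w.x + b) of a linear classifier."""
    return y * (sum(wi * xi for wi, xi in zip(w, x)) + b)


def clean_accuracy(w, b):
    """Fraction of clean samples with positive margin."""
    return sum(margin(w, b, x, y) > 0 for x, y in samples) / len(samples)


def robust_accuracy(w, b, eps):
    """Exact L-infinity robust accuracy for a linear model: the worst-case
    perturbation of size eps lowers every margin by eps * ||w||_1."""
    l1 = sum(abs(wi) for wi in w)
    return sum(margin(w, b, x, y) - eps * l1 > 0 for x, y in samples) / len(samples)


aligned = ([1.0, 0.0], 0.0)   # ignores the off-manifold direction x2
tilted = ([1.0, 5.0], 0.0)    # puts large weight on the off-manifold direction x2

for name, (w, b) in [("aligned", aligned), ("tilted", tilted)]:
    print(name, clean_accuracy(w, b), robust_accuracy(w, b, eps=0.5))
```

With `eps = 0.5`, both models reach clean accuracy 1.0, while robust accuracy is 1.0 for `aligned` and 0.0 for `tilted`. In this toy, the missing regular robustness comes from the weight on the off-manifold direction, not from fitting the data, so accuracy and robustness do not conflict.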
