Custom LLM Using Retrieval Augmented Generation A Cx

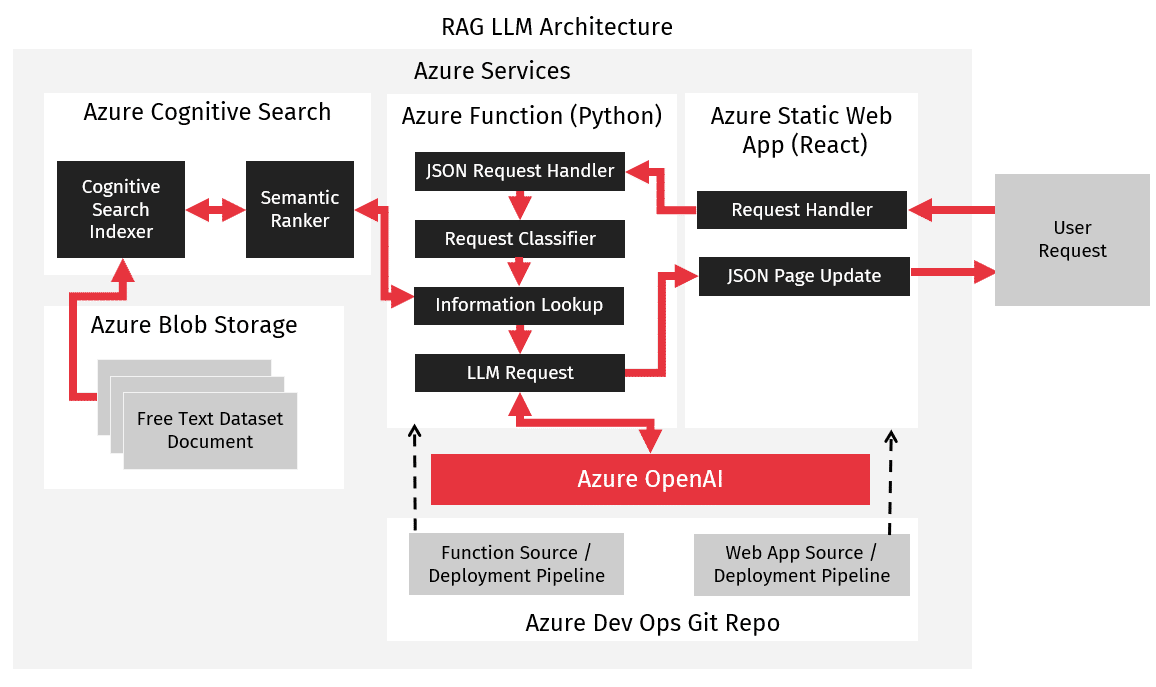

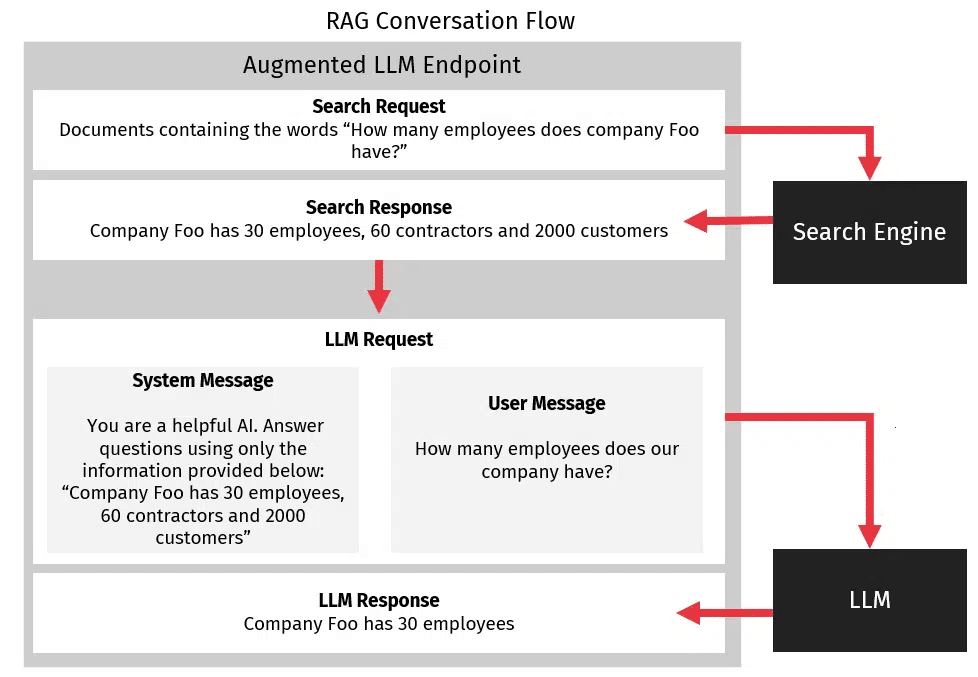

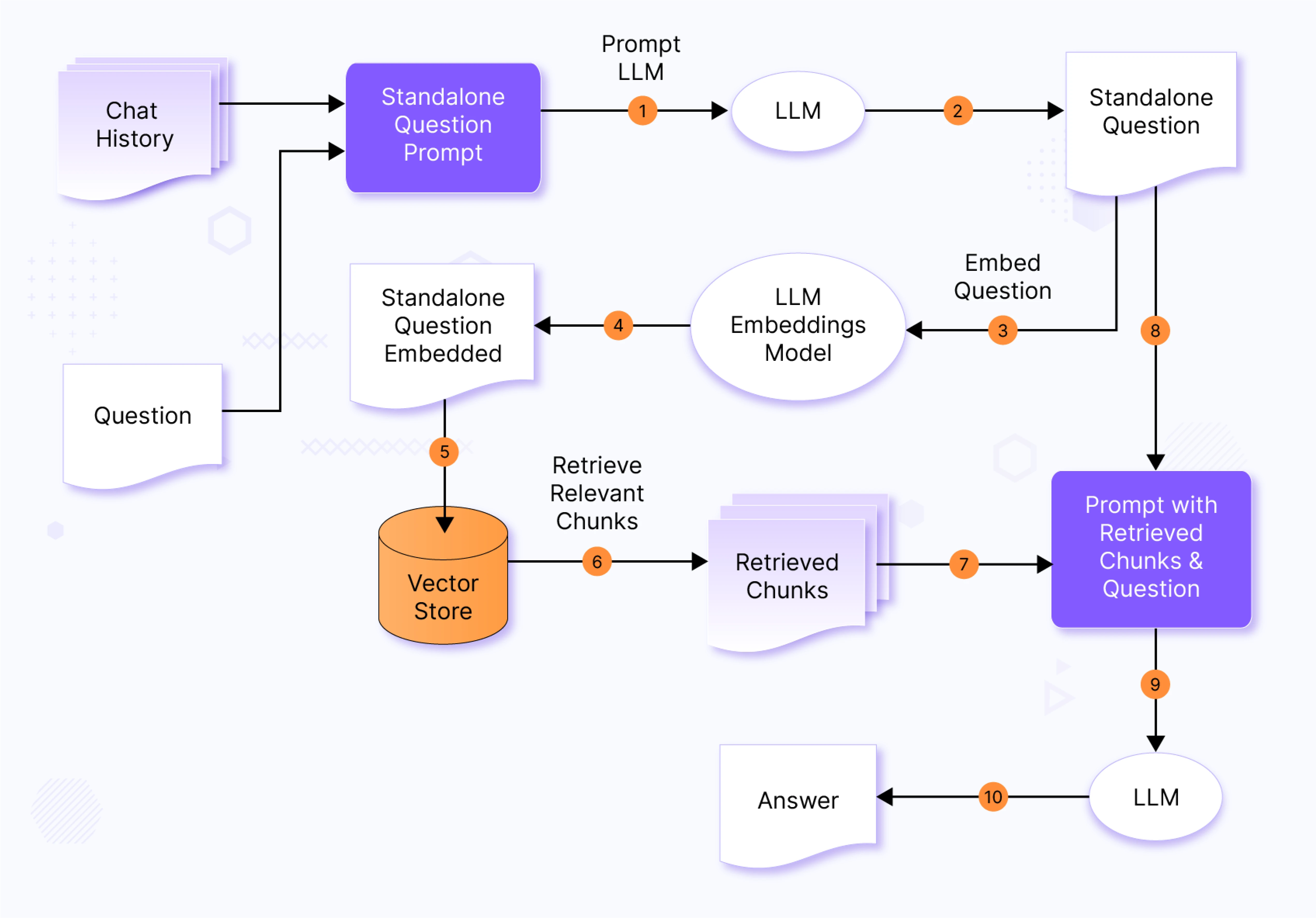

We used retrieval augmented generation (RAG) to implement a custom LLM endpoint for answering internal questions, speeding up documentation lookup, and creating company-specific documentation and marketing posts. RAG augments LLM queries with internal data instead of retraining the model. This involved building a retrieval system that fetches internal documentation so that user queries can be answered accurately.
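As a rough illustration of the retrieval step described above, the sketch below ranks a handful of internal documents against a user query. It is not the implementation from the post: the `DOCS` corpus, the function names, and the bag-of-words cosine scoring are all assumptions chosen to keep the example dependency-free. A production system would more likely use embedding vectors and a vector store.

```python
import math
import re
from collections import Counter

# Hypothetical internal documents; the original post does not list its corpus.
DOCS = {
    "vpn-setup": "To connect to the company VPN install the client and sign in with SSO",
    "expense-policy": "Submit expense reports within 30 days using the finance portal",
    "onboarding": "New hires receive a laptop and complete security training in week one",
}

def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query, docs, k=2):
    """Return the ids of up to k documents most similar to the query."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(text))), doc_id) for doc_id, text in docs.items()]
    scored.sort(reverse=True)
    # Drop documents that share no words with the query at all.
    return [doc_id for score, doc_id in scored[:k] if score > 0]

if __name__ == "__main__":
    print(retrieve("How do I connect to the VPN?", DOCS, k=1))
```

The text of the returned documents would then be passed to the LLM together with the user's question, which is the "augmentation" part of RAG.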

Using Retrieval Augmented Generation To Enhance General LLM A Cx

A general LLM can only answer from the data it was trained on. Retrieval augmented generation (RAG) helps overcome this by enabling language models to consult external knowledge sources in real time. Read the latest blog post from Branden Crawford. In this article, he explores creating a custom LLM with RAG.

Customizing LLMs can be done easily and without training by using retrieval augmented generation, better known as RAG. RAG is a technique used to provide custom data to an LLM so that it can answer queries about data on which it was not trained. When building a custom LLM solution, RAG is typically the easiest and most cost-effective approach.
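To make "providing custom data to an LLM" concrete, here is a minimal sketch of how retrieved passages might be folded into a prompt. The function name `build_prompt`, the instruction wording, and the `max_chars` budget are illustrative assumptions, not details from the post. The resulting string would be sent to whichever LLM endpoint is in use.

```python
def build_prompt(question, passages, max_chars=2000):
    """Combine retrieved passages and the user question into one prompt string."""
    context_parts = []
    used = 0
    for i, passage in enumerate(passages, start=1):
        entry = f"[{i}] {passage.strip()}"
        if used + len(entry) > max_chars:
            break  # stay within a rough context budget (assumed limit)
        context_parts.append(entry)
        used += len(entry)
    context = "\n".join(context_parts) if context_parts else "(no relevant documents found)"
    return (
        "Answer the question using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )

if __name__ == "__main__":
    print(build_prompt(
        "When are expense reports due?",
        ["Submit expense reports within 30 days using the finance portal."],
    ))
```

Because the model weights never change, updating the knowledge available to the model only requires updating the passages that are retrieved, which is why this approach avoids retraining.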

Retrieval Augmented Generation Using Your Data With LLMs

Instead of relying solely on its static, pre-trained knowledge (its parametric memory), an LLM in a RAG system first retrieves information relevant to the user's query from a knowledge base and then uses that information to generate its response. This makes RAG one of the most effective ways to ground LLMs in external knowledge.

Learn how retrieval augmented generation works, why enterprises are adopting it, and how Kapture uses RAG to power faster, more accurate CX at scale.

Our tools allow you to ingest, parse, index, and process your data and quickly implement complex query workflows that combine data access with LLM prompting. The most popular example of context augmentation is retrieval augmented generation, or RAG, which combines context with LLMs at inference time.
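The ingest, parse, and index steps mentioned above can be sketched in plain Python. Everything here is an assumption made for illustration: the word-window chunking, the inverted index, and the names `chunk_text`, `build_index`, and `lookup` do not come from any of the tools referenced in the text, which typically use embeddings and a vector index instead.

```python
import re
from collections import defaultdict

def chunk_text(text, size=40, overlap=10):
    """Split text into windows of `size` words that overlap by `overlap` words."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks

def build_index(documents, size=40, overlap=10):
    """Return (chunks, index): a chunk list plus a token -> chunk positions map."""
    chunks = []
    index = defaultdict(set)
    for doc_id, text in documents.items():
        for chunk in chunk_text(text, size, overlap):
            position = len(chunks)
            chunks.append((doc_id, chunk))
            for token in re.findall(r"[a-z0-9]+", chunk.lower()):
                index[token].add(position)
    return chunks, index

def lookup(query, chunks, index):
    """Return chunks ranked by how many distinct query tokens they contain."""
    hits = defaultdict(int)
    for token in set(re.findall(r"[a-z0-9]+", query.lower())):
        for position in index.get(token, ()):
            hits[position] += 1
    ranked = sorted(hits, key=lambda p: (-hits[p], p))
    return [chunks[p] for p in ranked]

if __name__ == "__main__":
    docs = {  # hypothetical documents
        "d1": "reset your password in the portal",
        "d2": "book travel through the agency",
    }
    chunks, index = build_index(docs)
    print(lookup("password reset", chunks, index))
```

Overlapping chunks are a common design choice because a sentence that straddles a chunk boundary still appears whole in at least one chunk, at the cost of indexing some words twice.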
