Convolutional Layer Standards Using ReLU and Batch Normalization

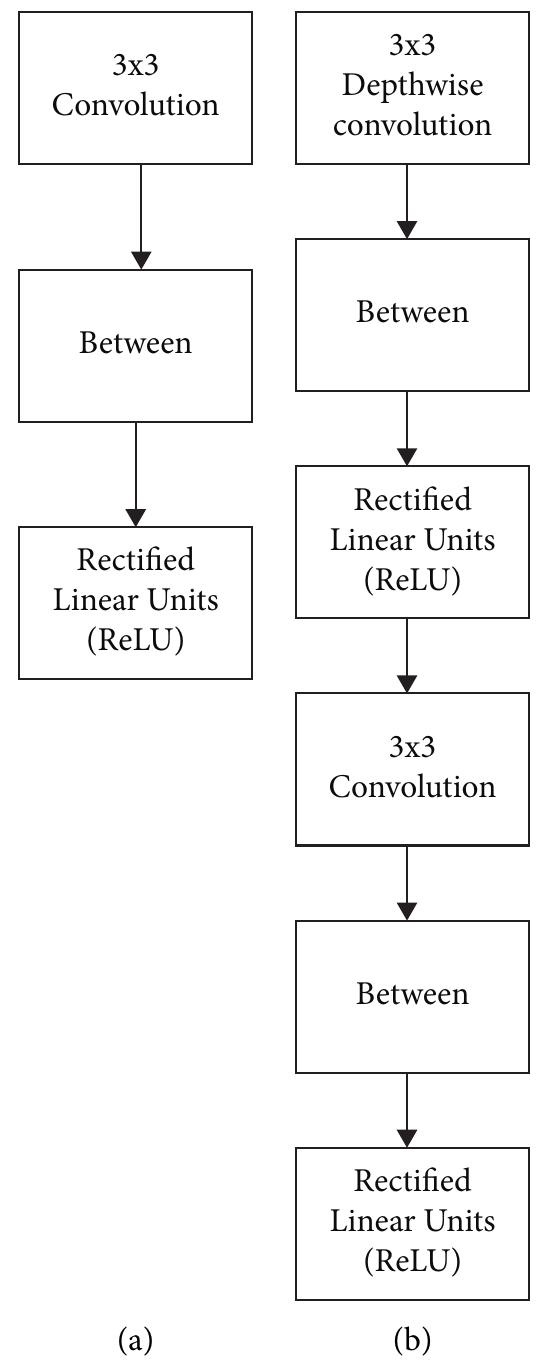

A Factorized Layer With Depthwise Convolution, 1×1 Pointwise

This page explains why the conv → batchnorm → ReLU ordering is standard in CNNs, grounded in mathematical reasoning and empirical results from modern deep learning architectures. It also covers how batch normalization is applied in feed-forward and convolutional neural networks, and how and why it improves both the models and their training speed.
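The conv → batchnorm → ReLU ordering can be sketched in PyTorch as below. This is a minimal illustration under stated assumptions, not code from any of the architectures discussed: the class name `ConvBNReLU`, the channel counts, and the input size are hypothetical choices made for the example.

```python
import torch
import torch.nn as nn


class ConvBNReLU(nn.Sequential):
    """Conv2d -> BatchNorm2d -> ReLU (hypothetical helper for illustration)."""

    def __init__(self, in_channels: int, out_channels: int, kernel_size: int = 3):
        super().__init__(
            # bias=False: batch normalization subtracts the per-channel mean,
            # which cancels a constant bias, and its own shift parameter
            # (beta) takes over that role.
            nn.Conv2d(in_channels, out_channels, kernel_size,
                      padding=kernel_size // 2, bias=False),
            nn.BatchNorm2d(out_channels),
            nn.ReLU(inplace=True),
        )


torch.manual_seed(0)
block = ConvBNReLU(in_channels=3, out_channels=8)
x = torch.randn(4, 3, 16, 16)  # (batch, channels, height, width)
y = block(x)
print(y.shape)  # torch.Size([4, 8, 16, 16])
```

Dropping the convolution bias is a common convention when a normalization layer follows immediately; it changes nothing about what the block can represent.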

One paper investigates the effect of BatchNorm on the resulting hidden representations, that is, the vectors of activation values formed as samples are processed at each hidden layer.

GoogLeNet was one of the first architectures to focus on efficiency, using 1×1 bottleneck convolutions and global average pooling instead of fully connected layers. ResNet showed how to train extremely deep networks.

In a CNN, each layer receives inputs from multiple channels (feature maps) and processes them through convolutional filters. Batch normalization operates on each feature map separately, normalizing the activations with statistics computed across the mini-batch (and, for convolutional layers, across all spatial positions of that feature map). Both batch normalization (BN) and layer normalization (LN) can be implemented in PyTorch; a typical demonstration pairs a simple feed-forward network using BN with an RNN using LN.
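A feed-forward network with BN and an RNN with LN might look like the sketch below, written with standard `torch.nn` modules. The class names, layer sizes, and random inputs are assumptions made for illustration. Applying `LayerNorm` to the RNN outputs is a simplification: some layer-normalized RNN variants normalize inside the recurrence instead. The last few lines check the per-feature-map behaviour of `BatchNorm2d` in training mode.

```python
import torch
import torch.nn as nn


class FeedForwardBN(nn.Module):
    """Hypothetical feed-forward classifier: Linear -> BatchNorm1d -> ReLU."""

    def __init__(self, in_features=20, hidden=32, num_classes=3):
        super().__init__()
        self.net = nn.Sequential(
            nn.Linear(in_features, hidden),
            nn.BatchNorm1d(hidden),   # statistics over the batch dimension
            nn.ReLU(),
            nn.Linear(hidden, num_classes),
        )

    def forward(self, x):
        return self.net(x)


class RNNWithLN(nn.Module):
    """Hypothetical RNN whose outputs are layer-normalized."""

    def __init__(self, input_size=10, hidden_size=16):
        super().__init__()
        self.rnn = nn.RNN(input_size, hidden_size, batch_first=True)
        self.norm = nn.LayerNorm(hidden_size)  # statistics over the features

    def forward(self, x):
        out, _ = self.rnn(x)    # (batch, time, hidden)
        return self.norm(out)   # each sample and time step normalized alone


torch.manual_seed(0)
ff_out = FeedForwardBN()(torch.randn(8, 20))     # shape (8, 3)
rnn_out = RNNWithLN()(torch.randn(8, 5, 10))     # shape (8, 5, 16)

# Per-feature-map check: in training mode, BatchNorm2d normalizes each
# channel using all batch entries and spatial positions of that channel.
bn = nn.BatchNorm2d(4)
feature_maps = torch.randn(8, 4, 6, 6) * 3.0 + 5.0
normalized = bn(feature_maps)
channel_means = normalized.mean(dim=(0, 2, 3))
channel_stds = normalized.std(dim=(0, 2, 3), unbiased=False)
```

After normalization, `channel_means` is close to 0 and `channel_stds` close to 1 for every channel, even though the input was shifted and scaled. BN depends on the other samples in the batch, whereas LN normalizes each sample independently, which is one reason LN is often preferred for recurrent models.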

A CBL Combination Of Convolutional Layer Batch Normalization

Batch normalization is used in the hidden layers of deep networks to normalize the feature-map activations from one layer before they are passed to the next layer; it also acts as a regularizer. As a layer, it normalizes its inputs by applying a transformation that keeps the output mean close to 0 and the output standard deviation close to 1. Importantly, batch normalization works differently during training and during inference: during training it normalizes with the mean and variance of the current batch, while during inference it uses moving averages of those statistics accumulated during training.

The conv → batchnorm → ReLU ordering is not undisputed. One study reports that for convolutional neural networks (CNNs) without skip connections, it is optimal to apply the ReLU activation before batch normalization, as a result of higher gradient flow.

Batch normalization also appears in complex-valued networks. CVMIDNet, a novel complex-valued CNN-based deep learning model with residual learning for medical image denoising, is described by its authors as the first of its kind to be proposed and implemented. It uses complex-valued convolutional layers, complex-valued batch normalization, and CReLU activation to remove Gaussian noise from chest X-ray images.
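The difference between training and inference behaviour can be made concrete with a toy, pure-Python batch normalization over a single scalar feature. This is a sketch under simplifying assumptions, not any library's implementation: the class `BatchNorm1D`, its `momentum` default, and the example batch are hypothetical, and the running variance here uses the biased batch variance.

```python
import math


class BatchNorm1D:
    """Toy batch normalization for one scalar feature per sample."""

    def __init__(self, momentum=0.1, eps=1e-5):
        self.gamma, self.beta = 1.0, 0.0            # learnable scale and shift
        self.running_mean, self.running_var = 0.0, 1.0
        self.momentum, self.eps = momentum, eps
        self.training = True

    def __call__(self, batch):
        if self.training:
            # Training: use the statistics of the current mini-batch ...
            mean = sum(batch) / len(batch)
            var = sum((v - mean) ** 2 for v in batch) / len(batch)
            # ... and update the moving averages kept for inference.
            self.running_mean += self.momentum * (mean - self.running_mean)
            self.running_var += self.momentum * (var - self.running_var)
        else:
            # Inference: use the stored moving averages, so the output for
            # one sample does not depend on the rest of the batch.
            mean, var = self.running_mean, self.running_var
        return [self.gamma * (v - mean) / math.sqrt(var + self.eps) + self.beta
                for v in batch]


bn = BatchNorm1D()
train_out = bn([2.0, 4.0, 6.0, 8.0])   # normalized with batch mean 5, var 5

bn.training = False
eval_out = bn([2.0, 4.0, 6.0, 8.0])    # normalized with running mean 0.5, var 1.4
```

In training mode the outputs have zero mean and roughly unit variance. In inference mode the same inputs give different outputs, because after a single update the running averages (0.5 and 1.4) are still far from the batch statistics; they converge only after many training batches.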

