Conversions To Apache Arrow Format Senx

The `->ARROW` function converts a list of GTS (Geo Time Series) into the Arrow streaming format, returned as a byte array. At the same time, it moves the data off-heap so that other processes can pick up the data buffers with zero copy. The supported input types are described in the conversion table below. The output is a bytes representation of an Arrow table, containing a map of metadata and the columns.

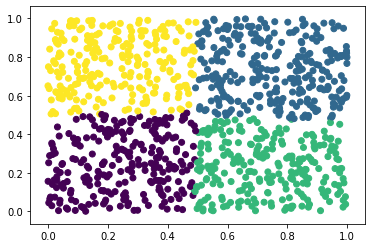

Apache Arrow defines a language-independent columnar memory format for flat and nested data, organized for efficient analytic operations on modern hardware such as CPUs and GPUs. Different tools can share this format without expensive conversion steps, which matters because data conversion is often the hidden tax in analytics. When two systems communicate, each typically converts its data into a standard format before transferring it, and this process incurs serialization and deserialization costs. The idea behind Apache Arrow is to provide a highly efficient format for processing within a single system.
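The difference between a row-oriented and a columnar layout can be illustrated with the Python standard library alone. This is only a sketch of the general idea, not Arrow's actual memory layout; the field names and values are made up for illustration.

```python
from array import array

# Row-oriented: one record per entry, fields interleaved.
rows = [
    {"timestamp": 1, "value": 20.5},
    {"timestamp": 2, "value": 21.0},
    {"timestamp": 3, "value": 21.5},
]

# Column-oriented: one contiguous, typed buffer per field.
columns = {
    "timestamp": array("q", (r["timestamp"] for r in rows)),  # signed 64-bit ints
    "value": array("d", (r["value"] for r in rows)),          # 64-bit floats
}

# An analytic operation only scans the buffer it needs.
mean_value = sum(columns["value"]) / len(columns["value"])

# A memoryview exposes the same buffer to another consumer without copying.
view = memoryview(columns["value"])
columns["value"][0] = 19.5  # the change is visible through the view
print(mean_value, view[0], view.nbytes)
```

Because each column is a single typed buffer, a consumer that understands the layout can read it in place, which is the property that lets tools exchange columnar data without a conversion step.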

The official Arrow libraries enable third-party projects to work with Arrow data without having to implement the Arrow columnar format themselves. In Spark, for example, processed data is converted back to Arrow format and sent to the JVM, where it becomes a Spark DataFrame again; this streamlined process significantly reduces latency and memory usage. Arrow is also commonly paired with Parquet, an open-standards-based file format widely used by big data systems, while Arrow itself serves as a multi-language toolbox designed for efficient analysis and transport of large datasets.
