Continuous Perception Benchmark

To fill the gap left by existing benchmarks, the Continuous Perception Benchmark (CPB) aims to build a video question answering dataset that requires continuous processing of video frames. It is also referred to as CP-Bench: a deliberately minimalistic yet diagnostic evaluation of a model's ability to integrate visual information over time, introduced to reveal a blind spot in current models.

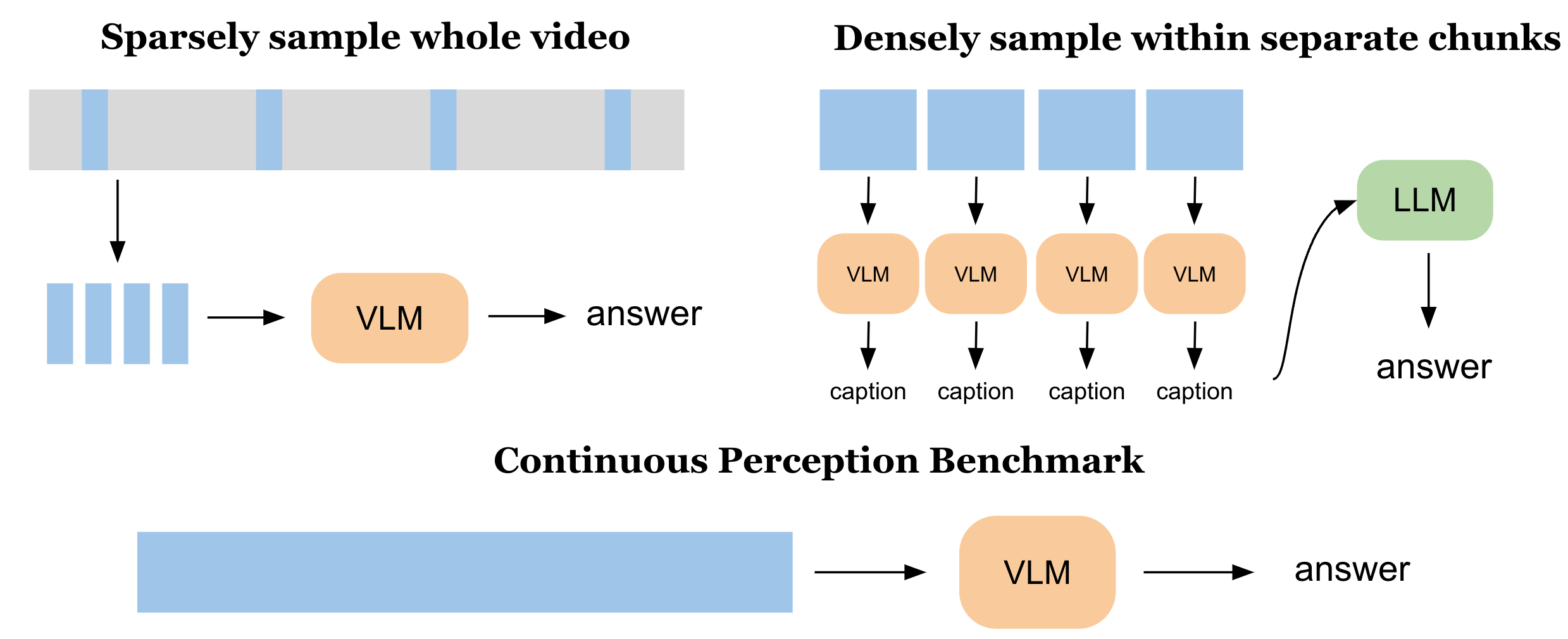

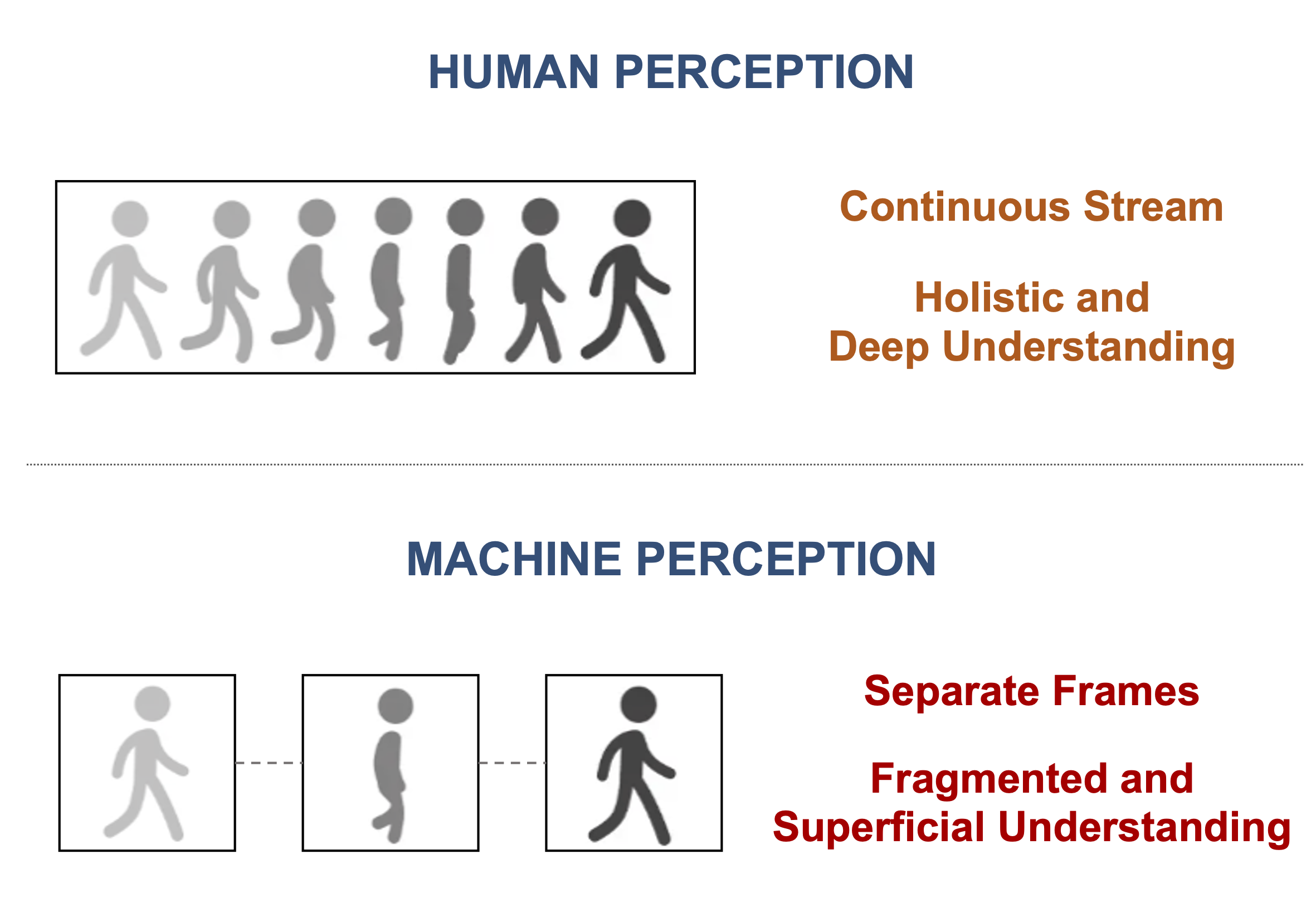

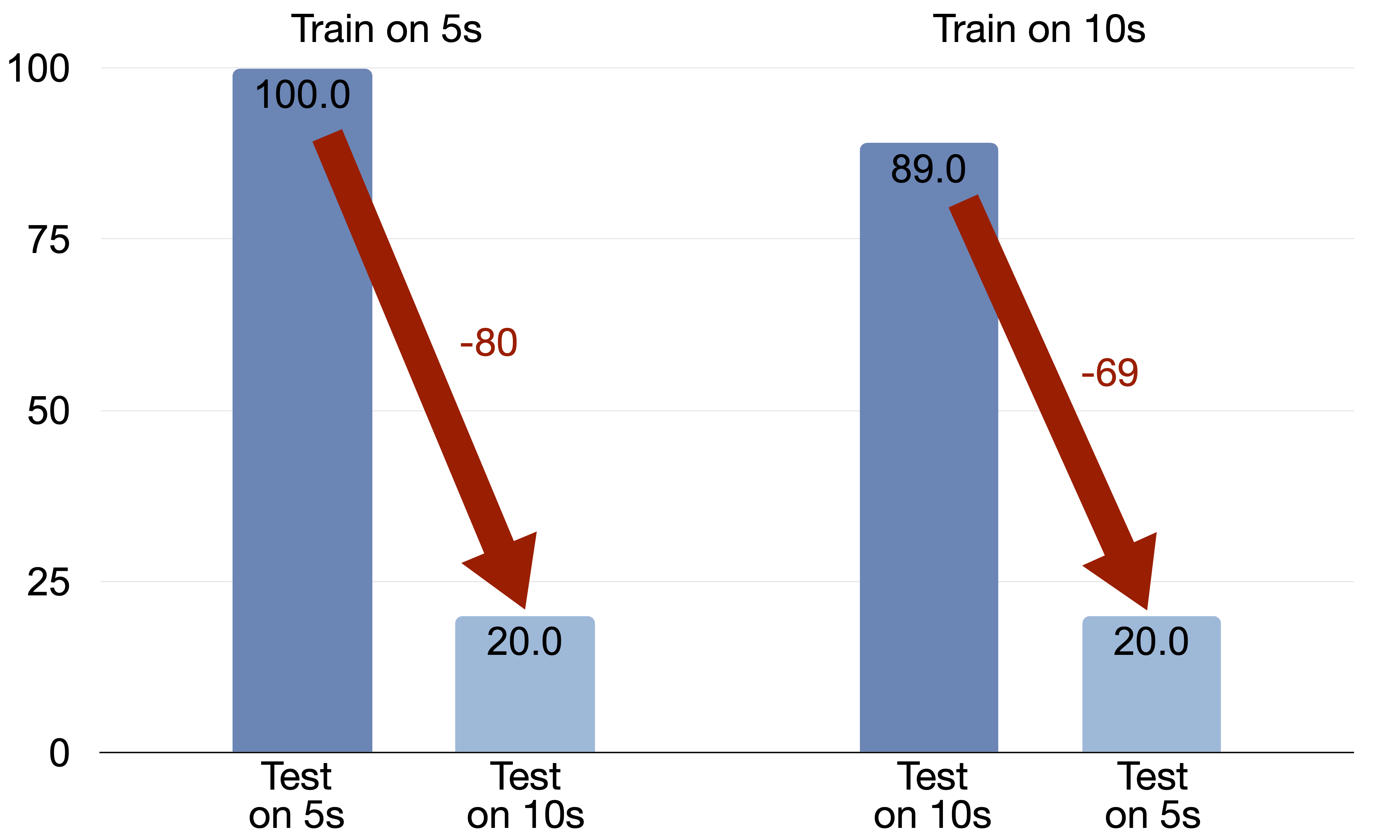

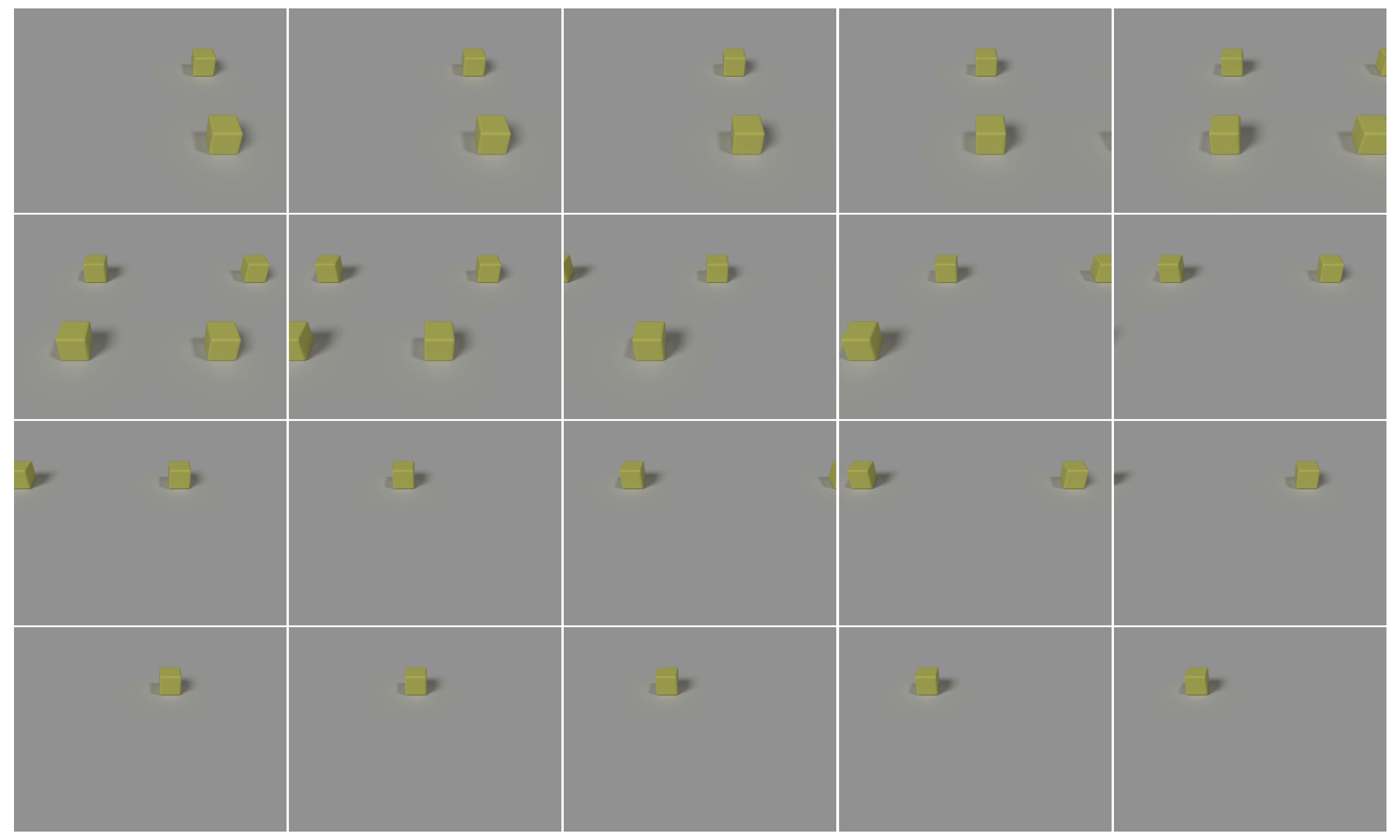

Continuous Perception Matters: Diagnosing Temporal Integration Failures

To facilitate the development of models that process video continuously, the authors propose the Continuous Perception Benchmark, a video question answering task that cannot be solved by focusing solely on a few frames or by captioning small chunks and then summarizing them with a language model. CP-Bench is a diagnostic dataset designed to evaluate whether modern vision-language and multimodal models can integrate continuous visual information over time, an ability that is central to human visual perception but largely absent in contemporary architectures.

A related benchmark, RTV-Bench, is a fine-grained benchmark for online streaming video reasoning with multimodal large language models (MLLMs). It targets continuous perception, understanding, and reasoning over long, streaming videos, and contains 552 videos (167.2 hours) and 4,631 high-quality QA pairs.

Approaches that convert asynchronous event streams into synchronized frames and ignore perception latency create a critical gap between benchmarks and real-world performance.

The Continuous Perception Benchmark dataset was created by Stanford University, aiming to advance the continuous perception capabilities of video understanding models.

