Content Moderation 06

Your mission is Operation Safe Harbor: implement robust content moderation and AI safety controls for your interview agent. As your agents process resumes and conduct interviews, it is critical to prevent harmful content, uphold professional standards, and protect sensitive data. In practice, platforms conduct content moderation through various methods. In this paper, we consider two content moderation formats, pre-moderation and post-moderation, which differ in whether moderation takes place before or after the content's publication.
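The difference between the two formats can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the `Board` class, the `is_acceptable` check, and the `BLOCKED_TERMS` list are all hypothetical names introduced here, and a real system would replace the keyword check with a classifier or a moderation service.

```python
from dataclasses import dataclass, field

# Hypothetical blocklist used only for illustration.
BLOCKED_TERMS = {"spam-link", "slur-example"}


def is_acceptable(text: str) -> bool:
    """Toy check: reject text containing any blocked term (case-insensitive)."""
    lowered = text.lower()
    return not any(term in lowered for term in BLOCKED_TERMS)


@dataclass
class Board:
    """Hypothetical publishing surface that supports both moderation formats."""
    published: list = field(default_factory=list)

    def submit_pre_moderated(self, text: str) -> bool:
        """Pre-moderation: check first, publish only if the check passes."""
        if is_acceptable(text):
            self.published.append(text)
            return True
        return False

    def submit_post_moderated(self, text: str) -> None:
        """Post-moderation: publish immediately and leave review for later."""
        self.published.append(text)

    def review_published(self) -> list:
        """Post-moderation sweep: take down published items that fail the check."""
        removed = [t for t in self.published if not is_acceptable(t)]
        self.published = [t for t in self.published if is_acceptable(t)]
        return removed
```

With pre-moderation, a failing submission never becomes visible. With post-moderation, it is visible until `review_published` runs, which trades a window of exposure for faster publication.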

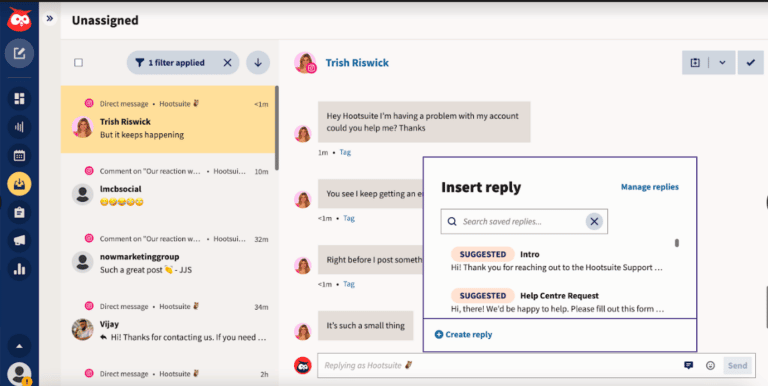

Content Moderation Explained

Content moderation, in the context of websites that facilitate user-generated content, is the systematic process of identifying, reducing,[1] or removing user contributions that are irrelevant, obscene, illegal, harmful, or insulting. A wide range of content can be subject to regulation, from explicit legal prohibitions to the moderation of legal content, with varying implications for human rights. Content moderation is widely presented as the most effective way to safeguard users, protect brand integrity, and support compliance with both national and international regulations. In practice, it means monitoring and managing user-generated content on a platform so that it adheres to community guidelines and legal standards, using a mix of automated tools and human reviewers.
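One common way to combine automated tools with human reviewers is to let an automated score decide the clear cases and send the uncertain ones to a person. The sketch below assumes a hypothetical `keyword_score` function and illustrative thresholds (`approve_below`, `remove_above`); none of these names or values come from the source, and a real deployment would tune them against labeled data.

```python
from typing import Callable

# Hypothetical flagged words, chosen only to make the example runnable.
FLAGGED_WORDS = ("obscene", "illegal", "insult")


def keyword_score(text: str) -> float:
    """Toy risk score in [0, 1]: half a point per flagged word, capped at 1."""
    lowered = text.lower()
    hits = sum(1 for word in FLAGGED_WORDS if word in lowered)
    return min(1.0, hits / 2)


def route(
    text: str,
    score_fn: Callable[[str], float] = keyword_score,
    approve_below: float = 0.3,   # assumed threshold
    remove_above: float = 0.8,    # assumed threshold
) -> str:
    """Return 'approve', 'remove', or 'human_review' based on the score."""
    score = score_fn(text)
    if score >= remove_above:
        return "remove"
    if score < approve_below:
        return "approve"
    # Ambiguous cases are escalated to a human reviewer.
    return "human_review"
```

Widening the gap between the two thresholds sends more content to human reviewers, which raises cost but reduces the number of automated mistakes.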

5 Key Types Of Content Moderation

Understanding content moderation means knowing how it works, which moderation methods are available, and how to balance online safety, compliance, and free expression. To tackle harmful online content while safeguarding fundamental rights and accounting for local context, Indonesian policymakers should adopt a more balanced, transparent, and nuanced approach. Moderated content is expected to comply with community guidelines, legal regulations, and ethical standards. Doing this effectively requires both tools and a team that can identify and moderate sensitive user-generated content across text, image, video, and audio.
