Confusion Matrix For Uncertainty Based Misclassification Detection

A confusion matrix in machine learning is the difference between thinking your model works and knowing it does. Suppose you have just trained a classification model to detect credit card fraud.

Techniques to deal with the off-diagonal elements in confusion matrices are proposed. They are tailored to detect problems of classification bias among classes.

In essence, this paper uses visualisation to help understand how uncertainty in (discrete) confusion matrices manifests in various (continuous) performance metrics, so that practitioners can put classifier performance estimates and comparisons into perspective.

The confusion matrix contains all the information needed to determine the metrics used to evaluate the performance of a classifier. Popular examples are accuracy (ACC), true positive rate (TPR), and true negative rate (TNR).

Leveraging optimal transport theory and the principle of maximum entropy, we propose a unique confusion matrix applicable across single-label, multi-label, and soft-label contexts.
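
As a minimal sketch of how ACC, TPR, and TNR follow from the four cells of a binary confusion matrix, the Python snippet below computes them directly. The function name `binary_metrics` and the counts are hypothetical and chosen only for illustration; they do not come from any of the works quoted here.

```python
import math


def binary_metrics(tp, fn, fp, tn):
    """Compute accuracy, TPR, and TNR from binary confusion matrix counts.

    tp: positives predicted positive   fn: positives predicted negative
    fp: negatives predicted positive   tn: negatives predicted negative
    """
    total = tp + fn + fp + tn
    positives = tp + fn  # all actual positives
    negatives = tn + fp  # all actual negatives
    return {
        # Share of all samples that were classified correctly.
        "acc": (tp + tn) / total if total else math.nan,
        # Share of actual positives that were found (also called recall).
        "tpr": tp / positives if positives else math.nan,
        # Share of actual negatives that were correctly rejected.
        "tnr": tn / negatives if negatives else math.nan,
    }


# Illustrative counts only (assumed, not taken from a real dataset).
metrics = binary_metrics(tp=80, fn=20, fp=10, tn=890)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

With imbalanced classes such as these, accuracy alone can look high even when a noticeable share of positives is missed, which is why TPR and TNR are usually reported alongside it.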

We introduce a general framework for describing counts with classification errors from classifiers, including data from both the classifier and a confusion matrix. The framework incorporates uncertainty in the classification matrix as well as uncertainty in the generating process.

The transport-based confusion matrix (TCM) extends the classic confusion matrix (CM) and is identical to it in the single-label context.

This paper proposes ConfusionVis, a model-agnostic approach for comparatively evaluating and selecting multi-class classifiers based on their confusion matrices.

Producing a confusion matrix and calculating the misclassification rate of a naive Bayes classifier in R involves a few straightforward steps. In this guide, we'll use a sample dataset to demonstrate how to interpret the results.
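
The misclassification rate itself depends only on the confusion matrix, not on the classifier that produced it. The sketch below is written in Python rather than R and does not train a naive Bayes model; it assumes a square matrix with true classes as rows and predicted classes as columns. The function names and the 3-class counts are hypothetical and serve only to illustrate the calculation.

```python
import math


def misclassification_rate(cm):
    """Overall error rate: off-diagonal count divided by total count."""
    total = sum(sum(row) for row in cm)
    if total == 0:
        raise ValueError("confusion matrix contains no samples")
    correct = sum(cm[i][i] for i in range(len(cm)))  # diagonal entries
    return (total - correct) / total


def per_class_error(cm):
    """Error rate per true class (row): misclassified / row total."""
    errors = []
    for i, row in enumerate(cm):
        row_total = sum(row)
        errors.append((row_total - row[i]) / row_total if row_total else math.nan)
    return errors


# Illustrative 3-class matrix (assumed counts): rows = true, columns = predicted.
cm = [
    [50, 2, 3],
    [4, 40, 6],
    [1, 5, 39],
]

overall = misclassification_rate(cm)
by_class = per_class_error(cm)
print(f"overall misclassification rate: {overall:.4f}")
print("per-class error:", [round(e, 4) for e in by_class])
```

The per-class view is what exposes the off-diagonal structure: two classifiers with the same overall rate can distribute their errors very differently across classes.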

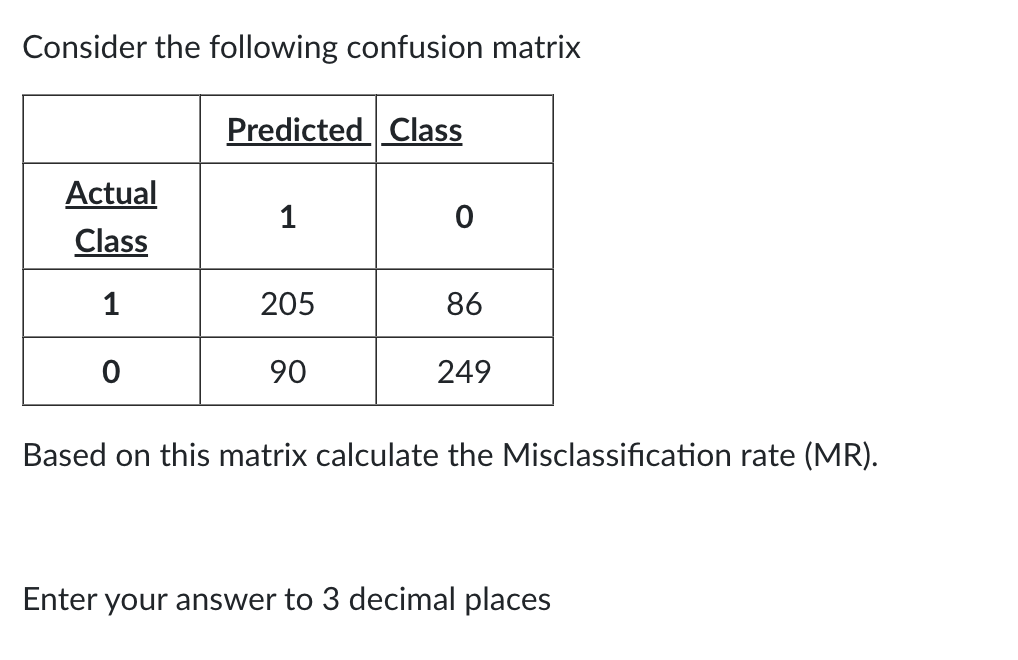
