Claude Code, VS Code, and a Local LLM: The Perfect Dev Setup

Claude Code can be pointed at any local inference server that speaks the same API format, and in 2026 there are several excellent options for doing exactly that. This guide covers everything you need: how to get local models running, which models are best for code generation, and how to configure Claude Code to use them. If you've been curious about running Claude Code entirely offline (no Anthropic API key, no cloud, no billing), this post documents exactly what it takes, including the models that fail.

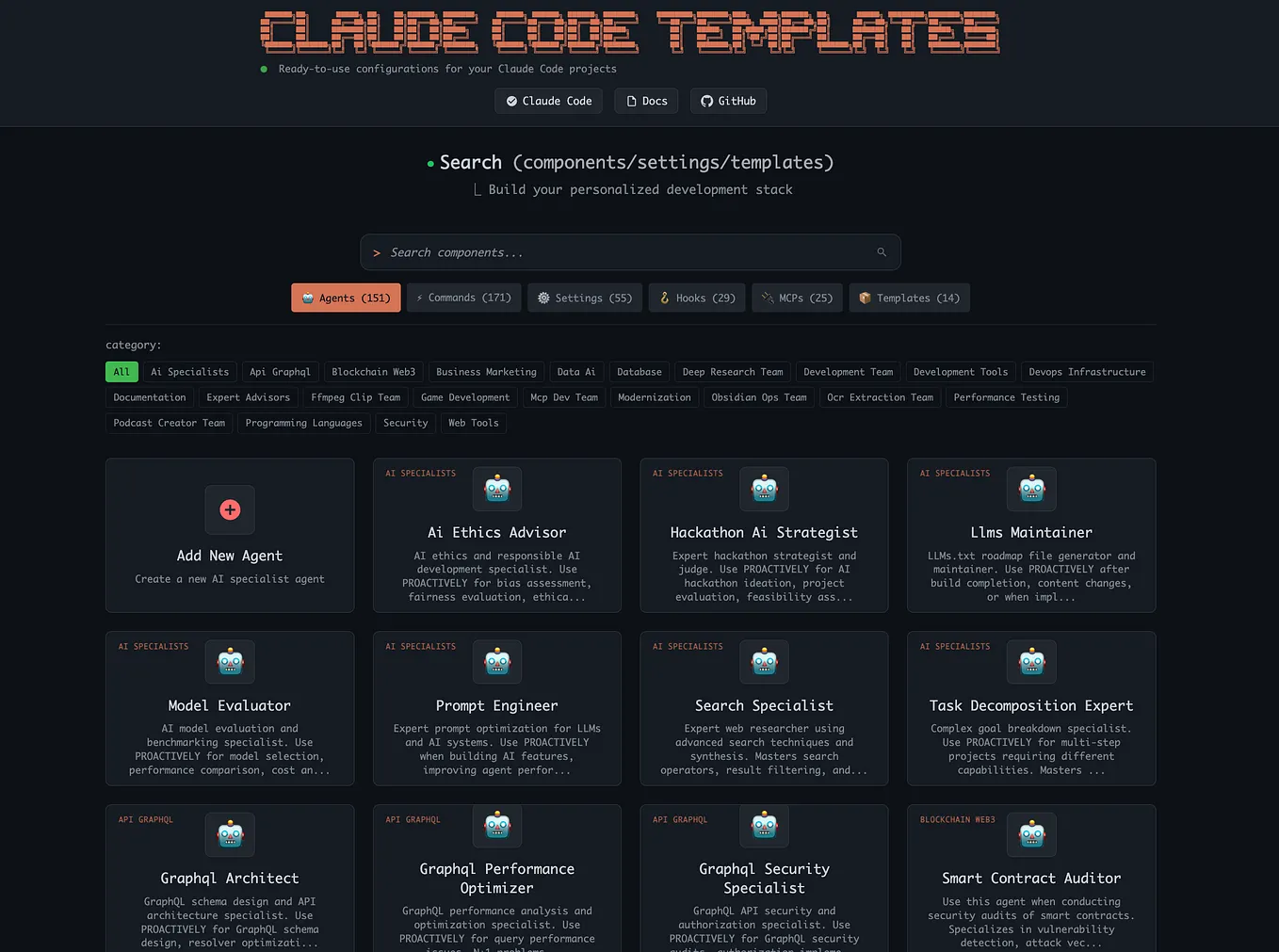

Learn how to set up Claude Code with a local large language model (LLM) using the code llmss project. This guide walks you through installation, configuration, and real-world use cases for developers who want AI-powered coding assistance without relying on cloud-based services. In this video, I show how to integrate Claude Code directly into Visual Studio Code using the official Anthropic plugin, and then take it one step further by connecting a local LLM.

This tutorial explains how to install Claude Code, pull and run local models using Ollama, and configure your environment for a seamless local coding experience. Learn how to set up a local LLM for coding with Ollama, Continue.dev, and VS Code. It covers model selection, hardware planning, and IDE integration.
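Before wiring anything into an editor, it can help to confirm which models the local Ollama server has actually pulled. The sketch below is one way to do that, assuming Ollama is running on its default port (11434) and exposing its `/api/tags` listing endpoint. The function names `parse_model_names` and `list_local_models` are made up for this illustration.

```python
import json
import urllib.request


def parse_model_names(payload):
    """Extract model names from the JSON body of an Ollama /api/tags response."""
    return [model["name"] for model in payload.get("models", [])]


def list_local_models(base_url="http://localhost:11434", timeout=5.0):
    """Ask a running Ollama server which models are available locally.

    Assumes the server is reachable at base_url; raises URLError otherwise.
    """
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
        return parse_model_names(json.load(resp))
```

Calling `list_local_models()` with the server running returns a list of model names. An empty list suggests that no model has been pulled yet.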

To serve local LLMs for use in Claude Code and similar tools, we need to install llama.cpp. We follow the official build instructions to get correct GPU bindings and maximum performance. This is a step-by-step guide to running Qwen3.5 locally with llama.cpp and connecting it to Claude Code via an OpenAI-compatible endpoint, including the critical fix for 90% slower inference caused by KV cache invalidation.

Clauder is a VS Code extension that integrates local LLM models (via Ollama) to act as an intelligent project management interface, orchestrating Claude Code for enterprise-grade development workflows.

I got tired of copy-pasting the same block of exports every time I wanted to use my local setup, so I wrote a short bash script that handles all of it in a single command.
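The original bash script is not reproduced here, so the following is only a rough Python sketch of the same idea: set the environment overrides once, then launch the CLI. It makes several assumptions. It assumes Claude Code reads `ANTHROPIC_BASE_URL`, `ANTHROPIC_AUTH_TOKEN`, and `ANTHROPIC_MODEL`, and that the `claude` executable is on `PATH`. The `LOCAL_LLM_URL` and `LOCAL_LLM_MODEL` variables, the default URL, and the model name are hypothetical placeholders to adjust for your own server.

```python
import os
import shutil
import subprocess
import sys


def build_local_env(base_url, model, token="local-placeholder", base_env=None):
    """Return a copy of the environment with Claude Code pointed at a local server."""
    env = dict(os.environ if base_env is None else base_env)
    env["ANTHROPIC_BASE_URL"] = base_url   # assumed: address of the local inference server
    env["ANTHROPIC_AUTH_TOKEN"] = token    # assumed: dummy value, many local servers ignore it
    env["ANTHROPIC_MODEL"] = model         # assumed: model name as the local server reports it
    return env


def launch_claude(argv):
    """Start the `claude` CLI with the local overrides applied; returns its exit code."""
    # Hypothetical defaults; override them via LOCAL_LLM_URL / LOCAL_LLM_MODEL.
    base_url = os.environ.get("LOCAL_LLM_URL", "http://localhost:8080")
    model = os.environ.get("LOCAL_LLM_MODEL", "local-model")
    claude = shutil.which("claude")
    if claude is None:
        print("claude executable not found on PATH", file=sys.stderr)
        return 1
    return subprocess.call([claude, *argv], env=build_local_env(base_url, model))
```

Saved as a script that ends with `sys.exit(launch_claude(sys.argv[1:]))`, this replaces the repeated exports with a single command. Because the overrides apply only to the child process, the parent shell keeps its regular settings.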

