Calculate Binary Cross Entropy Using Tensorflow 2 Lindevs

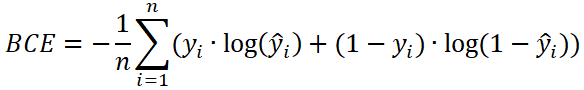

Binary cross entropy (BCE) is a loss function used for binary classification problems, where there are only two classes. BCE measures how far the prediction is from the actual label (0 or 1). In TensorFlow, the `BinaryCrossentropy` class, which inherits from `Loss`, computes the cross entropy loss between true labels and predicted labels; use it for binary (0 or 1) classification applications. Among the inputs the loss function requires is `y_true` (the true label), which is either 0 or 1.
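For reference, the usual textbook form of this loss can be written as follows. The symbols are assumptions introduced here and do not come from the text above: $N$ is the number of examples, $y_i \in \{0, 1\}$ is the true label of example $i$, and $p_i$ is the predicted probability that example $i$ belongs to class 1.

```latex
\mathrm{BCE} = -\frac{1}{N} \sum_{i=1}^{N} \Big[ y_i \log p_i + (1 - y_i) \log (1 - p_i) \Big]
```

Only one of the two terms is active for any given example: when $y_i = 1$ the loss is $-\log p_i$, and when $y_i = 0$ it is $-\log(1 - p_i)$, so a prediction close to the true label contributes a value near zero.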

This post covers binary cross entropy and categorical cross entropy, which are common loss functions for binary (two-class) and multi-class classification problems, respectively. I'll cover implementing and optimizing binary cross entropy in TensorFlow, from basic implementations to more advanced techniques. Binary cross entropy (also known as log loss) is a loss function commonly used for binary classification tasks: it quantifies the difference between the actual class labels (0 or 1) and the predicted probabilities output by the model.
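To make "the difference between the actual class labels and the predicted probabilities" concrete, here is a minimal sketch in plain Python. The function name `binary_cross_entropy`, the `eps` clipping value, and the sample numbers are illustrative assumptions, not part of any library.

```python
import math


def binary_cross_entropy(y_true, y_pred, eps=1e-7):
    """Mean binary cross entropy for labels in {0, 1} and probabilities in [0, 1].

    eps is an assumed clipping constant that keeps log() away from zero.
    """
    total = 0.0
    for t, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        total += -(t * math.log(p) + (1 - t) * math.log(1 - p))
    return total / len(y_true)


# Confident and correct predictions give a small loss ...
loss_good = binary_cross_entropy([1, 0], [0.9, 0.1])
# ... while confident and wrong predictions give a large one.
loss_bad = binary_cross_entropy([1, 0], [0.1, 0.9])

print(loss_good, loss_bad)
```

With these sample values the first loss is about 0.105 and the second about 2.303, which shows how sharply the loss penalizes confident mistakes.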

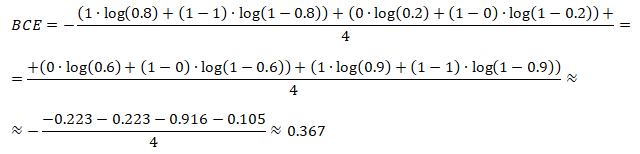

The backend function `binary_crossentropy(target, output, from_logits=False)` computes the binary crossentropy between an output tensor and a target tensor. `target` is a tensor with the same shape as `output`; `output` is a tensor; and `from_logits` states whether `output` is expected to be a logits tensor. By default, `output` is assumed to encode a probability distribution. The function returns a tensor.

The `BinaryCrossentropy` loss computes the cross entropy loss between true labels and predicted labels. Use it when there are only two label classes (assumed to be 0 and 1). For each example, there should be a single floating-point value per prediction.

This article discusses two methods of finding the binary cross entropy loss: the TensorFlow framework and theoretical computation. The loss is commonly used in binary classification tasks where each input sample belongs to one of two classes, and it measures the dissimilarity between the target and the output probabilities or logits.
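The two methods can be compared side by side. The sketch below assumes TensorFlow 2 is installed and uses `tf.keras.losses.BinaryCrossentropy`; the sample labels and probabilities are made up for illustration. It computes the loss from probabilities, from the equivalent logits with `from_logits=True`, and by hand.

```python
import math

import tensorflow as tf

# Illustrative data (not from the article): four examples, one prediction each.
labels = [0.0, 1.0, 1.0, 0.0]
probs = [0.1, 0.8, 0.6, 0.3]

y_true = tf.constant([[t] for t in labels])
y_prob = tf.constant([[p] for p in probs])

# Method 1a: TensorFlow, with predictions given as probabilities (the default).
bce = tf.keras.losses.BinaryCrossentropy(from_logits=False)
loss_tf = float(bce(y_true, y_prob).numpy())

# Method 1b: the same predictions expressed as logits, log(p / (1 - p)).
y_logit = tf.constant([[math.log(p / (1.0 - p))] for p in probs])
bce_logits = tf.keras.losses.BinaryCrossentropy(from_logits=True)
loss_tf_logits = float(bce_logits(y_true, y_logit).numpy())

# Method 2: theoretical computation, averaging the per-example log loss.
loss_manual = -sum(
    t * math.log(p) + (1.0 - t) * math.log(1.0 - p)
    for t, p in zip(labels, probs)
) / len(labels)

print(loss_tf, loss_tf_logits, loss_manual)
```

All three values should agree to within floating-point tolerance (about 0.299 for this data). Passing raw logits with `from_logits=True` is generally the numerically safer choice when the model's last layer has no sigmoid activation.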


