Building Data Pipelines In Python Peerdh

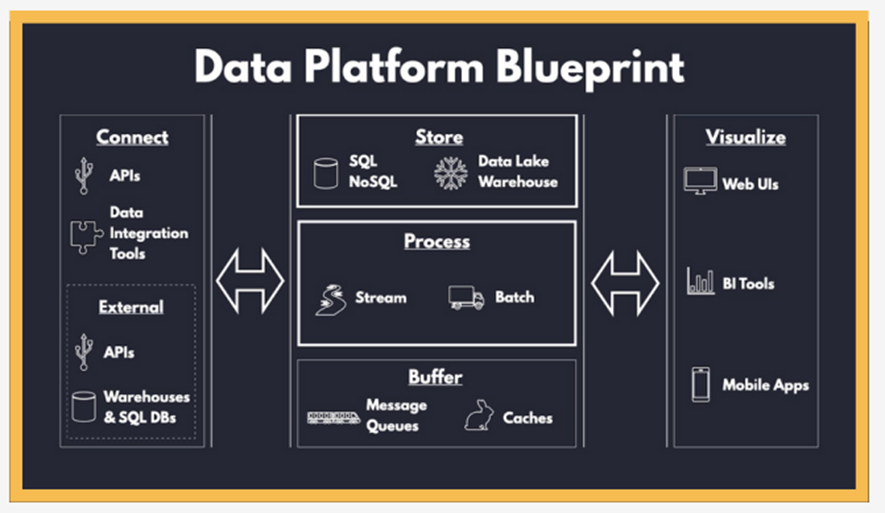

While C is a powerful language for building data pipelines, Python has gained immense popularity due to its simplicity and rich ecosystem of libraries. This article will guide you through the process of building data pipelines in Python, focusing on key components, tools, and best practices. To build a real-time data pipeline, you need to consider several components: data ingestion, processing, storage, and visualization. Each of these components plays a vital role in ensuring that data flows smoothly from source to destination.
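The four components named above can be sketched as small, composable functions. This is a minimal illustration rather than a production design: the function names, the `events` table, and the `source`/`value` fields are all assumptions made for the example, and the "visualization" stage is reduced to a summary query that a dashboard could read.

```python
import json
import sqlite3


def ingest(raw_lines):
    """Ingestion: parse raw JSON lines into dicts, skipping malformed records."""
    for line in raw_lines:
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # a real pipeline would log or quarantine this record
        if isinstance(record, dict):
            yield record


def process(events):
    """Processing: keep events with a numeric 'value' and normalize 'source'."""
    for event in events:
        value = event.get("value")
        if isinstance(value, (int, float)) and not isinstance(value, bool):
            yield {
                "source": str(event.get("source", "unknown")).lower(),
                "value": float(value),
            }


def store(rows, conn):
    """Storage: append processed rows to a SQLite table (hypothetical schema)."""
    conn.execute("CREATE TABLE IF NOT EXISTS events (source TEXT, value REAL)")
    conn.executemany("INSERT INTO events VALUES (:source, :value)", rows)
    conn.commit()


def summarize(conn):
    """Visualization stand-in: per-source totals that a dashboard could plot."""
    query = "SELECT source, SUM(value) FROM events GROUP BY source"
    return dict(conn.execute(query))
```

Because each stage consumes and produces plain iterables, the stages chain directly, for example `store(process(ingest(lines)), conn)`, and any one of them can be swapped out without touching the others.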

Python is a popular choice for building these pipelines due to its simplicity and powerful libraries. This article covers how to create an efficient data pipeline using Python, focusing on the steps involved and providing code examples to illustrate the process. Building real-time data pipelines with Python for dashboard integration is not just a technical task; it's a way to bring your data to life. With the right tools and a bit of creativity, you can create dashboards that provide valuable insights at a glance. Apache Kafka, when combined with Python, allows developers to build efficient and scalable data pipelines, and this article will guide you through the process of setting up a real-time data pipeline using Apache Kafka and Python.
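As a rough sketch of the Kafka side, the code below separates the stream logic from the broker connection so the logic can be exercised without Kafka. It assumes the third-party `kafka-python` package; the topic name `sensor-readings`, the broker address, and the `sensor`/`reading` fields are hypothetical and only serve the illustration.

```python
import json


def decode_events(messages):
    """Yield JSON payloads from Kafka-style messages (objects with a bytes .value)."""
    for message in messages:
        try:
            yield json.loads(message.value.decode("utf-8"))
        except (UnicodeDecodeError, json.JSONDecodeError):
            continue  # skip payloads that are not valid UTF-8 JSON


def running_totals(events, key="sensor", field="reading"):
    """Yield a snapshot of per-key totals after each valid event."""
    totals = {}
    for event in events:
        if not isinstance(event, dict) or key not in event or field not in event:
            continue
        totals[event[key]] = totals.get(event[key], 0) + event[field]
        yield dict(totals)


def main():
    # Imported here so the functions above stay usable without Kafka installed.
    from kafka import KafkaConsumer  # third-party package: kafka-python

    consumer = KafkaConsumer(
        "sensor-readings",                   # hypothetical topic name
        bootstrap_servers="localhost:9092",  # assumed local broker
        auto_offset_reset="earliest",
    )
    # Each snapshot could be pushed to a dashboard instead of printed.
    for snapshot in running_totals(decode_events(consumer)):
        print(snapshot)
```

Calling `main()` requires a reachable broker. Keeping `decode_events` and `running_totals` independent of the consumer means they can be tested with any iterable of objects that expose a `.value` attribute.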

Building an ETL pipeline in Python is straightforward: you extract data from various sources, transform it to meet your needs, and load it into your desired destination. An end-to-end data science pipeline goes further, covering data handling, preprocessing, modeling, evaluation, and deployment, along with best practices and troubleshooting tips to ensure a robust implementation.

In scikit-learn, `class sklearn.pipeline.Pipeline(steps, *, transform_input=None, memory=None, verbose=False)` is a sequence of data transformers with an optional final predictor. `Pipeline` allows you to sequentially apply a list of transformers to preprocess the data and, if desired, conclude the sequence with a final predictor for predictive modeling. Intermediate steps of the pipeline must be transformers, that is, they must implement `fit` and `transform` methods.

Apache Airflow® is easy to use: anyone with Python knowledge can deploy a workflow. Airflow does not limit the scope of your pipelines; you can use it to build ML models, transfer data, manage your infrastructure, and more.
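The extract, transform, and load steps described above might look like the following sketch, which uses only the standard library. The CSV columns `customer` and `amount`, the JSON Lines output format, and the function names are assumptions chosen for the example, not a prescribed layout.

```python
import csv
import json


def extract(csv_path):
    """Extract: stream rows from a CSV file as dicts keyed by the header row."""
    with open(csv_path, newline="", encoding="utf-8") as handle:
        yield from csv.DictReader(handle)


def transform(rows):
    """Transform: drop rows without a numeric amount and tidy customer names."""
    for row in rows:
        try:
            amount = float(row["amount"])
        except (KeyError, TypeError, ValueError):
            continue  # missing or non-numeric amount
        yield {
            "customer": (row.get("customer") or "").strip().title(),
            "amount": round(amount, 2),
        }


def load(records, out_path):
    """Load: write one JSON object per line and return the number written."""
    count = 0
    with open(out_path, "w", encoding="utf-8") as handle:
        for record in records:
            handle.write(json.dumps(record) + "\n")
            count += 1
    return count


def run_etl(csv_path, out_path):
    """Chain the three stages; rows are processed lazily, one at a time."""
    return load(transform(extract(csv_path)), out_path)
```

Since the stages are generators, the file is never held in memory as a whole, which keeps the same structure usable for larger inputs. Swapping `load` for a database writer would not require changes to `extract` or `transform`.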


