Build Visual AI Agents With Vision Language Models

NVIDIA NIM microservices offer flexible customization, streamlined API integration, and smooth deployment for building visual AI agents tailored to specific business needs, using core types of vision models: VLMs, embedding models, and computer vision (CV) models. NVIDIA VIA microservices, an extension of NVIDIA Metropolis microservices, are cloud-native building blocks that accelerate the development of visual AI agents powered by VLMs and NIM, whether deployed at the edge or in the cloud.
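As a rough illustration of what "streamlined API integration" can look like, the sketch below builds a chat-completions request body that carries one inline image alongside a text prompt. It assumes the VLM endpoint accepts the widely used OpenAI-style message format with an `image_url` part; the model name `example/vlm-model` and the helper `build_vlm_request` are hypothetical, and nothing here is sent over the network.

```python
import base64
import json


def build_vlm_request(image_bytes: bytes, prompt: str, model: str,
                      mime_type: str = "image/png",
                      max_tokens: int = 256) -> dict:
    """Build an OpenAI-style chat-completions payload with one inline image.

    Assumption: the target endpoint accepts images as base64 data URLs
    inside an "image_url" content part. Check the docs of the service
    you actually deploy before relying on this shape.
    """
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,  # hypothetical model identifier
        "max_tokens": max_tokens,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {
                        "type": "image_url",
                        "image_url": {
                            "url": f"data:{mime_type};base64,{encoded}"
                        },
                    },
                ],
            }
        ],
    }


# Example: placeholder bytes stand in for a real image file.
payload = build_vlm_request(b"abc", "What is in this image?",
                            model="example/vlm-model")
print(json.dumps(payload, indent=2))
```

Keeping payload construction separate from the HTTP call makes it easy to point the same request at an edge or a cloud deployment by changing only the URL and credentials.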

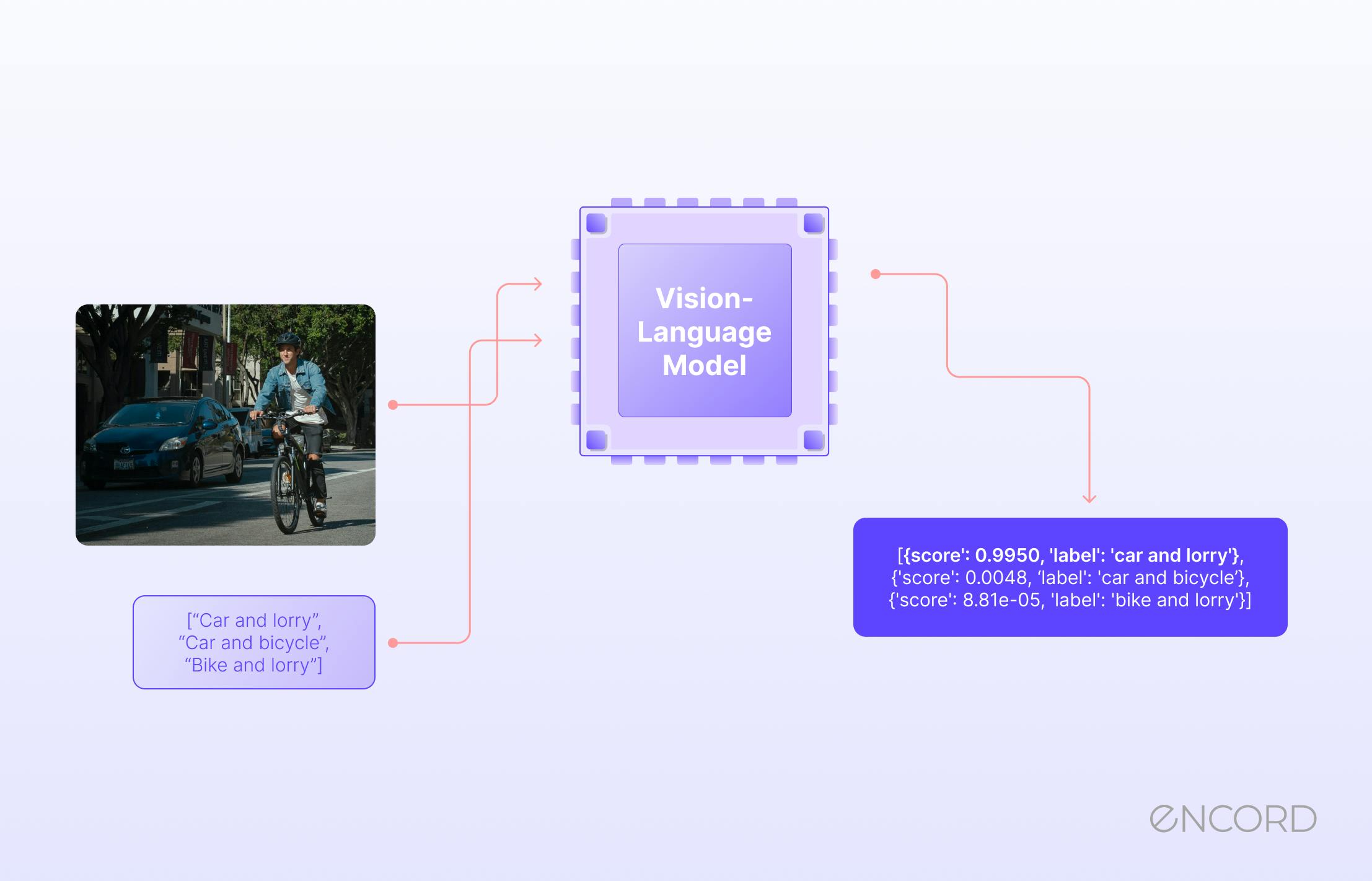

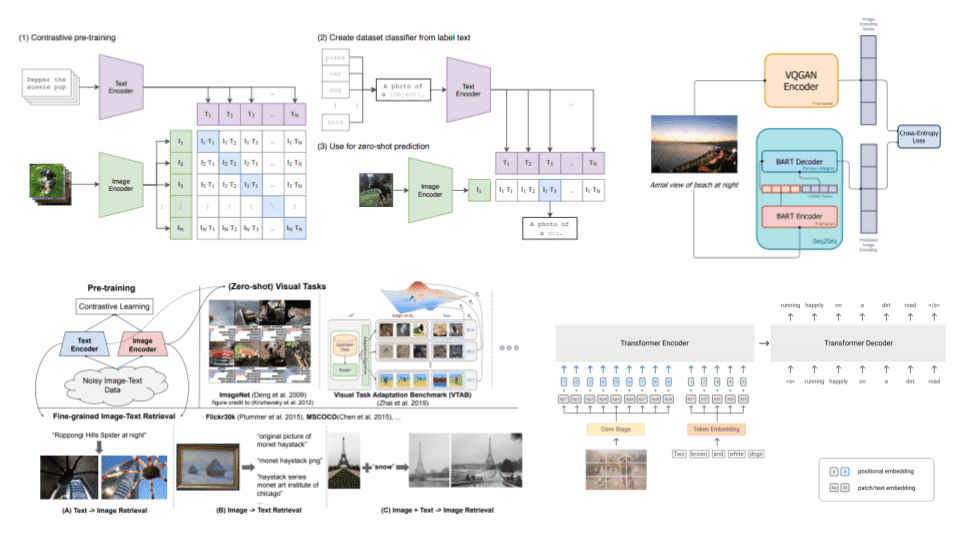

One paper introduces VisionGPT, which consolidates and automates the integration of state-of-the-art foundation models to facilitate vision-language understanding and the development of vision-oriented AI. Another introduces VAGEN, a reinforcement learning framework that trains vision language model (VLM) agents to build internal world models through explicit visual state reasoning. A related article discusses the development of multimodal visual AI agents using NVIDIA NIM microservices, highlighting the importance of VLMs in processing and analyzing diverse visual data. An accompanying diagram illustrates the workflow of integrating the NVIDIA Isaac Sim robot simulation environment with a vision language model running on the Jetson AGX Orin.

One post shows how to build an AI agent that combines VLMs and NIM microservices with a summarization microservice, in order to process large volumes of video and produce curated summaries.

Visual language action models (VLAMs) are AI systems that integrate visual perception, natural language understanding, and action planning, enabling agents to interpret their environment, follow language instructions, and perform the corresponding actions.

smolagents provides built-in support for vision language models (VLMs), enabling agents to process and interpret images. In its example, Alfred, the butler at Wayne Manor, is tasked with verifying the identities of the guests attending the party.

Another post explores what vision AI agents are, how foundation models like Gemini 3 Pro power them, why they represent a generational shift in computer vision, and how you can start building your own using Roboflow Workflows.
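To make the video summarization idea mentioned above more concrete, here is a minimal sketch of one common pattern: split a long video into time windows, caption each window with a VLM, then merge the captions into a single summary. This is an assumption about how such a pipeline might be organized, not a description of how the summarization microservice works internally. The functions `split_into_chunks` and `summarize_video` are hypothetical, and the captioning and aggregation steps are passed in as plain callables so that any model can be plugged in.

```python
from typing import Callable, List, Tuple


def split_into_chunks(duration_s: float, chunk_s: float,
                      overlap_s: float = 0.0) -> List[Tuple[float, float]]:
    """Return (start, end) windows in seconds that cover the whole video."""
    if chunk_s <= 0:
        raise ValueError("chunk_s must be positive")
    if not 0 <= overlap_s < chunk_s:
        raise ValueError("overlap_s must satisfy 0 <= overlap_s < chunk_s")
    chunks: List[Tuple[float, float]] = []
    start = 0.0
    step = chunk_s - overlap_s
    while start < duration_s:
        end = min(start + chunk_s, duration_s)
        chunks.append((start, end))
        if end >= duration_s:
            break  # the last window already reaches the end of the video
        start += step
    return chunks


def summarize_video(duration_s: float, chunk_s: float,
                    caption_chunk: Callable[[float, float], str],
                    aggregate: Callable[[List[str]], str],
                    overlap_s: float = 0.0) -> str:
    """Caption each window, then merge the captions into one summary."""
    windows = split_into_chunks(duration_s, chunk_s, overlap_s)
    captions = [caption_chunk(start, end) for start, end in windows]
    return aggregate(captions)


# Stub callables stand in for a real VLM call and a real LLM summarizer.
def fake_caption(start: float, end: float) -> str:
    return f"[{start:.0f}-{end:.0f}s] placeholder caption"


print(summarize_video(25, 10, fake_caption, " | ".join))
```

In a real agent, `caption_chunk` would sample frames from the window and send them to a VLM, and `aggregate` would typically be a language model call that condenses the per-window captions.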


