Bug Issue 3069 Microsoft Deepspeed Github

[Bug] #3069: closed. DeepSpeed is a deep learning optimization library that makes distributed training and inference easy, efficient, and effective.

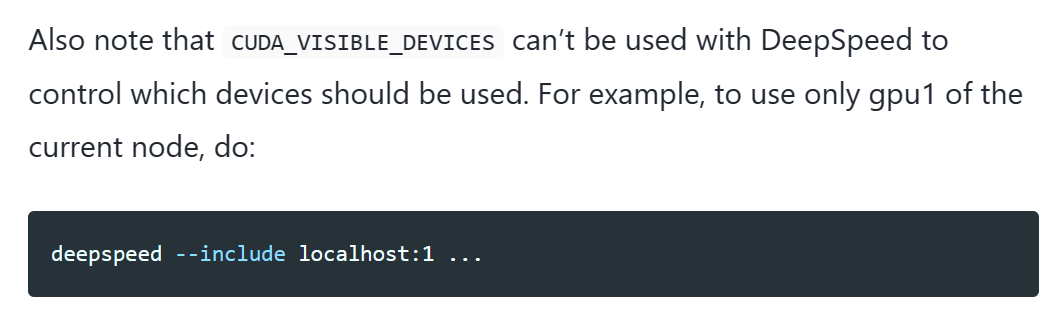

Try To Employ The Deepspeed Zero2 Issue 108 Microsoft Lmops Github

DeepSpeed was an important part of Microsoft's AI at Scale initiative to enable next-generation AI capabilities at scale.

Replies in the thread: "What is the error that you're seeing? Trying to specify which CUDA devices to use?" and "This looks to be a duplicate of #3070, answered there as well."

DeepSpeed is an easy-to-use deep learning optimization software suite that enables unprecedented scale and speed for DL training and inference. Visit deepspeed.ai or the GitHub repo for more. The GitHub Discussions forum for deepspeedai/DeepSpeed is the place to discuss code, ask questions, and collaborate with the developer community.

Request Issue 3134 Microsoft Deepspeed Github

GitHub issue stats for microsoft/DeepSpeed (last synced 1 day ago): total issues: 1,624; total pull requests: 1,233; average time to close issues: 5 months; average time to close pull requests: about 2 months; total issue authors: 962; total pull request authors: 250; average comments per issue: 3.78; average comments per pull request: 1.95.

The issues in the DeepSpeed GitHub repository are a good place to start when debugging. Users can browse the existing issues or open a new one if they have found a bug or error.

"Currently, I am trying to fine-tune the Korean Llama model (13B) on a private dataset using DeepSpeed, Flash Attention 2, and the TRL SFTTrainer. I am using 2 × A100 80 GB GPUs for the fine-tuning; however, I have not been able to run it. I need everyone's help. I started an issue, but so far I haven't found out how to fix it."
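The post about the 13B model does not say what the failure was, so the following is only one thing worth checking, not a diagnosis. It is a back-of-the-envelope sketch of per-GPU memory for model states under full fine-tuning with mixed-precision Adam, using the common accounting of 2 bytes per parameter for fp16 weights, 2 for fp16 gradients, and 12 for fp32 optimizer states. The function name `model_state_gb`, the exact parameter count of 13 billion, and the use of 1 GB = 1e9 bytes are assumptions made for illustration; activations, buffers, and fragmentation are ignored.

```python
def model_state_gb(params_billion: float, num_gpus: int, zero_stage: int) -> float:
    """Estimate per-GPU memory (GB) for model states with mixed-precision Adam.

    Hypothetical helper for illustration. With parameters counted in billions
    and 1 GB = 1e9 bytes, bytes-per-parameter times params_billion gives GB.
    """
    weights = 2.0 * params_billion   # fp16 weights
    grads = 2.0 * params_billion     # fp16 gradients
    optim = 12.0 * params_billion    # fp32 master weights + Adam momentum + variance

    # Each ZeRO stage partitions one more component across the data-parallel GPUs.
    if zero_stage >= 1:
        optim /= num_gpus
    if zero_stage >= 2:
        grads /= num_gpus
    if zero_stage >= 3:
        weights /= num_gpus
    return weights + grads + optim


if __name__ == "__main__":
    # Assumed setup from the post: 13B parameters, 2 GPUs with 80 GB each.
    for stage in range(4):
        print(f"ZeRO stage {stage}: {model_state_gb(13, 2, stage):.0f} GB per GPU")
```

Under these assumptions, every stage comes out above 80 GB per GPU on two devices (about 117 GB for ZeRO stage 2), which suggests that full fine-tuning at this size would need something extra, such as offloading optimizer states to CPU or a parameter-efficient method. Whether that applies to the setup in the post cannot be confirmed from the text.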
