Benichn Benichn Github

SWE-bench is a benchmark for evaluating large language models on real-world software issues collected from GitHub. Given a codebase and an issue, a language model is tasked with generating a patch that resolves the described problem. Multi-SWE-bench is a benchmark for evaluating the issue-resolving capabilities of LLMs across multiple programming languages. The dataset consists of 1,632 issue-resolving tasks spanning 7 programming languages: Java, TypeScript, JavaScript, Go, Rust, C, and C++.
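To make the task setup concrete, here is a minimal sketch of how per-benchmark and per-language resolve rates could be tallied once each generated patch has been judged. The `TaskInstance` record, its field names, and both helper functions are hypothetical and chosen for illustration only; they are not the data schema or API of SWE-bench or Multi-SWE-bench.

```python
from dataclasses import dataclass


@dataclass
class TaskInstance:
    """Hypothetical record for one issue-resolving task (field names are illustrative)."""
    instance_id: str
    language: str
    issue_text: str


def resolve_rate(instances, resolved_ids):
    """Fraction of task instances whose generated patch was judged to resolve the issue."""
    if not instances:
        return 0.0
    resolved = sum(1 for inst in instances if inst.instance_id in resolved_ids)
    return resolved / len(instances)


def resolve_rate_by_language(instances, resolved_ids):
    """Per-language resolve rate, as one might report for a multi-language benchmark."""
    by_lang = {}
    for inst in instances:
        by_lang.setdefault(inst.language, []).append(inst)
    return {lang: resolve_rate(group, resolved_ids) for lang, group in by_lang.items()}
```

How a patch is judged as resolving an issue is left outside this sketch; `resolved_ids` is assumed to be the set of instance identifiers that passed whatever check the benchmark applies.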

The SWE-bench quick start guide covers getting started, from installation to running a first evaluation; setup begins with installing SWE-bench and its dependencies. SWE-bench is introduced as an evaluation framework consisting of 2,294 software engineering problems drawn from real GitHub issues and corresponding pull requests across 12 popular Python repositories. SWE-bench-Live is a live benchmark for issue resolving, designed to evaluate an AI system's ability to complete real-world software engineering tasks.

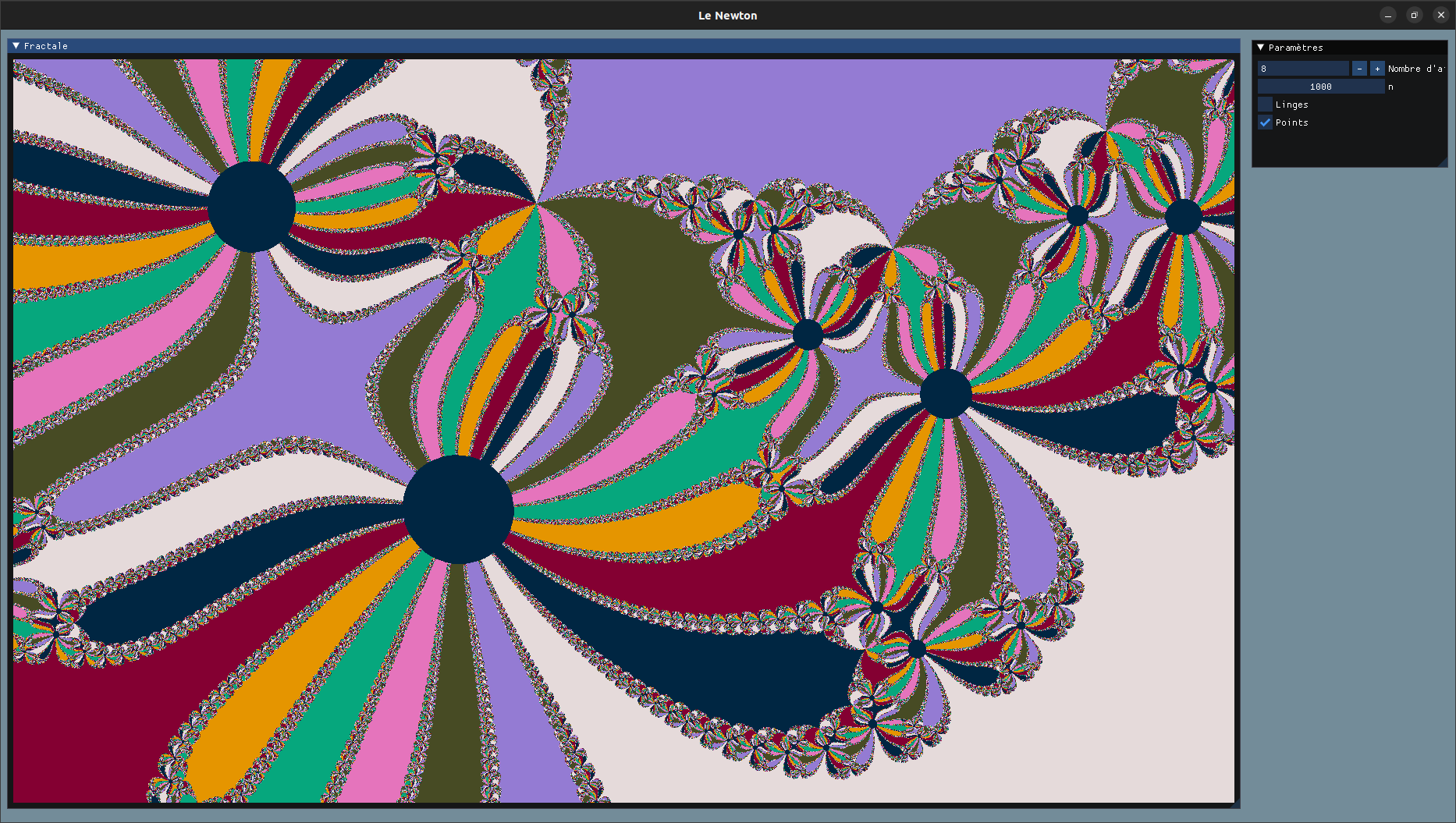

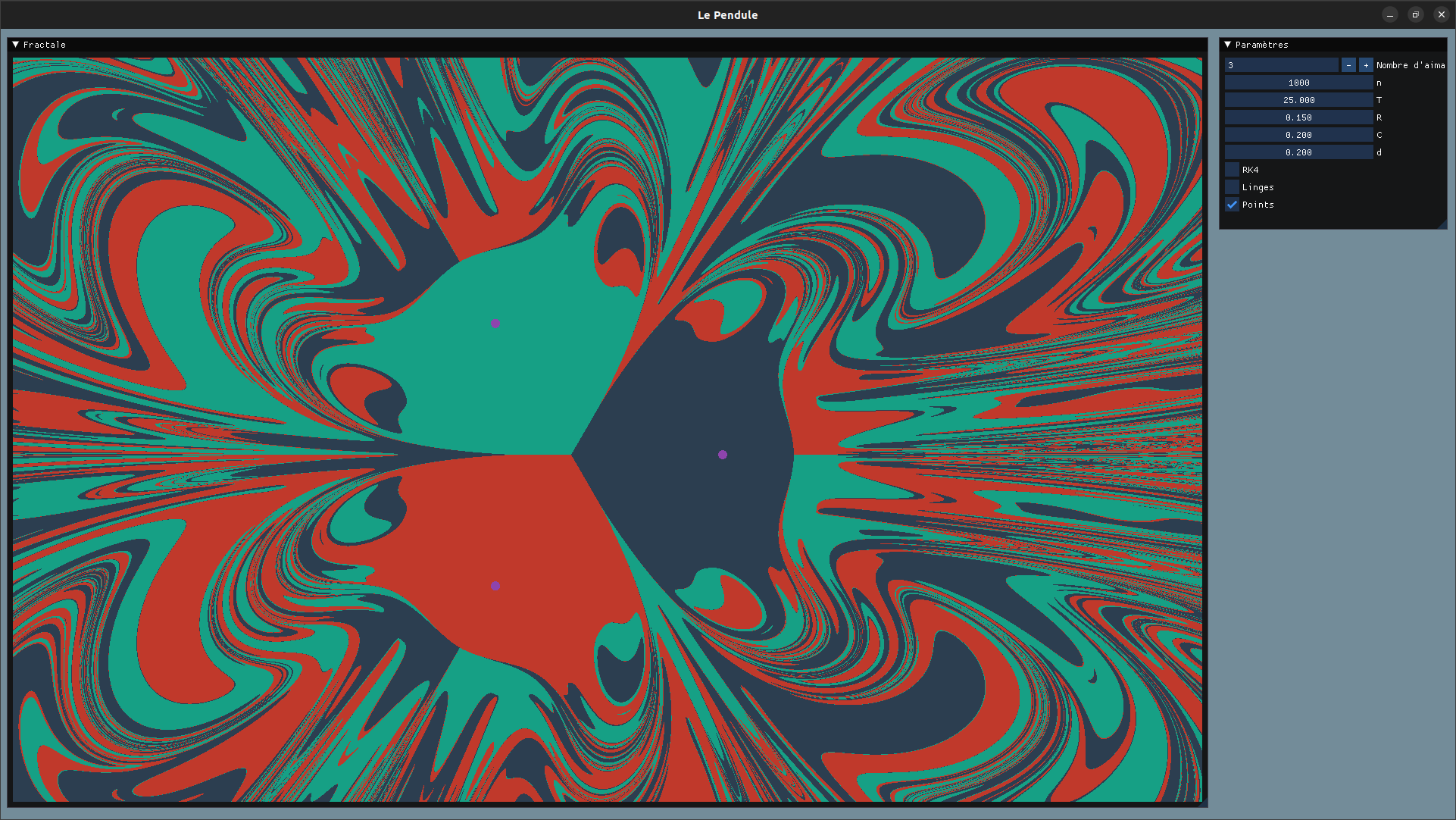

The Beyond the Imitation Game Benchmark (BIG-bench) is a collaborative benchmark intended to probe large language models and extrapolate their future capabilities. SWE-bench evaluates AI's ability to resolve genuine software engineering issues sourced from 12 popular Python GitHub repositories, reflecting realistic coding and debugging scenarios. The MLE-bench repository hosts the code for the paper "MLE-bench: Evaluating Machine Learning Agents on Machine Learning Engineering"; its authors have released the code used to construct the dataset, the evaluation logic, and the agents evaluated for the benchmark. LiveBench is designed to limit potential contamination by releasing new questions monthly, as well as having questions based on recently released datasets, arXiv papers, news articles, and IMDb movie synopses.
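One way to picture why regularly released questions help with contamination: questions published after a model's training cutoff cannot have appeared in its training data. The sketch below assumes each question carries a release date; the `released` key, the `questions_after_cutoff` function, and the sample records are hypothetical and are not LiveBench's actual data format or tooling.

```python
from datetime import date


def questions_after_cutoff(questions, training_cutoff):
    """Keep only questions released strictly after the model's training cutoff.

    `questions` is assumed to be a list of dicts with a `released` date field
    (an illustrative schema, not a real benchmark format).
    """
    return [q for q in questions if q["released"] > training_cutoff]


# Hypothetical sample data for illustration.
sample = [
    {"id": "q1", "released": date(2024, 1, 15)},
    {"id": "q2", "released": date(2024, 6, 1)},
    {"id": "q3", "released": date(2024, 9, 30)},
]

fresh = questions_after_cutoff(sample, date(2024, 5, 1))
print([q["id"] for q in fresh])
```

The strict comparison is a conservative choice: a question released on the cutoff date itself is treated as possibly seen.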

