Bayesian Maximum A Posteriori (MAP) Estimation: Extending Maximum Likelihood Estimation

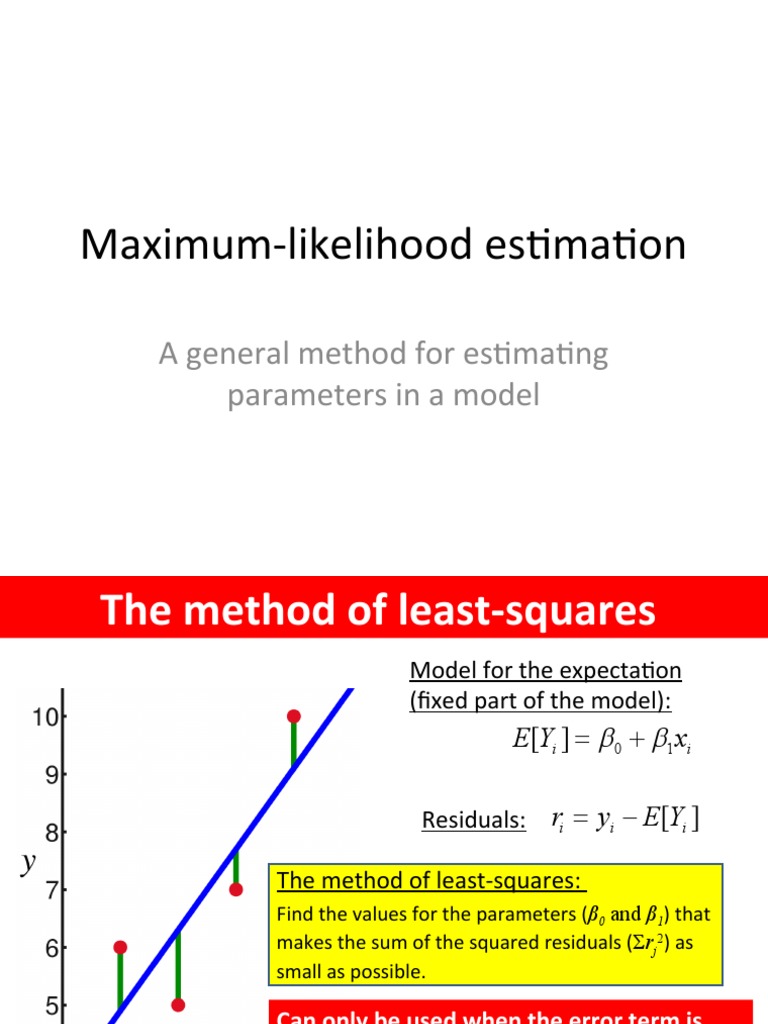

Maximum a posteriori (MAP) estimation is a fundamental statistical method used in Bayesian inference. It estimates an unknown parameter while incorporating prior knowledge about that parameter. It is closely related to maximum likelihood (ML) estimation, but it uses an augmented optimization objective that incorporates a prior density over the quantity to be estimated. MAP estimation can therefore be viewed as a regularization of maximum likelihood estimation.
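To make the relationship concrete, the two objectives can be written side by side. The notation ($\theta$ for the unknown parameter, $D$ for the observed data) is assumed here for illustration and does not come from the original text:

```latex
\begin{align}
\hat{\theta}_{\mathrm{MLE}} &= \arg\max_{\theta} \; \log p(D \mid \theta) \\
\hat{\theta}_{\mathrm{MAP}} &= \arg\max_{\theta} \; \bigl[ \log p(D \mid \theta) + \log p(\theta) \bigr]
\end{align}
```

The extra term $\log p(\theta)$ plays the role of a penalty on the likelihood objective. With a flat prior the term is constant in $\theta$, and the MAP estimate coincides with the MLE.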

In Bayesian statistics, a maximum a posteriori probability (MAP) estimate is an estimate of an unknown quantity that equals the mode of the posterior distribution. It can be used to obtain a point estimate of an unobserved quantity on the basis of empirical data. MAP estimation is based on Bayes' theorem, which you can think of as a way to update your beliefs when you get new information: posterior ∝ likelihood × prior. MAP finds the parameter values that maximize this posterior probability, whereas MLE maximizes the likelihood alone.

A closely related technique is the Laplace estimate:

• It applies to categorical data (i.e., multinomial, Bernoulli/binomial).
• It is also known as additive smoothing.
• Imagine one additional observation of each outcome (this follows from Laplace's "law of succession").

Example: for a coin experiment with 100 flips (58 heads, 42 tails), the Laplace estimate is (58 + 1)/(100 + 2) ≈ 0.578 for heads and (42 + 1)/(100 + 2) ≈ 0.422 for tails.
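Additive smoothing on the coin counts can be sketched in a few lines of Python. The function name `additive_smoothing` and the pseudo-count parameter `k` are hypothetical names chosen for this illustration; this is a minimal sketch, not a reference implementation:

```python
def additive_smoothing(counts, k=1.0):
    """Smoothed probabilities: (count + k) / (total + k * number_of_outcomes).

    `k` is the imagined number of extra observations per outcome
    (k = 1 gives the Laplace estimate). Name and default are assumptions.
    """
    total = sum(counts.values())
    num_outcomes = len(counts)
    return {
        outcome: (count + k) / (total + k * num_outcomes)
        for outcome, count in counts.items()
    }


# Coin experiment from the text: 100 flips, 58 heads, 42 tails.
counts = {"heads": 58, "tails": 42}

# Plain relative frequencies (the maximum likelihood estimate).
total_flips = sum(counts.values())
mle = {outcome: count / total_flips for outcome, count in counts.items()}

# Laplace estimate: one imagined extra observation of each outcome.
laplace = additive_smoothing(counts, k=1)

print("MLE:    ", mle)
print("Laplace:", laplace)
```

With 100 observations the smoothed estimate barely moves away from the raw frequencies; the effect is much larger when only a handful of flips are available.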

In Bayesian inference, the prior distribution of a parameter and the likelihood of the observed data are combined to obtain the posterior distribution of the parameter. MAP estimation is thus a Bayesian extension of the maximum likelihood estimate (MLE) that includes prior information in the estimate. This is particularly valuable when working with sparse or expensive data, such as in seismic inversion applications. An unfair coin is a convenient running example for demonstrating MLE, MAP, and Bayesian inference.
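For the coin example, the effect of the prior can be sketched with a Beta prior on the heads probability. The choice of a Beta(2, 2) prior and the function names `bernoulli_mle` and `bernoulli_map` are assumptions made for this illustration. Because the Beta prior is conjugate to the Bernoulli likelihood, the posterior is again a Beta distribution and its mode has a closed form:

```python
def bernoulli_mle(heads, flips):
    """Maximum likelihood estimate of P(heads): the observed frequency."""
    return heads / flips


def bernoulli_map(heads, flips, alpha=2.0, beta=2.0):
    """Posterior mode of P(heads) under an assumed Beta(alpha, beta) prior.

    The posterior is Beta(alpha + heads, beta + tails); its mode is
    (a - 1) / (a + b - 2). This sketch requires alpha >= 1 and beta >= 1.
    """
    if alpha < 1 or beta < 1:
        raise ValueError("this sketch assumes alpha >= 1 and beta >= 1")
    a_post = alpha + heads
    b_post = beta + (flips - heads)
    denominator = a_post + b_post - 2
    if denominator <= 0:
        raise ValueError("posterior mode is undefined without data or prior mass")
    return (a_post - 1) / denominator


# Sparse data (3 flips) versus plentiful data (100 flips).
for heads, flips in [(3, 3), (58, 100)]:
    mle = bernoulli_mle(heads, flips)
    map_estimate = bernoulli_map(heads, flips)
    print(f"{heads}/{flips} heads: MLE = {mle:.3f}, MAP = {map_estimate:.3f}")
```

With three heads in three flips, the MLE is 1.0 while the MAP estimate under Beta(2, 2) is 0.8, so the prior pulls the estimate away from the extreme. With 58 heads in 100 flips the two estimates are close (0.58 versus about 0.578), and with a flat Beta(1, 1) prior the MAP estimate equals the MLE.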

