Automated LLM Evaluation Benchmarks

To make this benchmark automatic, the user is simulated by an LLM, which makes the evaluation costly to run and prone to errors. Despite these limitations, it is widely used, notably because it reflects real use cases well.

LLM benchmarks are standardized tests for evaluating LLMs. This guide covers 30 benchmarks, from MMLU to Chatbot Arena, with links to datasets and leaderboards.

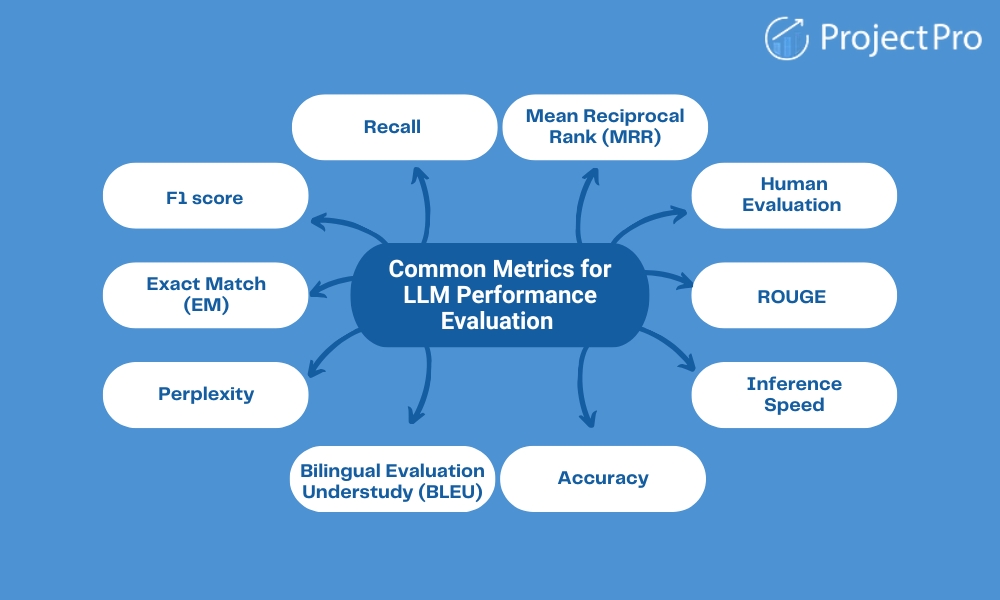

LLM Evaluation Benchmarks Every AI Engineer Should Know

Arena-Hard-Auto is an automatic evaluation benchmark for instruction-tuned LLMs consisting of 500 challenging real-world prompts curated by BenchBuilder. It includes open-ended software engineering problems, mathematical questions, and creative writing tasks.

Explore top LLM evaluation tools like Deepchecks, LangSmith, and Humanloop to advance AI performance and reliability.

The definitive LLM leaderboard ranks the best AI models, including Claude, GPT, Gemini, DeepSeek, Llama, and more, across coding, reasoning, math, agentic, and chat benchmarks. Compare LLM rankings, tier lists, and pricing.

Complete guide to LLM evaluation metrics, benchmarks, and best practices. Learn about BLEU, ROUGE, GLUE, SuperGLUE, and other evaluation frameworks.
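Overlap metrics such as BLEU and ROUGE compare a model output against a reference text by counting shared n-grams. The snippet below is a minimal sketch of a ROUGE-1-style unigram F1 score, assuming plain whitespace tokenization and lowercasing. The function name `unigram_f1` is a hypothetical helper for illustration, and the official ROUGE and BLEU implementations add stemming, brevity penalties, and other details that are omitted here.

```python
from collections import Counter


def unigram_f1(reference: str, candidate: str) -> float:
    """ROUGE-1-style F1: harmonic mean of unigram precision and recall.

    Simplified illustration: whitespace tokenization, lowercasing, no stemming.
    """
    ref_counts = Counter(reference.lower().split())
    cand_counts = Counter(candidate.lower().split())

    # Clipped overlap: each token counts at most as often as it appears in both texts.
    overlap = sum((ref_counts & cand_counts).values())
    if overlap == 0:
        return 0.0

    precision = overlap / sum(cand_counts.values())
    recall = overlap / sum(ref_counts.values())
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    print(unigram_f1("the cat sat on the mat", "the cat"))
```

A score like this rewards surface overlap only, which is one reason open-ended tasks such as creative writing are often scored with LLM judges or human raters instead.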

Understand LLM evaluation with our comprehensive guide. Learn how to define benchmarks and metrics, and measure progress for optimizing your LLM performance.

In this paper, we propose a straightforward, replicable, and accurate automated evaluation method by leveraging a lightweight LLM as the judge, named RocketEval.

Learn the fundamentals of large language model (LLM) evaluation, including key metrics and frameworks used to measure model performance, safety, and reliability. Explore practical evaluation techniques, such as automated tools, LLM judges, and human assessments tailored for domain-specific use cases.

Explore the top LLM evaluation benchmarks, what they measure, and how each works to assess AI language model performance accurately.
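To make the LLM-as-judge idea concrete, here is a minimal sketch of a pairwise comparison harness. It is not RocketEval's method or any specific benchmark's protocol. It assumes a judge is any callable that takes a prompt and two answers and returns "A", "B", or "tie". The names `pairwise_win_rate` and `length_judge` are hypothetical, and `length_judge` is a toy stand-in where a real setup would call a judge model. Each pair is judged twice with the answer order swapped, a common way to reduce position bias.

```python
from typing import Callable, List, Tuple

# Assumed judge interface: (prompt, answer_a, answer_b) -> "A", "B", or "tie".
Judge = Callable[[str, str, str], str]


def pairwise_win_rate(examples: List[Tuple[str, str, str]], judge: Judge) -> float:
    """Win rate of the model against a baseline, with ties counted as half a win.

    Each example is (prompt, model_answer, baseline_answer). Every pair is
    judged in both orders so a judge that favors one slot gains nothing.
    """
    if not examples:
        return 0.0

    score = 0.0
    for prompt, model_answer, baseline_answer in examples:
        first = judge(prompt, model_answer, baseline_answer)   # model in slot A
        second = judge(prompt, baseline_answer, model_answer)  # model in slot B
        for verdict, model_slot in ((first, "A"), (second, "B")):
            if verdict == model_slot:
                score += 1.0
            elif verdict == "tie":
                score += 0.5
    return score / (2 * len(examples))


def length_judge(prompt: str, answer_a: str, answer_b: str) -> str:
    """Toy judge for illustration only: prefers the longer answer."""
    if len(answer_a) > len(answer_b):
        return "A"
    if len(answer_b) > len(answer_a):
        return "B"
    return "tie"


if __name__ == "__main__":
    data = [("q1", "long answer", "short"), ("q2", "x", "yy"), ("q3", "ab", "cd")]
    print(pairwise_win_rate(data, length_judge))
```

With the order swap, a judge that always answers "A" yields a win rate of exactly 0.5, so pure position preference cannot inflate the score.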



