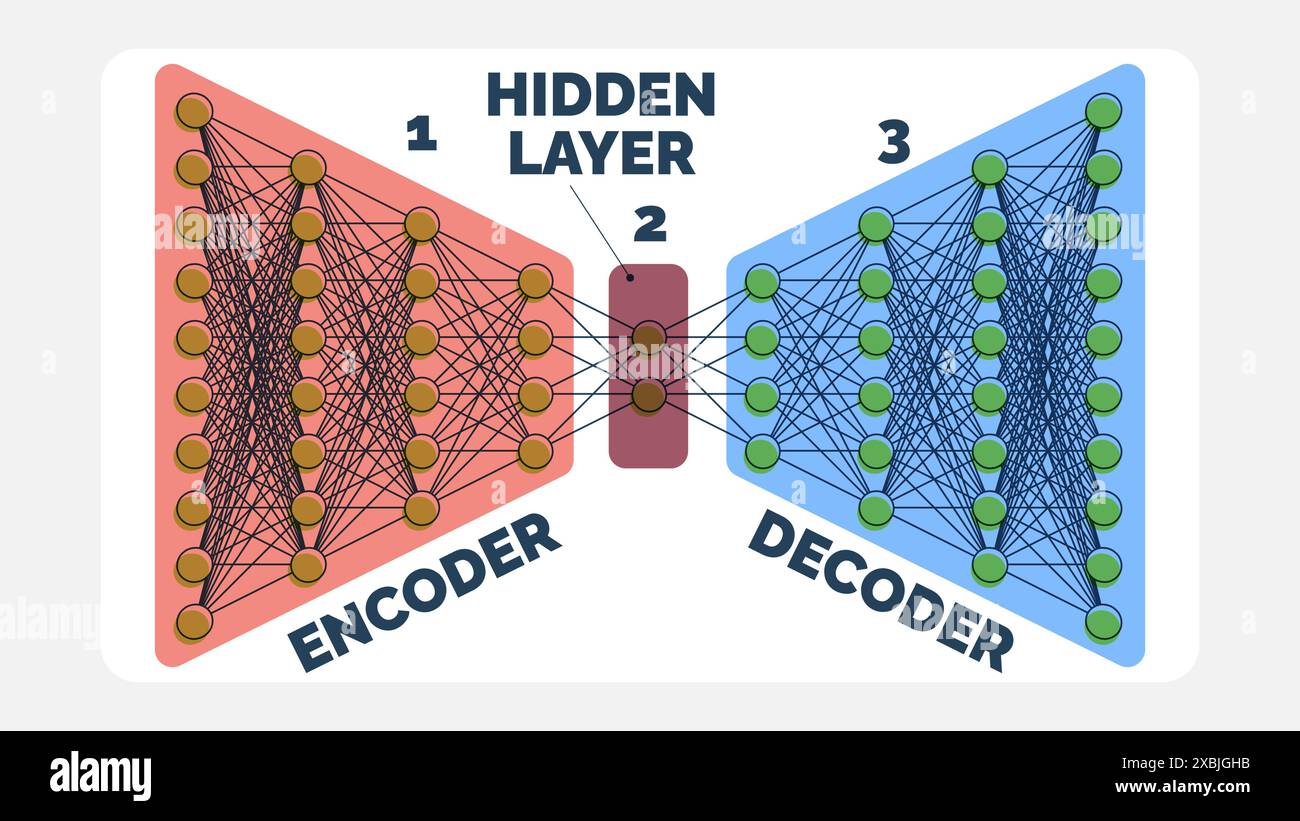

Autoencoder Neural Network Data Encoding Hidden Layer And Decoding

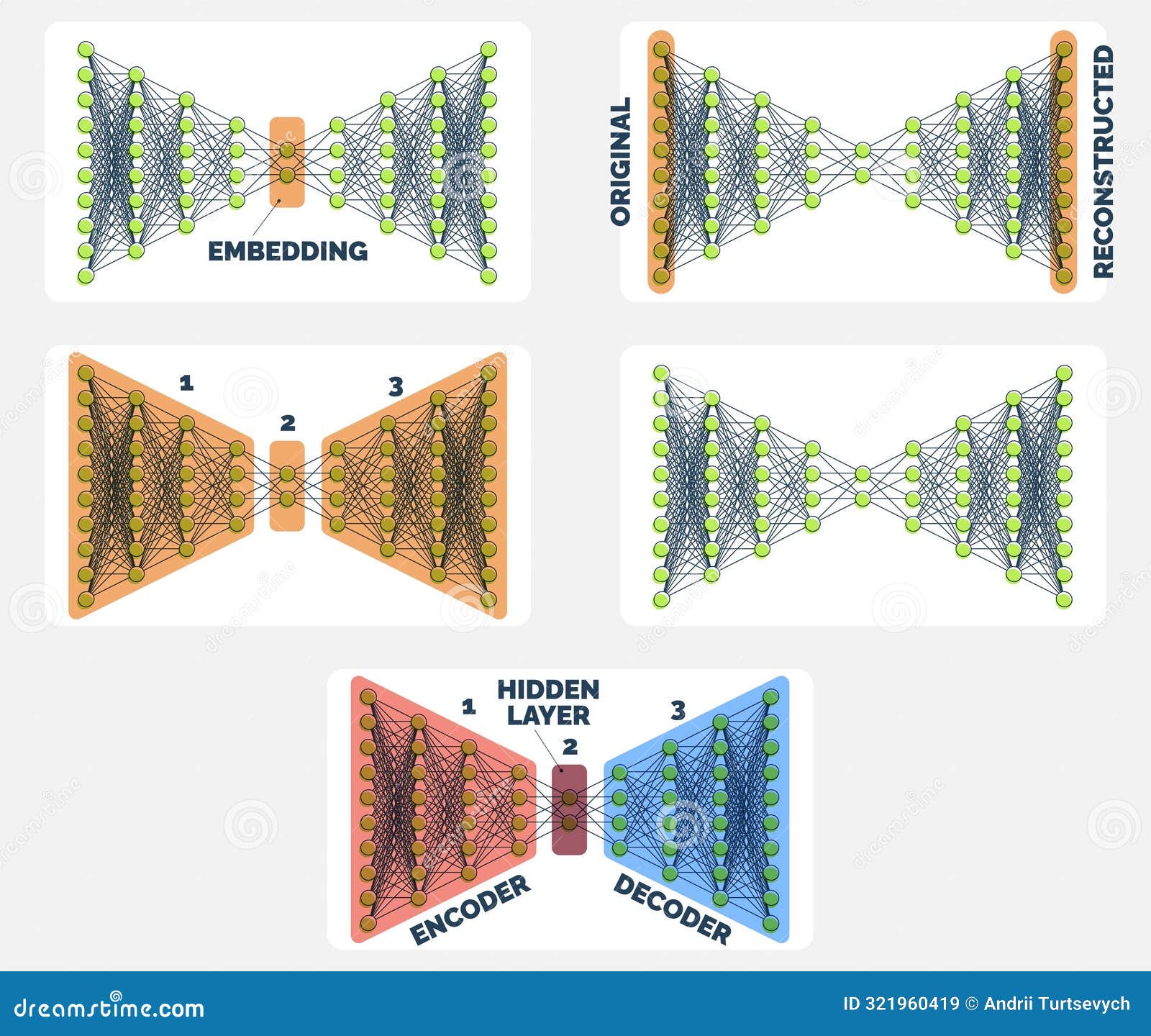

Autoencoders are neural networks that compress input data into a smaller representation and then reconstruct it, which pushes the model to learn the most important patterns in the data. An autoencoder has two main parts: an encoder that maps the input to a code, and a decoder that reconstructs the input from the code. It is a type of artificial neural network used to learn efficient codings of unlabeled data (unsupervised learning).
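The encoder/decoder split can be sketched in a few lines of NumPy. This is a minimal sketch, not a reference implementation: it assumes a purely linear encoder and decoder, synthetic data, and hand-written gradient descent on the mean squared reconstruction error. The function name `train_linear_autoencoder`, the layer sizes, and the learning rate are illustrative choices that do not come from the text.

```python
import numpy as np


def train_linear_autoencoder(x, code_size, steps=1000, lr=0.02, seed=0):
    """Fit a linear encoder and decoder by gradient descent on the MSE."""
    rng = np.random.default_rng(seed)
    n_features = x.shape[1]
    # Small random initial weights (an assumption of this sketch).
    w_enc = rng.normal(scale=0.1, size=(n_features, code_size))
    w_dec = rng.normal(scale=0.1, size=(code_size, n_features))
    losses = []
    for _ in range(steps):
        code = x @ w_enc        # encoder: input -> code
        x_hat = code @ w_dec    # decoder: code -> reconstruction
        err = x_hat - x
        losses.append(float(np.mean(err ** 2)))
        # Gradient of the mean squared error with respect to x_hat.
        grad_out = 2.0 * err / err.size
        grad_dec = code.T @ grad_out
        grad_enc = x.T @ (grad_out @ w_dec.T)
        w_dec -= lr * grad_dec
        w_enc -= lr * grad_enc
    return w_enc, w_dec, losses


# Synthetic data: 8 observed features generated from 2 hidden factors,
# so a 2-dimensional code is enough to describe it.
rng = np.random.default_rng(0)
latent = rng.normal(size=(200, 2))
mix = rng.normal(size=(2, 8))
x = latent @ mix

w_enc, w_dec, losses = train_linear_autoencoder(x, code_size=2)
print(f"reconstruction loss: {losses[0]:.4f} -> {losses[-1]:.4f}")
```

No labels appear anywhere in the training loop: the input itself is the target, which is what makes the method unsupervised.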

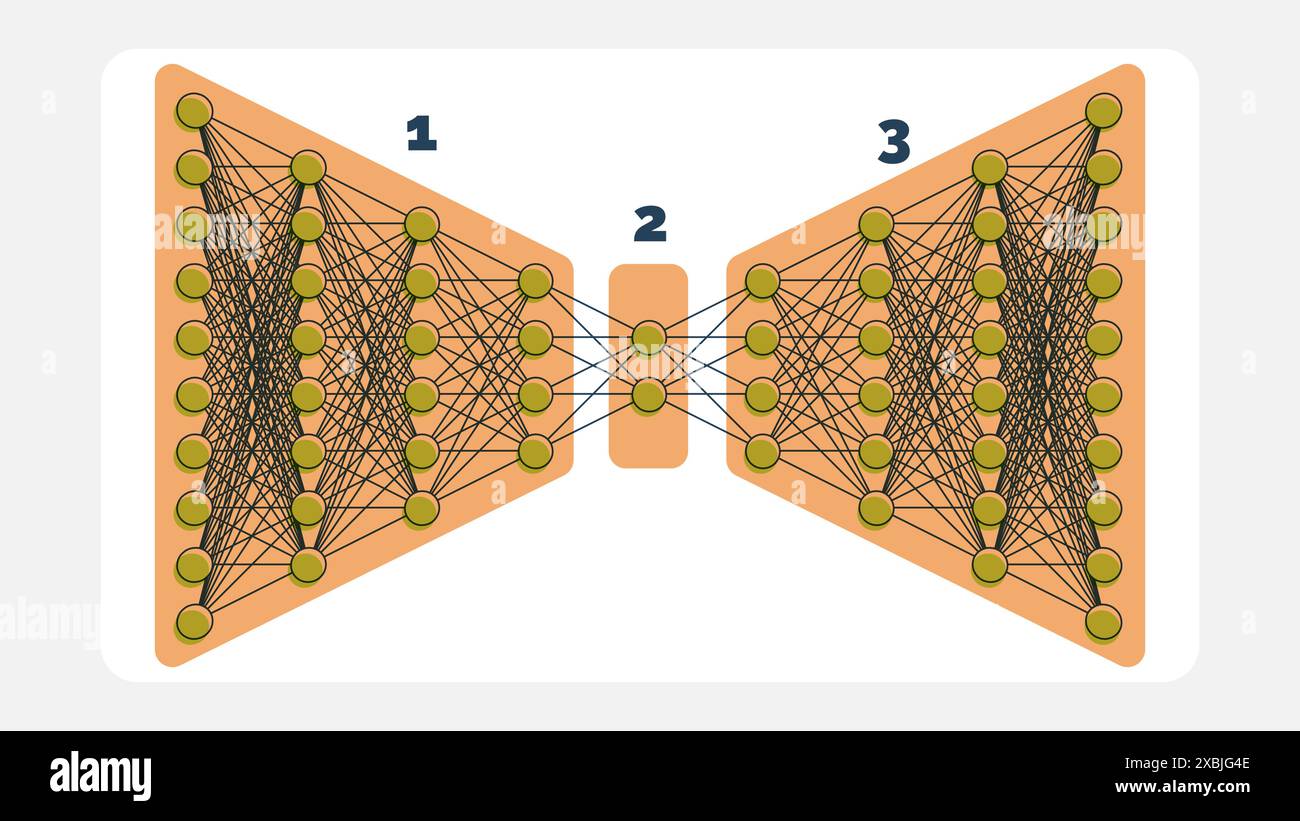

Autoencoders have become a fundamental technique in deep learning (DL), enhancing representation learning across domains such as image processing and anomaly detection. At a high level, they are a type of artificial neural network used primarily for unsupervised learning: their main goal is to learn a compressed, or “encoded,” representation of the input data. More specifically, they are feedforward networks that need no labels. Their main applications are to capture the key aspects of the provided data in a compressed version of the input, to generate realistic synthetic data, or to flag anomalies.

Autoencoders are foundational tools in modern deep learning. In this article, we break down the essential concepts behind autoencoders, explore different types, and walk through an implementation using a denoising autoencoder on the STL-10 dataset.
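What makes an autoencoder "denoising" is how the training pairs are built: the network receives a corrupted input and is trained to reproduce the clean original. The snippet below sketches only that data-preparation step. It assumes additive Gaussian noise and pixel values scaled to [0, 1]. The helper name `make_denoising_pairs` and the noise level are hypothetical, and the batch is random data with STL-10's image shape (96x96 RGB), not the real dataset.

```python
import numpy as np


def make_denoising_pairs(clean, noise_std=0.1, seed=0):
    """Return (noisy_input, clean_target) for denoising-autoencoder training."""
    rng = np.random.default_rng(seed)
    noisy = clean + rng.normal(scale=noise_std, size=clean.shape)
    # Keep corrupted pixels inside the valid [0, 1] range.
    noisy = np.clip(noisy, 0.0, 1.0)
    return noisy, clean


# Stand-in batch: 4 random "images" shaped like STL-10 samples.
batch = np.random.default_rng(1).random((4, 96, 96, 3))
noisy, target = make_denoising_pairs(batch)
print(noisy.shape, target.shape)
```

During training, the reconstruction loss would compare the decoder's output for `noisy` against `target`, so the network cannot succeed by simply copying its input.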

Deep autoencoders, which stack multiple encoding and decoding layers, are better able to capture hierarchical features of the data. This added depth allows the models to handle tasks that require detailed feature extraction.

An autoencoder is a special type of neural network that is trained to copy its input to its output. For example, given an image of a handwritten digit, an autoencoder first encodes the image into a lower-dimensional latent representation, then decodes the latent representation back to an image.

Deep Learning with Python by François Chollet (Manning Publications, 2021) offers clear explanations of how neural networks, including autoencoders, function, with a focus on practical implementation and the role of hidden layers in learning compressed representations.

As shown in Fig. 2, a complete autoencoder (AE) consists of three layers: an input layer, a hidden layer, and an output layer. The numbers of neurons in these layers are n, t, and n, respectively, so the output has the same dimensionality as the input.
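The three-layer structure with n, t, and n neurons can be written out as follows. The symbols for the weights, biases, and activation functions ($W_1$, $W_2$, $b_1$, $b_2$, $f$, $g$) are notation assumed here for illustration, and the squared-error loss is one common choice rather than something the text specifies.

```latex
\begin{aligned}
h &= f(W_1 x + b_1), & W_1 &\in \mathbb{R}^{t \times n},\; x \in \mathbb{R}^{n},\; h \in \mathbb{R}^{t} \\
\hat{x} &= g(W_2 h + b_2), & W_2 &\in \mathbb{R}^{n \times t},\; \hat{x} \in \mathbb{R}^{n} \\
\mathcal{L}(x, \hat{x}) &= \lVert x - \hat{x} \rVert_2^2
\end{aligned}
```

When $t < n$, the hidden layer is a bottleneck, so the network has to compress the input in order to reconstruct it.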
