Audio Generation With Diffusion Models Pdf Data Compression

Audio generation requires an understanding of multiple aspects, such as the temporal dimension, long-term structure, multiple layers of overlapping sounds, and the nuances that only trained listeners can detect. In this work, we investigate the potential of diffusion models for audio generation.
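As a rough illustration of the kind of process a diffusion model is built around, the sketch below applies the standard forward (noising) step, x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * noise, to a toy waveform. It is not taken from any of the works quoted on this page: the linear schedule, the 1000 steps, the 440 Hz test tone, and the helper names `linear_beta_schedule`, `cumulative_alphas`, and `add_noise` are all assumptions chosen for illustration.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Linearly spaced noise variances beta_1..beta_T (an assumed schedule)."""
    if num_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """alpha_bar_t = product over s <= t of (1 - beta_s)."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(waveform, t, alpha_bars, rng):
    """Sample x_t from q(x_t | x_0) for a 1-D waveform at step index t."""
    signal_scale = math.sqrt(alpha_bars[t])
    noise_scale = math.sqrt(1.0 - alpha_bars[t])
    noise = [rng.gauss(0.0, 1.0) for _ in waveform]
    noisy = [signal_scale * x + noise_scale * e for x, e in zip(waveform, noise)]
    return noisy, noise


# Toy input (hypothetical): 0.1 s of a 440 Hz sine sampled at 16 kHz.
sample_rate = 16000
waveform = [math.sin(2 * math.pi * 440 * n / sample_rate) for n in range(1600)]

alpha_bars = cumulative_alphas(linear_beta_schedule(1000))
rng = random.Random(0)
slightly_noisy, _ = add_noise(waveform, 10, alpha_bars, rng)   # early step: mostly signal
mostly_noise, _ = add_noise(waveform, 999, alpha_bars, rng)    # final step: mostly noise
```

A generative model would then be trained to predict `noise` from the noisy signal and the step index, and sampling would run the process in reverse. Latent diffusion approaches apply the same idea to a compressed representation of the audio rather than to raw samples.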

Text To Audio Generation With Latent Diffusion Models

We propose an audio generation model based on existing pre-trained text-to-audio (TTA) models, which accepts not only text as a condition but also incorporates other control conditions to achieve finer-grained and more precise control over audio generation.

…icit control over the sound effects generated, as specified by users. In this paper, we propose a new TTA task, namely customized text-to-audio generation (CTTA), where the audio content produc…

In this work, we make an initial attempt at understanding the inner workings of audio latent diffusion models by investigating how their audio outputs compare with the training data, similar to how a doctor auscultates a patient by listening to the sounds of their organs.

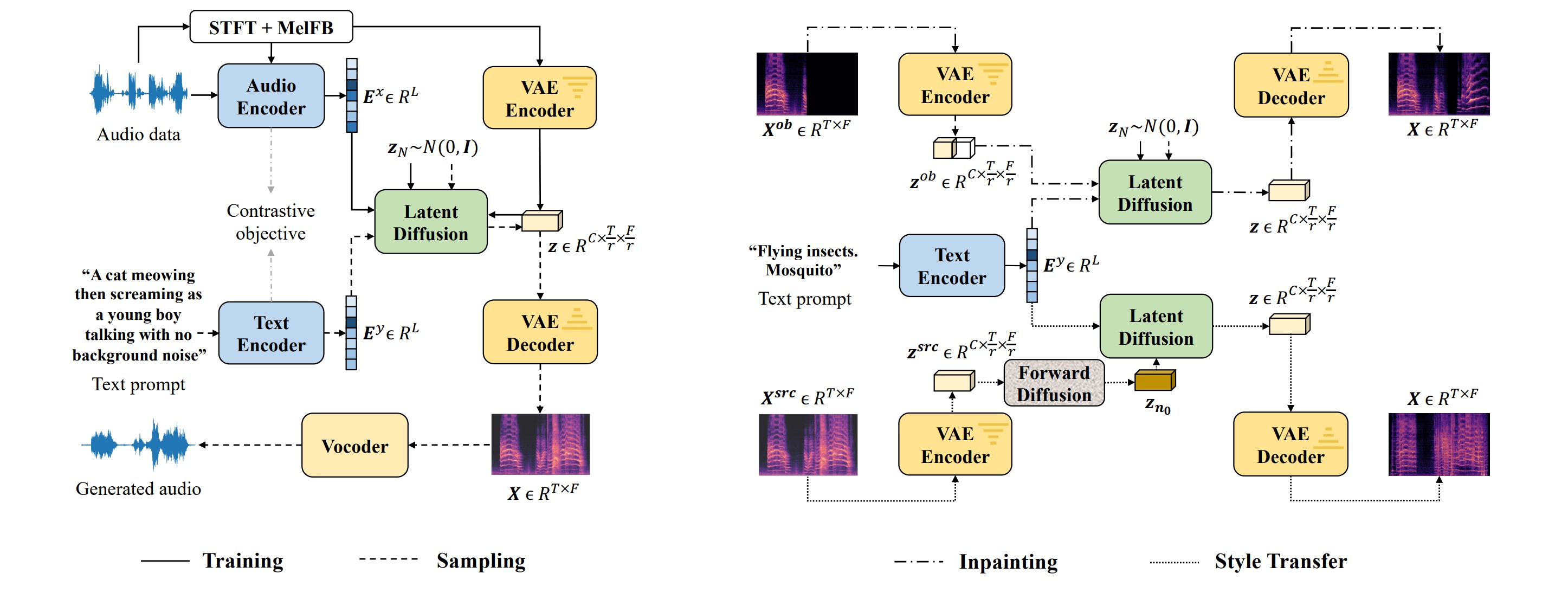

Github Carlosholivan Audiogenerationdiffusion State Of The Art Of

We have presented a new method, AudioLDM, for text-to-audio (TTA) generation, with contrastive language-audio pretraining (CLAP) models and latent diffusion models (LDMs).

To address this issue, we propose a novel model that enhances the controllability of existing pre-trained text-to-audio models by incorporating additional conditions, including content (timestamp) and style (pitch contour and energy contour), as supplements to the text.

In this work, we propose an advanced system that integrates the autoregressive language model with the diffusion model, achieving flexible and refined audio generation.

Audio generation with diffusion models: this repository is maintained by Carlos Hernández Oliván ([email protected]) and presents the state of the art of audio generation with diffusion models.
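To make the "energy contour" style condition mentioned above more concrete, here is a minimal sketch that computes a frame-wise RMS energy contour from a waveform. This is only one common way to define such a contour and is not claimed to be the feature extraction used by the quoted work: the function name `energy_contour`, the 400-sample frame, the 160-sample hop, and the toy signal are assumptions for illustration.

```python
import math


def energy_contour(waveform, frame_length=400, hop_length=160):
    """Frame-wise RMS energy of a 1-D waveform (one value per full frame)."""
    contour = []
    last_start = max(len(waveform) - frame_length, 0)
    for start in range(0, last_start + 1, hop_length):
        frame = waveform[start:start + frame_length]
        if not frame:  # empty input: nothing to measure
            break
        contour.append(math.sqrt(sum(x * x for x in frame) / len(frame)))
    return contour


# Toy input (hypothetical): 50 ms of silence followed by 50 ms of a
# 440 Hz sine with amplitude 0.5, sampled at 16 kHz.
sample_rate = 16000
silence = [0.0] * 800
tone = [0.5 * math.sin(2 * math.pi * 440 * n / sample_rate) for n in range(800)]
contour = energy_contour(silence + tone)
```

The resulting list starts at zero for the silent frames and rises toward the RMS of the tone. A sequence like this, aligned to the audio frames, is the sort of signal that could be supplied alongside the text prompt as an additional condition.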
