Apache Spark PicklingError: Could Not Serialize Object (TypeError)

SPARK-41125: Simple Call to createDataFrame Fails with PicklingError

I want to do sentiment analysis using Kafka and Spark. The plan is to read streaming data from Kafka, batch it with Spark, and then analyze each batch with a function, sentimentpredict(), that I built with TensorFlow. This is what I have so far. The job fails with: PicklingError: Could not serialize object: TypeError: cannot pickle '_thread.RLock' object. To resolve these errors, first understand what serialization means in Spark.

PySpark Serializers and Their Types: Marshal and Pickle (DataFlair)

I thought it was due to the Spark 2.4 to 3 changes, probably some breaking changes in the pandas UDF API, but after switching to the newer template the same behavior still occurs. When I run it on a Spark cluster I get the error pickle.PicklingError: Could not serialize object: TypeError: can't pickle thread.lock objects. How can I solve it? It is not really possible to serialize fastText's code, because part of it is native (in C++). A possible solution is to save the model to disk, then, for each Spark partition, load the model from disk and apply it to the data. This walkthrough dives into a complex problem encountered when integrating OOP constructs with PySpark, uncovering serialization issues and providing pragmatic solutions.
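The "load the model once per partition" idea can be sketched in plain Python as follows. This is a minimal illustration, not the original poster's code: `Model`, `load_model`, the keyword rule inside `predict`, and the path `/tmp/model.bin` are all hypothetical stand-ins for a real native-backed model such as fastText. The point is that only the path string travels with the function, while the unpicklable model object is created on the worker.

```python
import threading


class Model:
    """Hypothetical stand-in for a native-backed model that cannot be pickled."""

    def __init__(self, path):
        self.path = path
        self._lock = threading.Lock()  # this attribute makes instances unpicklable

    def predict(self, text):
        # Toy rule used only for illustration.
        with self._lock:
            return "positive" if "good" in text.lower() else "negative"


def load_model(path):
    # Hypothetical loader; a real one would read the saved model file from disk.
    return Model(path)


def predict_partition(rows, model_path="/tmp/model.bin"):
    """Process one partition: load the model once, then score every row."""
    model = load_model(model_path)  # runs on the worker, never serialized
    for text in rows:
        yield text, model.predict(text)


# Local check with a plain list standing in for one partition.
results = list(predict_partition(["Good movie", "bad plot"]))
print(results)
```

Assuming an RDD of strings named `rdd`, the same function could be passed to `rdd.mapPartitions(predict_partition)`, so the model is loaded once per partition instead of being captured in a closure on the driver.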

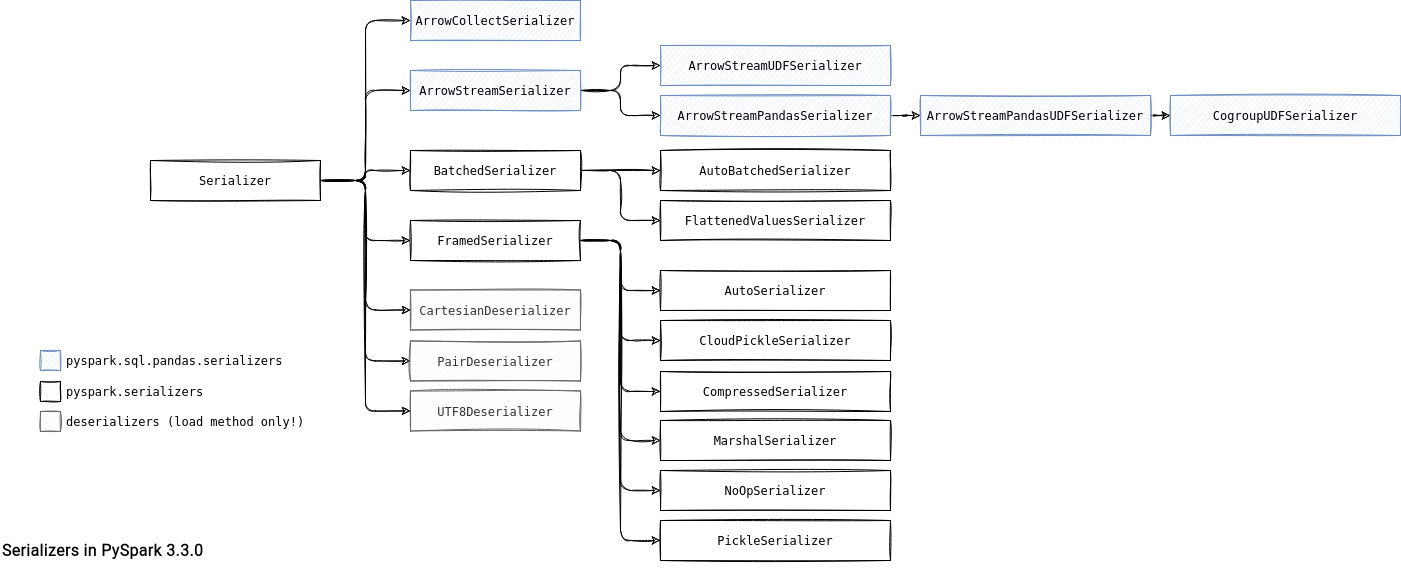

Serializers in PySpark (waitingforcode, articles about PySpark)

Pickling saves an object's state, but locks represent transient runtime state, not serializable data. This guide explains why this error occurs and presents methods to work around it by excluding locks during pickling. One of those issues is that a class object gets pickled and sent to all the worker nodes, resulting in the following error: PicklingError: Could not serialize object: TypeError: can't pickle
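One common way to exclude a lock during pickling is to define `__getstate__` and `__setstate__` on the class. The sketch below uses a hypothetical `Counter` class, which does not come from any of the quoted sources, to show the pattern: the lock is dropped from the pickled state and a fresh one is created when the object is restored.

```python
import pickle
import threading


class Counter:
    """Hypothetical class holding a lock next to ordinary, picklable state."""

    def __init__(self):
        self.count = 0
        self._lock = threading.RLock()

    def increment(self):
        with self._lock:
            self.count += 1

    def __getstate__(self):
        # Copy the instance dict and drop the lock before pickling.
        state = self.__dict__.copy()
        del state["_lock"]
        return state

    def __setstate__(self, state):
        # Restore the plain attributes and create a fresh lock.
        self.__dict__.update(state)
        self._lock = threading.RLock()


# A bare lock cannot be pickled; this is the TypeError behind the Spark message.
try:
    pickle.dumps(threading.RLock())
    lock_pickled = True
except TypeError:
    lock_pickled = False

counter = Counter()
counter.increment()
restored = pickle.loads(pickle.dumps(counter))  # works because the lock is excluded
```

The restored object has its own new lock, so it is only suitable where the lock protects per-process state, which is usually the case for objects shipped to Spark workers.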
