Apache Spark Development Lifecycle at Workday (ApacheCon 2020 PPT)

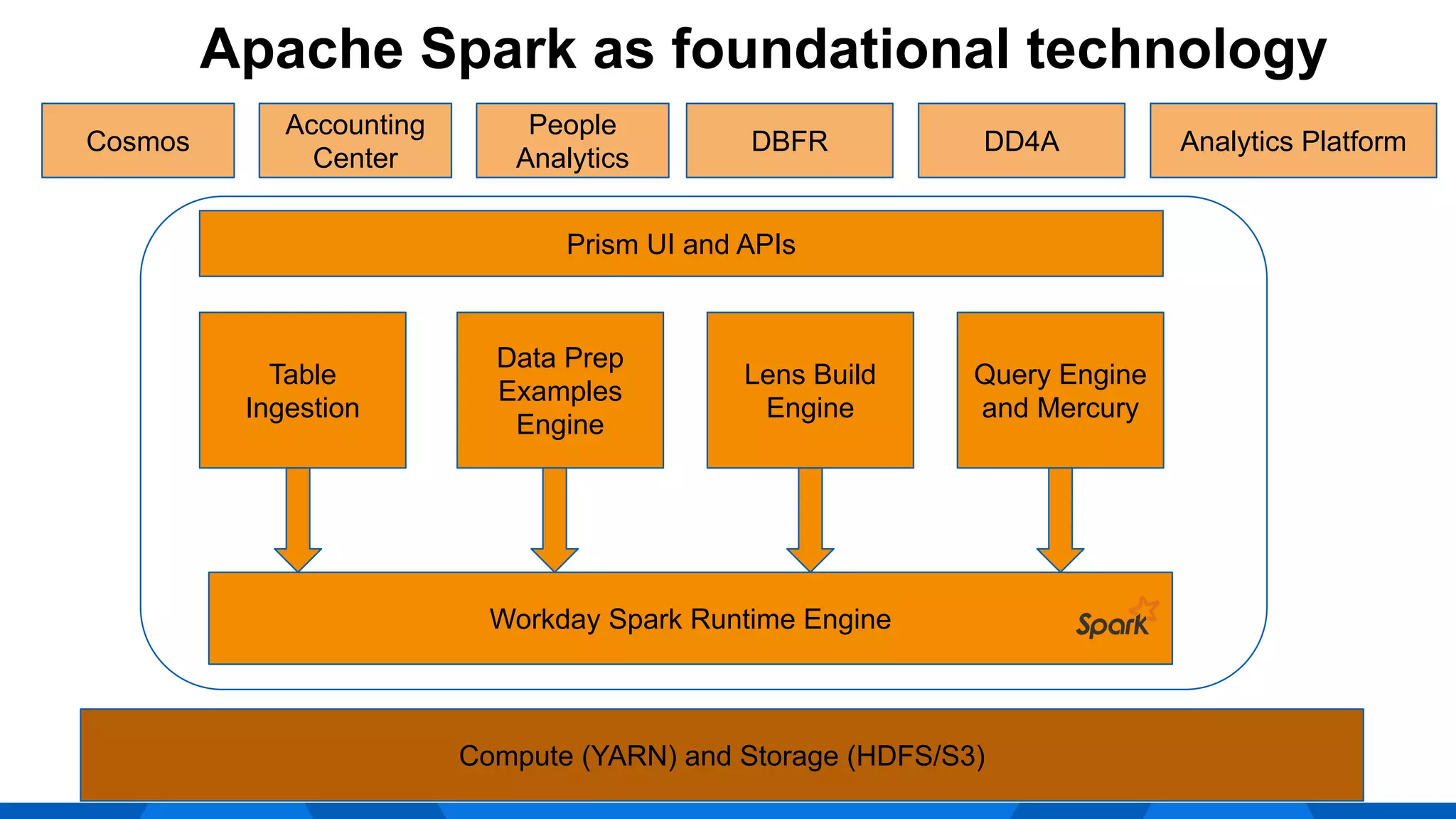

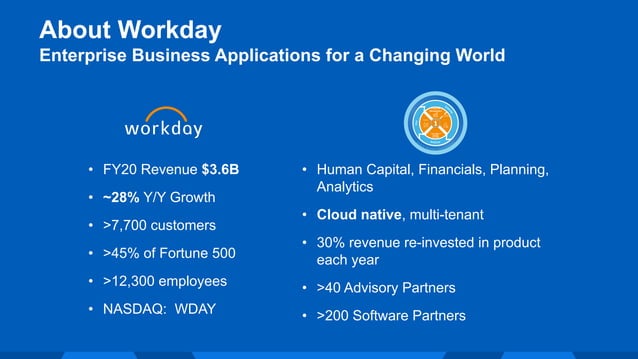

Workday uses Apache Spark as the foundational technology for its Prism Analytics product. It has developed a custom Spark upgrade model to handle upgrading Spark across its multi-tenant environment. This session covers the Spark feature development lifecycle at Workday: the custom Spark upgrade model, the benchmark and monitoring pipeline, and the Spark runtime.

Apache Spark is a cluster computing framework designed for fast, general-purpose processing. It supports batch, streaming, and iterative processing using resilient distributed datasets (RDDs). This page contains links to presentations and other key papers about the ASF and how Apache projects work that you may find useful. Many of these have been presented at ApacheCon conferences or other open source conferences. Apache Spark presentation templates and Google Slides themes are also available for building management presentations.

GraphX extends the distributed fault-tolerant collections API and interactive console of Spark with a new graph API, which leverages recent advances in graph systems (e.g., GraphLab) to enable users to easily and interactively build, transform, and reason about graph-structured data at scale.

We will start with an introduction to Apache Spark programming, then look at the history of Spark and why Spark is needed. Afterward, we will cover the fundamental Spark components and learn about Spark's core abstraction and Spark RDDs.

Apache is where the future is invented: 300 projects, from desktop to mobile to the server room. Come to ApacheCon New Orleans in fall of 2020 to learn about the software you'll be using to run your business next year.

Apache Spark is a lightning-fast cluster computing technology designed for fast computation. It builds on the ideas of Hadoop MapReduce and extends the MapReduce model to efficiently support more types of computation, including interactive queries and stream processing.
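To make the MapReduce model mentioned above concrete, here is a minimal single-process sketch of a word count in plain Python. It is an illustration only, not Spark or Hadoop code: the function names (`map_phase`, `shuffle_phase`, `reduce_phase`, `word_count`) are hypothetical, and a real cluster would spread each phase across many machines.

```python
from collections import defaultdict
from functools import reduce


def map_phase(lines):
    """Emit a (word, 1) pair for every word in every input line."""
    for line in lines:
        for word in line.lower().split():
            yield (word, 1)


def shuffle_phase(pairs):
    """Group emitted values by key, standing in for a cluster-wide shuffle."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(groups):
    """Combine each key's list of values into a single count."""
    return {key: reduce(lambda a, b: a + b, values)
            for key, values in groups.items()}


def word_count(lines):
    """Run the three phases in order on an in-memory list of lines."""
    return reduce_phase(shuffle_phase(map_phase(lines)))


if __name__ == "__main__":
    sample = ["spark extends mapreduce", "spark supports stream processing"]
    print(word_count(sample))
```

In Spark, the same pipeline is usually written as a chain of RDD operations such as `flatMap`, `map`, and `reduceByKey`, with the shuffle handled by the engine rather than by user code.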
