AlphaFold2 Demo Using Intel Xeon CPU Max Series

Intel Xeon Max Series CPU Launches, First x86-Based

AlphaFold2, developed by DeepMind, is one such workload that Intel has enabled on its Intel® Xeon® CPU Max Series. Using the available trained model for inference, the three-dimensional structures of a series of 8 proteins can be predicted.

Neither is better than the other; the differences come down to three points, including: (1) this repo focuses on accelerating inference and is compatible with the weights released by DeepMind; (2) this repo delivers a reliable pipeline accelerated on Intel® Core/Xeon and Intel® Optane® PMem by Intel® oneAPI.

Intel Launches 4th Gen Xeon Scalable and Xeon CPU Max Series

Easy-to-use protein structure and complex prediction using AlphaFold2 and AlphaFold2-Multimer. Sequence alignments and templates are generated through MMseqs2 and HHsearch.

In this blog, we benchmark different GPU configurations and CPU-only configurations to evaluate AlphaFold2 performance, measured as the total time in seconds for a protein prediction.

The easiest way to generate AlphaFold models is to use the Colab pages. Depending on the GPU that Colab assigns you, you can model up to 2000 residues (Tesla T4 and P100) or 1000 residues (Tesla K80).
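The Colab residue limits quoted above can be turned into a quick pre-check before submitting a sequence. The following is a minimal sketch, not part of any AlphaFold or ColabFold API: the table `MAX_RESIDUES` and the helper `fits_on_gpu` are hypothetical names, and the limits are simply the approximate figures from the text.

```python
# Approximate per-GPU residue limits as quoted in the text (assumed, not measured).
MAX_RESIDUES = {
    "Tesla T4": 2000,
    "Tesla P100": 2000,
    "Tesla K80": 1000,
}


def fits_on_gpu(sequence: str, gpu: str) -> bool:
    """Return True if the sequence length is within the quoted limit for `gpu`.

    Hypothetical helper for illustration only.
    """
    if gpu not in MAX_RESIDUES:
        raise KeyError(f"no quoted residue limit for GPU {gpu!r}")
    # Drop whitespace and line breaks so pasted FASTA bodies count correctly.
    residues = "".join(sequence.split())
    return len(residues) <= MAX_RESIDUES[gpu]


if __name__ == "__main__":
    query = "M" * 1500  # placeholder sequence of 1500 residues
    for gpu in MAX_RESIDUES:
        print(gpu, fits_on_gpu(query, gpu))
```

A 1500-residue query passes the check for the T4 and P100 but not for the K80, which matches the limits stated in the text.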

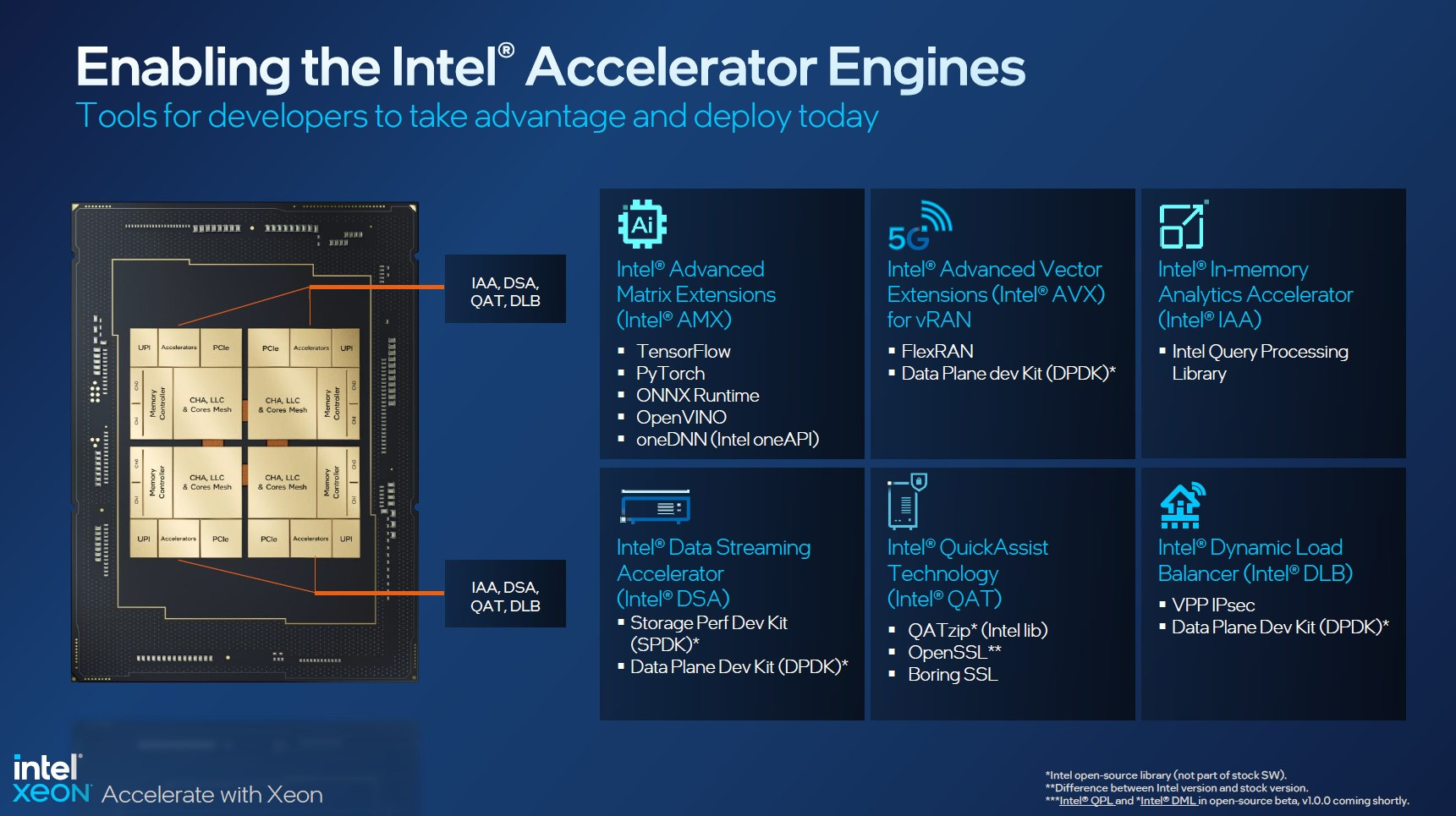

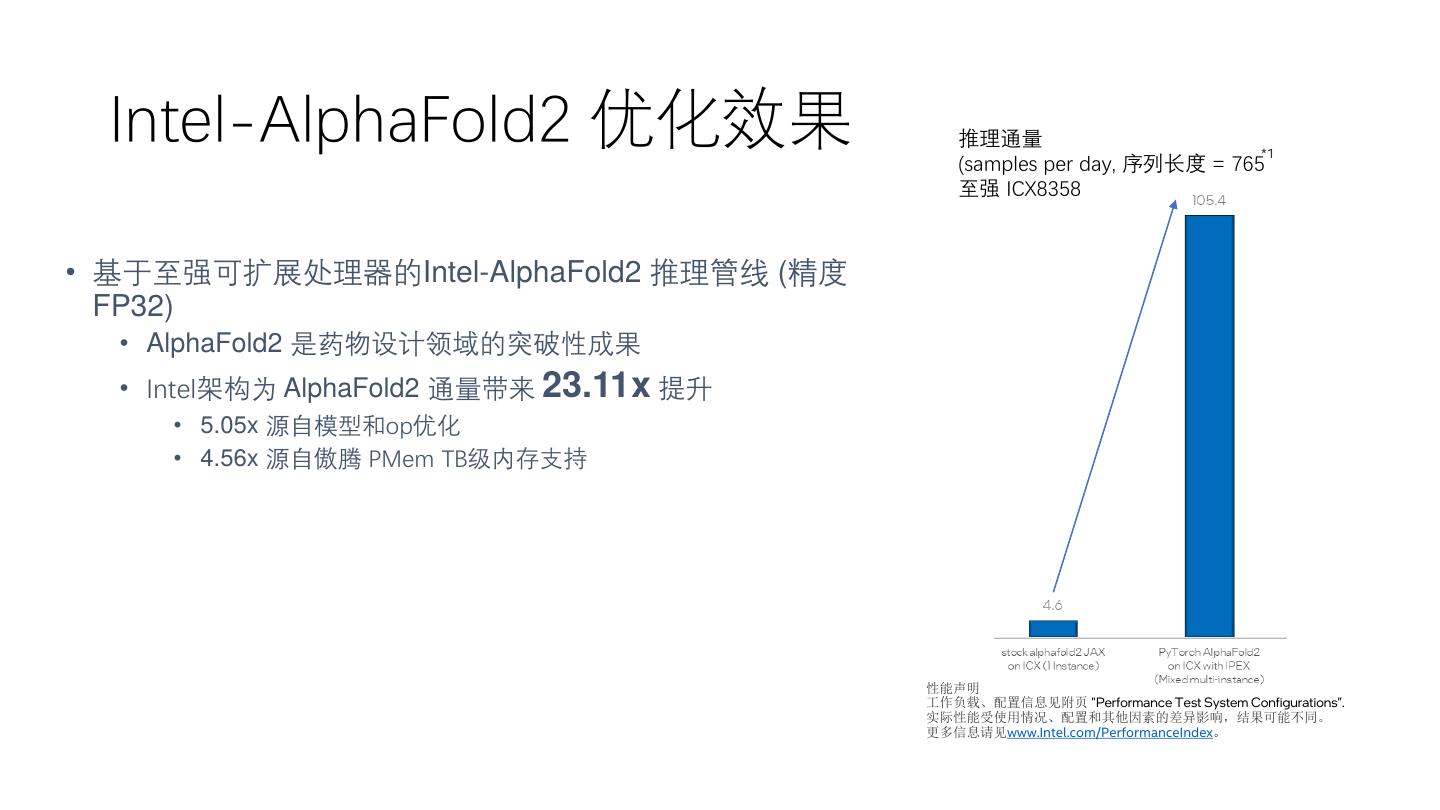

Using AlphaFold2 Colab: Step-by-Step Protein Structure Prediction

See the performance boost that Intel® Xeon® CPU Max Series processors with HBM, Intel® Advanced Matrix Extensions (Intel® AMX), and BF16 deliver on DeepMind's AlphaFold2 inference workload.

Declaration 1: This repository contains an inference pipeline of AlphaFold2 with a bona fide translation from Haiku/JAX (github.com/deepmind/alphafold) to PyTorch.
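BF16 (bfloat16), named above as one of the features behind the speedup, keeps the sign bit and the 8-bit exponent of a 32-bit float but only the top 7 mantissa bits. The sketch below illustrates the number format alone using the Python standard library. It is not how Intel AMX or the repository performs the conversion, the helper name `to_bf16` is hypothetical, and it assumes finite inputs within float32 range.

```python
import struct


def to_bf16(x: float) -> float:
    """Round a finite float to the nearest bfloat16 value (ties to even).

    Hypothetical helper: the result is returned as a Python float so the
    precision loss can be inspected directly.
    """
    # Reinterpret the float32 encoding of x as an unsigned 32-bit integer.
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    # Round to nearest even on the 16 bits that bfloat16 discards.
    rounding_bias = 0x7FFF + ((bits >> 16) & 1)
    bits = ((bits + rounding_bias) >> 16) << 16
    # Reinterpret the truncated bit pattern as a float32 again.
    return struct.unpack("<f", struct.pack("<I", bits & 0xFFFFFFFF))[0]


if __name__ == "__main__":
    for value in (1.0, 3.14159, 0.1):
        print(value, "->", to_bf16(value))
```

Values such as 1.0 survive unchanged, while 3.14159 becomes 3.140625. Only about two to three significant decimal digits are kept, in exchange for half the memory traffic of float32 and the same dynamic range.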

High-Throughput and Ultra-Long-Sequence Protein Structure Prediction with Intel AlphaFold2 (Yang Wei)

