Algorithm Matrix Multiplication Library

Matrix Multiplication Explained

These libraries contain high-performance, optimized implementations of common linear algebra operations, such as the dot product, matrix multiplication, vector addition, and scalar multiplication.

A curated and continually evolving list of frameworks, libraries, tutorials, and tools for optimizing general matrix multiply (GEMM) operations. Whether you're a beginner eager to learn the fundamentals, a developer optimizing performance-critical code, or a researcher pushing the limits of hardware, this repository is your launchpad to mastery.
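As a rough illustration of the vector operations named above, here is a minimal, unoptimized sketch in plain C. The function names (vec_dot, vec_add, vec_scale) are hypothetical and chosen only for this example; they are not the API of any particular library, and real libraries use vectorized, heavily tuned versions of these loops.

```c
#include <assert.h>
#include <stddef.h>

/* Dot product of two length-n vectors: the sum of x[i] * y[i]. */
double vec_dot(size_t n, const double *x, const double *y)
{
    assert(x != NULL && y != NULL);
    double sum = 0.0;
    for (size_t i = 0; i < n; i++)
        sum += x[i] * y[i];
    return sum;
}

/* Vector addition: z[i] = x[i] + y[i] for every i. */
void vec_add(size_t n, const double *x, const double *y, double *z)
{
    assert(x != NULL && y != NULL && z != NULL);
    for (size_t i = 0; i < n; i++)
        z[i] = x[i] + y[i];
}

/* Scalar multiplication in place: x[i] = alpha * x[i] for every i. */
void vec_scale(size_t n, double alpha, double *x)
{
    assert(x != NULL);
    for (size_t i = 0; i < n; i++)
        x[i] *= alpha;
}
```

Each routine is a single pass over memory, which is why such operations tend to be limited by memory bandwidth, whereas matrix multiplication reuses each element many times and leaves far more room for optimization.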

Matrix Multiplication Algorithm And Flowchart Code With C

This paper compares the performance of five different matrix multiplication algorithms using cuBLAS, CUDA, BLAS, OpenMP, and C threads.

I'll start with a naive matrix multiplication in C and then iteratively improve it until my implementation approaches that of AMD's bli_dgemm. My goal is not just to present optimizations, but rather for you to discover them with me.

Matrix multiplication is one of the most fundamental operations in modern computing, powering artificial intelligence, physics simulations, graphics engines, cryptography, and finance models.

We start with the naive "for-for-for" algorithm and incrementally improve it, eventually arriving at a version that is 50 times faster and matches the performance of BLAS libraries while being under 40 lines of C. All implementations are compiled with GCC 13 and run on a Zen 2 CPU clocked at 2 GHz.
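The naive starting point described above can be sketched as follows, assuming square n x n matrices stored in row-major order as flat arrays of double. The second function shows one common first improvement, reordering the loops so the innermost one reads memory contiguously. That step is an assumption for illustration, not necessarily the sequence of optimizations the quoted authors used, and the names matmul_naive and matmul_ikj are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Naive "for-for-for" product C = A * B for n x n row-major matrices.
 * The inner loop strides through B column-wise, which is cache-unfriendly. */
void matmul_naive(size_t n, const double *a, const double *b, double *c)
{
    assert(a != c && b != c); /* the output must not alias an input */
    for (size_t i = 0; i < n; i++) {
        for (size_t j = 0; j < n; j++) {
            double sum = 0.0;
            for (size_t k = 0; k < n; k++)
                sum += a[i * n + k] * b[k * n + j];
            c[i * n + j] = sum;
        }
    }
}

/* Same arithmetic with the loops reordered to i-k-j, so the inner loop
 * walks one row of B and one row of C contiguously. */
void matmul_ikj(size_t n, const double *a, const double *b, double *c)
{
    assert(a != c && b != c);
    for (size_t i = 0; i < n * n; i++)
        c[i] = 0.0;
    for (size_t i = 0; i < n; i++) {
        for (size_t k = 0; k < n; k++) {
            const double aik = a[i * n + k];
            for (size_t j = 0; j < n; j++)
                c[i * n + j] += aik * b[k * n + j];
        }
    }
}
```

Both functions perform the same n^3 multiply-add operations. Any speed difference comes from memory access order, which is typically the first thing to tune before moving on to blocking and vectorization.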

Matrix Multiplication Algorithm

Many different algorithms have been designed for multiplying matrices on different types of hardware, including parallel and distributed systems, where the computational work is spread over multiple processors (perhaps over a network).

There are software approaches to optimize general matmul algorithms, such as Strassen's and Winograd's algorithms. Other possibilities include approximate matrix multiplication algorithms, and advanced types of matrices, such as sparse matrices, low-rank matrices, and butterfly matrices.

An algorithm such as Strassen's is extremely tedious to implement, and I don't think that any BLAS library has done it so far, but it has been shown that an efficient (vectorized) implementation can be up to 10-20% faster for very large matrices.

This paper demonstrates the methodology to construct such a library containing composable and predictable algorithms so that the application-level synthesis tools can utilize it to explore the design space for an entire application.
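To make the idea behind Strassen's algorithm concrete, here is a minimal sketch of its seven-product scheme applied to a single 2 x 2 matrix of scalars in row-major order. The function name strassen_2x2 is hypothetical. In the full recursive algorithm the scalars become sub-matrices and each product becomes a recursive call; that part is not shown here.

```c
#include <assert.h>

/* One level of Strassen's scheme on 2 x 2 row-major matrices:
 * seven multiplications instead of the usual eight. */
void strassen_2x2(const double a[4], const double b[4], double c[4])
{
    assert(a != c && b != c); /* the output must not alias an input */

    const double a11 = a[0], a12 = a[1], a21 = a[2], a22 = a[3];
    const double b11 = b[0], b12 = b[1], b21 = b[2], b22 = b[3];

    /* The seven Strassen products. */
    const double m1 = (a11 + a22) * (b11 + b22);
    const double m2 = (a21 + a22) * b11;
    const double m3 = a11 * (b12 - b22);
    const double m4 = a22 * (b21 - b11);
    const double m5 = (a11 + a12) * b22;
    const double m6 = (a21 - a11) * (b11 + b12);
    const double m7 = (a12 - a22) * (b21 + b22);

    /* Recombine the products into the four entries of C. */
    c[0] = m1 + m4 - m5 + m7; /* c11 */
    c[1] = m3 + m5;           /* c12 */
    c[2] = m2 + m4;           /* c21 */
    c[3] = m1 - m2 + m3 + m6; /* c22 */
}
```

Trading one multiplication for many extra additions only pays off when each "multiplication" is itself a large block product. This is consistent with the remark above that gains appear only for very large matrices.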

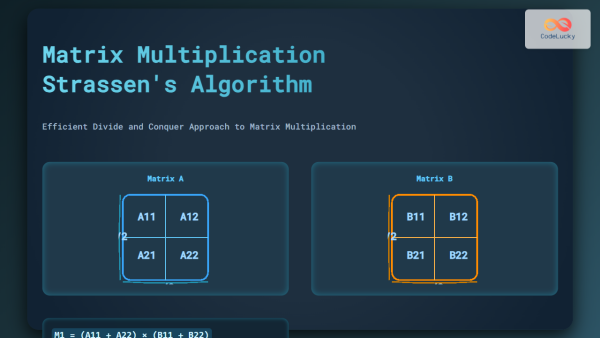

