AgentProcessBench: Testing LLM Tool-Use Quality

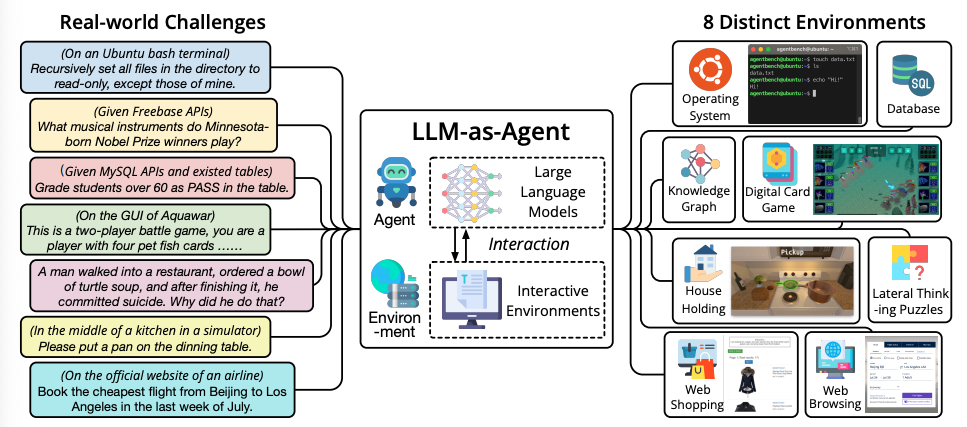

AgentProcessBench is presented as the first benchmark dedicated to evaluating step-level effectiveness in realistic, tool-augmented trajectories. It comes from the paper "AgentProcessBench: Diagnosing Step-Level Process Quality in Tool-Using Agents", which Alex discusses in an episode of AI Research Roundup.

What does AgentProcessBench measure in tool-using language agents? It evaluates fine-grained, stepwise effectiveness when language models interact with external tools, emphasizing mistakes that cannot be fixed by later reasoning. The paper describes it as the first human-annotated benchmark for step-level effectiveness evaluation in tool-using agent trajectories, and the authors report key insights intended to foster future research in reward models and pave the way toward general agents.

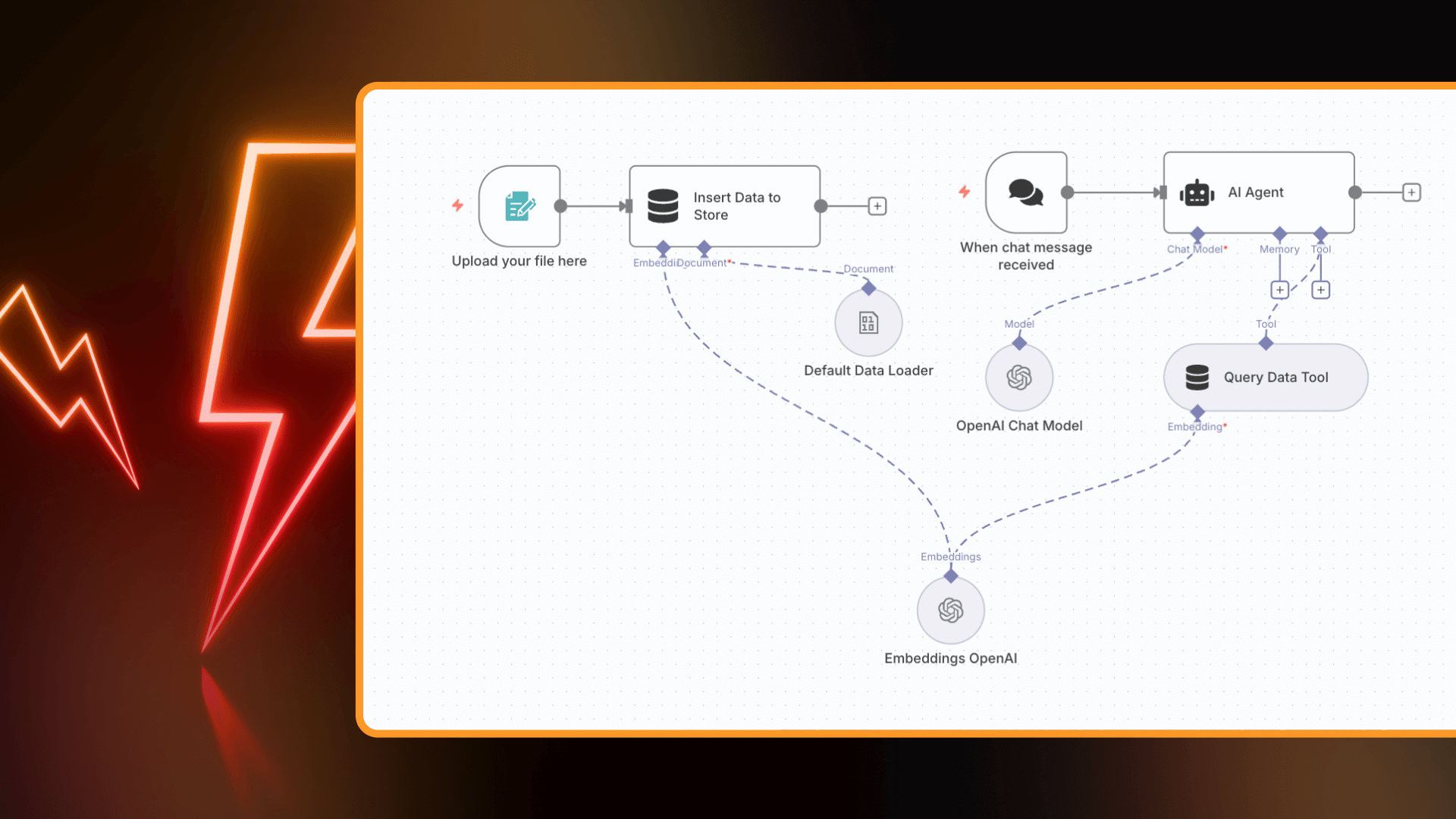

The benchmark is also aimed at process reward models (PRMs). To facilitate the development of more effective PRMs for tool-using agents, it measures LLMs' ability to assess the quality of intermediate steps in agent trajectories, using human-annotated, step-level effectiveness supervision as the reference.
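To make "step-level" evaluation concrete, here is a minimal sketch of how a judge model's per-step verdicts could be compared against human annotations. Everything in it is an assumption for illustration: the `Step` class, the binary effective/ineffective label, the example trajectory, and the plain agreement metric are hypothetical and are not taken from the paper, which may use a different label scheme and different metrics.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Step:
    """One agent step in a trajectory (hypothetical schema)."""
    action: str        # the tool call or message the agent produced
    human_label: bool  # assumed binary label: True if annotators judged the step effective


def step_agreement(trajectory: List[Step], judge_labels: List[bool]) -> float:
    """Fraction of steps where the judge's verdict matches the human label."""
    if len(trajectory) != len(judge_labels):
        raise ValueError("need exactly one judge label per step")
    if not trajectory:
        return 0.0
    matches = sum(
        step.human_label == verdict
        for step, verdict in zip(trajectory, judge_labels)
    )
    return matches / len(trajectory)


# Illustrative trajectory: the second step is an ineffective tool call,
# and the final reply inherits that mistake.
trajectory = [
    Step("search_flights(origin='SFO', dest='JFK')", True),
    Step("book_flight(flight_id='UNKNOWN')", False),
    Step("reply: 'Your flight is booked.'", False),
]

# Hypothetical verdicts from a judge model that misses the bad tool call.
judge_labels = [True, True, False]

score = step_agreement(trajectory, judge_labels)
print(f"step-level agreement: {score:.2f}")
```

Scoring each step separately, rather than only the final outcome, is what lets an evaluation like this surface a bad tool call even when later steps look plausible.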

