Adversarial Incremental Learning

GitHub Rovlet Adversarial Incremental Learning Framework For

We first explore a series of baselines that integrate incremental learning with existing adversarial training methods, and find that they lead to conflicts between acquiring new knowledge and retaining past knowledge. To address these problems, this paper proposes an incremental adversarial training method (IncAT). The method first uses clean samples and a pre-trained neural hybrid assembly.
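To make the "existing adversarial training methods" in these baselines concrete, here is a minimal sketch of one-step FGSM adversarial training on a tiny logistic-regression model. It is not IncAT or any method from the works quoted here. The model, the toy data, and the names `fgsm` and `adversarial_train` are assumptions chosen only for illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(w, b, x):
    """Probability of class 1 under a logistic-regression model."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def fgsm(w, b, x, y, eps):
    """One-step FGSM: shift each input coordinate by eps in the direction
    that increases the loss (sign of the loss gradient w.r.t. x)."""
    p = predict(w, b, x)
    # For binary cross-entropy with a logistic model, dL/dx_i = (p - y) * w_i.
    grad = [(p - y) * wi for wi in w]
    return [xi + eps * ((g > 0) - (g < 0)) for xi, g in zip(x, grad)]

def adversarial_train(data, dim, eps=0.1, lr=0.5, epochs=200):
    """SGD in which every update uses the adversarial version of the sample."""
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        for x, y in data:
            x_adv = fgsm(w, b, x, y, eps)   # craft the attack against the current model
            err = predict(w, b, x_adv) - y  # gradient of the loss w.r.t. the logit
            w = [wi - lr * err * xi for wi, xi in zip(w, x_adv)]
            b -= lr * err
    return w, b

# Toy, linearly separable data (hypothetical): label 1 near (1, 1), label 0 near (-1, -1).
data = [([1.0, 1.0], 1), ([1.2, 0.8], 1), ([-1.0, -1.0], 0), ([-0.8, -1.2], 0)]
w, b = adversarial_train(data, dim=2)
```

In an incremental setting, a loop like `adversarial_train` would be rerun on each new task's data. That is where the conflict described above can appear, because nothing in this sketch protects what was learned on earlier tasks.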

To this end, we propose Adversarial Assistance-based Incremental IDS (AdvAS-IIDS for short), a new scheme that introduces adversarial samples into incremental learning in the form of a disentangled data distribution to boost the learning effect on clean samples.

Concretely, we propose a conditional adversarial network A = {B, G, D} for incremental learning. As shown in Figure 1, it has three parts: a base sub-net B, a generator G, and a discriminator D.

The process of incremental learning in generative adversarial networks (GANs) presents an immense challenge in the form of catastrophic forgetting.

This is the official repository for "Enhancing Robustness in Incremental Learning with Adversarial Training" (AAAI 2025). We extend the codebase from Mammoth to implement FLAIR, a novel method for enhancing adversarial robustness in class-incremental learning.

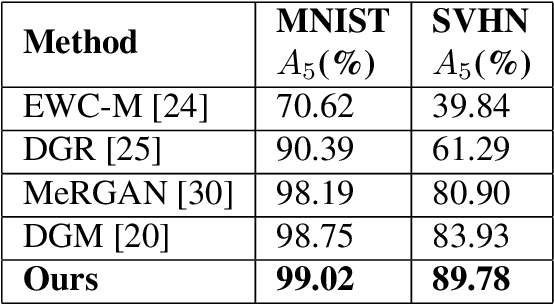

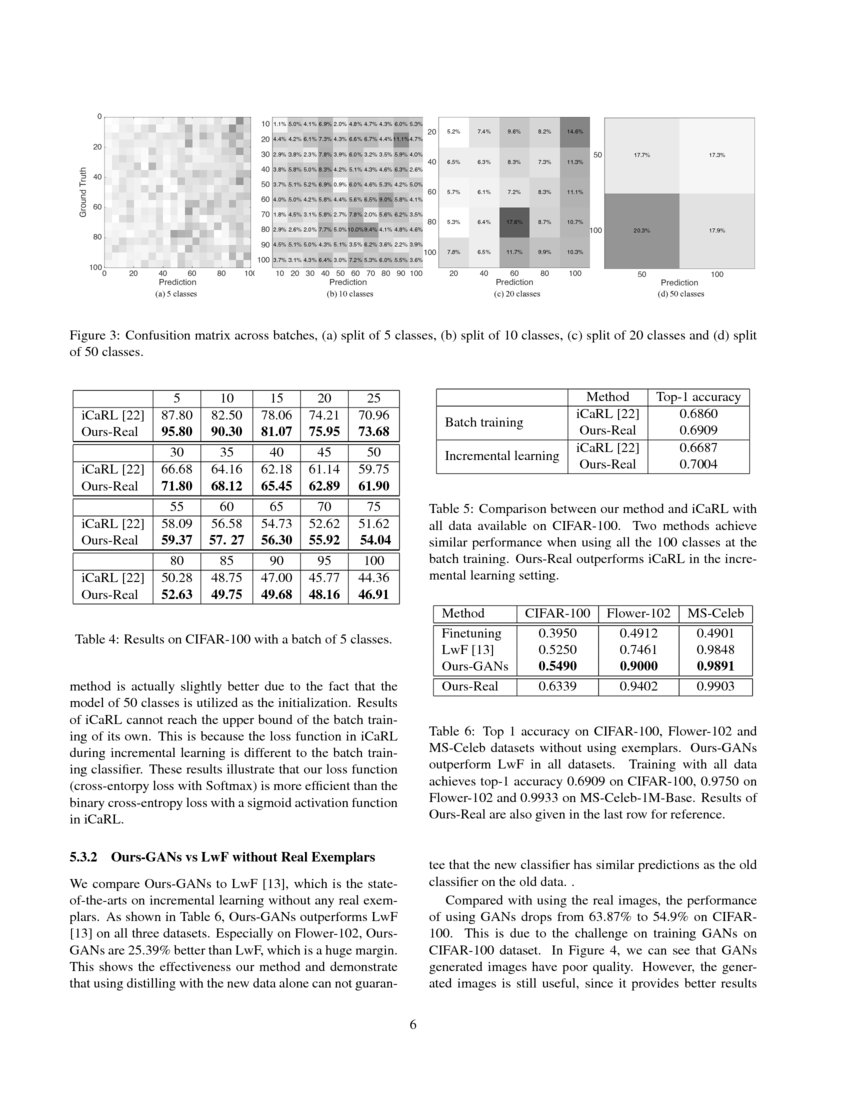

Incremental Classifier Learning With Generative Adversarial Networks

In this study, we investigate adversarially robust class-incremental learning (ARCIL), which combines adversarial robustness with incremental learning. We observe that combining incremental learning with naive adversarial training easily leads to a loss of robustness.

For image generation, generative adversarial networks (GANs) have proved their superior performance and can be adopted as a generative memory in incremental learning to mitigate catastrophic forgetting.

To this end, we introduce a novel CIL framework called the Perturbation Volume-up Framework (PVF). This framework divides each epoch into multiple iterations, each of which performs three main tasks in sequence: intensifying adversarial data, extracting new knowledge, and reinforcing old knowledge.

In this paper, we focus on exemplar-free class-incremental learning (EFCIL), where only class-mean features (prototypes) and an old model preserved from the previous task are available during the new task.
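As a rough illustration of the exemplar-free setting, the sketch below keeps only one mean feature vector (prototype) per class and classifies by nearest prototype. Nearest-class-mean classification is an assumption made here for illustration, since the quoted text does not say how the prototypes are used. The class name `PrototypeClassifier`, the toy features, and the idea that features come from an already-trained extractor are likewise hypothetical.

```python
import math

class PrototypeClassifier:
    """Nearest-class-mean classifier that stores one mean feature vector per class."""

    def __init__(self):
        self.prototypes = {}  # class label -> mean feature vector

    def add_task(self, features_by_class):
        """Register the classes of a new task. Only the per-class means are kept;
        the individual samples (exemplars) are discarded."""
        for label, feats in features_by_class.items():
            n = len(feats)
            self.prototypes[label] = [sum(col) / n for col in zip(*feats)]

    def predict(self, feature):
        """Return the label whose prototype is closest in Euclidean distance."""
        return min(self.prototypes,
                   key=lambda c: math.dist(feature, self.prototypes[c]))

clf = PrototypeClassifier()
# Task 1: two classes, with features assumed to come from a feature extractor.
clf.add_task({"cat": [[0.0, 1.0], [0.2, 0.8]], "dog": [[1.0, 0.0], [0.8, 0.2]]})
# Task 2: a new class arrives; the old prototypes stay, and no old samples are needed.
clf.add_task({"bird": [[1.0, 1.0], [1.2, 0.8]]})
```

After the second task, `clf.predict` still distinguishes the task-1 classes using only their stored means. A real EFCIL method would also have to handle drift in the feature extractor between tasks, which this sketch ignores.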


