Adding Models To Ollama Debuggercafe

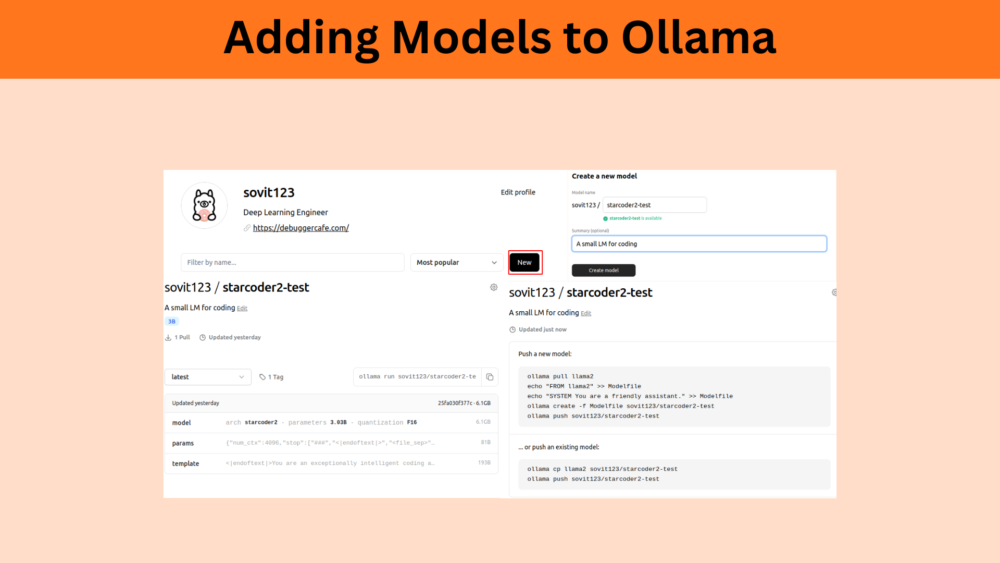

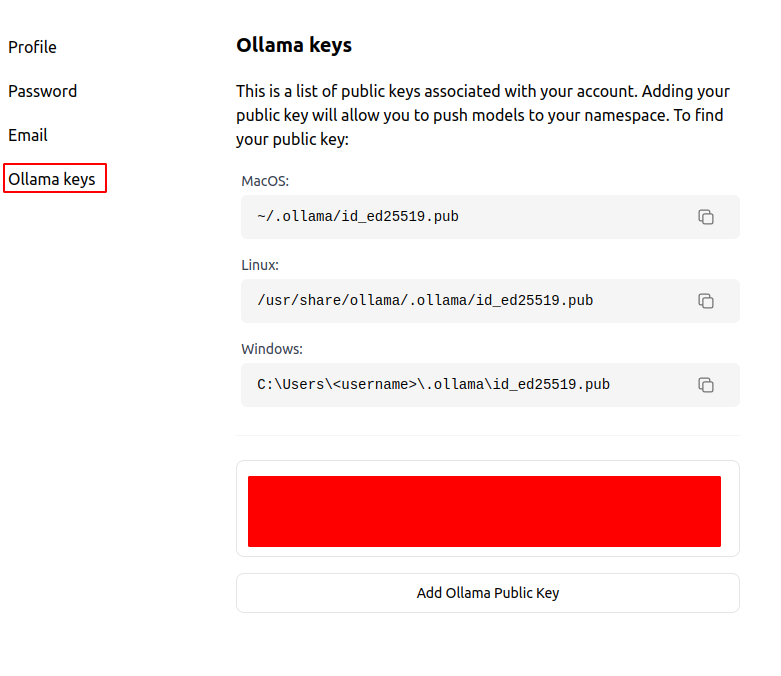

In this article, we will cover the process of adding models to Ollama. Ollama already provides thousands of LLMs, ranging from text completion models and instruction-tuned models to code LLMs. So why would you need to add new models to Ollama? To push a model to Ollama, first make sure that it is named correctly with your username. You may have to use the `ollama cp` command to copy your model to give it the correct name. Once you're happy with your model's name, use the `ollama push` command to push it to Ollama.
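The rename-and-push step can be sketched as a small Python helper that only builds the two commands and prints them. This is a minimal sketch, not the article's own script: the model name `starcoder2-3b-ft`, the username `alice`, and the helper names are hypothetical, and nothing is executed unless `execute=True` is passed.

```python
import shlex
import subprocess


def push_commands(local_name: str, username: str, tag: str = "latest") -> list[list[str]]:
    """Build the `ollama cp` and `ollama push` invocations for a local model."""
    # Drop any existing namespace and tag so the target becomes <username>/<model>:<tag>.
    base = local_name.split("/")[-1].split(":")[0]
    target = f"{username}/{base}:{tag}"
    return [
        ["ollama", "cp", local_name, target],  # copy the model under the namespaced name
        ["ollama", "push", target],            # upload the namespaced copy
    ]


def run_all(commands: list[list[str]], execute: bool = False) -> None:
    """Print each command; run it only when execute=True (assumes ollama is installed)."""
    for cmd in commands:
        print(shlex.join(cmd))
        if execute:
            subprocess.run(cmd, check=True)


# Hypothetical names, dry run only.
run_all(push_commands("starcoder2-3b-ft", "alice"))
```

Keeping the command construction separate from execution makes it easy to check the final model name before anything is uploaded.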

If you want to get started, read my post on setting up a local LLM. This post gives an alternative to connecting to the container from the CLI and running a command. This is part of a series of experiments with AI systems. Using the Ollama WebUI server, it's easy to add models. If you have just fine-tuned or merged a model and want to run it locally, you might also want to add it to Ollama.

Now we will see how to download and use the different models provided by Ollama with the help of the command line interface (CLI). Open your terminal and follow these steps.
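A typical terminal session for downloading and trying a model might look like the commands generated below. This is a hedged sketch: the tag `starcoder2:3b` and the prompt are example values chosen for illustration, and the helper `cli_session` is hypothetical. It only formats the commands so they can be pasted into a terminal.

```python
import shlex


def cli_session(model: str, prompt: str) -> list[str]:
    """Return shell-ready Ollama commands: download, verify, then run a one-off prompt."""
    steps = [
        ["ollama", "pull", model],         # download the model from the Ollama library
        ["ollama", "list"],                # confirm it now appears among local models
        ["ollama", "run", model, prompt],  # run a single prompt non-interactively
    ]
    return [shlex.join(step) for step in steps]


# Example values (assumptions): any model tag from the library can be substituted.
for line in cli_session("starcoder2:3b", "Write a hello world program"):
    print(line)
```

Running `ollama run` with only the model name, without a prompt, opens an interactive session instead.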

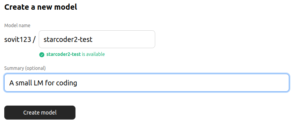

This guide covers the full path: installing Ollama, pulling Gemma 4, running it on the CLI, setting a custom model storage location (essential if your Mac's internal drive is tight), wiring it into Claude Code, Codex CLI, and OpenCode, and configuring advanced settings with Modelfiles and context tuning.

In this article, we are adding a custom model to Ollama. We take a fine-tuned Hugging Face model, StarCoder2 3B, convert it to GGUF format, add it to local Ollama, and push the model to Ollama Hub.

A practical Gemma 4 Ollama setup guide covers install steps, model tags, `ollama list` and `ollama ps` checks, local API examples, and platform notes for Mac, Windows, and Linux.

The article provides a step-by-step guide on how to add a custom large language model (LLM) to the Ollama platform for local execution, including the process of model quantization to optimize performance.

