Adaptive Sample Selection For Robust Learning Under Label Noise

In this paper, we propose an adaptive sample selection strategy that relies only on the batch statistics of a given mini-batch to provide robustness against label noise. The algorithm adaptively selects samples based on the statistics of the loss values observed in a mini-batch and achieves good robustness to label noise.
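As a rough illustration of selecting samples from mini-batch loss statistics, the sketch below keeps the samples whose cross-entropy loss does not exceed a threshold computed from that batch alone. The rule `mean + k * std`, the function name `select_by_batch_statistics`, and the parameter `k` are assumptions made for this example; the paper's exact criterion may differ.

```python
import numpy as np

def select_by_batch_statistics(probs, labels, k=0.0):
    """Return (mask, threshold) for one mini-batch.

    probs  : (batch, classes) predicted class probabilities
    labels : (batch,) integer labels, possibly noisy
    k      : hypothetical knob; threshold = mean(loss) + k * std(loss)
    """
    probs = np.asarray(probs, dtype=float)
    labels = np.asarray(labels)
    # Probability the model assigns to each sample's given label.
    p_label = probs[np.arange(labels.shape[0]), labels]
    # Per-sample cross-entropy loss; clip to avoid log(0).
    losses = -np.log(np.clip(p_label, 1e-12, 1.0))
    # The threshold depends only on statistics of this mini-batch.
    threshold = losses.mean() + k * losses.std()
    # Small-loss samples are treated as likely clean and kept.
    mask = losses <= threshold
    return mask, threshold

# Toy batch: the last sample's label disagrees strongly with the model.
probs = np.array([[0.90, 0.10],
                  [0.80, 0.20],
                  [0.85, 0.15],
                  [0.05, 0.95]])
labels = np.array([0, 0, 0, 0])
mask, threshold = select_by_batch_statistics(probs, labels)
```

In a training loop, the mask would typically be used to average the loss over the kept samples only, so that high-loss (suspected noisy) samples do not contribute to the gradient for that step.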

Motivated by this, we propose a simple, adaptive, curriculum-based sample selection strategy called batch reweighting (BARE). The idea is to focus on the current state of learning, within a given mini-batch, to identify the noisy labels in it. The strategy is data-dependent, relies only on the batch statistics of that mini-batch, and its effectiveness is demonstrated empirically on benchmark datasets.

A separate work introduces a two-stage adaptive sample selection approach tailored to the learning with noisy labels (LNL) problem. By leveraging the disagreement between dual models, the stability of model predictions, and feature similarities, that method dynamically identifies clean samples more effectively.

The example run trains a neural network with categorical cross-entropy (CCE) loss on the unaugmented MNIST dataset, corrupted with 40% symmetric label noise. Training uses a batch size of 128 for 200 epochs, over a total of 5 runs.

Another paper introduces the Adaptive Nearest Neighbours and Eigenvector-based (ANNE) sample selection methodology, which integrates loss-based sampling with the feature-based sampling methods FINE and adaptive kNN to optimize performance across a wide range of noise rates.

A further approach selects high-quality clean samples from the training set and progressively extends the clean set by performing kNN classification on pre-filtered clean samples, maximizing the value of the clean set filtered in the previous stage.

Finally, another adaptive sample selection method trains deep neural networks robustly and prevents noise contamination in the disagreement strategy. Specifically, it calculates the threshold of the small-loss criterion by considering the loss distribution of the whole batch at each iteration.
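The 40% symmetric label noise mentioned in the training setup can be simulated along the following lines. This is a minimal sketch under one common convention, in which each label is flipped with probability `noise_rate` to a different class chosen uniformly at random; some papers instead allow the flipped label to coincide with the original. The function name `add_symmetric_label_noise` and its parameters are hypothetical and not taken from the source.

```python
import numpy as np

def add_symmetric_label_noise(labels, noise_rate, num_classes, seed=0):
    """Return (noisy_labels, flip_mask) under symmetric label noise."""
    rng = np.random.default_rng(seed)
    labels = np.asarray(labels)
    noisy = labels.copy()
    # Decide independently for each sample whether its label is corrupted.
    flip = rng.random(labels.shape[0]) < noise_rate
    # An offset in 1..num_classes-1 guarantees the new label differs
    # from the original and is uniform over the remaining classes.
    offsets = rng.integers(1, num_classes, size=labels.shape[0])
    noisy[flip] = (labels[flip] + offsets[flip]) % num_classes
    return noisy, flip

# Stand-in for MNIST training labels: 10 classes, 1000 samples each.
clean_labels = np.repeat(np.arange(10), 1000)
noisy_labels, flip_mask = add_symmetric_label_noise(clean_labels, 0.4, 10)
```

Keeping `flip_mask` around is useful for evaluation, since it lets one measure how many of the samples rejected by a selection rule were actually corrupted.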

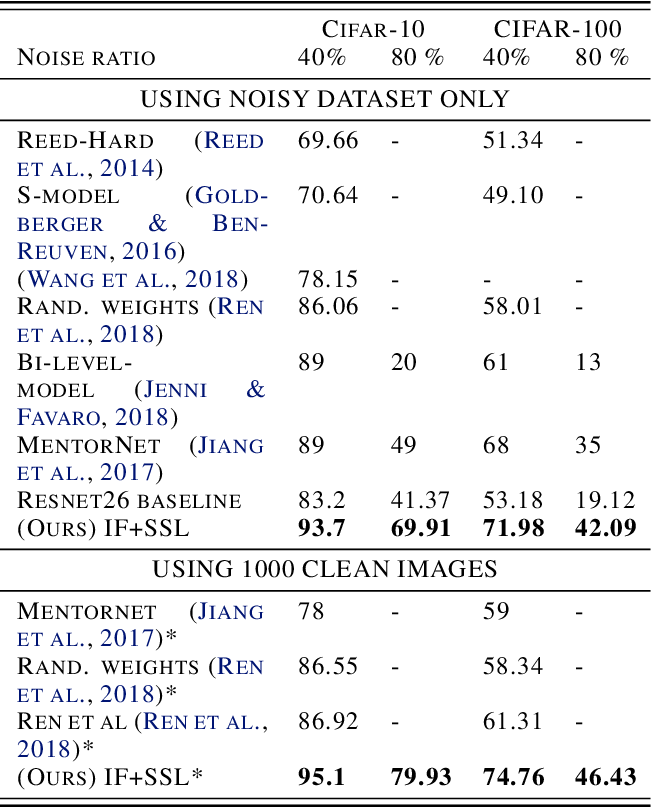

