6 Finetuning For Classification Build A Large Language Model From

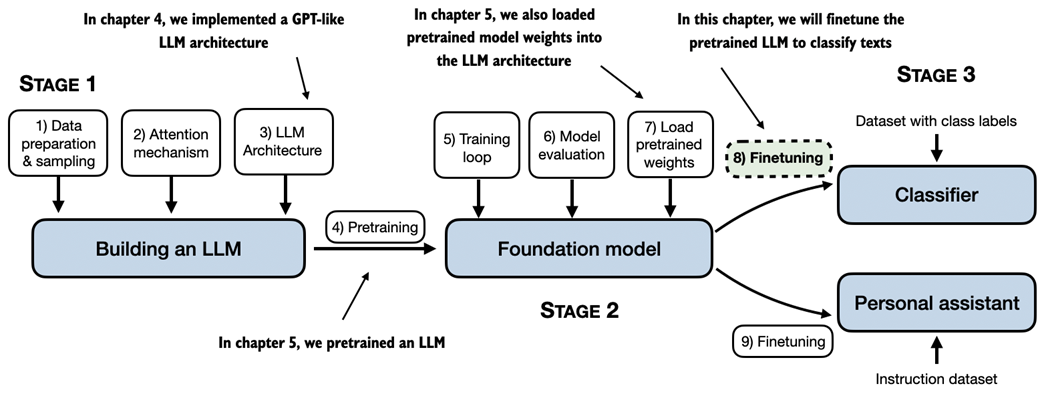

This chapter focuses on finetuning a pretrained LLM as a classifier. Figure 6.1 shows two main ways of finetuning an LLM: finetuning for classification (step 8) and finetuning an LLM to follow instructions (step 9). The next section discusses these two ways of finetuning in more detail. Now we will reap the fruits of our labor by finetuning the LLM on a specific target task, such as classifying text. The concrete example we examine is classifying text messages as "spam" or "not spam."
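To make the spam example concrete, the sketch below shows how two output scores could be turned into a prediction and a training loss. It is only an illustration under stated assumptions: the label-to-id mapping, the function names, and the example logits are hypothetical and do not come from the book's code.

```python
import math

# Hypothetical label mapping; the text only names the two classes.
LABEL_TO_ID = {"not spam": 0, "spam": 1}
ID_TO_LABEL = {idx: label for label, idx in LABEL_TO_ID.items()}


def softmax(logits):
    """Convert raw scores into probabilities (numerically stable)."""
    largest = max(logits)
    exps = [math.exp(value - largest) for value in logits]
    total = sum(exps)
    return [value / total for value in exps]


def cross_entropy(logits, target_id):
    """Negative log-probability assigned to the correct class."""
    return -math.log(softmax(logits)[target_id])


def predict(logits):
    """Return the label whose score is highest."""
    best = max(range(len(logits)), key=lambda i: logits[i])
    return ID_TO_LABEL[best]


# Made-up logits standing in for a classifier's two output scores.
example_logits = [0.2, 2.3]
print(predict(example_logits))
print(cross_entropy(example_logits, LABEL_TO_ID["spam"]))
```

The loss is small when the score for the correct class dominates and grows as the model becomes confident in the wrong class, which is the signal supervised finetuning minimizes.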

This notebook explores the process of finetuning LLMs to create a classification model, based on Chapter 6 of Sebastian Raschka's book. All concepts, architectures, and implementation approaches are credited to Sebastian Raschka's work. Chapter 6 provides a detailed walkthrough of finetuning large language models for classification tasks. By modifying the architecture, preparing datasets, and employing supervised training techniques, the LLM can be transformed into a task-specific classifier. Learn how to create, train, and tweak large language models (LLMs) by building one from the ground up! In Build a Large Language Model (From Scratch), bestselling author Sebastian…
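The dataset preparation step mentioned above can be sketched roughly as follows. This is a minimal illustration, not the book's implementation: undersampling the larger class, right-padding with a `pad_id` of 0, the 70/30 split, and all function names are assumptions chosen for the example, and the token ids are invented.

```python
import random


def balance(examples, seed=123):
    """Undersample the larger class so both classes have equal counts.

    Each example is a (token_ids, label) pair with label 1 = spam, 0 = not spam.
    """
    spam = [ex for ex in examples if ex[1] == 1]
    ham = [ex for ex in examples if ex[1] == 0]
    n = min(len(spam), len(ham))
    rng = random.Random(seed)
    balanced = rng.sample(spam, n) + rng.sample(ham, n)
    rng.shuffle(balanced)
    return balanced


def pad_batch(token_id_lists, pad_id=0):
    """Right-pad every sequence to the length of the longest one."""
    max_len = max(len(ids) for ids in token_id_lists)
    return [ids + [pad_id] * (max_len - len(ids)) for ids in token_id_lists]


def split(examples, train_frac=0.7):
    """Split an already shuffled list into training and validation parts."""
    cut = int(len(examples) * train_frac)
    return examples[:cut], examples[cut:]


# Invented token ids; a real pipeline would obtain them from a tokenizer.
examples = [([5, 8, 2], 1), ([7], 0), ([3, 3], 0), ([9, 1, 4, 6], 0), ([2, 2], 1)]
balanced = balance(examples)
train, val = split(balanced)
padded = pad_batch([ids for ids, _ in balanced])
print(len(balanced), len(train), len(val))
```

Padding to a common length lets messages of different sizes be stacked into one batch, and balancing keeps a classifier from scoring well simply by always predicting the majority class.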

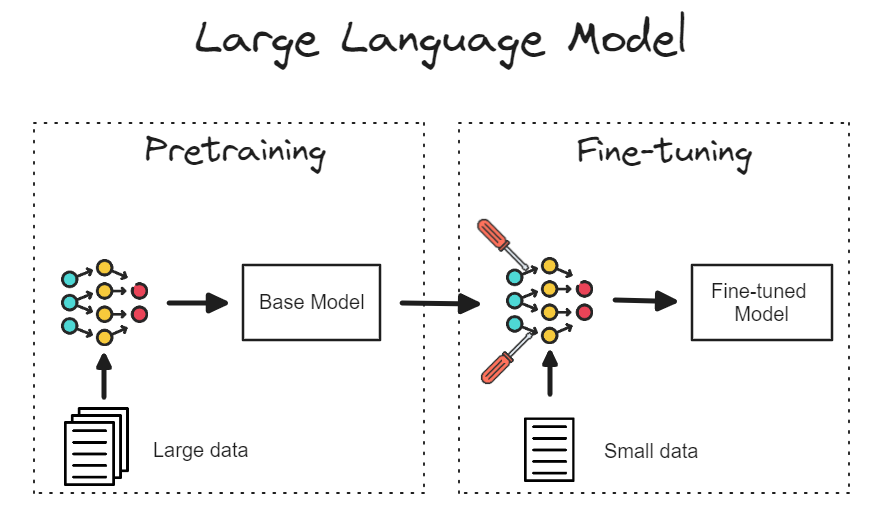

The main stages involved in coding and finetuning a large language model (LLM) for text classification are: 1) coding the LLM architecture, 2) pretraining the LLM on a general text dataset, and 3) finetuning the pretrained LLM for a specific task, such as classifying text messages as spam or not spam. Coding an LLM from the ground up is an excellent exercise for understanding its mechanics and limitations. It also equips us with the knowledge required to pretrain or finetune existing open-source LLM architectures on our own domain-specific datasets or tasks. The accompanying code is the official codebase for the book Build a Large Language Model (From Scratch). A supplementary video explains how to finetune an LLM as a classifier (here using a spam classification example) as a gentle introduction to finetuning, before instruction…
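Stage 3 above, finetuning for a specific task, can be illustrated with a toy supervised training loop. The sketch assumes the pretrained model is frozen and supplies a fixed feature vector per message, so that only a small two-class output head is trained. The feature values, hyperparameters, and function names are all hypothetical, and a real setup would use a deep learning framework rather than plain Python.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities (numerically stable)."""
    largest = max(scores)
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def head_logits(weights, biases, x):
    """Linear classification head: one score per class."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, biases)]


def train_head(features, labels, num_classes=2, lr=0.5, epochs=200):
    """Fit the head with stochastic gradient descent on cross-entropy loss."""
    dim = len(features[0])
    weights = [[0.0] * dim for _ in range(num_classes)]
    biases = [0.0] * num_classes
    for _ in range(epochs):
        for x, y in zip(features, labels):
            probs = softmax(head_logits(weights, biases, x))
            for k in range(num_classes):
                # Gradient of cross-entropy w.r.t. logit k: p_k - 1[k == y]
                grad = probs[k] - (1.0 if k == y else 0.0)
                for j in range(dim):
                    weights[k][j] -= lr * grad * x[j]
                biases[k] -= lr * grad
    return weights, biases


def accuracy(weights, biases, features, labels):
    """Fraction of examples whose highest-scoring class matches the label."""
    correct = 0
    for x, y in zip(features, labels):
        scores = head_logits(weights, biases, x)
        if max(range(len(scores)), key=lambda k: scores[k]) == y:
            correct += 1
    return correct / len(labels)


# Invented 2-dimensional features standing in for frozen model outputs.
features = [[1.0, 0.1], [0.9, 0.2], [0.1, 1.0], [0.2, 0.8]]
labels = [0, 0, 1, 1]  # 0 = not spam, 1 = spam
weights, biases = train_head(features, labels)
print(accuracy(weights, biases, features, labels))
```

Training only a small head on top of a fixed model is one common way to adapt a pretrained network to a labeled task; whether and how many of the pretrained layers are also updated is a separate design choice that this sketch does not address.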
