5 Techniques to Fine-Tune LLMs

Fine-Tune Your LLMs

This report aims to serve as a comprehensive guide for researchers and practitioners, offering actionable insights into fine-tuning LLMs while navigating the challenges and opportunities of this rapidly evolving field. Today there are many efficient ways to fine-tune LLMs, and five popular techniques are depicted below. We covered them in detail in "Understanding LoRA-Derived Techniques for Optimal LLM Fine-Tuning", "Implementing LoRA From Scratch for Fine-Tuning LLMs", and "Implementing DoRA (an Improved LoRA) From Scratch".

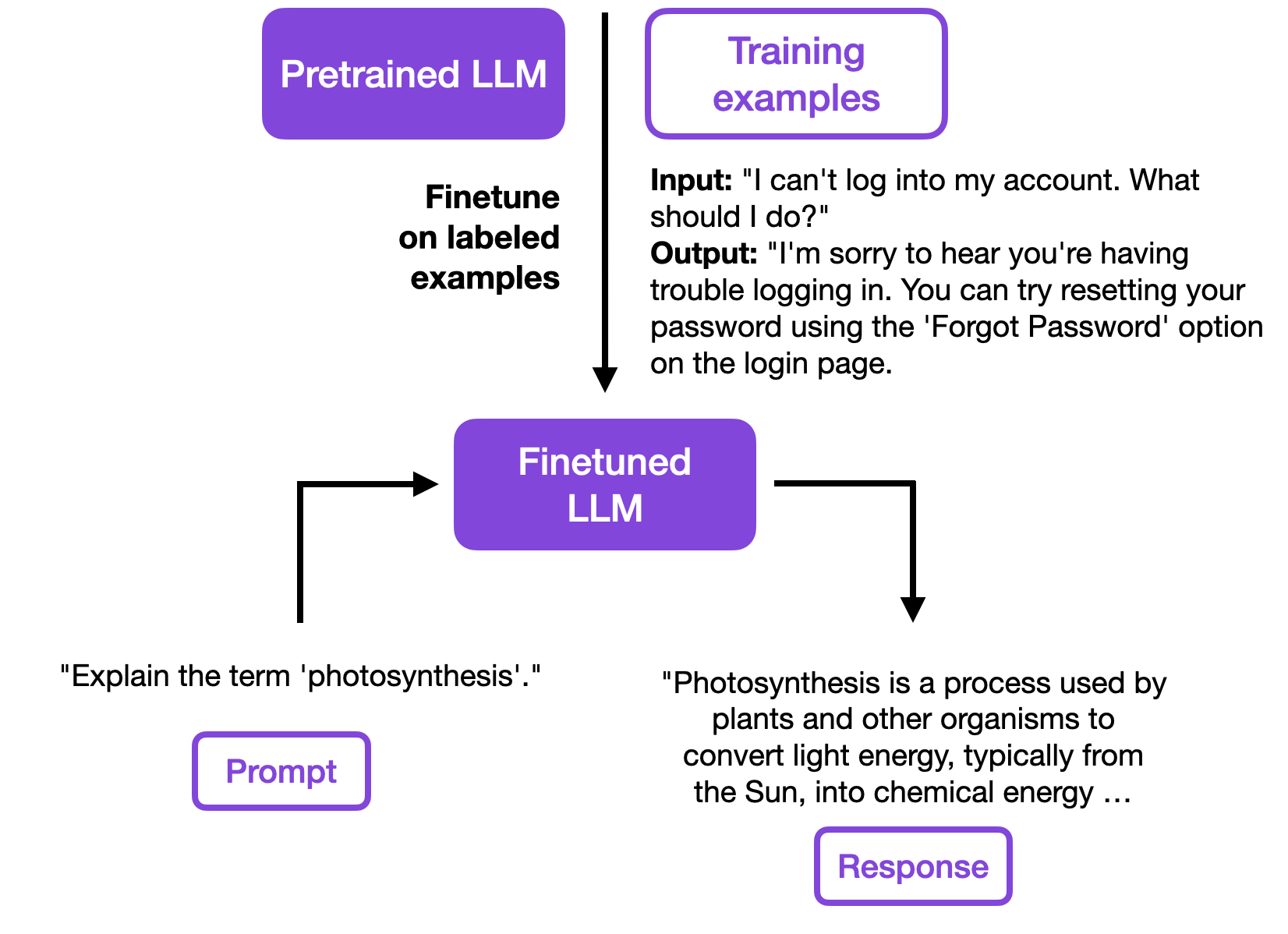

Fine-Tuning LLMs: Overview, Methods, and Best Practices

Fine-tuning is the process of adjusting the parameters of a pre-trained LLM for a specific task or domain. This guide covers fine-tuning for both large and small language models, from fundamental concepts to advanced techniques. It looks at key strategies for improving LLM performance, starting with prompt engineering and moving through retrieval-augmented generation (RAG) to fine-tuning. I share my top techniques for fine-tuning LLMs, along with best practices, a step-by-step guide, and a comparison with RAG and prompt engineering.

Fine-Tuning LLMs: In-Depth Analysis With Llama 2

The rapid advancement of large language models (LLMs) has revolutionized natural language processing. However, the cost of training or fine-tuning these models remains a major bottleneck: traditional (full) fine-tuning updates billions of parameters and demands immense computational power. Fine-tuning refers to the process of taking a pre-trained model and adapting it to a specific task by training it further on a smaller, domain-specific dataset. Here are five efficient techniques to optimize this process:

1) LoRA (Low-Rank Adaptation) adds two low-rank matrices (A and B) alongside a weight matrix (W). Instead of updating W, only A and B are trained, which reduces memory usage significantly.
2) LoRA-FA (Frozen-A LoRA) freezes A and trains only B, which further reduces activation memory requirements.
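To make the LoRA and LoRA-FA descriptions above concrete, here is a minimal sketch in plain Python (no deep learning framework). It is an illustration under stated assumptions, not a reference implementation: the class name `LoRALinear`, the helper `matvec`, the `alpha / r` scaling factor, and the zero initialization of B are choices made for this example and are not specified in the text above. The layer computes h = W x + (alpha / r) · B (A x), where W is frozen and only A and B (or only B, in the LoRA-FA case) would receive gradient updates.

```python
import random


def matvec(M, x):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(m_ij * x_j for m_ij, x_j in zip(row, x)) for row in M]


class LoRALinear:
    """Hypothetical minimal LoRA layer: h = W x + (alpha / r) * B (A x)."""

    def __init__(self, d_in, d_out, r, alpha=1.0, seed=0):
        rng = random.Random(seed)
        # Frozen weight W; random values stand in for real pre-trained weights.
        self.W = [[rng.gauss(0, 0.02) for _ in range(d_in)] for _ in range(d_out)]
        # Low-rank factors: A is (r x d_in), B is (d_out x r).
        self.A = [[rng.gauss(0, 0.02) for _ in range(d_in)] for _ in range(r)]
        # Assumption: B starts at zero, so the adapted layer initially
        # produces exactly the same output as the frozen base layer.
        self.B = [[0.0] * r for _ in range(d_out)]
        self.scale = alpha / r  # assumed scaling factor, not from the text

    def forward(self, x):
        base = matvec(self.W, x)                    # frozen path: W x
        update = matvec(self.B, matvec(self.A, x))  # low-rank path: B (A x)
        return [b + self.scale * u for b, u in zip(base, update)]

    def trainable_params(self, freeze_a=False):
        """Count trainable parameters; freeze_a=True mimics LoRA-FA."""
        n_a = 0 if freeze_a else len(self.A) * len(self.A[0])
        n_b = len(self.B) * len(self.B[0])
        return n_a + n_b

    def frozen_params(self):
        """Count parameters in the frozen weight matrix W."""
        return len(self.W) * len(self.W[0])


layer = LoRALinear(d_in=64, d_out=64, r=4)
print(layer.trainable_params(),               # LoRA: A and B
      layer.trainable_params(freeze_a=True),  # LoRA-FA: B only
      layer.frozen_params())                  # full weight matrix W
# prints: 512 256 4096
```

Even in this toy 64 x 64 layer with rank 4, the trainable parameter count drops from 4096 (updating W directly) to 512 with LoRA and 256 when A is also frozen. The gap widens as the layer dimensions grow relative to the rank.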
