4 Ways to Fine-Tune LLMs

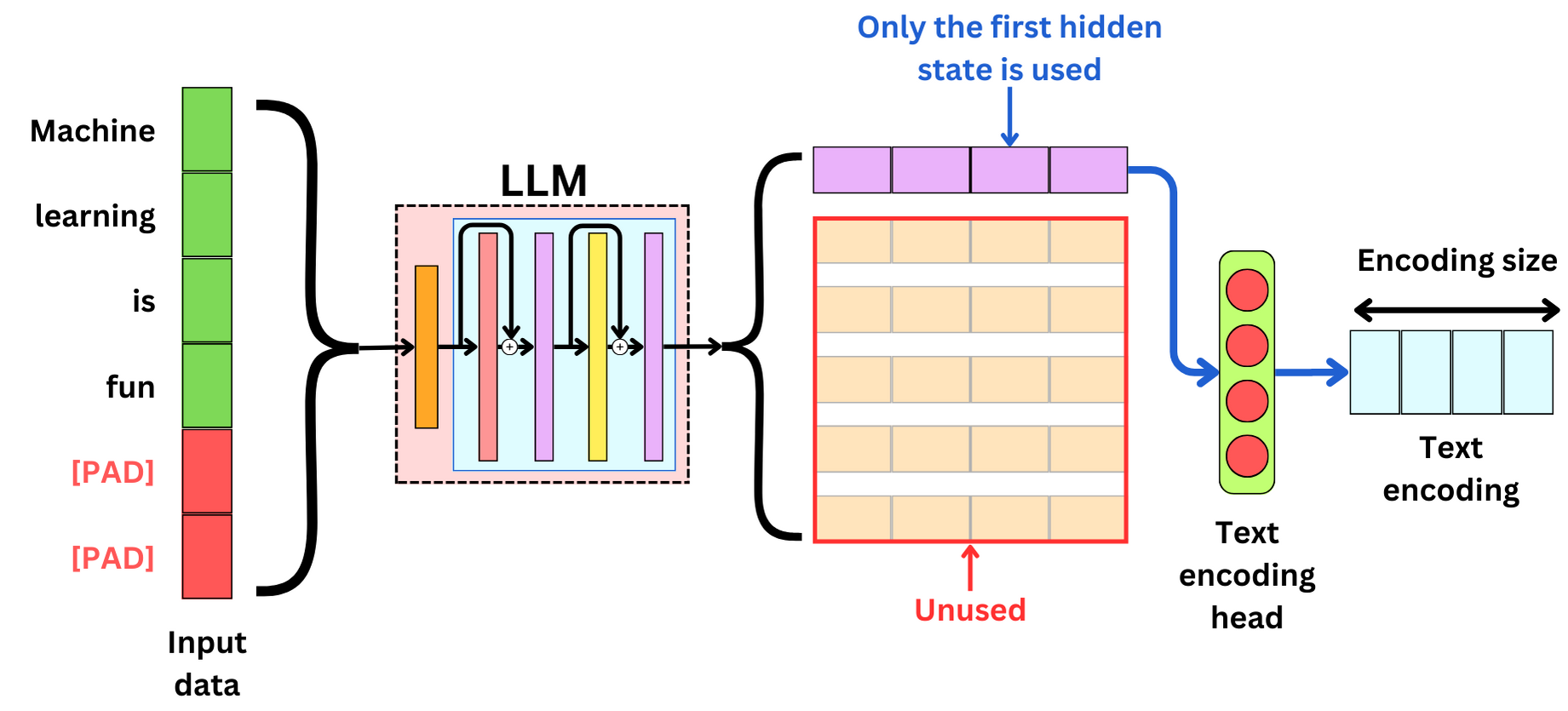

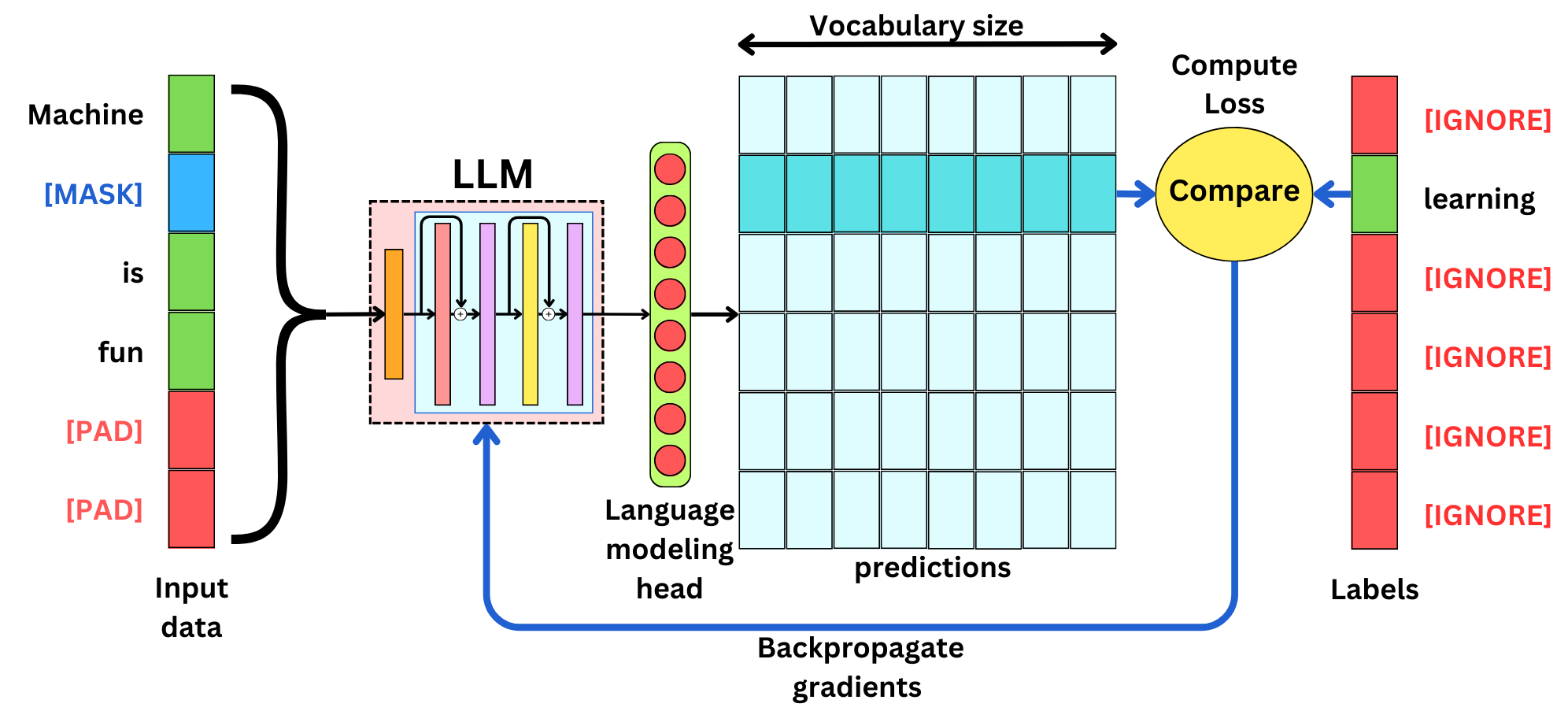

Introduction to Fine-Tuning LLMs

This report aims to be a comprehensive guide for researchers and practitioners, offering actionable insights into fine-tuning LLMs and into the challenges and opportunities of this rapidly evolving field. Fine-tuning is the process of adapting the parameters of a pre-trained LLM to a specific task or domain. Learn about the methods and how to fine-tune LLMs.
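To make the definition concrete, here is a toy sketch in which a one-feature linear model stands in for an LLM. The "pretrained" parameters, the task data, the learning rate, and the function names are all illustrative assumptions. The only point is that fine-tuning continues gradient descent from existing parameters on new, task-specific data rather than starting from scratch.

```python
# Toy illustration: fine-tuning continues gradient descent from pretrained
# parameters on task data. A linear model y = w*x + b stands in for an LLM.

def mse(w, b, data):
    """Mean squared error of y = w*x + b over (x, y) pairs."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

def fine_tune(w, b, data, lr=0.05, epochs=200):
    """Full-batch gradient descent starting from the given parameters."""
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Hypothetical "pretrained" parameters and a small task dataset (y = 2x + 1).
pretrained_w, pretrained_b = 1.0, 0.0
task_data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

loss_before = mse(pretrained_w, pretrained_b, task_data)
tuned_w, tuned_b = fine_tune(pretrained_w, pretrained_b, task_data)
loss_after = mse(tuned_w, tuned_b, task_data)

print(f"loss before: {loss_before:.3f}, loss after: {loss_after:.6f}")
```

A real LLM has billions of parameters and a cross-entropy loss over tokens, but the loop has the same shape: compute the loss on task data, compute gradients, update the parameters.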

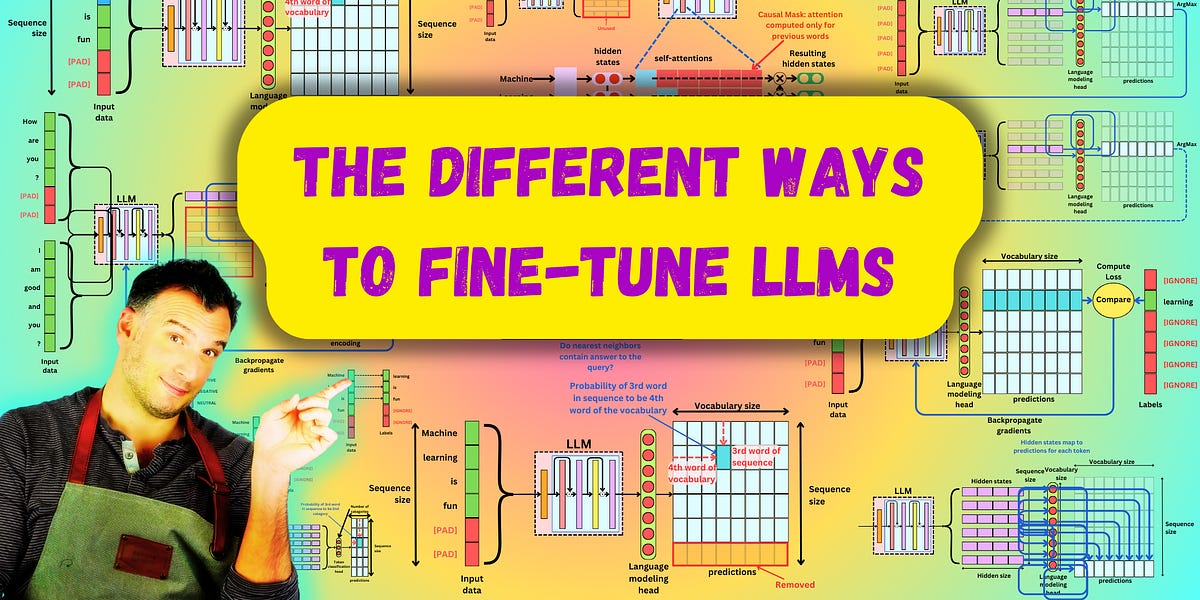

The Different Ways to Fine-Tune LLMs, by Damien Benveniste

This comprehensive guide walks through what you need to know about fine-tuning both large and small language models, from fundamental concepts to advanced techniques, and covers the complete process from defining goals to deployment. Fine-tuning improves LLM performance on tasks such as language translation, sentiment analysis, and text generation.

In the training step, use a framework such as TensorFlow or PyTorch, or a high-level library such as Transformers, to fine-tune the model. In the evaluate-and-iterate step, test the model, refine it as necessary, and retrain to improve performance.

Dataset creation is the most crucial step, and using a larger LLM to filter the training data can make a smaller model much smarter.
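The filtering idea can be sketched as follows. This is a minimal illustration, not the guide's actual pipeline: `filter_examples`, `stub_judge`, and the 0.7 threshold are hypothetical names and values, and the stub scores responses by length only so that the example runs without any model. In practice the judge would be a call to a larger LLM that rates each example.

```python
from typing import Callable, Dict, List

Example = Dict[str, str]

def filter_examples(
    examples: List[Example],
    judge: Callable[[Example], float],
    min_score: float = 0.7,
) -> List[Example]:
    """Keep only the examples whose judge score is at least min_score."""
    return [ex for ex in examples if judge(ex) >= min_score]

def stub_judge(example: Example) -> float:
    """Hypothetical stand-in for a larger LLM that rates quality in [0, 1].

    It scores by response length only; replace it with a real model call.
    """
    response = example["response"].strip()
    if not response:
        return 0.0
    return min(len(response.split()) / 10.0, 1.0)

raw_examples = [
    {
        "prompt": "Translate 'bonjour' to English.",
        "response": "The French word 'bonjour' translates to 'hello' "
                    "or 'good day' in English.",
    },
    {"prompt": "Summarize the article.", "response": ""},
    {"prompt": "Classify the sentiment.", "response": "ok"},
]

clean_examples = filter_examples(raw_examples, stub_judge)
print(f"kept {len(clean_examples)} of {len(raw_examples)} examples")
```

Passing the judge in as a function keeps the filtering logic independent of whichever model or API does the scoring.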

I share my top techniques for fine-tuning LLMs, along with best practices, a step-by-step guide, and a comparison with RAG and prompt engineering. Fine-tuning LLMs requires careful attention to several best practices, including customizing for domain-specific tasks, ensuring data compliance, and making the most of limited labeled data.

The Axolotl workflow lets us adapt a YAML configuration to our own needs instead of writing a lot of code. In addition, because the LLMs are open source, there are many more configuration choices (e.g., special tokens and LoRA rank) than closed-source fine-tuning services usually expose.

This is a hands-on guide to fine-tuning large language models, covering SFT, DPO, RLHF, and a full Python training pipeline.
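To show why the LoRA rank mentioned above matters, the sketch below counts trainable parameters for a single weight matrix. In LoRA, the original matrix of shape d_out × d_in is frozen, and two small matrices of shapes d_out × r and r × d_in are trained instead, giving r · (d_in + d_out) trainable parameters. The 4096 × 4096 layer size and the function names are illustrative assumptions, not values taken from the guide.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Parameters updated when the whole weight matrix is fine-tuned."""
    return d_in * d_out

def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters in the two low-rank LoRA factors (d_out x r and r x d_in)."""
    return rank * (d_in + d_out)

# Hypothetical layer size: one 4096 x 4096 projection matrix.
d_in, d_out = 4096, 4096
full = full_params(d_in, d_out)

for rank in (4, 8, 16, 64):
    lora = lora_trainable_params(d_in, d_out, rank)
    print(f"rank={rank:>2}: {lora:>9,} trainable ({lora / full:.2%} of full)")
```

A higher rank gives the adapter more capacity at the cost of more trainable parameters, which is why the rank is one of the settings worth tuning when it is exposed.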

