26 Contractive Autoencoders

In this article, we will learn about contractive autoencoders, which are useful for extracting features from images, and how standard autoencoders are extended to create them. We explain the idea and mathematics behind contractive autoencoders and their link with denoising autoencoders, and we present a sample Python implementation as well.

Contractive autoencoders (CAEs) develop representations that are robust to minor input variations. These models explicitly aim to make the learned feature mapping, represented by the encoder function f(x), insensitive to small changes in the input data x.

This is a personal attempt to reimplement a contractive autoencoder (with fully connected layers only) as described in the original paper by Rifai et al. To the best of our knowledge, this is the first implementation done with native TensorFlow.

This chapter surveys the different types of autoencoders that are mainly used today. It also describes various applications and use cases of autoencoders.

In this blog post, we will explore the fundamental concepts of contractive autoencoders in PyTorch and discuss their usage methods, common practices, and best practices.
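The insensitivity of f(x) to small changes in x is usually expressed as a penalty on the Jacobian of the encoder. The following is a sketch of the objective in the form used by Rifai et al.; the decoder g, the reconstruction loss L, the training set D, and the weight λ are notation assumed here, since the text above does not name them.

$$
\mathcal{J}_{\mathrm{CAE}}(\theta) = \sum_{x \in D} \Big( L\big(x,\, g(f(x))\big) + \lambda \,\lVert J_f(x) \rVert_F^2 \Big),
\qquad
\lVert J_f(x) \rVert_F^2 = \sum_{i,j} \left( \frac{\partial f_j(x)}{\partial x_i} \right)^2
$$

The first term asks for a good reconstruction, while the second shrinks the derivatives of every feature with respect to every input dimension. A larger λ trades reconstruction quality for a more contractive (less input-sensitive) encoding.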

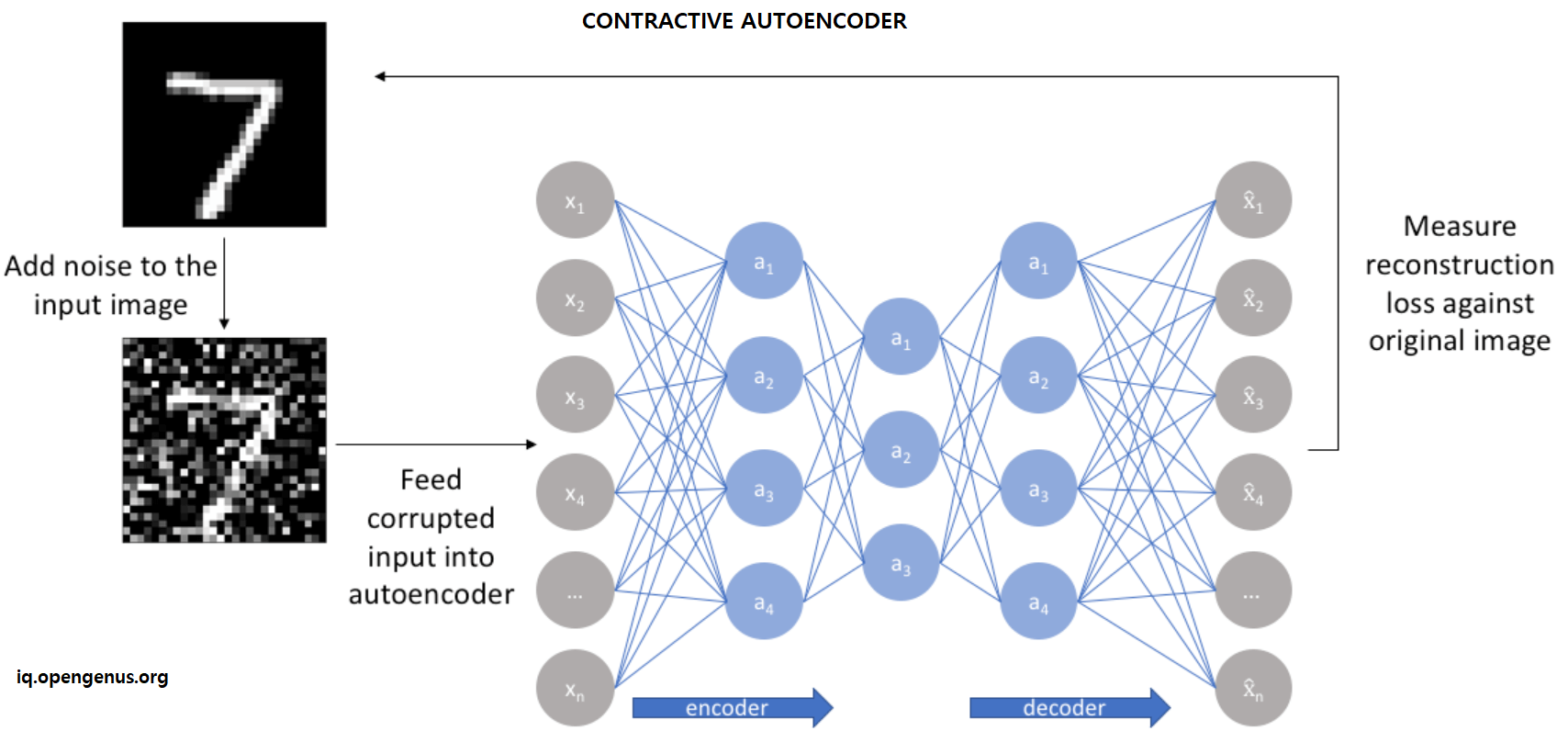

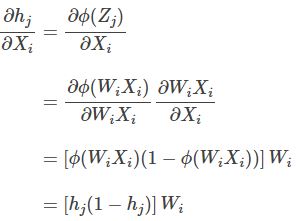

Generative models can also be viewed as autoencoders: beyond regularized autoencoders, a generative model with latent variables and an inference procedure (for computing latent representations given an input) is a particular form of autoencoder. Such models have been used to perform semantic arithmetic in the latent space z.

Autoencoders are neural networks that compress input data into a smaller representation and then reconstruct it, which helps the model learn important patterns efficiently. Contractive autoencoders (CAEs) are designed to learn stable and reliable features from input data. During training, they add a penalty to the loss so that small changes in the input do not cause large changes in the learned features.
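To make the penalty concrete, here is a minimal NumPy sketch, not the implementation from any of the sources quoted above. It assumes a single sigmoid encoder layer, a sigmoid decoder, and a squared-error reconstruction loss; the function names, layer sizes, and the weight `lam` are all hypothetical. For a sigmoid encoder the squared Frobenius norm of the Jacobian has a closed form, which the sketch compares against a finite-difference estimate.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def encode(x, W, b):
    """Sigmoid encoder h = f(x) = sigmoid(W x + b) (assumed architecture)."""
    return sigmoid(W @ x + b)


def contractive_penalty(x, W, b):
    """Squared Frobenius norm of the encoder Jacobian dh/dx at x.

    For a sigmoid encoder, J[j, i] = h_j * (1 - h_j) * W[j, i], so
    ||J||_F^2 = sum_j (h_j * (1 - h_j))^2 * sum_i W[j, i]^2.
    """
    h = encode(x, W, b)
    return float(np.sum((h * (1.0 - h)) ** 2 * np.sum(W ** 2, axis=1)))


def cae_loss(x, W, b, W_dec, b_dec, lam=0.1):
    """Squared-error reconstruction loss plus lam times the penalty."""
    h = encode(x, W, b)
    x_hat = sigmoid(W_dec @ h + b_dec)
    reconstruction = float(np.sum((x - x_hat) ** 2))
    return reconstruction + lam * contractive_penalty(x, W, b)


def numerical_jacobian(x, W, b, eps=1e-6):
    """Central finite-difference estimate of dh/dx, used only as a check."""
    J = np.zeros((W.shape[0], x.size))
    for i in range(x.size):
        step = np.zeros_like(x)
        step[i] = eps
        J[:, i] = (encode(x + step, W, b) - encode(x - step, W, b)) / (2 * eps)
    return J


# Toy data: 8 input dimensions, 3 hidden features (arbitrary sizes).
rng = np.random.default_rng(0)
x = rng.random(8)
W = rng.normal(scale=0.5, size=(3, 8))
b = np.zeros(3)
W_dec = rng.normal(scale=0.5, size=(8, 3))
b_dec = np.zeros(8)

penalty = contractive_penalty(x, W, b)
penalty_fd = float(np.sum(numerical_jacobian(x, W, b) ** 2))
loss = cae_loss(x, W, b, W_dec, b_dec, lam=0.1)

print("closed-form penalty:      ", penalty)
print("finite-difference penalty:", penalty_fd)
print("total CAE loss:           ", loss)
```

The two penalty values should agree closely, which is a quick way to check the closed form. In a framework such as PyTorch or TensorFlow the same quantity would typically be added to the training loss and differentiated automatically; for deeper encoders the closed form no longer applies and the Jacobian has to be computed or approximated another way.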
