19 Perceptron

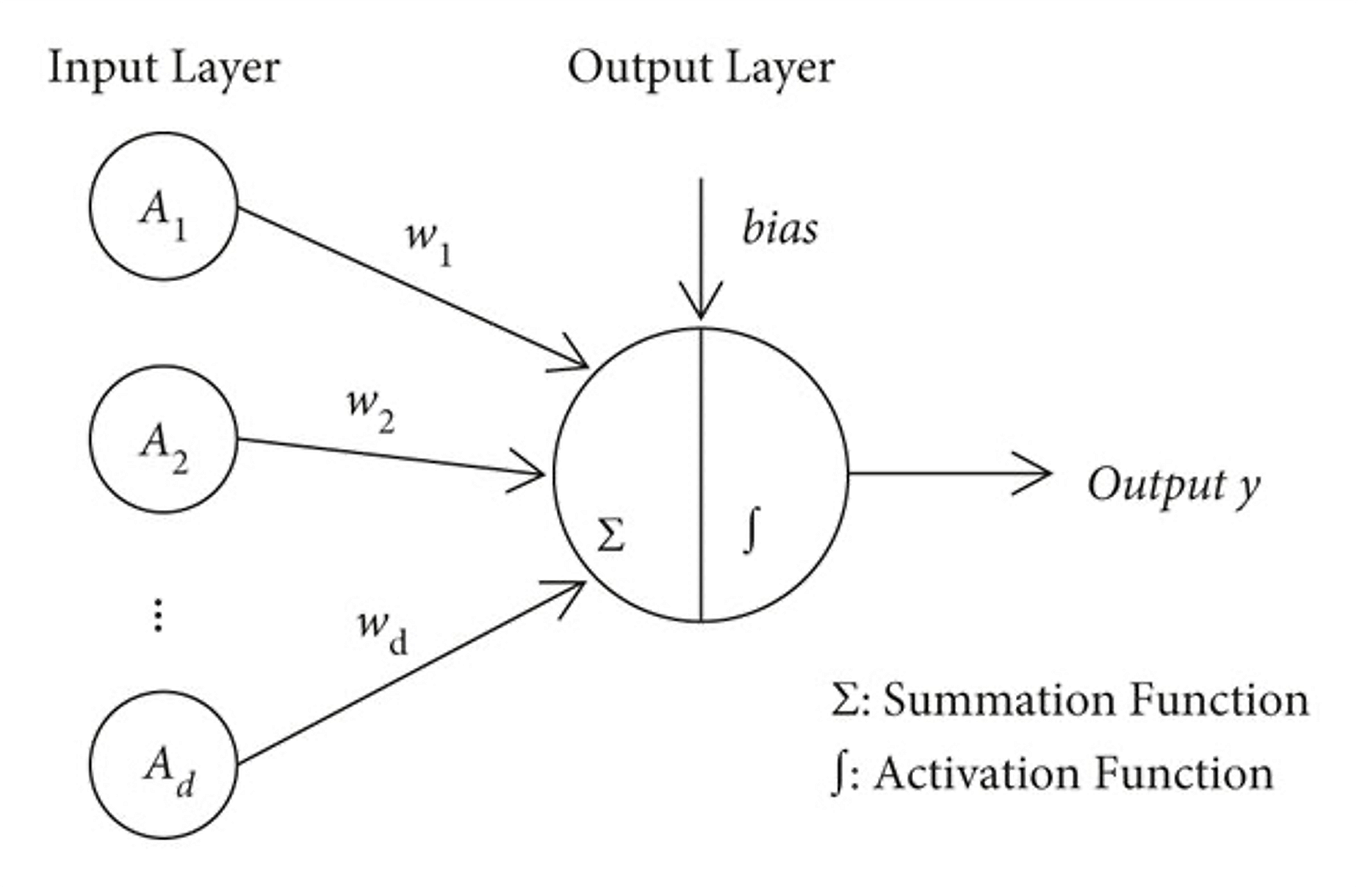

Perceptron 19 by Mumbaimafia on DeviantArt

A perceptron is the simplest form of a neural network: it makes a decision by combining its inputs with weights and applying an activation function. It is mainly used for binary classification problems. The perceptron is the first step into neural networks: perceptrons are often used as the building blocks for more complex networks, such as multi-layer perceptrons (MLPs) or deep neural networks (DNNs).
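To make "combining inputs with weights and applying an activation function" concrete, here is a minimal sketch in plain Python. The function name `perceptron_predict`, the step activation with a threshold at zero, and the AND-gate weights are illustrative assumptions, not taken from the text above.

```python
def perceptron_predict(x, w, b):
    """Weighted sum of the inputs plus a bias, passed through a step activation."""
    weighted_sum = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1 if weighted_sum > 0 else 0

# Hypothetical weights and bias chosen so the unit behaves like a logical AND.
w = [1.0, 1.0]
b = -1.5

for x in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(x, perceptron_predict(x, w, b))
```

Only the input `(1, 1)` pushes the weighted sum above zero, so it is the only one assigned to class 1. This is the binary classification behaviour described above.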

Understanding The Perceptron Algorithm Linear Separability And …

For the perceptron, the number of linearly realizable dichotomies on a set of points depends only on a mild condition known as general position.

Understanding the Perceptron: The Simplest Neural Network

If you want to understand modern artificial intelligence, you need to start with one simple idea: the perceptron.

The perceptron was arguably the first algorithm with a strong formal guarantee: if a data set is linearly separable, the perceptron will find a separating hyperplane in a finite number of updates.

A perceptron is defined as a type of artificial neural network that consists of a single layer with no hidden layers. It receives input data, applies weights and a bias, and produces an output through an activation function that introduces nonlinearity.
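The statement about linearly realizable dichotomies can be illustrated with a small calculation. The sketch below assumes the standard counting result usually attributed to Cover (1965), which the text above does not spell out: for N points in general position and separators with d free parameters, the number of realizable dichotomies is 2 · Σ_{k=0}^{d-1} C(N-1, k). The function name `count_linear_dichotomies` is hypothetical.

```python
from math import comb

def count_linear_dichotomies(n_points, n_params):
    """Cover's count (assumed here): 2 * sum_{k=0}^{n_params-1} C(n_points-1, k)."""
    return 2 * sum(comb(n_points - 1, k) for k in range(n_params))

# Four points in the plane with no three collinear (general position).
# A line with a bias term has 2 weights + 1 bias = 3 free parameters.
total = 2 ** 4
realizable = count_linear_dichotomies(4, 3)
print(realizable, "of", total, "labelings are linearly separable")
```

Under that assumption the count depends only on the number of points and parameters, not on where the points sit, as long as they are in general position. For four points in the plane, the two labelings that are not realizable are the XOR-style ones.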

Frank Rosenblatt's Perceptron Birth Of The Neural Network By Robert …

In one case, a perceptron was trained for military purposes to distinguish between two pictures of a forest scene: one with camouflaged tanks in it and one without.

To make this argument more formal, consider $\hat{\beta}_{\text{dummy}}$, the output of a perceptron on $D$, with the tweak that when the random index is $n+1$, we skip to the next iteration without checking the test observation $(x, y)$.

Perceptrons are the very simplest kind of neural network, and the only kind of neural network that we understand well. The niceness of perceptrons and other linear models, like logistic regression and support vector machines, drove much machine learning research in the 1990s and 2000s.

We can make a simplified version of the perceptron algorithm if we restrict ourselves to separators through the origin. We list it here because this is the version of the algorithm we'll study in more detail.
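The source's own listing of the through-origin algorithm did not survive, so the following is only a sketch of the textbook mistake-driven update, not a reconstruction of that listing. It assumes labels in {-1, +1}; the function name `perceptron_through_origin`, the `max_passes` cap, and the toy data are all hypothetical.

```python
def perceptron_through_origin(data, max_passes=100):
    """Train a separator through the origin; returns (theta, number_of_updates).

    data: list of (x, y) pairs, x a tuple of floats, y in {-1, +1}.
    max_passes is an assumed safety cap for data that is not separable.
    """
    theta = [0.0] * len(data[0][0])
    updates = 0
    for _ in range(max_passes):
        mistakes = 0
        for x, y in data:
            score = sum(t * xi for t, xi in zip(theta, x))
            if y * score <= 0:  # misclassified, or exactly on the boundary
                theta = [t + y * xi for t, xi in zip(theta, x)]
                updates += 1
                mistakes += 1
        if mistakes == 0:  # a full pass with no mistakes: data is separated
            break
    return theta, updates

# Toy data that is linearly separable by a line through the origin.
data = [((2.0, 1.0), 1), ((1.0, 3.0), 1), ((-1.0, -2.0), -1), ((-2.0, -0.5), -1)]
theta, updates = perceptron_through_origin(data)
print("theta:", theta, "updates:", updates)
```

Dropping the bias term means the only parameter is the weight vector `theta`, which keeps the update rule to a single line. On separable data such as this toy set, the loop stops once a full pass produces no mistakes.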
