Why Are Transformers Replacing CNNs

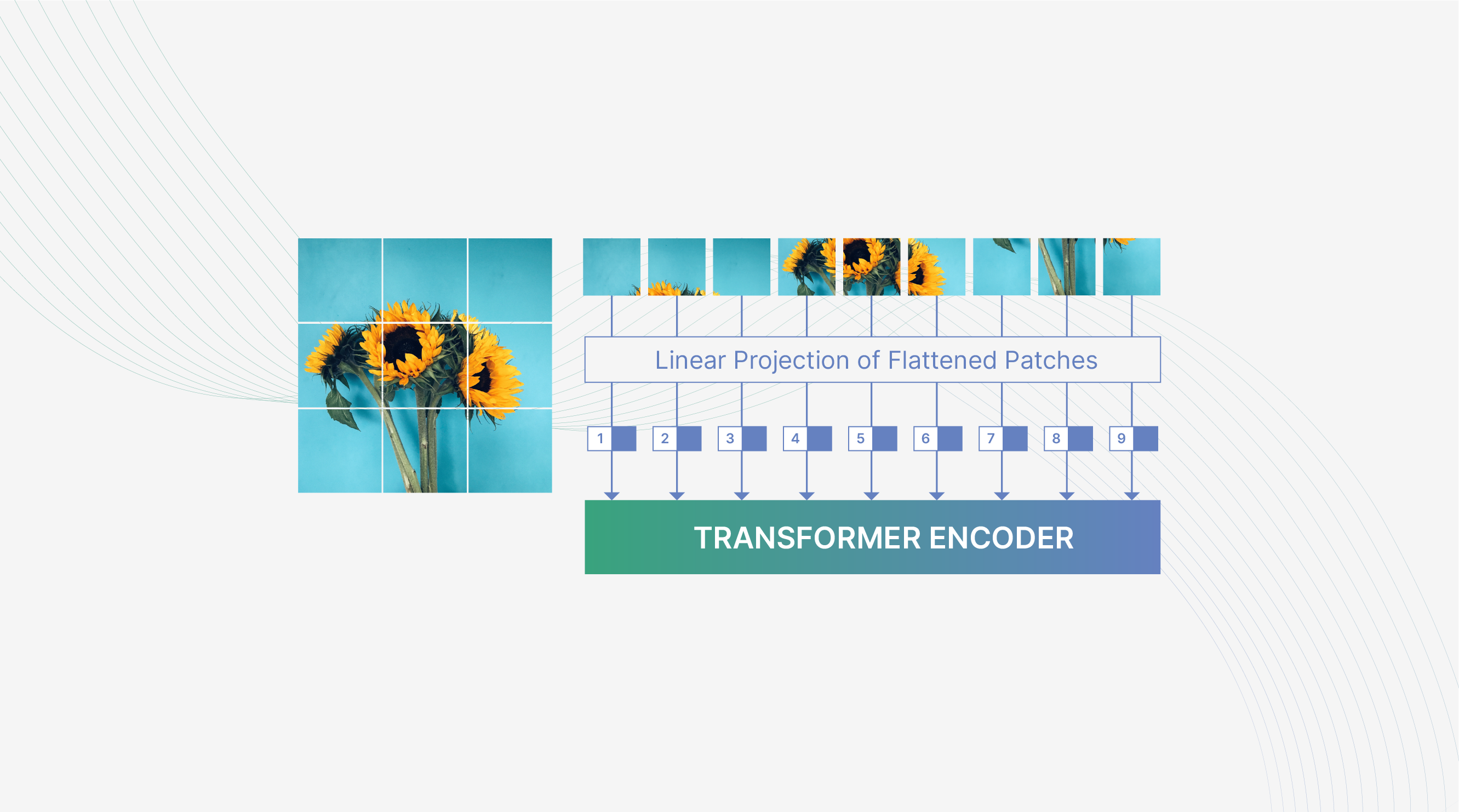

Are transformers replacing CNNs in object detection? In this video we break down one of the biggest shifts in computer vision: why transformers replaced convolutional neural networks (CNNs), even though CNNs were designed for images and … Vision transformers (ViTs) were introduced in 2020 as an alternative to CNNs for image classification tasks. Inspired by the success of transformers in natural language processing, ViTs apply the transformer architecture to image data.
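
To make "applying the transformer architecture to image data" a little more concrete, here is a minimal sketch of the first step a ViT-style model typically takes: cutting the image into non-overlapping patches and flattening each one into a token. The function name `image_to_patches`, the 4x4 toy image, and the patch size of 2 are assumptions chosen for illustration. A real model would also project each patch linearly and add position information, which this sketch leaves out.

```python
def image_to_patches(image, patch_size):
    """Split a 2-D grid (list of rows) into flattened, non-overlapping patches.

    Hypothetical helper for illustration; not taken from any library.
    """
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image sides must be divisible by patch_size")

    patches = []
    # Walk the image in patch-sized steps, row-major order.
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            # Flatten one patch_size x patch_size block into a single token.
            patch = [
                image[top + dy][left + dx]
                for dy in range(patch_size)
                for dx in range(patch_size)
            ]
            patches.append(patch)
    return patches


# Toy single-channel 4x4 "image" whose pixel values are 0..15.
image = [[row * 4 + col for col in range(4)] for row in range(4)]
patches = image_to_patches(image, 2)
```

Each entry of `patches` then plays the role that a word embedding plays in NLP: the transformer sees a sequence of tokens, not a grid.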

Before transformers, RNNs (recurrent neural networks) and CNNs (convolutional neural networks) ruled the world of AI. In my earlier blogs, I've already broken down RNNs, CNNs, and transformers. Recent advancements in computer vision have highlighted the emergence of vision transformers (ViTs) as a promising alternative to CNNs for scene interpretation, driven by their ability to model long-range dependencies through self-attention mechanisms. Is it now possible to replace CNNs entirely with an attention-based model like the transformer? Transformers have already replaced LSTMs in the NLP domain. Transformers have emerged as a groundbreaking advancement in deep learning, addressing the inherent limitations of traditional architectures like RNNs and CNNs.
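
The claim about long-range dependencies can be illustrated with a small sketch of scaled dot-product self-attention. This is a simplified version under stated assumptions: a single head, no learned query/key/value projections (the tokens are used directly), and a made-up four-token input. The names `softmax` and `self_attention` are illustrative helpers, not a library API.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Single-head scaled dot-product self-attention.

    Assumption: queries, keys and values are the tokens themselves
    (identity projections), which keeps the sketch short.
    """
    dim = len(tokens[0])
    scale = math.sqrt(dim)

    weights = []
    for query in tokens:
        # Every query is scored against every token, near or far.
        scores = [
            sum(q * k for q, k in zip(query, key)) / scale for key in tokens
        ]
        weights.append(softmax(scores))

    # Each output is a weighted average of all value vectors.
    outputs = [
        [sum(w * value[i] for w, value in zip(row, tokens)) for i in range(dim)]
        for row in weights
    ]
    return outputs, weights


# Toy sequence: the first and last tokens are identical but far apart.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [1.0, 0.0]]
outputs, weights = self_attention(tokens)
```

In this sketch the first token attends to the last token exactly as strongly as it attends to itself, because the score depends only on content, not on distance. A convolution with a small kernel would need several stacked layers before those two positions could interact.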

Transformers are replacing CNNs in image classification because their self-attention mechanism captures global context and complex interactions more effectively than CNNs' local convolutional filters, leading to better accuracy and flexibility. The move from RNNs and CNNs to transformers marks a significant shift in AI modeling. Through self-attention, transformers can weigh the relevance of every part of a sequence concurrently, which leads to better context understanding and efficiency than RNNs.

Transformers have achieved higher metrics in many vision tasks, reaching state-of-the-art (SOTA) results. However, transformers need more training data to match or surpass CNNs, and they may need more GPU resources to train. Specifically, we found that the batch normalization commonly adopted in CNNs leads to a significantly less compositional network than the layer normalization commonly used in transformers. The receptive field size of the model also impacts compositionality, to a lesser extent.
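
For readers unfamiliar with the two normalization schemes mentioned above, the following sketch shows only the mechanical difference between them: batch normalization computes statistics per feature across the samples in a batch, while layer normalization computes them per sample across its features. It does not reproduce the compositionality finding. The helper names, the `eps` value, and the toy batch are assumptions, and the learnable scale and shift parameters and running statistics used in practice are omitted.

```python
import math


def _normalize(values, eps=1e-5):
    """Shift to zero mean and scale to (roughly) unit variance."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return [(v - mean) / math.sqrt(var + eps) for v in values]


def layer_norm(batch):
    """Normalize each sample over its own features (rows)."""
    return [_normalize(sample) for sample in batch]


def batch_norm(batch):
    """Normalize each feature over the samples in the batch (columns)."""
    columns = [_normalize(list(column)) for column in zip(*batch)]
    # Transpose back to the original samples-by-features layout.
    return [list(row) for row in zip(*columns)]


# Toy batch: 2 samples with 3 features each, on very different scales.
batch = [[1.0, 2.0, 3.0], [10.0, 20.0, 60.0]]
ln = layer_norm(batch)
bn = batch_norm(batch)
```

One consequence visible even in this toy case: the layer-normalized output for a sample is the same no matter what else is in the batch, whereas the batch-normalized output changes when the other samples change.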
