Why AI Struggles: The Missing Context in Problem Solving

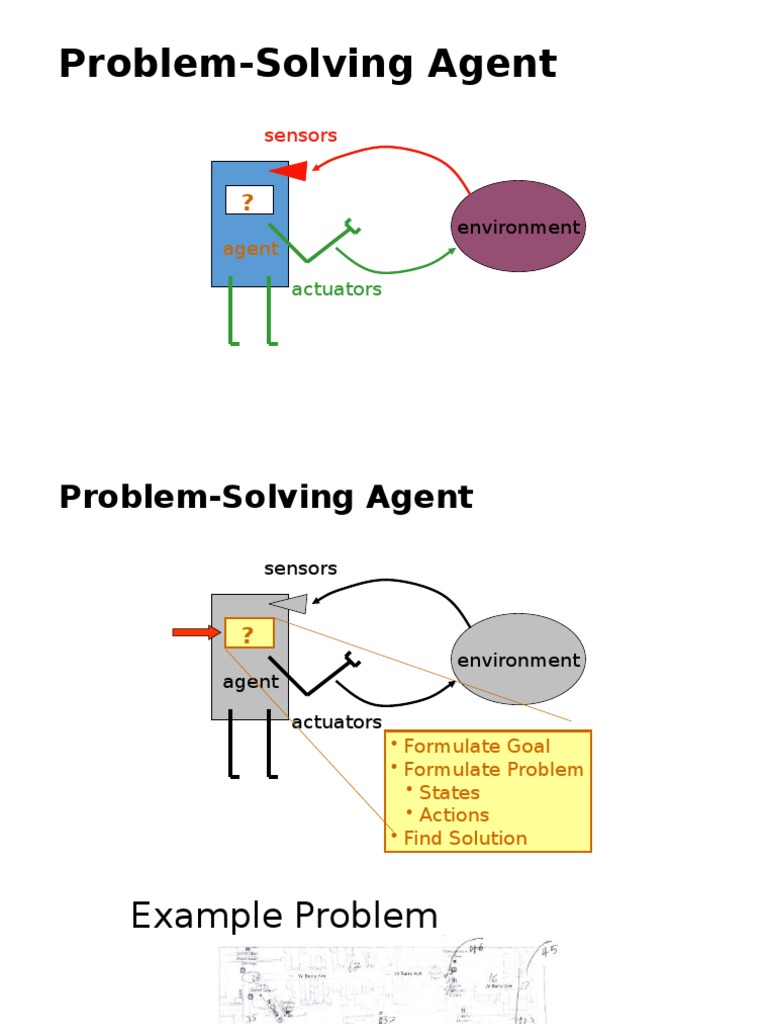

Context drift is the gradual loss of coherence, accuracy, or alignment with the original request as an AI conversation or task progresses. It happens when an AI starts strong but, over time, drifts away from what was originally asked. After analyzing 1,565 peer-reviewed papers from OpenAI, Google, Microsoft, and Stanford, researchers reported a counterintuitive finding: giving agents more context can make them perform worse.
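
The article does not prescribe a fix for drift. What follows is only a minimal sketch of one common mitigation, assuming a chat-style history. It pins the original request at the top of every call and keeps only as many recent turns as a fixed budget allows, so the context neither loses the request nor grows without bound. The `Turn` type, the `build_context` helper, and the word-count stand-in for a real tokenizer are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    role: str  # "user" or "assistant"
    text: str


def approx_tokens(text: str) -> int:
    # Hypothetical cost model: whitespace-separated words stand in for real tokens.
    return len(text.split())


def build_context(original_request: str, history: list[Turn], budget: int) -> list[Turn]:
    """Pin the original request, then keep the newest turns that still fit the budget."""
    pinned = Turn("user", original_request)
    remaining = budget - approx_tokens(pinned.text)
    if remaining < 0:
        raise ValueError("budget is too small to hold the original request")

    kept: list[Turn] = []
    # Walk from newest to oldest and stop at the first turn that no longer fits,
    # so the kept window is always a contiguous run of the most recent turns.
    for turn in reversed(history):
        cost = approx_tokens(turn.text)
        if cost > remaining:
            break
        kept.append(turn)
        remaining -= cost

    kept.reverse()  # restore chronological order
    return [pinned] + kept


if __name__ == "__main__":
    history = [
        Turn("user", "a b c d"),
        Turn("assistant", "e f"),
        Turn("user", "g h i"),
    ]
    for turn in build_context("write a haiku", history, budget=8):
        print(turn.role, "|", turn.text)
```

With a budget of 8 "tokens", the oldest turn is dropped while the original request stays pinned at the top. Capping the budget also reflects the finding above that more context is not automatically better.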

Most AI agents fail because they lack the right context at the right time, and solving that context problem improves both agent accuracy and trust. The context gap isn’t just an internal production failure; it’s a competitive disadvantage. Organizations that structure their data around relationships can reduce risk, improve decision-making, offer more personalized service, and increase revenue by delivering effective, explainable AI. Work from Google and MIT highlights AI’s hidden struggle with complex sequential tasks, where context breaks down across multi-step challenges. AI tools don’t “know” your situation; they respond only to what you give them. Many weak outputs result from unclear or missing context, not from poor technology, so you can dramatically improve results by giving your AI tool a simple background brief.
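
A background brief of the kind recommended above can be as simple as a few labeled lines placed ahead of the request. Below is a minimal sketch, assuming a plain-text prompt. The `BackgroundBrief` fields (goal, audience, constraints, known facts) and the `build_prompt` helper are hypothetical choices, not a format the article prescribes.

```python
from dataclasses import dataclass, field


@dataclass
class BackgroundBrief:
    # Hypothetical fields: the parts of your situation a model cannot infer on its own.
    goal: str
    audience: str
    constraints: list[str] = field(default_factory=list)
    known_facts: list[str] = field(default_factory=list)

    def render(self) -> str:
        lines = [f"Goal: {self.goal}", f"Audience: {self.audience}"]
        if self.constraints:
            lines.append("Constraints:")
            lines.extend(f"- {item}" for item in self.constraints)
        if self.known_facts:
            lines.append("Background facts:")
            lines.extend(f"- {fact}" for fact in self.known_facts)
        return "\n".join(lines)


def build_prompt(brief: BackgroundBrief, task: str) -> str:
    # Put the brief before the task so the situation is stated before the request.
    return f"{brief.render()}\n\nTask: {task}"


if __name__ == "__main__":
    brief = BackgroundBrief(
        goal="Reduce customer churn",
        audience="Support team leads",
        constraints=["Under 200 words", "Friendly tone"],
    )
    print(build_prompt(brief, "Draft an email announcing the new refund policy"))
```

Empty sections are omitted, so a brief with only a goal and an audience still produces a clean two-line preamble.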

AI is limited today because it cannot truly understand or maintain context the way humans do. As context windows expand, tools such as the Model Context Protocol (MCP), AI agents, and integrated systems are expected to let AI act more intelligently, narrowing the gap toward AGI.

Large language models (LLMs) struggle to recall information from the middle of long contexts. This "lost in the middle" problem produces a U-shaped performance curve: accuracy is highest when the relevant information sits at the beginning or end of the input and drops when it is buried in the middle. Context-engineering techniques such as retrieval-augmented generation (RAG) and strategic placement of key information can mitigate it.

Multi-agent systems face a related problem. When every agent reads everything, each one inherits everyone else’s mistakes and pays the same context bill over and over again. If only some context is shared, a new problem appears: what is considered authoritative when agents disagree, what remains local, and how are conflicts reconciled?

Think of AI as a GPS that doesn’t understand road conditions: it tells you the fastest route, but it can’t see the construction ahead. That is the problem with AI that lacks contextual intelligence.
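
The "lost in the middle" discussion names RAG and strategic placement as remedies. What follows is a minimal sketch of both, under loud assumptions. Retrieval here scores chunks by plain keyword overlap, a crude stand-in for the embedding search a real RAG system would use. Placement follows the U-shaped curve by putting the highest-ranked chunks at the two ends of the prompt and the weakest in the middle. The functions `retrieve`, `reorder_for_edges`, and `build_window` are hypothetical.

```python
import re
from typing import TypeVar

T = TypeVar("T")


def tokenize(text: str) -> set[str]:
    # Lower-cased alphanumeric words; a crude stand-in for embeddings.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, chunks: list[str], k: int) -> list[str]:
    """Return the k chunks sharing the most words with the query, best first."""
    query_terms = tokenize(query)
    # sorted() is stable even with reverse=True, so ties keep their original order.
    ranked = sorted(chunks, key=lambda c: len(query_terms & tokenize(c)), reverse=True)
    return ranked[:k]


def reorder_for_edges(ranked: list[T]) -> list[T]:
    """Place the strongest items at the start and end, the weakest in the middle.

    Input is best-first; ranks alternate between the front and the back,
    so ranks [1, 2, 3, 4, 5] become [1, 3, 5, 4, 2].
    """
    front = ranked[0::2]  # ranks 1, 3, 5, ...
    back = ranked[1::2]   # ranks 2, 4, ...
    return front + back[::-1]


def build_window(query: str, chunks: list[str], k: int) -> str:
    # Retrieve, reorder for the U-shaped curve, then append the question last.
    selected = reorder_for_edges(retrieve(query, chunks, k))
    return "\n\n".join(selected) + f"\n\nQuestion: {query}"


if __name__ == "__main__":
    docs = [
        "cats purr",
        "dogs bark loudly",
        "context windows in llms",
        "llms forget middle context",
        "weather today",
    ]
    print(build_window("why do llms lose context", docs, k=3))
```

Retrieval limits how much text enters the window, and the reordering only decides where the selected chunks sit. Keeping the two concerns in separate functions makes it easy to swap in a real retriever without touching the placement logic.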
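
The multi-agent questions raised above (what is authoritative, what stays local, how conflicts are reconciled) have no single answer. Below is a minimal sketch of one possible policy, assuming a fixed authority ranking of agent roles and a logical clock. Agents keep private notes locally and publish only selected facts to a shared store. The store resolves conflicts by authority first and recency second. The role names, the `AUTHORITY` table, and the `Claim`, `SharedContext`, and `Agent` classes are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical authority ranking: a higher number wins a conflict.
AUTHORITY = {"human": 3, "verifier": 2, "worker": 1}


@dataclass
class Claim:
    key: str
    value: str
    source: str     # role of the agent that wrote the claim
    timestamp: int  # logical clock; larger means newer


class SharedContext:
    """The only context every agent reads; anything not published stays local."""

    def __init__(self) -> None:
        self._facts: dict[str, Claim] = {}

    @staticmethod
    def _rank(claim: Claim) -> tuple[int, int]:
        # Authority decides first; recency only breaks ties between equal authorities.
        return (AUTHORITY.get(claim.source, 0), claim.timestamp)

    def publish(self, claim: Claim) -> bool:
        """Accept the claim only if it strictly outranks the current value for its key."""
        current = self._facts.get(claim.key)
        if current is None or self._rank(claim) > self._rank(current):
            self._facts[claim.key] = claim
            return True
        return False

    def read(self, key: str) -> str | None:
        claim = self._facts.get(key)
        return claim.value if claim is not None else None


class Agent:
    def __init__(self, role: str, shared: SharedContext) -> None:
        self.role = role
        self.shared = shared
        self.notes: dict[str, str] = {}  # local scratch space, never shared

    def note(self, key: str, value: str) -> None:
        self.notes[key] = value

    def publish(self, key: str, value: str, timestamp: int) -> bool:
        return self.shared.publish(Claim(key, value, self.role, timestamp))


if __name__ == "__main__":
    shared = SharedContext()
    worker, verifier = Agent("worker", shared), Agent("verifier", shared)
    worker.note("draft", "half-finished reasoning")  # stays local
    worker.publish("deadline", "Friday", timestamp=1)
    verifier.publish("deadline", "Thursday", timestamp=2)
    worker.publish("deadline", "Monday", timestamp=3)  # rejected: lower authority
    print(shared.read("deadline"), shared.read("draft"))
```

Ranking authority before recency means a newer claim from a lower-authority agent cannot overwrite a verified value. Because ties require a strict win, the first writer keeps the key when two claims rank equally. Reversing either choice is equally defensible, which is exactly the design question the paragraph raises.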
