What Is Tokenization Total Processing

Tokenization is the process of creating a digital representation of a real thing. It can also be used to protect sensitive data or to process large amounts of data efficiently.

To explore tokenization further, you can use our interactive tokenizer tool, which calculates the number of tokens and shows how text is broken into tokens. Alternatively, if you'd like to tokenize text programmatically, use tiktoken, a fast BPE (byte pair encoding) tokenizer built for OpenAI models.
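To make the idea behind a BPE tokenizer concrete, here is a minimal toy sketch in plain Python. It is not tiktoken and does not reproduce any OpenAI vocabulary: the function names `train_bpe`, `apply_merge`, and `encode` are hypothetical, it starts from characters rather than bytes, and it splits only on whitespace. It shows the core loop, which is to repeatedly merge the most frequent adjacent pair of symbols.

```python
from collections import Counter


def apply_merge(symbols, pair):
    """Replace every adjacent occurrence of `pair` in `symbols` with one merged symbol."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return tuple(out)


def train_bpe(text, num_merges):
    """Learn up to `num_merges` merge rules from whitespace-separated words (toy BPE)."""
    # Each word starts as a tuple of single characters, weighted by its frequency.
    words = Counter(tuple(w) for w in text.split())
    merges = []
    for _ in range(num_merges):
        # Count how often each adjacent symbol pair occurs across the corpus.
        pairs = Counter()
        for symbols, freq in words.items():
            for a, b in zip(symbols, symbols[1:]):
                pairs[(a, b)] += freq
        if not pairs:
            break
        # Pick the most frequent pair; ties are broken by the pair itself
        # so the result is deterministic.
        best = max(pairs, key=lambda p: (pairs[p], p))
        merges.append(best)
        merged_words = Counter()
        for symbols, freq in words.items():
            merged_words[apply_merge(symbols, best)] += freq
        words = merged_words
    return merges


def encode(word, merges):
    """Tokenize one word by applying the learned merges in order."""
    symbols = tuple(word)
    for pair in merges:
        symbols = apply_merge(symbols, pair)
    return list(symbols)


merges = train_bpe("low low low lower lowest", num_merges=2)
tokens = encode("lowest", merges)
token_count = len(tokens)
```

Here `token_count` plays the role of the token count that a tokenizer tool would report, and joining `tokens` back together recovers the original word.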

Tokenization is a critical step in natural language processing, serving as the foundation for many text analysis and machine learning tasks. By breaking down text into manageable units, tokenization simplifies the processing of textual data, enabling more effective and accurate NLP applications.

More generally, tokenization is the algorithmic process of breaking down a stream of raw data, such as text, images, or audio, into smaller, manageable units called tokens. Whether a transformer AI model is processing text, images, audio clips, videos or another modality, it will translate the data into tokens. This process is known as tokenization. Efficient tokenization helps reduce the… By efficiently processing tokens, AI factories are manufacturing intelligence, the most valuable asset in the new industrial revolution powered by AI.

Tokenization serves as the backbone for a myriad of applications in the digital realm, enabling machines to process and understand vast amounts of text data. By breaking down text into manageable chunks, it facilitates more efficient and accurate data analysis.
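As a small illustration of breaking text into manageable units, the sketch below splits a string at the word level and at the character level using only the Python standard library. The function names `word_tokens` and `char_tokens` are made up for this example, and the regular expression is one simple choice among many; production tokenizers typically handle contractions, Unicode, and subwords more carefully.

```python
import re


def word_tokens(text):
    """Split text into word tokens and single punctuation tokens."""
    # \w+ matches runs of letters, digits, or underscores;
    # [^\w\s] matches one character that is neither a word character nor whitespace.
    return re.findall(r"\w+|[^\w\s]", text)


def char_tokens(text):
    """Split text into one token per character, whitespace included."""
    return list(text)


sentence = "Tokens, please!"
words = word_tokens(sentence)
chars = char_tokens(sentence)
```

Word-level tokens keep sequences short but need a large vocabulary, while character-level tokens need only a tiny vocabulary but produce much longer sequences.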

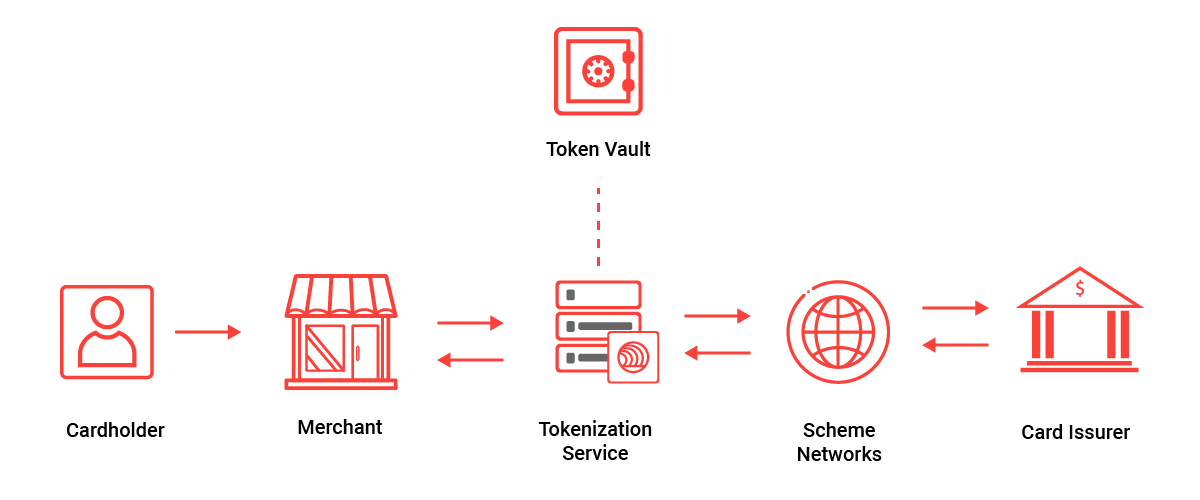

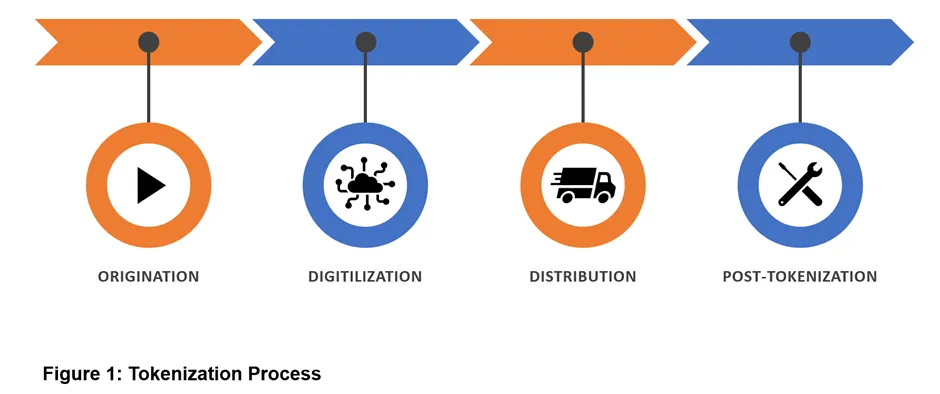

Tokenization is a foundational step in natural language processing (NLP). It breaks text down into smaller units called tokens, usually words, subwords, or characters, so that AI models can understand and generate language. When text is split into individual words, algorithms can process and analyze language on a word-by-word basis.

Tokenization is also often used in credit card processing. The PCI Council defines tokenization as "a process by which the primary account number (PAN) is replaced with a surrogate value called a token." A PAN may be linked to a reference number through the tokenization process.
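The PCI definition quoted above, in which a PAN is replaced with a surrogate value, can be sketched as a simple lookup table. This is an illustrative in-memory toy and not a PCI-compliant design: the class name `TokenVault` and its methods are hypothetical, the card number is a commonly used test value, and a real system would keep the mapping in a hardened, access-controlled store.

```python
import secrets


class TokenVault:
    """Toy vault that maps PANs to random surrogate tokens and back."""

    def __init__(self):
        self._token_to_pan = {}
        self._pan_to_token = {}

    def tokenize(self, pan):
        """Return the token for `pan`, creating a new random one if needed."""
        if pan in self._pan_to_token:
            return self._pan_to_token[pan]
        # The token is random, so it carries no information about the PAN.
        token = secrets.token_hex(8)
        while token in self._token_to_pan:  # extremely unlikely collision
            token = secrets.token_hex(8)
        self._token_to_pan[token] = pan
        self._pan_to_token[pan] = token
        return token

    def detokenize(self, token):
        """Look up the original PAN; raises KeyError for an unknown token."""
        return self._token_to_pan[token]


vault = TokenVault()
pan = "4111111111111111"  # well-known test card number, not a real account
token = vault.tokenize(pan)
```

Downstream systems can store and pass around `token` in place of the PAN, and only the vault can map it back.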

