What Is SWE-bench

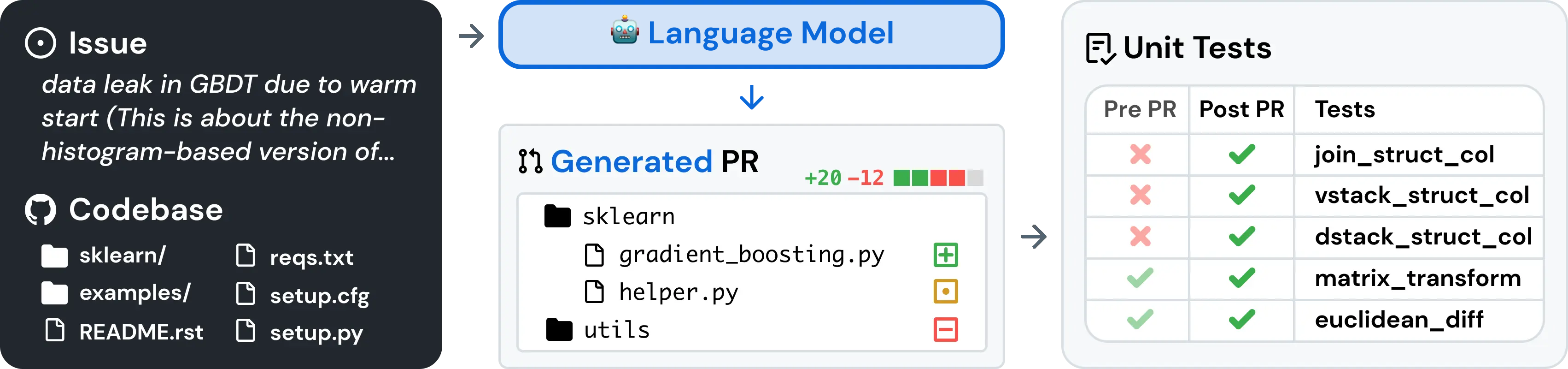

SWE-bench is a benchmark for evaluating large language models on real-world software issues collected from GitHub. Given a codebase and an issue, a language model is tasked with generating a patch that resolves the described problem.

What is the SWE-bench Verified leaderboard? The SWE-bench Verified leaderboard ranks 83 AI models based on their performance on this benchmark. Currently, Claude Mythos Preview by Anthropic leads with a score of 0.939. The average score across all models is 0.634.
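The scoring idea above can be illustrated with a minimal sketch. It assumes a model's score is the fraction of task instances whose generated patch resolves the issue, and that a leaderboard simply sorts models by that score. The names `EvalResult`, `resolve_rate`, and `rank_models` are hypothetical and are not part of any SWE-bench tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalResult:
    """Hypothetical outcome of evaluating one generated patch."""
    instance_id: str   # illustrative identifier for the task instance
    resolved: bool     # True if the patch resolved the described problem


def resolve_rate(results: list[EvalResult]) -> float:
    """Fraction of task instances resolved (assumed scoring rule)."""
    if not results:
        return 0.0
    return sum(r.resolved for r in results) / len(results)


def rank_models(scores: dict[str, float]) -> list[tuple[str, float]]:
    """Sort models from highest to lowest score."""
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


def average_score(scores: dict[str, float]) -> float:
    """Mean score across all models on the leaderboard."""
    return sum(scores.values()) / len(scores)


if __name__ == "__main__":
    # Made-up results for two made-up models, purely for illustration.
    model_a = [EvalResult("task-1", True), EvalResult("task-2", False)]
    model_b = [EvalResult("task-1", True), EvalResult("task-2", True)]
    scores = {"model-a": resolve_rate(model_a), "model-b": resolve_rate(model_b)}
    for name, score in rank_models(scores):
        print(f"{name}: {score:.3f}")
    print(f"average: {average_score(scores):.3f}")
```

Under these assumptions, a leaderboard score of 0.939 would correspond to resolving roughly 94% of the benchmark's task instances.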

SWE-bench (Software Engineering Benchmark) is a comprehensive benchmark designed to evaluate large language models and AI agents on their ability to solve real-world software engineering tasks. It tests real software engineering: codebase navigation, bug comprehension, multi-file patches, and test suite compliance, which makes it far more predictive of coding assistant quality. Learn what MMLU, GPQA Diamond, SWE-bench, HealthBench, and Chatbot Arena actually measure, and how labs game benchmark scores.

Artifacts. While working on merging our SWE-bench to the SWE-bench project repository, we release the dataset of SWE-bench to help other researchers replicate and extend our study.

Overview. SWE-bench Pro is a benchmark designed to provide a rigorous and realistic evaluation of AI agents for software engineering. It was developed to address several limitations in existing benchmarks by tackling four key challenges. Data contamination: models have likely seen the evaluation code during training, making it hard to know if they are problem solving or recalling a memorized ...
