What Is LLMjacking? The Hidden Cloud Security Threat of AI Models

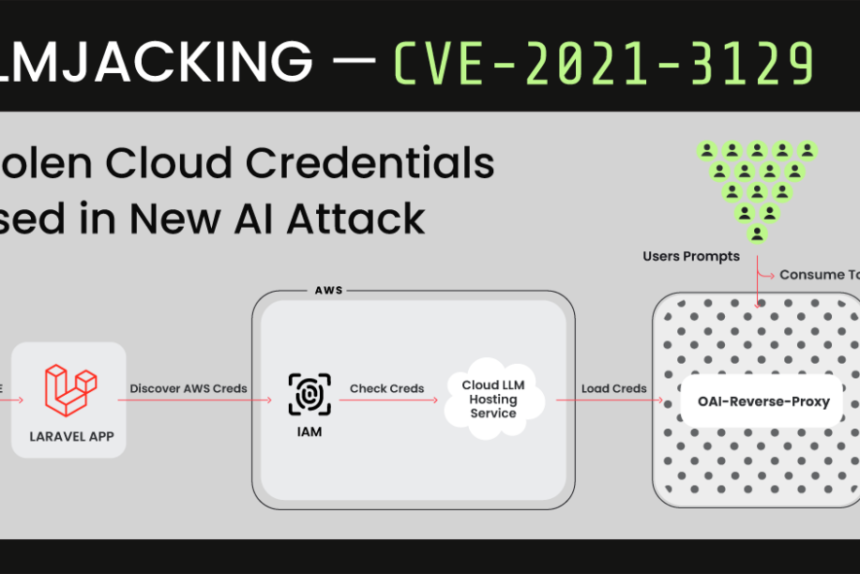

LLMjacking was understood as a threat that attackers were exploring and, in some cases, operationalizing, but the full picture of systematic reconnaissance, quality-based victim triage, and commercial marketplace resale had not been documented and attributed. LLMjacking is the unauthorized consumption of your LLM resources by an attacker who has compromised a legitimate cloud identity credential. The attacker's goal is to use your expensive AI infrastructure for their own purposes, leaving you with the bill and the risk.
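Because the victim pays for the attacker's usage, one early signal is an identity whose model-invocation volume jumps well above its norm. The following is a minimal sketch of that check, assuming invocation events are already available as dictionaries with an `identity` field. The function name, the event shape, and the `factor` and `min_calls` thresholds are illustrative assumptions, not part of any cloud provider's API.

```python
from collections import Counter
from typing import Dict, Iterable, List


def flag_unusual_identities(
    events: Iterable[Dict[str, str]],
    baseline: Dict[str, int],
    factor: float = 3.0,
    min_calls: int = 10,
) -> List[str]:
    """Return identities whose call count exceeds `factor` times their baseline.

    `events` is a hypothetical list of LLM invocation records, one per call.
    `baseline` maps each identity to its expected call count for the same window.
    Identities with no baseline are treated as having an expected count of 0,
    so any new identity that reaches `min_calls` is flagged.
    """
    counts = Counter(event["identity"] for event in events)
    flagged = []
    for identity, observed in counts.items():
        expected = baseline.get(identity, 0)
        if observed >= min_calls and observed > factor * expected:
            flagged.append(identity)
    return sorted(flagged)
```

A flagged identity is only a starting point for investigation. A legitimate workload can spike too, so the thresholds would need tuning against real usage.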

Hackers Hijacking Access to Cloud-Based AI Models with Exposed Keys

A new security threat, known as LLMjacking, has emerged on the cybersecurity landscape. LLMjacking refers to a method in which threat actors use stolen cloud credentials to infiltrate cloud-hosted large language models (LLMs). It is on the rise as attackers find new ways to hijack these models. This form of exploitation involves hijacking your computing environment to operate LLMs and leaving you with the hefty bill. The term was coined by the Sysdig Threat Research Team (TRT) to describe an attacker using stolen credentials to gain access to a victim's LLM. In essence, it is the act of hijacking an LLM. Any organization that uses cloud-hosted LLMs is at risk of LLMjacking.

Researchers Uncover LLMjacking Scheme Targeting Cloud-Hosted AI

LLMjacking stands apart from typical cloud threats by targeting the compute and access patterns of LLMs. Unlike cryptojacking, which hijacks hardware for mining, LLMjacking abuses exposed API keys or misconfigurations to covertly exploit AI services. It is an attack technique that cybercriminals use to manipulate and exploit an enterprise's cloud-based LLMs, particularly when these models are hosted on cloud services and accessed through online accounts. The attack uses stolen cloud credentials to infiltrate cloud-hosted LLM services, with the ultimate aim of selling access to other malicious actors.
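Since exposed API keys are one of the entry points named above, a simple precaution is to scan code and configuration for credential-like strings before they are published. The sketch below is illustrative only. It looks for strings shaped like AWS access key IDs (a 20-character uppercase alphanumeric token beginning with `AKIA` or `ASIA`). The function name is hypothetical, other providers use different key formats that would need their own patterns, and a match is a candidate for review rather than proof of a leak.

```python
import re
from typing import List, Tuple

# Shape of an AWS access key ID: "AKIA" (long-term) or "ASIA" (temporary)
# followed by 16 uppercase letters or digits. Only this one provider format
# is covered here, as an example.
AWS_KEY_ID = re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b")


def find_exposed_key_ids(text: str) -> List[Tuple[int, str]]:
    """Return (line_number, match) pairs for key-ID-shaped strings in `text`."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for match in AWS_KEY_ID.finditer(line):
            hits.append((lineno, match.group(0)))
    return hits


if __name__ == "__main__":
    # AKIAIOSFODNN7EXAMPLE is the placeholder key ID used in AWS documentation.
    sample = "region = 'us-east-1'\nkey_id = 'AKIAIOSFODNN7EXAMPLE'\n"
    for lineno, value in find_exposed_key_ids(sample):
        print(f"line {lineno}: possible access key ID {value}")
```

Any key found this way should be treated as compromised and rotated, because removing it from the file does not revoke it.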
