What Is Entropy

Austrian physicist Ludwig Boltzmann explained entropy as a measure of the number of possible microscopic arrangements, or states, of the individual atoms and molecules of a system that are consistent with the system’s macroscopic condition. Entropy is also defined as a measure of a system’s disorder or of the energy unavailable to do work. It is a key concept in physics and chemistry, with applications in other disciplines, including cosmology, biology, and economics.
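Boltzmann's counting picture is usually written as S = k_B ln W, where W is the number of microstates compatible with a given macrostate. The sketch below illustrates that relation under an assumed toy model that is not part of the text above: N distinguishable two-state particles, with the macrostate fixed by how many are excited. The function names are hypothetical and chosen only for this example.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def microstate_count(n_particles: int, n_excited: int) -> int:
    """Number of ways to choose which particles are excited (toy two-state model)."""
    return math.comb(n_particles, n_excited)


def boltzmann_entropy(w: int) -> float:
    """Entropy S = k_B * ln(W) for a macrostate with W microstates."""
    if w < 1:
        raise ValueError("a macrostate must have at least one microstate")
    return K_B * math.log(w)


if __name__ == "__main__":
    # Compare an ordered macrostate (nothing excited) with a mixed one.
    for excited in (0, 10, 50):
        w = microstate_count(100, excited)
        print(excited, w, boltzmann_entropy(w))
```

In this model the macrostate with no excited particles has exactly one microstate and therefore zero entropy, while the evenly mixed macrostate has the most microstates and the highest entropy.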

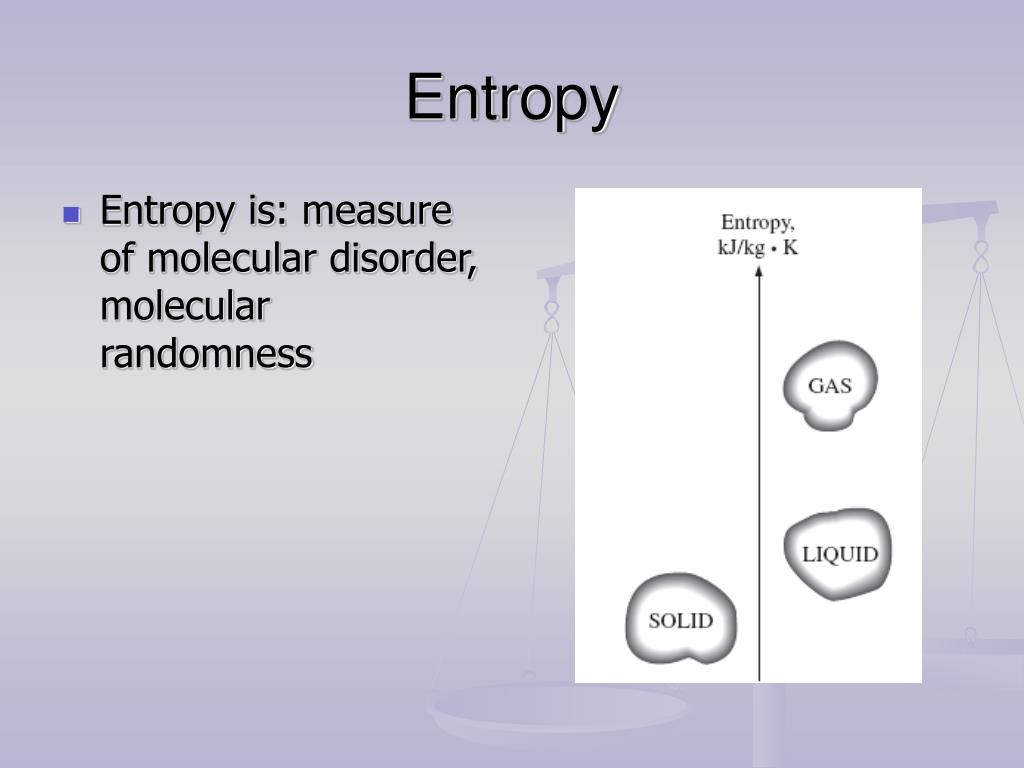

Entropy is the measure of a system’s thermal energy per unit temperature that is unavailable for doing useful work. Because work is obtained from ordered molecular motion, entropy is also a measure of the molecular disorder, or randomness, of a system. The second law of thermodynamics rests on this concept: the more disordered a system and the higher its entropy, the less of the system's energy is available to do work. Entropy is likewise a measure of dispersal and of the number of microstates a system can occupy, which ties it to particle arrangements, phase changes, and probability in chemical systems. In an isolated system (often loosely called a closed system), entropy increases as energy disperses and becomes less organized. The concept applies across thermodynamics, physics, chemistry, and biology, and it is notoriously difficult to define.
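The link between entropy, temperature, and dispersing energy can be made concrete with the Clausius relation ΔS = Q/T for heat exchanged reversibly at a fixed temperature. The following is a minimal sketch, assuming two large reservoirs whose temperatures do not change while a quantity of heat flows between them; the function name and the numbers are illustrative assumptions, not taken from the text.

```python
def total_entropy_change(heat_joules: float, t_hot: float, t_cold: float) -> float:
    """Total entropy change when heat flows from a hot to a cold reservoir.

    Assumes ideal reservoirs at constant absolute temperatures (kelvin).
    The hot reservoir loses Q / T_hot; the cold reservoir gains Q / T_cold.
    """
    if t_hot <= 0 or t_cold <= 0:
        raise ValueError("temperatures must be positive absolute temperatures")
    return heat_joules / t_cold - heat_joules / t_hot


if __name__ == "__main__":
    # 1000 J flowing from 400 K to 300 K: the total is positive (about 0.83 J/K).
    print(total_entropy_change(1000.0, 400.0, 300.0))
```

Because the cold reservoir gains more entropy than the hot one loses, the total rises whenever heat flows from hot to cold, which is the direction the second law permits.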

Entropy also quantifies the tendency of the universe to decay into disorder. The concept evolved from a simple idea of Carnot's into a complex notion of information and uncertainty. As a measure of the randomness or disorder within a system, it appears in thermodynamics, information theory, and the study of phase transitions. In thermodynamic terms, it measures the thermal energy per unit temperature that is unavailable for doing work. The concept is applied in many contexts, including cosmology, economics, and thermodynamics. Entropy (S) is a thermodynamic property of all substances that increases with their degree of disorder: the greater the number of possible microstates for a system, the greater the disorder and the higher the entropy.
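For the information-theory sense of entropy mentioned above, the standard measure is Shannon entropy, H = Σ p · log2(1/p), which expresses uncertainty in bits. The sketch below is an illustrative implementation under the assumption that the input is a complete discrete probability distribution; the function name is hypothetical.

```python
import math
from typing import Sequence


def shannon_entropy(probabilities: Sequence[float]) -> float:
    """Shannon entropy in bits of a discrete probability distribution."""
    if any(p < 0 for p in probabilities):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probabilities), 1.0, rel_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    # Outcomes with zero probability contribute nothing to the sum.
    return sum(p * math.log2(1.0 / p) for p in probabilities if p > 0)


if __name__ == "__main__":
    print(shannon_entropy([0.5, 0.5]))  # fair coin: maximal uncertainty for two outcomes
    print(shannon_entropy([1.0]))       # certain outcome: no uncertainty
```

A distribution spread evenly over many outcomes has high entropy, mirroring the thermodynamic statement that more accessible microstates mean higher entropy.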
