What Is Chunking in AI? The Beginner's Guide: The Power of Chunking in LLMs and RAG Explained

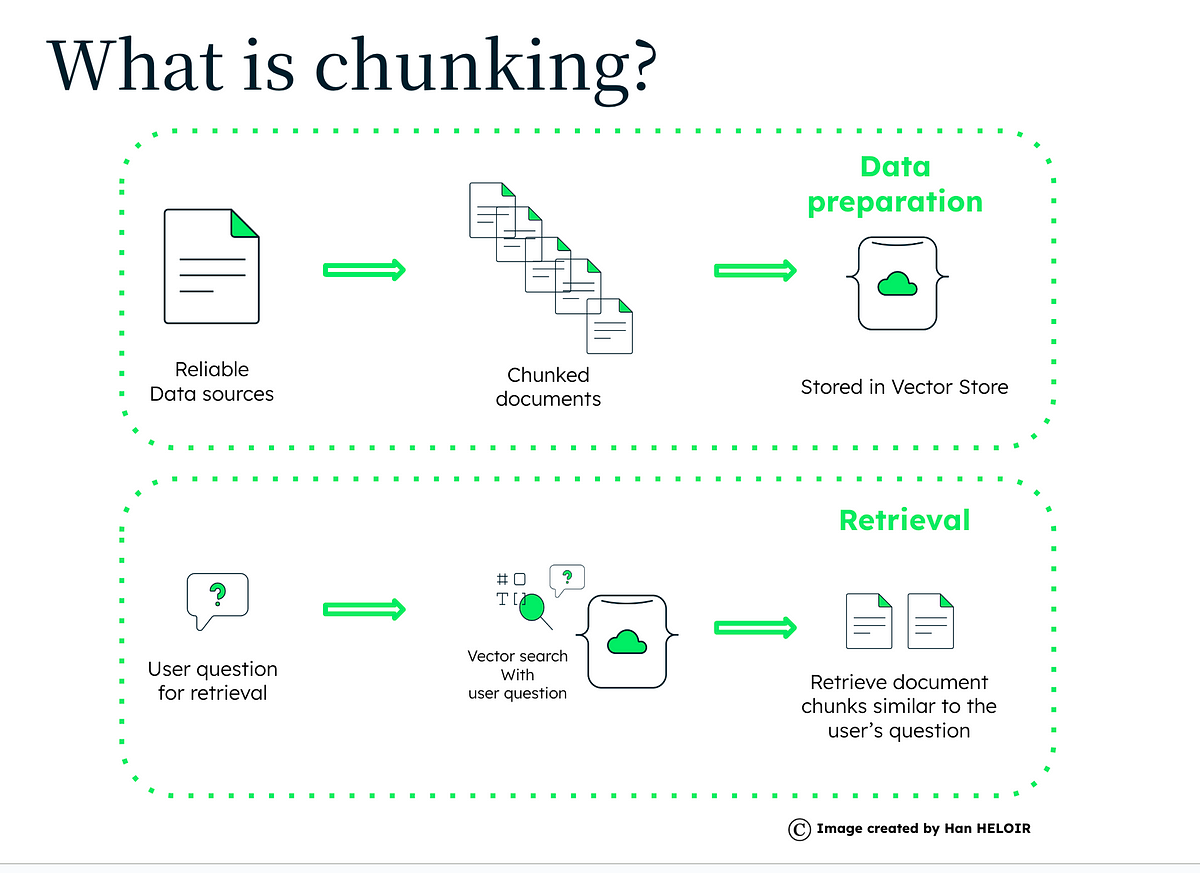

At first glance, chunking sounds like a simple preprocessing task: just split a document into pieces, right? In reality, chunking is one of the most critical design choices in a RAG system. It is a fundamental technique in AI, especially when dealing with large volumes of data, particularly text.

What is chunking? Chunking is a preprocessing step that transforms raw, often unstructured data (like long documents, audio streams, or video feeds) into discrete, manageable units.
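As a minimal sketch of the preprocessing step described above, the simplest way to turn a long text into discrete units is a fixed-size window with some overlap between neighbors. The function name `chunk_text` and the parameters `chunk_size` and `overlap` are illustrative assumptions, not taken from any particular library, and sizes here are measured in characters rather than tokens to keep the example self-contained.

```python
def chunk_text(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character windows that overlap.

    Hypothetical helper for illustration only. Real pipelines usually
    count tokens, not characters.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in the range [0, chunk_size)")

    step = chunk_size - overlap  # how far the window moves each time
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        # Stop once the current window has reached the end of the text,
        # so no trailing chunk is fully contained in the previous one.
        if start + chunk_size >= len(text):
            break
    return chunks


pieces = chunk_text("abcdefghij", chunk_size=4, overlap=2)
print(pieces)
```

The overlap repeats the tail of one chunk at the head of the next, which is one common way to reduce the chance that a sentence is cut in half with its context lost.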

Chunking Introduction Advanced LLMs With Retrieval Augmented

Chunking is both a technological necessity and a strategic approach to building robust, efficient, and scalable RAG systems. It improves retrieval accuracy, processing efficiency, and resource utilization, and it plays a crucial role in the success of RAG applications.

Learn the best chunking strategies for retrieval-augmented generation (RAG) to improve retrieval accuracy and LLM performance. This guide covers best practices, code examples, and industry-proven techniques for optimizing chunking in RAG workflows, including implementations on Databricks.

In this guide, I will walk you through the concept of chunking, explain why it matters in AI applications, describe its role in the RAG pipeline, and discuss how different strategies can impact retrieval accuracy.

A chunking strategy is the method of breaking down large documents into smaller, manageable pieces for AI retrieval. It determines how effectively relevant information is fetched for accurate AI responses. Poor chunking leads to irrelevant results, inefficiency, and reduced business value.
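To make the idea of a chunking strategy concrete, here is a rough sketch of one alternative to fixed-size windows: packing whole sentences into chunks up to a size limit, so that no chunk ends mid-sentence. The name `chunk_by_sentences`, the `max_chars` parameter, and the simple punctuation-based sentence splitter are all assumptions made for this example. Production systems often rely on a proper sentence tokenizer instead.

```python
import re


def chunk_by_sentences(text: str, max_chars: int = 300) -> list[str]:
    """Group whole sentences into chunks of at most max_chars characters.

    Hypothetical helper for illustration. A single sentence longer than
    max_chars is kept intact as its own oversized chunk.
    """
    # Naive sentence split: break on whitespace that follows ., ! or ?
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

    chunks = []
    current = ""
    for sentence in sentences:
        candidate = f"{current} {sentence}".strip()
        if current and len(candidate) > max_chars:
            # Adding this sentence would overflow, so close the current chunk.
            chunks.append(current)
            current = sentence
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


print(chunk_by_sentences("One. Two. Three.", max_chars=9))
```

Compared with character windows, this strategy trades uniform chunk sizes for chunks that respect sentence boundaries, which is the kind of tradeoff a chunking strategy has to settle.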

A Deep Dive Into Chunking Strategy Chunking Methods And Precision In

Think of chunking as the foundation of your RAG pipeline. A poor chunking strategy is like building a house on sand: no matter how sophisticated your retrieval or generation components are, your system will struggle to deliver accurate results.

Chunking splits documents into retrievable pieces for RAG. Learn the tradeoffs, why size matters, and what causes missing context.

In this blog post, we'll dive into the concept of chunking, explore its significance in LLM-related applications, and share insights from our own experimentation with varying chunk sizes.

Documents are too long for embedding models and context windows, so RAG systems split them into chunks, embed each chunk, and retrieve the most relevant ones at query time. Most teams tune their embedding model obsessively and ignore how the documents were split. That is backwards.
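The split, embed, and retrieve flow described above can be sketched end to end with nothing but the standard library. This is a toy under stated assumptions: `embed` is a stand-in that uses word counts instead of a real embedding model, and the names `embed`, `cosine`, and `retrieve` are hypothetical helpers for this example, not part of any RAG framework.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    query_vec = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(query_vec, embed(c)), reverse=True)
    return ranked[:k]


# Chunks as they might come out of a splitter.
chunks = [
    "Chunking splits documents into retrievable pieces.",
    "Embedding models map text to vectors.",
    "The weather was sunny on Tuesday.",
]

top = retrieve("How are documents split into pieces?", chunks, k=1)
print(top)
```

Because retrieval only ever sees the chunks, a fact that was split across two chunks can score poorly against a query about it, which is why how the documents were split deserves as much attention as the embedding model.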

