What Are The Differences Between Spark Developer Roles And Other Data

Spark developer roles typically involve working with Spark clusters to process large amounts of data in parallel, while other data engineering roles may focus on different technologies or tasks. Explore the different job titles for a PySpark developer and get started on your journey.

This first part establishes the foundational differences between Spark developers and Hadoop administrators by examining roles, skills, workflows, and growth trajectories.

A typical job posting reads along these lines: We are looking for a Spark developer who knows how to fully exploit the potential of our Spark cluster. You will clean, transform, and analyze vast amounts of raw data from various systems using Spark to provide ready-to-use data to our feature developers and business analysts.

In this role, you will be expected to write clean, maintainable, and efficient Spark code in languages such as Scala, Java, or Python. You will also be responsible for integrating Spark applications with various data sources, including HDFS, S3, Kafka, and relational databases. As a Spark developer, you will design, develop, and implement solutions to process and analyze large data sets using Apache Spark. You will collaborate with data engineers, data scientists, and other stakeholders to ensure efficient data processing and integration.
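To make the "clean, transform, and analyze" duties concrete, here is a minimal PySpark sketch. The sample records, column names (`country`, `amount`), and the function name `total_by_country` are hypothetical and chosen only for illustration; a real pipeline would read from a source such as HDFS, S3, or Kafka rather than an in-memory list.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical raw records: (country, amount). None marks a missing amount.
SAMPLE_ROWS: List[Tuple[str, Optional[float]]] = [
    (" us", 10.0),
    ("US ", 5.5),
    ("de", None),
    ("DE", 7.0),
]


def total_by_country(spark, rows) -> Dict[str, float]:
    """Clean, transform, and aggregate (country, amount) rows with Spark.

    `spark` is an existing SparkSession supplied by the caller.
    """
    # Imported lazily so this module loads even where PySpark is not installed.
    from pyspark.sql import functions as F
    from pyspark.sql.types import DoubleType, StringType, StructField, StructType

    # An explicit schema avoids type-inference surprises with missing values.
    schema = StructType([
        StructField("country", StringType(), True),
        StructField("amount", DoubleType(), True),
    ])
    raw = spark.createDataFrame(rows, schema)

    # Clean: drop rows without an amount. Transform: normalize country codes.
    cleaned = (
        raw.dropna(subset=["amount"])
        .withColumn("country", F.upper(F.trim(F.col("country"))))
    )

    # Analyze: total amount per country.
    totals = cleaned.groupBy("country").agg(F.sum("amount").alias("total"))

    # collect() pulls results to the driver; reasonable only for small outputs.
    return {row["country"]: row["total"] for row in totals.collect()}
```

Given a local session, for example one created with `SparkSession.builder.master("local[*]").getOrCreate()`, calling `total_by_country(spark, SAMPLE_ROWS)` returns the per-country totals as a plain dictionary. The sketch uses built-in column functions rather than Python UDFs, which generally lets Spark optimize the query plan.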

Who is a Spark developer? A Spark developer is a software engineer who specializes in developing applications using Apache Spark. They are responsible for designing, building, testing, and maintaining Spark-based applications and data processing systems. To be a good Spark developer, you need strong programming skills, knowledge of big data technologies, and experience working with distributed systems. You should also know SQL and be familiar with NoSQL databases.

A Spark developer is responsible for the development, programming, and maintenance of applications built on the open source Apache Spark framework. They work with different parts of the Spark ecosystem, including Spark SQL, DataFrames, Datasets, and streaming. A PySpark developer uses Apache Spark's Python API to build, deploy, and manage data-intensive applications. They are responsible for writing scalable code for processing large datasets, implementing data pipelines, and optimizing performance.

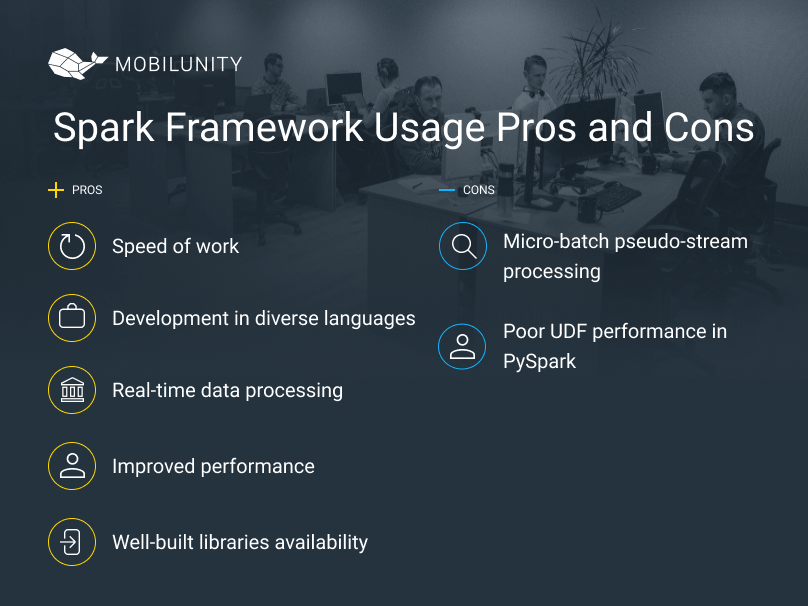

Hire Spark Developer To Process Big Data Mobilunity
