What Are Residual Connections

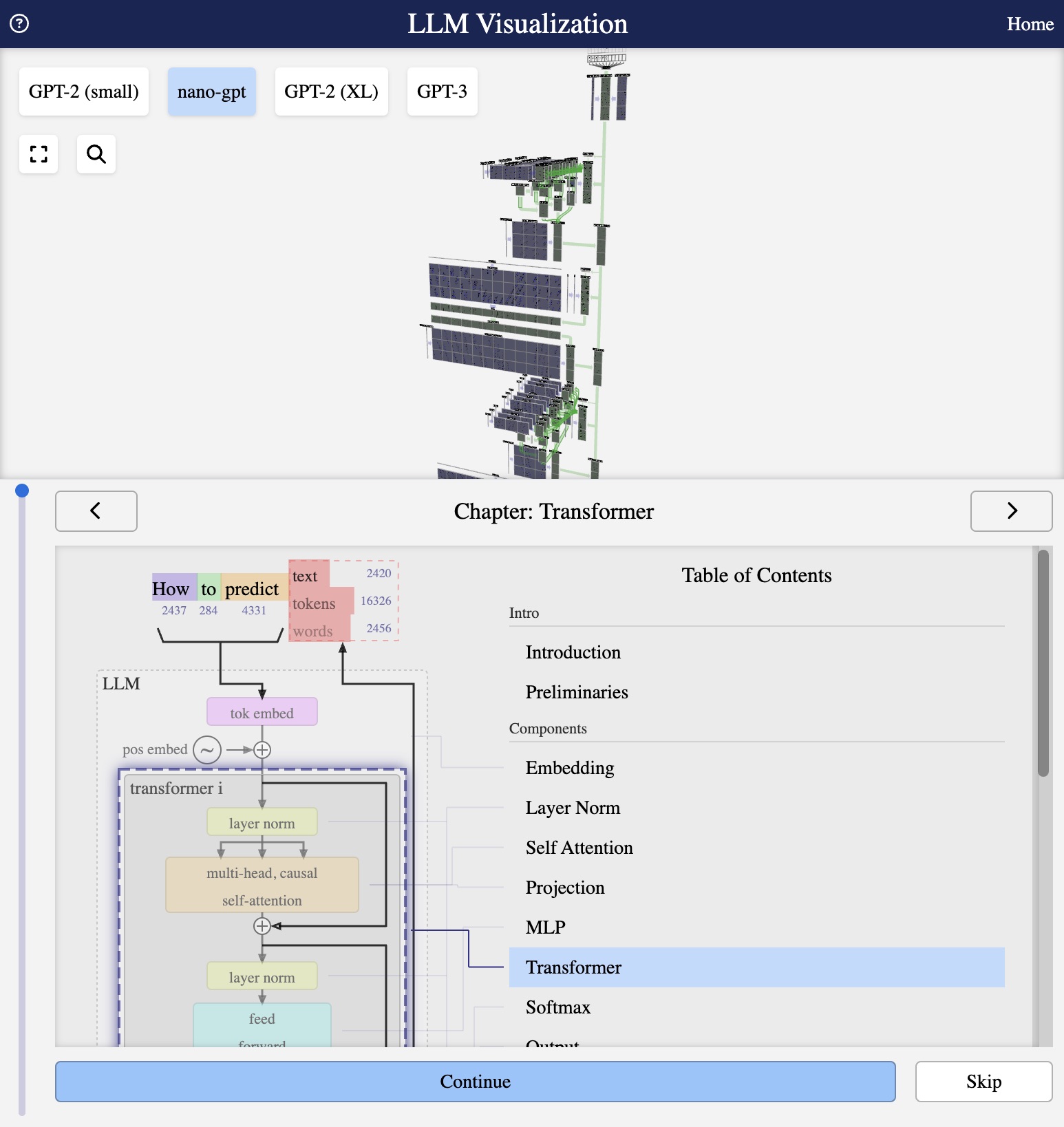

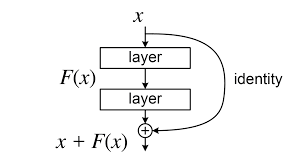

In this article we will talk about residual connections (also known as skip connections), a simple yet very effective technique that makes deep neural networks easier to train. Residual networks (ResNet) were introduced to overcome the challenges of training very deep neural networks: their skip connections let the model learn residual mappings instead of direct transformations.
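The phrase "residual mappings instead of direct transformations" can be written compactly. The notation below is an assumption borrowed from the usual ResNet presentation, not something defined in this article: $x$ is the block input, $H(x)$ is the mapping the block would ideally represent, and $F(x)$ is what the stacked layers actually learn.

$$
F(x) = H(x) - x, \qquad y = F(x) + x
$$

Under this reading, a block that should behave like the identity only has to push $F(x)$ toward zero, which is generally an easier target than fitting $H(x) = x$ through a stack of nonlinear layers.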

To address the difficulty of training very deep networks, an architectural innovation called residual connections (also known as shortcut or skip connections) was introduced, most notably in ResNet (residual networks). Residual connections are essential for training deep networks: they mitigate the vanishing gradient problem, let the gradient flow more easily through the network, and enable transformers with 100 layers.
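To make the vanishing gradient claim concrete, here is a minimal sketch in plain Python. It is a toy model, not a real network: the one-dimensional layer `F(x) = tanh(w * x)`, the weight `0.5`, the depth `30`, and the function names are all assumptions chosen for illustration. It compares the gradient of the output with respect to the input for a plain stack and for a stack with skip connections.

```python
import math


def layer(x, w):
    """Toy one-dimensional layer F(x) = tanh(w * x); returns (F(x), dF/dx)."""
    out = math.tanh(w * x)
    return out, w * (1.0 - out * out)


def input_gradient(x, depth, w, residual):
    """Return d(output)/d(input) for a stack of `depth` toy layers (chain rule)."""
    grad = 1.0
    for _ in range(depth):
        out, d_out = layer(x, w)
        if residual:
            # y = x + F(x), so the local derivative is 1 + F'(x).
            x = x + out
            grad *= 1.0 + d_out
        else:
            # y = F(x), so the local derivative is just F'(x).
            x = out
            grad *= d_out
    return grad


plain_grad = input_gradient(0.5, depth=30, w=0.5, residual=False)
residual_grad = input_gradient(0.5, depth=30, w=0.5, residual=True)
print("plain stack:   ", plain_grad)
print("residual stack:", residual_grad)
```

In the plain stack every factor in the product is at most `w`, so the gradient shrinks geometrically with depth. With the skip connection each factor is `1 + F'(x)`, which never drops below 1 in this toy setting, so the gradient reaching the input does not vanish.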

A residual connection directly connects non-adjacent layers, bypassing the intermediate ones, so that the gradient can pass directly through the network. It is a fundamental design element, implemented by simply adding the input of a set of layers (a block) to that block's output. Transformers use residual connections as well, to improve the flow of gradients during backpropagation and to facilitate deeper models.
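"Adding the input of a set of layers to its output" can be sketched in a few lines. The example below is a hedged illustration in plain Python, not the implementation of any particular library: the two-layer block, the ReLU activation, and the helper names `linear`, `relu`, and `residual_block` are assumptions made for this sketch.

```python
def linear(v, weights, bias):
    """Dense layer: one output per weight row, computed as row . v + b."""
    return [sum(w * a for w, a in zip(row, v)) + b for row, b in zip(weights, bias)]


def relu(v):
    """Element-wise ReLU activation."""
    return [max(0.0, a) for a in v]


def residual_block(x, w1, b1, w2, b2):
    """Compute y = x + F(x), where F is linear -> ReLU -> linear."""
    hidden = relu(linear(x, w1, b1))
    f_x = linear(hidden, w2, b2)
    # The skip connection: add the block input to the block output.
    # This requires F(x) to have the same length as x.
    return [xi + fi for xi, fi in zip(x, f_x)]


x = [1.0, -2.0, 3.0]
zero_w = [[0.0] * 3 for _ in range(3)]
zero_b = [0.0] * 3

# With all weights at zero, F(x) is zero and the block reduces to the identity.
y = residual_block(x, zero_w, zero_b, zero_w, zero_b)
print(y)
```

The zero-weight case shows why the design helps: a block that contributes nothing still passes its input through unchanged, so stacking more blocks does not have to degrade the signal.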

