Weight Quantization Basics: Scale, Zero Point, Calibration

Weight quantization maps floating-point values to integers. Storing weights as 8-bit integers instead of 32-bit floats cuts LLM weight memory by about 4x. This post covers scale, zero point, and symmetric versus asymmetric schemes. Asymmetric quantization accommodates arbitrary data ranges by introducing both a scale and a zero-point parameter, which makes it more flexible for activation outputs with non-zero means.
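To make the difference between the two schemes concrete, here is a minimal sketch in plain Python. The function names, the unsigned 8-bit range for the asymmetric case, and the signed range for the symmetric case are illustrative assumptions, not something a particular library prescribes.

```python
def asymmetric_params(x_min, x_max, qmin=0, qmax=255):
    """Return (scale, zero_point) mapping [x_min, x_max] onto [qmin, qmax]."""
    # Widen the range to include 0.0 so that real zero is exactly representable.
    x_min, x_max = min(x_min, 0.0), max(x_max, 0.0)
    scale = (x_max - x_min) / (qmax - qmin)
    if scale == 0.0:  # degenerate all-zero range
        scale = 1.0
    zero_point = int(round(qmin - x_min / scale))
    zero_point = max(qmin, min(qmax, zero_point))
    return scale, zero_point


def symmetric_params(x_min, x_max, qmax=127):
    """Return (scale, 0): the range is centred on zero, so no offset is needed."""
    scale = max(abs(x_min), abs(x_max)) / qmax
    return (scale or 1.0), 0


def quantize(x, scale, zero_point, qmin, qmax):
    """Map one real value to an integer level, clamping to [qmin, qmax]."""
    q = int(round(x / scale)) + zero_point
    return max(qmin, min(qmax, q))


# A skewed range such as [-1, 3] uses all 256 levels in the asymmetric scheme.
scale_a, zp_a = asymmetric_params(-1.0, 3.0)
scale_s, zp_s = symmetric_params(-1.0, 3.0)
print(scale_a, zp_a)
print(scale_s, zp_s)
```

With the skewed range above, the symmetric scheme reserves integer levels for values down to -3.0 that never occur, while the asymmetric scheme spends every level on the observed range at the cost of carrying a zero point.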

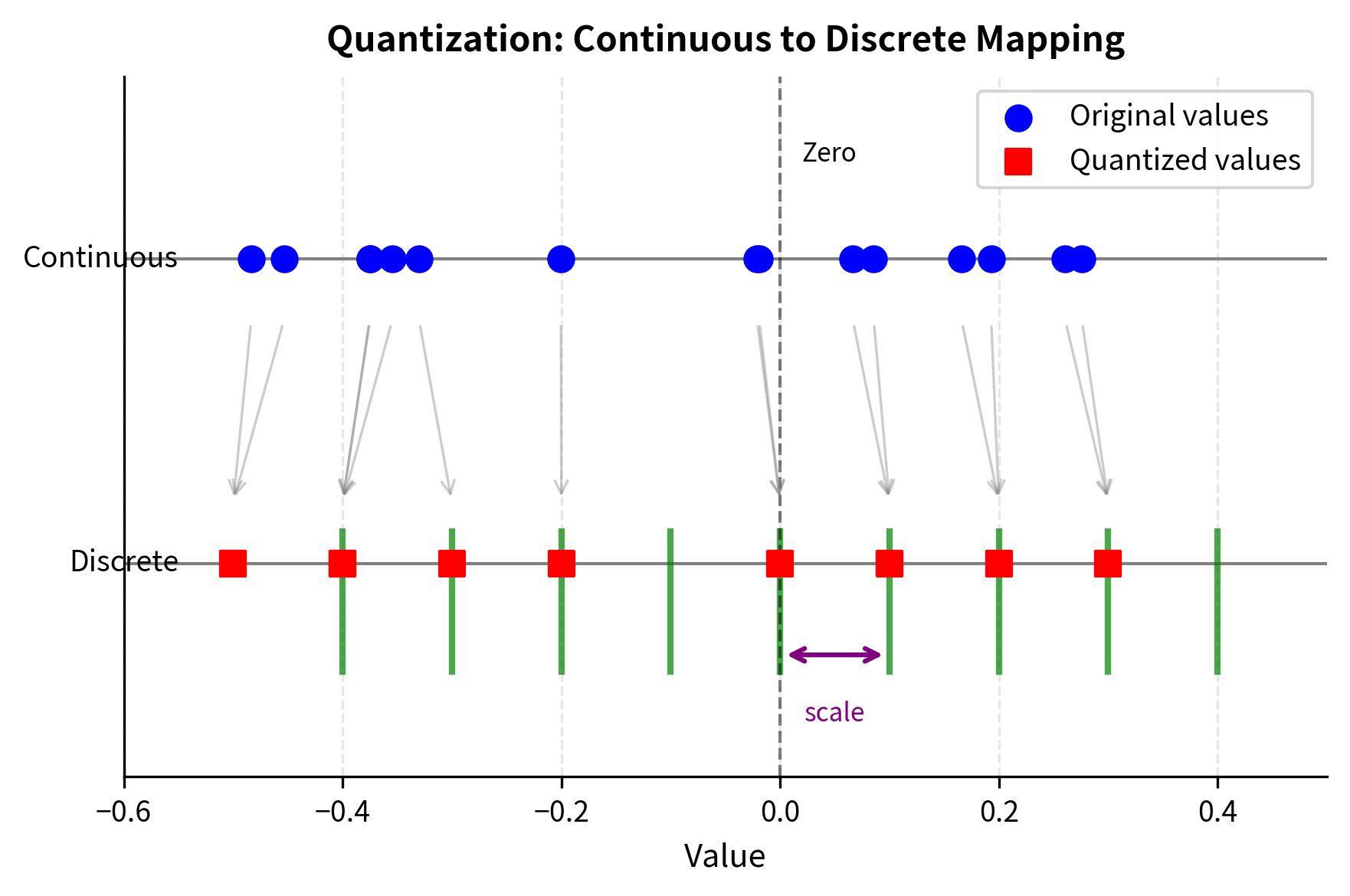

In this post, I will introduce the field of quantization in the context of language modeling and explore concepts one by one to develop an intuition about the field. We will explore various methodologies, use cases, and the principles behind quantization. A related topic is how compilers manage and propagate quantization parameters during optimization.

Scale and zero point are the fundamental parameters of the quantization process. The scale determines how much the original floating-point range is compressed: it is the step in real values between adjacent integer levels. The zero point shifts the quantized range so that the real value 0 maps to an integer.

Dequantization: before results are fed to the next layer, which often still expects float values, they are mapped back to floating point using the scale and zero point.
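The quantize and dequantize round trip can be sketched in a few lines of plain Python. The scale of 0.02, the zero point of 10, and the signed 8-bit range are made-up values chosen only for illustration.

```python
def quantize(x, scale, zero_point, qmin=-128, qmax=127):
    """q = clamp(round(x / scale) + zero_point, qmin, qmax)"""
    q = round(x / scale) + zero_point
    return max(qmin, min(qmax, q))


def dequantize(q, scale, zero_point):
    """x_hat = scale * (q - zero_point)"""
    return scale * (q - zero_point)


scale, zero_point = 0.02, 10  # illustrative values, not taken from a real model
values = [-1.5, -0.013, 0.0, 0.7, 2.0]

for v in values:
    q = quantize(v, scale, zero_point)
    v_hat = dequantize(q, scale, zero_point)
    print(f"{v:+.3f} -> q={q:4d} -> {v_hat:+.3f} (error {abs(v - v_hat):.4f})")
```

For inputs inside the representable range, the round-trip error is at most half the scale, because rounding moves a value by at most half an integer step. Inputs outside the range are clamped and can lose much more.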

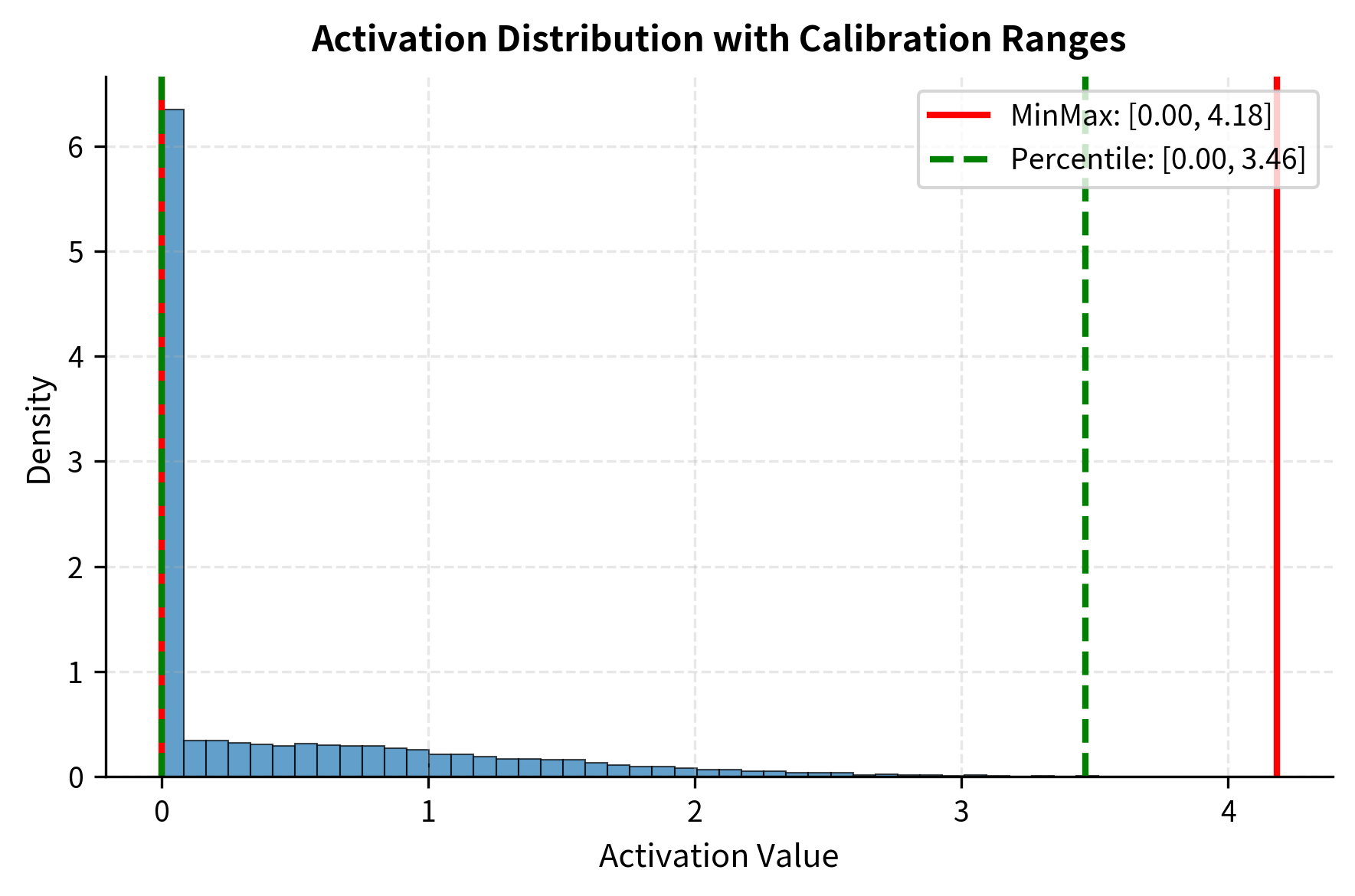

Quantization is a technique to reduce the computational and memory costs of running inference by representing the weights and activations with low-precision data types like 8-bit integer (int8) instead of the usual 32-bit floating point (float32).

Quantizing a model does two things: it quantizes the weights of the supported layers, and it rewires their forward paths to be compatible with the quantized kernels and quantization scales.

The process of collecting activation statistics and determining appropriate scale and zero-point values for them is known as calibration. During calibration, the model weights remain unchanged, and the input data is used to compute the quantization parameters, i.e., scales and zero points. Calibration methods determine the scale factors and zero points by analyzing activation and weight tensors. The choice of calibration method significantly impacts quantized model accuracy and affects the range of values that can be represented.
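As a rough illustration of why the calibration method matters, the sketch below compares two common range estimators, min-max and percentile clipping, on synthetic activations. The choice of these two methods, the helper names, and the random data are all assumptions made for the example; a real calibration run would collect statistics from the model's activations on representative inputs.

```python
import random


def minmax_range(batches):
    """Use the smallest and largest value seen across all calibration batches."""
    lo = min(min(b) for b in batches)
    hi = max(max(b) for b in batches)
    return lo, hi


def percentile_range(batches, pct=99.9):
    """Clip the most extreme (100 - pct)% of values from each tail."""
    values = sorted(v for b in batches for v in b)
    k = int(len(values) * (100.0 - pct) / 100.0)  # values dropped per tail
    return values[k], values[len(values) - 1 - k]


def to_params(lo, hi, qmin=0, qmax=255):
    """Turn an observed range into (scale, zero_point) for asymmetric uint8."""
    lo, hi = min(lo, 0.0), max(hi, 0.0)
    scale = (hi - lo) / (qmax - qmin) or 1.0
    zero_point = max(qmin, min(qmax, round(qmin - lo / scale)))
    return scale, zero_point


random.seed(0)
# Synthetic "activations": 8 batches of 1000 values, plus one large outlier.
batches = [[random.gauss(0.5, 1.0) for _ in range(1000)] for _ in range(8)]
batches[0][0] = 40.0

scale_mm, zp_mm = to_params(*minmax_range(batches))
scale_pc, zp_pc = to_params(*percentile_range(batches))
print("min-max   :", scale_mm, zp_mm)
print("percentile:", scale_pc, zp_pc)
```

A single outlier stretches the min-max range, so every integer step covers a wider slice of real values and typical activations are represented more coarsely. Percentile clipping gives a smaller scale for the bulk of the data, at the cost of saturating the rare extreme values.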
