Week 1 Gradient Function Explanation Supervised Ml Regression And

Supervised Ml Regression Pdf

Now that the gradients can be computed, gradient descent, described in equation (3) above, can be implemented in `gradient_descent`. The details of the implementation are described in the comments. With the code they wrote, you only have to write the gradient descent logic once; you can then try both cost functions and compare the results. Note that each `compute_gradient` function has to be paired with its matching `compute_cost` function.
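The idea of writing the descent loop once and passing the cost and gradient functions in as arguments can be sketched as follows. This is a minimal illustration, not the lab's actual code: it assumes single-feature linear regression with a squared-error cost, and the parameter names (`w_in`, `b_in`, `alpha`, `num_iters`, `cost_function`, `gradient_function`) and the two-point training set are chosen here for illustration.

```python
def compute_cost(x, y, w, b):
    """Squared-error cost J(w, b) = (1 / 2m) * sum((w * x_i + b - y_i)^2)."""
    m = len(x)
    return sum((w * xi + b - yi) ** 2 for xi, yi in zip(x, y)) / (2 * m)


def compute_gradient(x, y, w, b):
    """Partial derivatives of the squared-error cost with respect to w and b."""
    m = len(x)
    dj_dw = sum((w * xi + b - yi) * xi for xi, yi in zip(x, y)) / m
    dj_db = sum((w * xi + b - yi) for xi, yi in zip(x, y)) / m
    return dj_dw, dj_db


def gradient_descent(x, y, w_in, b_in, alpha, num_iters,
                     cost_function, gradient_function):
    """Run batch gradient descent; the cost and gradient are pluggable."""
    w, b = w_in, b_in
    cost_history = []
    for _ in range(num_iters):
        # Evaluate both partial derivatives at the current (w, b) ...
        dj_dw, dj_db = gradient_function(x, y, w, b)
        # ... then update both parameters simultaneously.
        w -= alpha * dj_dw
        b -= alpha * dj_db
        cost_history.append(cost_function(x, y, w, b))
    return w, b, cost_history


# Hypothetical training data lying exactly on y = 200x + 100,
# so w and b should approach 200 and 100 respectively.
x_train = [1.0, 2.0]
y_train = [300.0, 500.0]

w_final, b_final, cost_history = gradient_descent(
    x_train, y_train, w_in=0.0, b_in=0.0, alpha=1.0e-2, num_iters=10000,
    cost_function=compute_cost, gradient_function=compute_gradient,
)
print(f"w = {w_final:.4f}, b = {b_final:.4f}")
```

To try a different cost, only the pair of functions passed in changes; the loop stays the same. The two functions have to describe the same cost, otherwise the recorded cost history does not correspond to the updates being applied.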

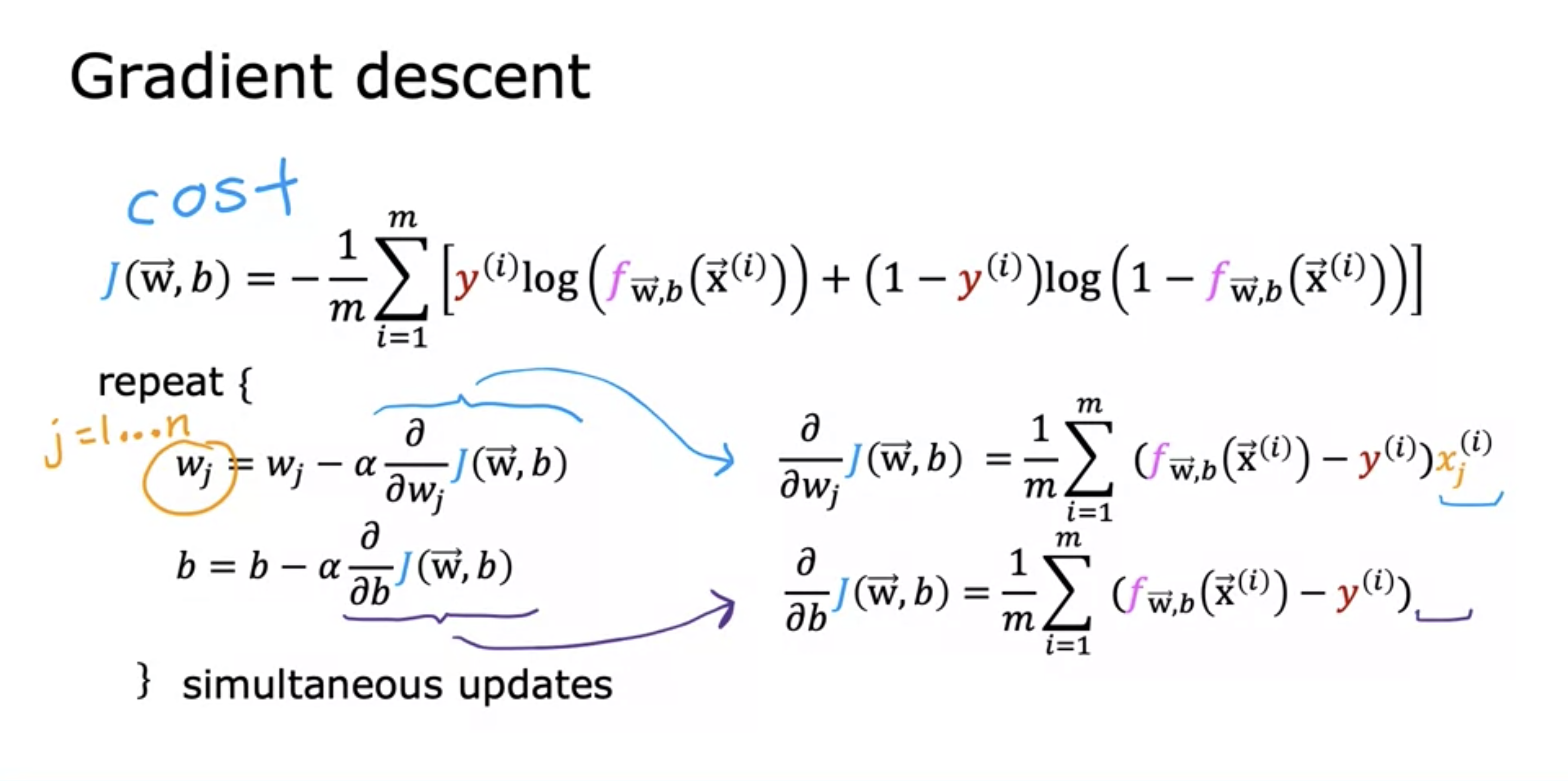

Ml 8 Gradient Descent For Logistic Regression

The gradient descent algorithm is used to find the minimum of the cost function $J(w, b)$, and it is one of the most important building blocks in machine learning. The lectures described how gradient descent uses the partial derivative of the cost with respect to a parameter, evaluated at a point, to update that parameter. Let's use our `compute_gradient` function.

What is a cost function? It measures how well the model is predicting by comparing the values predicted by the model with the actual target values in the training set.

This week, you'll extend linear regression to handle multiple input features. You'll also learn some methods for improving your model's training and performance, such as vectorization, feature scaling, feature engineering, and polynomial regression.
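For reference, the cost and the update it drives can be written out explicitly. The formulas below assume single-feature linear regression with model $f_{w,b}(x) = wx + b$, $m$ training examples, and the common squared-error cost with a $\frac{1}{2m}$ factor; the text above does not state these conventions, so treat them as one standard choice rather than the only one.

$$
J(w, b) = \frac{1}{2m} \sum_{i=1}^{m} \left( f_{w,b}(x^{(i)}) - y^{(i)} \right)^2
$$

$$
\frac{\partial J(w, b)}{\partial w} = \frac{1}{m} \sum_{i=1}^{m} \left( f_{w,b}(x^{(i)}) - y^{(i)} \right) x^{(i)},
\qquad
\frac{\partial J(w, b)}{\partial b} = \frac{1}{m} \sum_{i=1}^{m} \left( f_{w,b}(x^{(i)}) - y^{(i)} \right)
$$

$$
w \leftarrow w - \alpha \frac{\partial J(w, b)}{\partial w},
\qquad
b \leftarrow b - \alpha \frac{\partial J(w, b)}{\partial b}
$$

Here $\alpha$ is the learning rate, and both parameters are updated simultaneously using derivatives evaluated at the current $(w, b)$.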

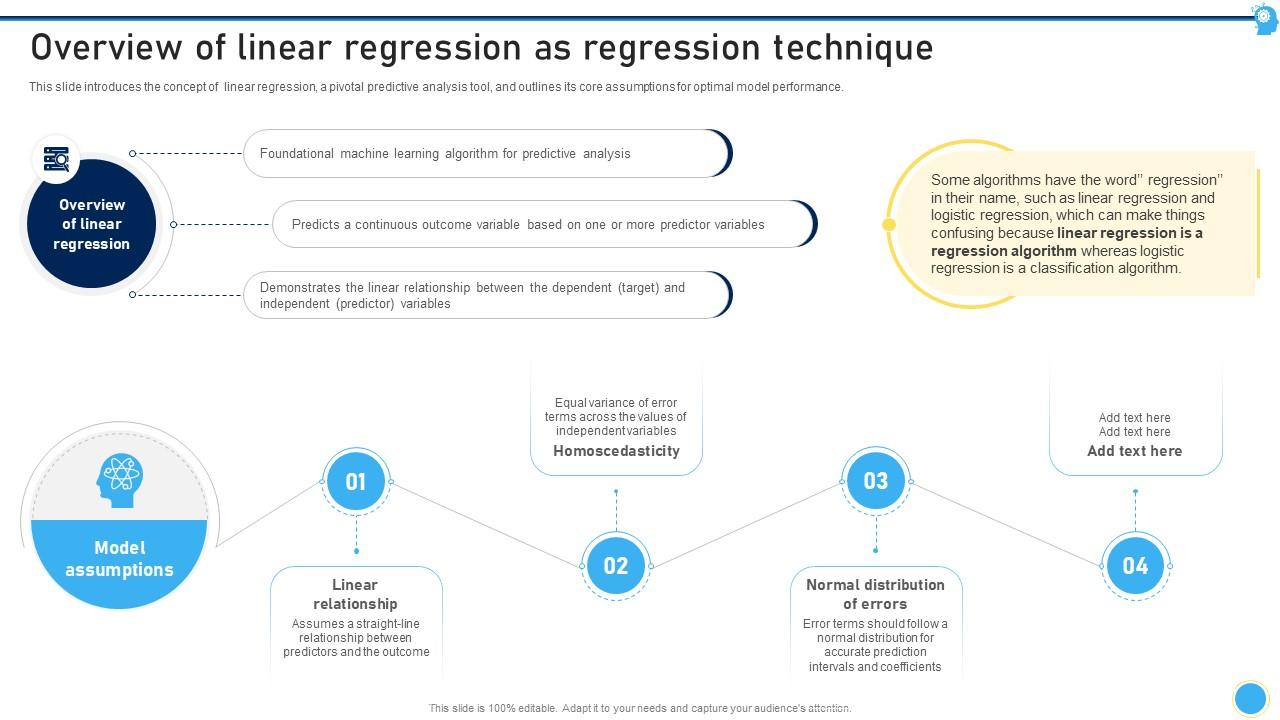

Overview Of Linear Regression As Regression Technique Supervised

After going through the definitions, applications, advantages, and disadvantages of Bayesian linear regression, it is time to explore how to implement Bayesian regression using Python.

Supervised learning is a type of machine learning in which a model learns from labelled data, meaning each input has a correct output. The model compares its predictions with the actual results and improves over time to increase accuracy.

`gradient_function` is a function passed as an argument to `gradient_descent()`; it refers to the `compute_gradient()` function defined earlier in the notebook. In the optional lab C1_W1_Lab04_Gradient_Descent_Soln of the course "Supervised Machine Learning: Regression and Classification", there are two functions that have not been defined at all.
