Visually Explained: Newton's Method in Optimization

We take a look at Newton's method, a powerful optimization technique used in machine learning, engineering, and applied mathematics. We explain the intuition behind it and list some of its pros and cons, covering second-order derivatives, the Hessian matrix, convergence, and applications to optimization problems.
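The intuition can be stated in a few lines: near the current iterate, approximate the function by its second-order Taylor expansion and jump to the stationary point of that quadratic model. The following is a sketch of the standard derivation. The notation ($x_k$ for the current iterate, $d$ for the step, $\nabla^2 f$ for the Hessian) is assumed here rather than taken from the original:

$$
f(x_k + d) \approx f(x_k) + \nabla f(x_k)^\top d + \tfrac{1}{2}\, d^\top \nabla^2 f(x_k)\, d
$$

Setting the gradient of this model with respect to $d$ to zero gives the Newton step:

$$
\nabla^2 f(x_k)\, d_k = -\nabla f(x_k), \qquad x_{k+1} = x_k + d_k
$$

In one dimension this reduces to

$$
x_{k+1} = x_k - \frac{f'(x_k)}{f''(x_k)}
$$

The step minimizes the model only when $\nabla^2 f(x_k)$ is positive definite. Otherwise it moves toward a stationary point of the model that may be a maximum or a saddle point, which is why the method can locate maxima as well as minima.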

Newton's method is an approach to unconstrained optimization. In this article, we motivate the formulation of this approach and provide interactive demos over multiple univariate and multivariate functions to show it in action. No background is required beyond basic linear algebra.

Examples of methods that exploit curvature information include Newton's method and quasi-Newton methods. In this article we focus on Newton's method for optimization and how it can be used for training neural networks. Newton's method for unconstrained optimization uses both gradient and Hessian information to find minima or maxima of twice-differentiable functions.
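To make the "gradient plus Hessian" description concrete for a multivariate function, here is a minimal pure-Python sketch for two variables. The test function $f(x, y) = e^x - x + (y - x)^2$ (convex, minimized at $(0, 0)$, with a positive definite Hessian everywhere), its hand-coded derivatives, and the names `grad`, `hess` and `newton_minimize` are all hypothetical choices for illustration; a real implementation would typically use a linear-algebra library and a line search.

```python
import math


def grad(x: float, y: float) -> tuple[float, float]:
    """Gradient of the hypothetical test function f(x, y) = exp(x) - x + (y - x)**2."""
    return (math.exp(x) - 1.0 - 2.0 * (y - x), 2.0 * (y - x))


def hess(x: float, y: float) -> tuple[tuple[float, float], tuple[float, float]]:
    """Hessian of the same function (positive definite for every x, y)."""
    return ((math.exp(x) + 2.0, -2.0), (-2.0, 2.0))


def newton_minimize(x: float, y: float, tol: float = 1e-10,
                    max_iter: int = 50) -> tuple[float, float, int]:
    """Pure Newton iteration: solve H d = -g, then take the full step (x, y) += d."""
    for k in range(max_iter):
        gx, gy = grad(x, y)
        if math.hypot(gx, gy) < tol:  # stop once the gradient norm is tiny
            return x, y, k
        (h11, h12), (h21, h22) = hess(x, y)
        det = h11 * h22 - h12 * h21
        # Solve the 2x2 Newton system by Cramer's rule.
        dx = (-gx * h22 + h12 * gy) / det
        dy = (-h11 * gy + h21 * gx) / det
        x, y = x + dx, y + dy
    return x, y, max_iter


if __name__ == "__main__":
    x_star, y_star, iters = newton_minimize(2.0, -1.0)
    print(f"minimizer ≈ ({x_star:.3e}, {y_star:.3e}) after {iters} iterations")
```

The full Newton step is not safeguarded here: from some starting points (for example, strongly negative `x` for this function) the first step overshoots far to the right and progress becomes slow, which is why practical implementations usually add a line search or a trust region.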

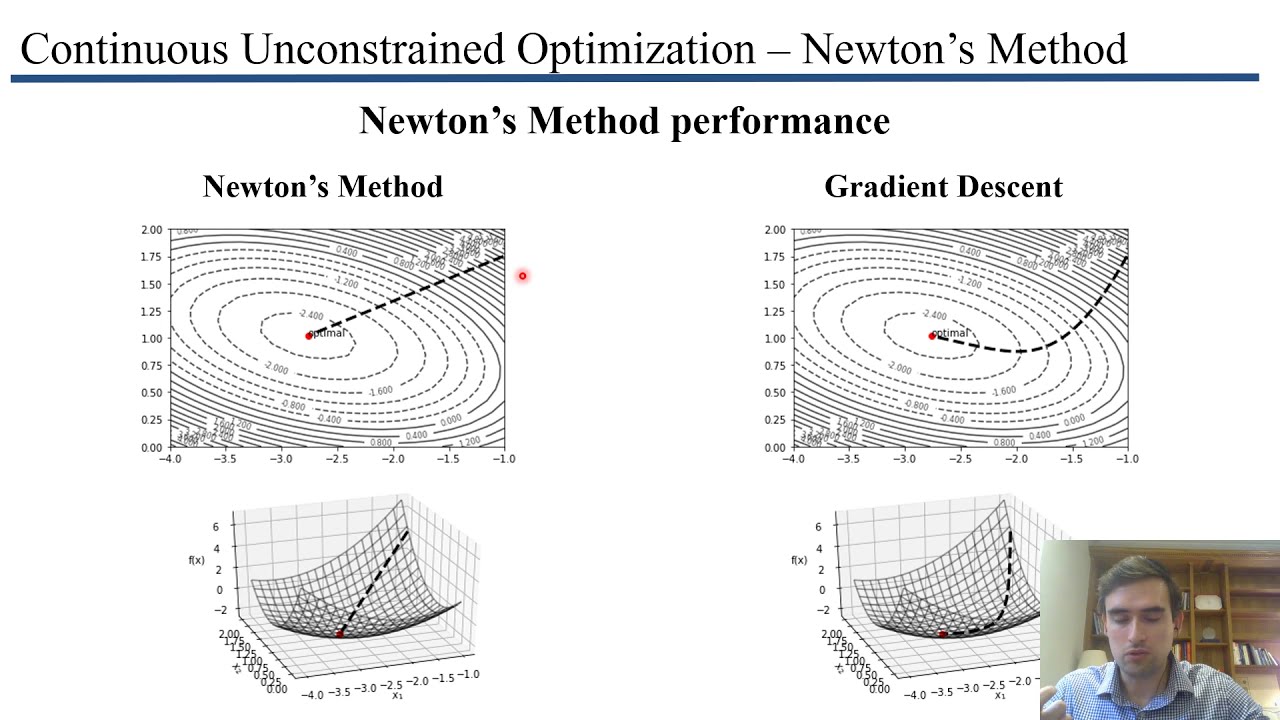

A comparison of gradient descent (green) and Newton's method (red) for minimizing a function (with small step sizes): Newton's method uses curvature information (i.e., the second derivative) to take a more direct route to the minimum.

Newton's method was originally a root-finding method for nonlinear equations. Applied to the first-order optimality condition ∇f(x) = 0, it becomes the workhorse of many optimization algorithms.

In this example, steepest descent theoretically requires an infinite number of iterations to reach the optimum at (0, 0); in practice, the solution is found after 5 iterations.
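The root-finding view and the "more direct route" can both be seen in one dimension. This sketch applies Newton's root-finding iteration to the optimality condition $f'(x) = 0$ and compares it with fixed-step gradient descent, which uses only the first derivative. The test function $f(x) = e^x - 2x$ (minimized at $x^\ast = \ln 2$), the step size, the tolerance, and the function names are hypothetical choices, not taken from the original.

```python
import math
from typing import Callable


def newton_1d(df: Callable[[float], float], d2f: Callable[[float], float],
              x: float, tol: float = 1e-10, max_iter: int = 100) -> tuple[float, int]:
    """Newton's root-finding iteration applied to f'(x) = 0."""
    for k in range(max_iter):
        g = df(x)
        if abs(g) < tol:
            return x, k
        x -= g / d2f(x)  # the second derivative rescales the step
    return x, max_iter


def gradient_descent_1d(df: Callable[[float], float], x: float, lr: float = 0.1,
                        tol: float = 1e-10, max_iter: int = 10_000) -> tuple[float, int]:
    """Fixed-step gradient descent: first-derivative information only."""
    for k in range(max_iter):
        g = df(x)
        if abs(g) < tol:
            return x, k
        x -= lr * g
    return x, max_iter


def df(x: float) -> float:
    """f'(x) for the hypothetical test function f(x) = exp(x) - 2x."""
    return math.exp(x) - 2.0


def d2f(x: float) -> float:
    """f''(x) for the same function."""
    return math.exp(x)


if __name__ == "__main__":
    x_n, k_n = newton_1d(df, d2f, 0.0)
    x_g, k_g = gradient_descent_1d(df, 0.0, lr=0.1)
    print(f"Newton:           x = {x_n:.12f} after {k_n} iterations")
    print(f"Gradient descent: x = {x_g:.12f} after {k_g} iterations")
```

From $x_0 = 0$, Newton's method should stop after a handful of iterations, while gradient descent with this step size needs roughly a hundred. Neither lands exactly on $\ln 2$ in floating point; both stop once $|f'(x)|$ drops below the tolerance, which is how a method that only converges in the limit still terminates in practice.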


