Vision Transformer Attention

A visual analytics approach has been proposed to interpret the attention mechanism of vision transformers (ViTs), models that apply self-attention to image patches. The paper identifies the important heads, profiles attention strengths and patterns, and validates its findings with experts. This article takes an in-depth look at how an attention layer works in the context of computer vision, covering both single-headed and multi-headed attention.
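
As a rough illustration of single-headed and multi-headed attention, here is a minimal NumPy sketch of scaled dot-product self-attention. It assumes random projection matrices and a toy sequence of 5 tokens with 16-dimensional embeddings; the function names (`single_head_attention`, `multi_head_attention`) are hypothetical and not taken from any library. Real implementations batch all heads into one tensor instead of looping over them.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    """Numerically stable softmax along `axis`."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def single_head_attention(x, w_q, w_k, w_v):
    """Scaled dot-product self-attention for one head.

    x: (num_tokens, d_model) sequence of patch embeddings.
    w_q, w_k, w_v: (d_model, d_head) projection matrices (hypothetical, random here).
    Returns (output, weights) with shapes (num_tokens, d_head) and
    (num_tokens, num_tokens); each row of `weights` sums to 1.
    """
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])   # similarity of every token pair
    weights = softmax(scores, axis=-1)        # normalize over keys
    return weights @ v, weights


def multi_head_attention(x, heads, w_o):
    """Run several heads independently, concatenate, and project back.

    heads: list of (w_q, w_k, w_v) tuples, one per head.
    w_o: (num_heads * d_head, d_model) output projection.
    """
    outputs, all_weights = [], []
    for w_q, w_k, w_v in heads:
        out, w = single_head_attention(x, w_q, w_k, w_v)
        outputs.append(out)
        all_weights.append(w)
    return np.concatenate(outputs, axis=-1) @ w_o, np.stack(all_weights)


rng = np.random.default_rng(0)
num_tokens, d_model, num_heads = 5, 16, 4
d_head = d_model // num_heads
x = rng.standard_normal((num_tokens, d_model))
heads = [tuple(rng.standard_normal((d_model, d_head)) for _ in range(3))
         for _ in range(num_heads)]
w_o = rng.standard_normal((num_heads * d_head, d_model))
out, attn = multi_head_attention(x, heads, w_o)
print(out.shape, attn.shape)  # (5, 16) (4, 5, 5)
```

Each head sees the same tokens through its own projections, so different heads can learn different attention patterns; the stacked `attn` array is what interpretation tools inspect head by head.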

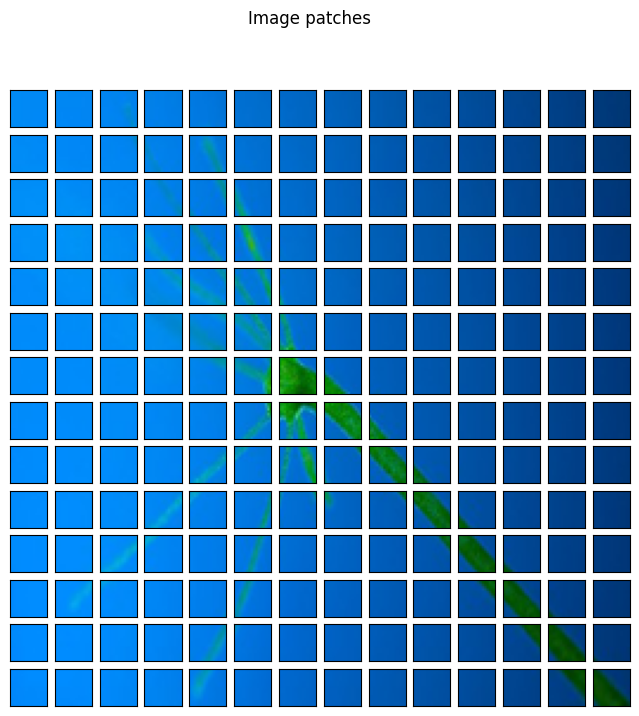

Visualization Results of Vision Transformer Fused Self-Attention Module

Vision Transformer (ViT) extends the success of transformer models from sequential data to images. The model decomposes an image into many smaller patches and arranges them into a sequence; multi-head self-attention is then applied to that sequence to learn the attention between patches.

A related paper presents the Deformable Attention Transformer, a novel hierarchical vision transformer that can be adapted to both image classification and dense prediction tasks.

From patch embeddings to attention maps, one hands-on guide covers vision transformers, including ViT, DeiT, Swin, and DINOv2, with full Python code to train, fine-tune, and interpret them on your own data. Research on 2D and 3D vision transformers shows that their attention mechanisms adapt the transformer architecture to computer vision tasks and capture global context effectively.
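
The patch-to-sequence step can be sketched in NumPy as follows. This is a minimal illustration assuming a 224×224 RGB image and 16×16 patches (the patch size used by ViT-B/16); `patchify` is a hypothetical helper, and the random embedding matrix stands in for the learned linear projection. A real ViT also prepends a class token and adds position embeddings before the sequence reaches multi-head self-attention.

```python
import numpy as np


def patchify(image: np.ndarray, patch_size: int) -> np.ndarray:
    """Split an (H, W, C) image into a sequence of flattened, non-overlapping patches.

    Returns an array of shape (num_patches, patch_size * patch_size * C), with
    patches ordered row by row (raster order).
    """
    h, w, c = image.shape
    if h % patch_size or w % patch_size:
        raise ValueError("image height and width must be divisible by patch_size")
    gh, gw = h // patch_size, w // patch_size
    # (gh, p, gw, p, C) -> (gh, gw, p, p, C) -> (gh*gw, p*p*C)
    patches = image.reshape(gh, patch_size, gw, patch_size, c)
    patches = patches.transpose(0, 2, 1, 3, 4)
    return patches.reshape(gh * gw, patch_size * patch_size * c)


rng = np.random.default_rng(0)
image = rng.random((224, 224, 3))          # toy RGB image
tokens = patchify(image, patch_size=16)    # 14 x 14 = 196 patches
print(tokens.shape)                        # (196, 768)

# Linear patch embedding (random weights here) projects each patch to d_model.
d_model = 64
w_embed = rng.standard_normal((tokens.shape[1], d_model)) * 0.02
embeddings = tokens @ w_embed
print(embeddings.shape)                    # (196, 64)
```

The transpose before the final reshape is what keeps each patch's pixels contiguous; reshaping the image directly to `(196, 768)` would mix rows from different patches.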

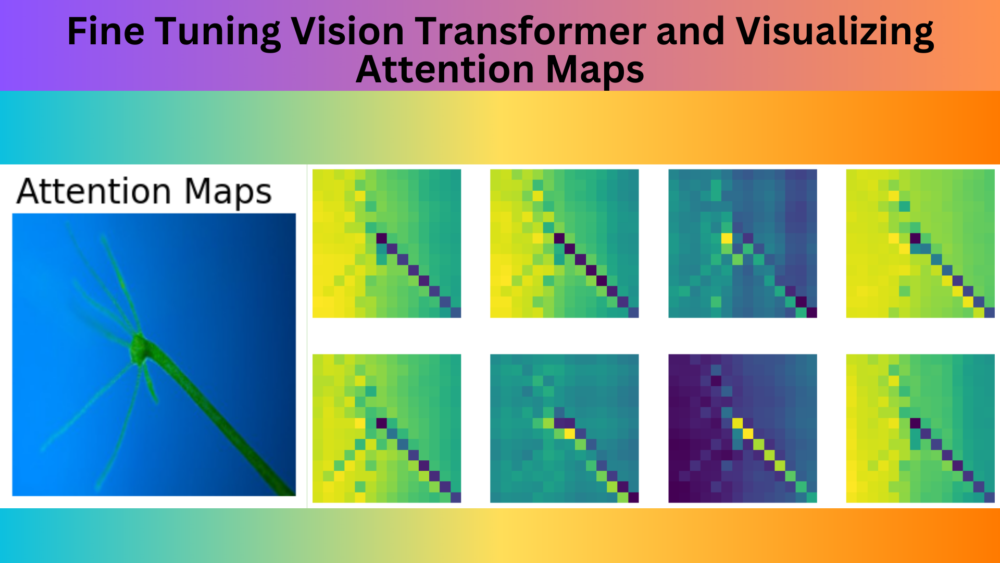

Fine-Tuning Vision Transformer and Visualizing Attention Maps

Abstract: This paper tackles the high computational and space complexity associated with multi-head self-attention (MHSA) in vanilla vision transformers. To this end, we propose hierarchical MHSA (H-MHSA), a novel approach that computes self-attention in a hierarchical fashion.

In this post, we will delve into how to quantify and visualize attention, focusing on the ViT model, and demonstrate how attention maps can be generated and interpreted.

Abstract: For state-of-the-art image understanding, vision transformers (ViTs) have become the standard architecture, but their processing diverges substantially from human attentional characteristics. We investigate whether this cognitive gap can be shrunk by fine-tuning the self-attention weights of Google’s ViT-B/16 on human saliency fixation maps. To isolate the effects of semantically …

In this study, to reduce the number of ViT operations while preserving the global and local features needed for image classification, we introduce a new graph head attention (GHA) mechanism for ViT that replaces MHA with fewer graph heads, using the proposed graph generation and graph attention.
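
The post above describes generating and interpreting attention maps without fixing a method. One widely used technique is attention rollout (Abnar & Zuidema, 2020), sketched below in NumPy; it is not necessarily the approach the post uses. The sketch assumes per-layer attention tensors with a [CLS] token at index 0 followed by a square grid of patches, and uses random row-normalized matrices as stand-ins for real model attentions. `attention_rollout` and `cls_attention_map` are hypothetical helper names.

```python
import numpy as np


def attention_rollout(attentions):
    """Attention rollout over a stack of layers.

    attentions: list of arrays, one per layer, each of shape
        (num_heads, num_tokens, num_tokens) with rows summing to 1.
    Returns a (num_tokens, num_tokens) matrix describing how much each
    output token draws on each input token across all layers.
    """
    num_tokens = attentions[0].shape[-1]
    rollout = np.eye(num_tokens)
    for layer_attn in attentions:
        a = layer_attn.mean(axis=0)               # average over heads
        a = a + np.eye(num_tokens)                # account for the residual connection
        a = a / a.sum(axis=-1, keepdims=True)     # re-normalize rows
        rollout = a @ rollout                     # compose with earlier layers
    return rollout


def cls_attention_map(rollout, grid_size):
    """Take the [CLS] row (token 0), drop the CLS column, reshape to a patch grid."""
    cls_to_patches = rollout[0, 1:]
    heat = cls_to_patches.reshape(grid_size, grid_size)
    return heat / heat.max()                      # scale so the maximum is 1 for display


# Toy stand-in for real attentions: 3 layers, 2 heads, [CLS] + 4x4 patches.
rng = np.random.default_rng(0)
grid, num_layers, num_heads = 4, 3, 2
num_tokens = 1 + grid * grid
fake = [rng.random((num_heads, num_tokens, num_tokens)) for _ in range(num_layers)]
fake = [f / f.sum(axis=-1, keepdims=True) for f in fake]   # rows sum to 1
heat = cls_attention_map(attention_rollout(fake), grid)
print(heat.shape)                                 # (4, 4)
```

With a real model, the per-layer arrays would come from the network itself (for example, Hugging Face `ViTModel` returns them when called with `output_attentions=True`), and the resulting grid is typically upsampled to the image size and overlaid as a heatmap.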