Vinija's Notes: Natural Language Processing Tokenizer

Tokenization is the critical discretization step that bridges continuous human language and discrete statistical models. In modern large language models (LLMs), it is not merely preprocessing but an integral architectural component that defines the model's sample space. Vinija's notes on the NLP tokenizer discuss different methods for tokenizing text, including subword tokenization techniques such as WordPiece, byte pair encoding (BPE), unigram subword tokenization, and SentencePiece.
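To make one of the subword techniques named above concrete, here is a minimal sketch of byte pair encoding on a toy word list. It is an illustration under simplifying assumptions (character-level start symbols, no end-of-word marker, ties broken by first occurrence), not the implementation used by any particular library; the function names `learn_bpe`, `merge_pair`, and `apply_bpe` are hypothetical.

```python
from collections import Counter


def merge_pair(symbols, pair):
    """Fuse every adjacent occurrence of `pair` in a tuple of symbols."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return tuple(out)


def learn_bpe(corpus, num_merges):
    """Learn up to `num_merges` merge rules from a list of words."""
    # Each word starts as a tuple of single characters.
    vocab = Counter(tuple(word) for word in corpus)
    merges = []
    for _ in range(num_merges):
        # Count adjacent symbol pairs, weighted by word frequency.
        pairs = Counter()
        for symbols, freq in vocab.items():
            for pair in zip(symbols, symbols[1:]):
                pairs[pair] += freq
        if not pairs:
            break
        # Most frequent pair; ties go to the pair seen first.
        best = max(pairs, key=pairs.get)
        merges.append(best)
        # Rewrite the vocabulary with the chosen pair fused.
        new_vocab = Counter()
        for symbols, freq in vocab.items():
            new_vocab[merge_pair(symbols, best)] += freq
        vocab = new_vocab
    return merges


def apply_bpe(word, merges):
    """Segment a word by replaying the learned merges in order."""
    symbols = tuple(word)
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return list(symbols)


merges = learn_bpe(["low", "low", "lower", "lowest"], num_merges=2)
print(apply_bpe("lowest", merges))
```

Because the merges are learned from frequent character sequences, a word that never appeared in the toy corpus (for example `slow`) still splits into known pieces rather than becoming an unknown token.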

The objective is to enable machines to read and comprehend the meaning of text. To facilitate language learning for machines, text needs to be divided into smaller units called tokens. This is difficult because reading and understanding language is far more complex than it seems at first glance. Tokenization is a foundational step in the NLP pipeline that shapes the entire workflow: it divides a string or text into a list of smaller units known as tokens.

Natural language processing is required when you want an intelligent system such as a robot to act on your instructions, or when you want to hear a decision from a dialogue-based clinical expert system.

Tokenization significantly influences the performance of language models (LMs). One paper traces the evolution of tokenizers from word level to subword level, analyzing how they balance tokens and types to enhance model adaptability while controlling complexity.
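As a rough illustration of dividing a string into a list of tokens, and of the difference between tokens (every occurrence) and types (distinct forms), the sketch below uses a simple regular expression. The pattern and the helper names `word_tokenize` and `token_type_counts` are assumptions chosen for the example, not a rule taken from the notes.

```python
import re

# Assumed pattern: a run of word characters, or a single punctuation mark.
TOKEN_PATTERN = re.compile(r"\w+|[^\w\s]")


def word_tokenize(text):
    """Split text into word and punctuation tokens."""
    return TOKEN_PATTERN.findall(text)


def token_type_counts(tokens):
    """Return (number of tokens, number of distinct types)."""
    return len(tokens), len(set(tokens))


tokens = word_tokenize("the cat sat on the mat.")
print(tokens)
print(token_type_counts(tokens))
```

Here `the` occurs twice, so the sentence has more tokens than types. A word-level vocabulary grows with the number of types, which is one reason subword tokenizers are used to keep it bounded.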

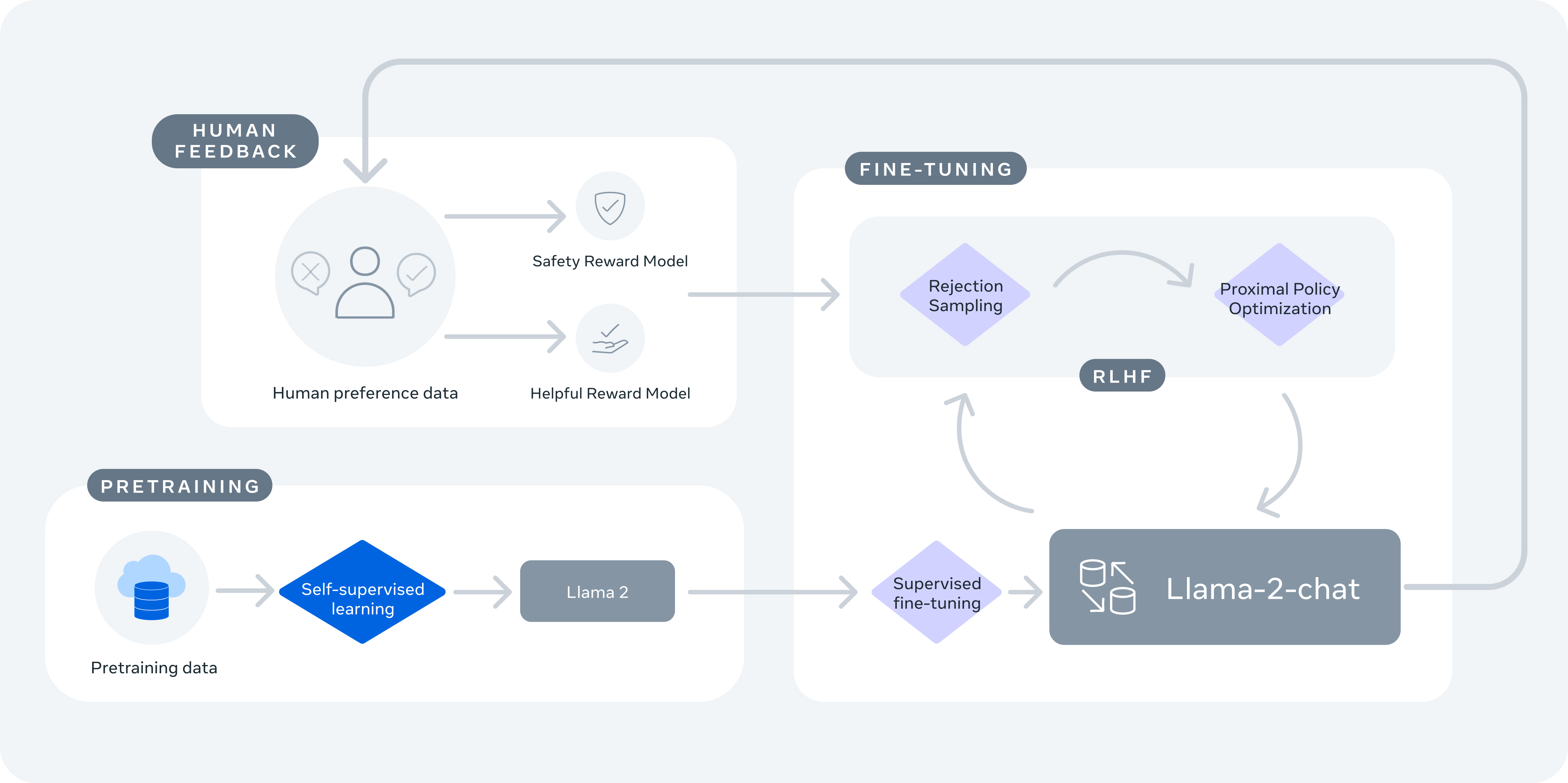

This section delineates the importance of tokenization in natural language processing (NLP) and elucidates its role in enabling machines to comprehend language. In English, this kind of tokenization and normalization may apply to just a limited set of cases, but in other languages these phenomena have to be treated in a less trivial manner.

Natural Language Processing, Session 4: Tokenization and Stemming. Instructor: Behrooz Mansouri, Spring 2023, University of Southern Maine.

After text standardization, the next critical step in natural language processing is tokenization. Tokenization involves breaking down the standardized text into smaller units called tokens.
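The order described here, standardize first and tokenize second, might look like the following sketch. The specific standardization steps (Unicode NFKC normalization, case folding, whitespace collapsing) are assumptions picked for illustration, and splitting on whitespace only suits languages that separate words with spaces; the names `standardize` and `standardize_and_tokenize` are hypothetical.

```python
import unicodedata


def standardize(text):
    """Normalize Unicode forms, fold case, and collapse whitespace."""
    text = unicodedata.normalize("NFKC", text)  # e.g. ligatures become plain letters
    text = text.casefold()                      # aggressive lowercasing
    return " ".join(text.split())               # trim and collapse runs of whitespace


def standardize_and_tokenize(text):
    """Standardize the text, then split it into whitespace-delimited tokens."""
    return standardize(text).split()


print(standardize_and_tokenize("  Tokenization\tIS   the NEXT step "))
```

Running standardization first means that surface variants such as `IS` and `is` map to the same token, which reduces the number of distinct types the later stages have to handle.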
