Vinija's Notes: Models: Toolformer

Toolformer is based on a pre-trained GPT-J model with 6.7 billion parameters. It was trained with a self-supervised approach that samples candidate API calls, filters them, and uses the surviving calls to augment an existing text dataset. The resulting model learns to decide which APIs to call, when to call them, what arguments to pass, and how best to incorporate the results into future token prediction. This requires nothing more than a handful of demonstrations for each API.
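The "sample, then filter" step can be sketched in a few lines. The sketch below assumes the filtering rule described in the Toolformer paper: a sampled call is kept only if inserting the call together with its result lowers the language-model loss on the following tokens by at least a threshold, compared with the better of inserting nothing or inserting the call without its result. The names `Candidate`, `keep_useful_calls`, and `toy_loss` are hypothetical, and `toy_loss` is a stand-in for a real language-model loss, not something taken from the paper.

```python
from typing import Callable, List, NamedTuple


class Candidate(NamedTuple):
    """One sampled API call (hypothetical container for this sketch)."""
    position: int  # token index where the call would be inserted
    call: str      # e.g. "Calculator(400 / 1400)"
    result: str    # what the API returned, e.g. "0.29"


def keep_useful_calls(
    candidates: List[Candidate],
    loss_fn: Callable[[str, int], float],
    threshold: float = 1.0,
) -> List[Candidate]:
    """Keep calls whose result reduces the loss by at least `threshold`.

    `loss_fn(prefix, position)` is assumed to return the model's loss on the
    tokens after `position` when `prefix` is inserted at that position.
    """
    kept = []
    for c in candidates:
        loss_plain = loss_fn("", c.position)                 # no call at all
        loss_call_only = loss_fn(f"[{c.call}]", c.position)  # call, no result
        loss_with_result = loss_fn(f"[{c.call} -> {c.result}]", c.position)
        baseline = min(loss_plain, loss_call_only)
        if baseline - loss_with_result >= threshold:
            kept.append(c)
    return kept


def toy_loss(prefix: str, position: int) -> float:
    """Stand-in loss: pretend that only seeing "0.29" helps the prediction."""
    return 0.5 if "0.29" in prefix else 2.0


candidates = [
    Candidate(5, "Calculator(400 / 1400)", "0.29"),
    Candidate(9, "Calculator(1 + 1)", "2"),
]
kept = keep_useful_calls(candidates, toy_loss, threshold=1.0)
print(kept)  # only the first candidate survives the filter
```

With a real model, `loss_fn` would be a cross-entropy over the continuation; the structure of the comparison stays the same.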

Toolformer, released by Meta, is a self-supervised approach described in the paper "Toolformer: Language Models Can Teach Themselves to Use Tools". The paper shows that LMs can teach themselves to use external tools via simple APIs and achieve the best of both worlds: the general abilities of a large language model plus the precision of specialized tools.

Given the prevalence of decoder-based models in generative AI, the article focuses on decoder models (such as GPT-x) rather than encoder models (such as BERT and its variants). Henceforth, the term LLMs is used interchangeably with "decoder-based models".
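To make "what arguments to pass, and how to incorporate the results" concrete, here is a minimal sketch of executing API calls that are embedded in generated text. It assumes the inline format from the Toolformer paper, where a call is written as `[Tool(args)]` and then extended with its result; the ASCII `->` separator, the `TOOLS` registry, and the two-decimal rounding in `calculator` are choices made for this illustration only.

```python
import ast
import operator
import re

# Arithmetic operators the toy calculator accepts.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def calculator(expression: str) -> str:
    """Evaluate a basic arithmetic expression without using eval()."""
    def ev(node):
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](ev(node.left), ev(node.right))
        raise ValueError("unsupported expression")

    return f"{ev(ast.parse(expression, mode='eval').body):.2f}"


# Hypothetical tool registry: tool name -> function taking the raw argument string.
TOOLS = {"Calculator": calculator}

# Matches "[Name(args)]" where the call has no result attached yet.
CALL = re.compile(r"\[(\w+)\((.*?)\)\]")


def fill_api_calls(text: str) -> str:
    """Run every known tool call in `text` and splice its result in place."""
    def run(match: re.Match) -> str:
        name, args = match.group(1), match.group(2)
        tool = TOOLS.get(name)
        if tool is None:
            return match.group(0)  # unknown tool: leave the text untouched
        return f"[{name}({args}) -> {tool(args)}]"

    return CALL.sub(run, text)


text = "400 out of 1400 participants [Calculator(400 / 1400)] passed the test."
print(fill_api_calls(text))
# 400 out of 1400 participants [Calculator(400 / 1400) -> 0.29] passed the test.
```

At inference time the model would then continue generating after the inserted result, so the following tokens can condition on it.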

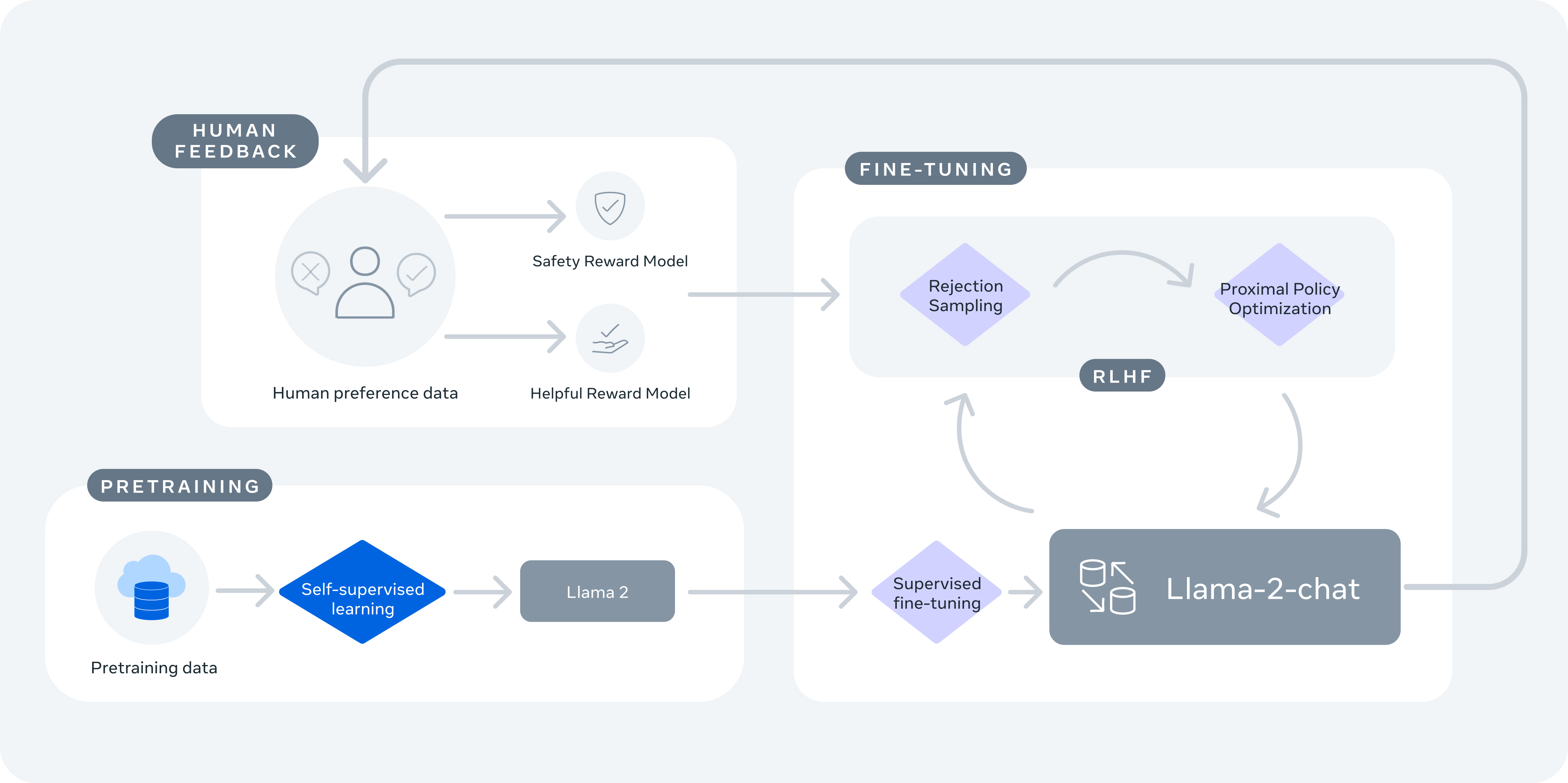

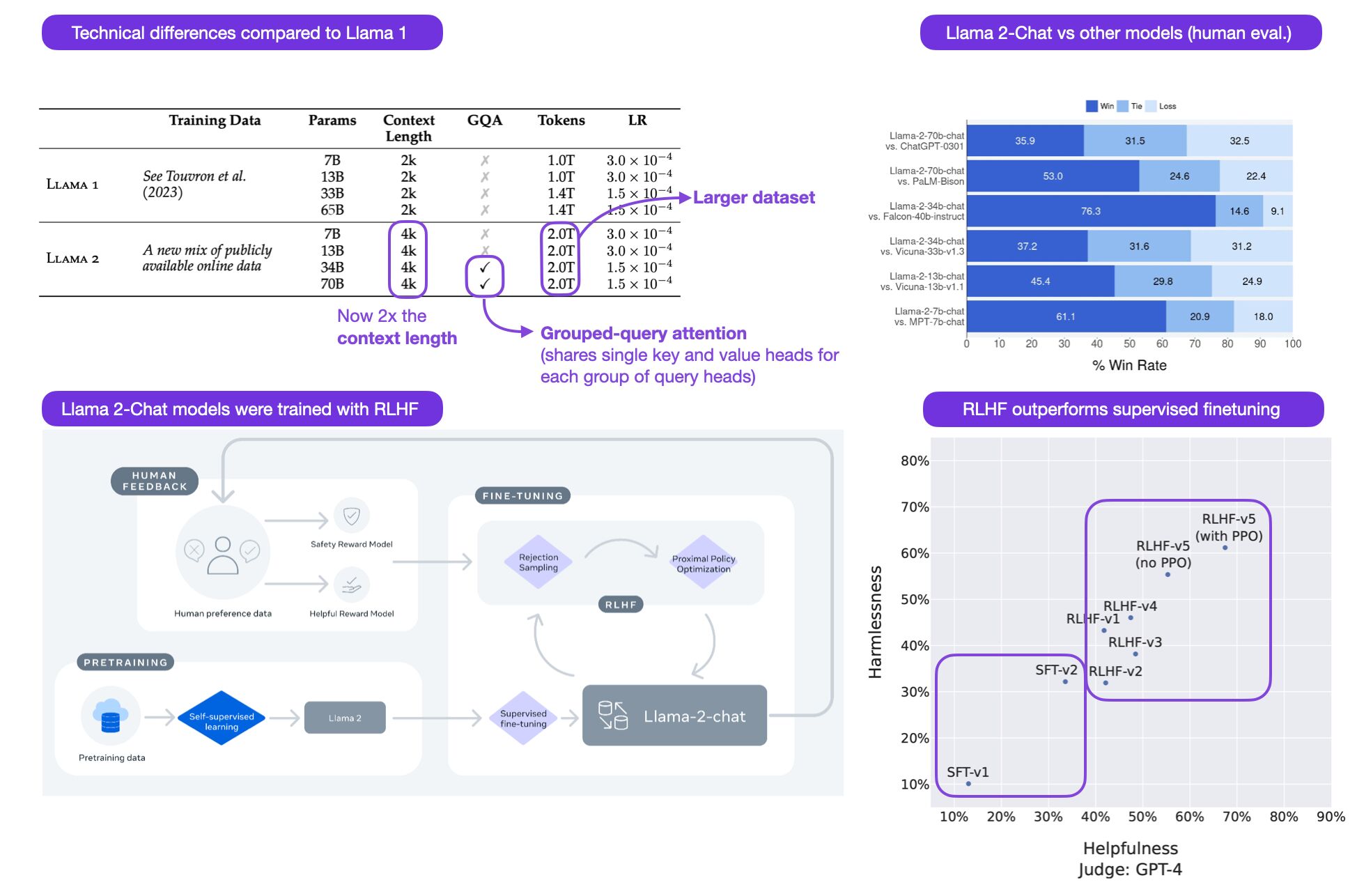

The author gives examples of RNN-generated text from different sources, such as Paul Graham's essays, Shakespeare's works, articles, algebraic geometry in LaTeX, Linux source code, and baby names. These examples show how RNNs can learn complex structures, grammar, and context from raw text.

Combine models: use ensemble methods to combine the predictions of multiple models into a stronger overall model. Use pretrained models: leverage transfer learning by taking models pretrained on similar tasks and fine-tuning them for your specific problem.

To debug performance issues in a machine learning model effectively, evaluate the model's performance on relevant data and understand the context of the problem. The key considerations for debugging differ from one scenario to another.
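One simple way to combine the predictions of multiple models is majority voting. The sketch below is only an illustration of that idea, not a method prescribed by the notes; the function name `majority_vote` and the example labels are made up for the demonstration.

```python
from collections import Counter
from typing import List, Sequence


def majority_vote(predictions: Sequence[Sequence[str]]) -> List[str]:
    """Combine per-model label predictions by majority vote.

    predictions[m][i] is the label model m assigns to example i.
    """
    return [
        Counter(votes).most_common(1)[0][0]  # most frequent label for this example
        for votes in zip(*predictions)
    ]


model_a = ["cat", "dog", "dog"]
model_b = ["cat", "cat", "dog"]
model_c = ["dog", "cat", "dog"]

combined = majority_vote([model_a, model_b, model_c])
print(combined)  # ['cat', 'cat', 'dog']
```

Using an odd number of models avoids ties in binary problems; for probabilistic models, averaging predicted probabilities is a common alternative.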

