Vinija's Notes: Models: CLIP

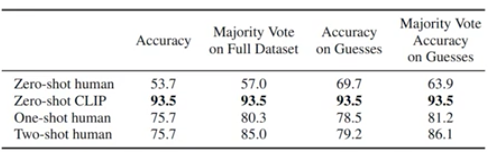

CLIP is a zero-shot, multimodal model that uses a contrastive loss for pre-training. CLIP can predict the most relevant text caption for an image without being optimized for any particular task.

We’ve recently seen a bunch of extraordinary advancements with GPT-4, Toolformer, LLaMA, RLHF, Visual ChatGPT, etc. 📝 Here are some primers on some recent LLMs and related concepts to get you up.
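To make the CLIP description above concrete, here is a minimal sketch of a symmetric contrastive loss over a batch of paired image and text embeddings, plus zero-shot caption selection by cosine similarity. It is an illustration under stated assumptions, not the implementation from the notes or from OpenAI: the function names are hypothetical, the embeddings are plain NumPy arrays standing in for encoder outputs, and the fixed `temperature=0.07` is an illustrative choice.

```python
import numpy as np


def l2_normalize(x, axis=-1, eps=1e-12):
    """Scale vectors to unit length so dot products equal cosine similarity."""
    norm = np.linalg.norm(x, axis=axis, keepdims=True)
    return x / np.maximum(norm, eps)


def log_softmax(logits, axis):
    """Numerically stable log-softmax along the given axis."""
    shifted = logits - logits.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))


def clip_contrastive_loss(image_emb, text_emb, temperature=0.07):
    """Symmetric cross-entropy over an (N, N) image-text similarity matrix.

    Row i of image_emb and row i of text_emb are assumed to be a matching
    pair; every other combination in the batch acts as a negative.
    The temperature value is an assumption made for this sketch.
    """
    image_emb = l2_normalize(np.asarray(image_emb, dtype=np.float64))
    text_emb = l2_normalize(np.asarray(text_emb, dtype=np.float64))
    logits = image_emb @ text_emb.T / temperature
    idx = np.arange(logits.shape[0])
    # Image-to-text: each row should put its mass on the diagonal entry.
    loss_i2t = -log_softmax(logits, axis=1)[idx, idx].mean()
    # Text-to-image: each column should put its mass on the diagonal entry.
    loss_t2i = -log_softmax(logits, axis=0)[idx, idx].mean()
    return 0.5 * (loss_i2t + loss_t2i)


def zero_shot_caption(image_emb, caption_embs):
    """Return the index of the caption most similar to a single image."""
    image_emb = l2_normalize(np.asarray(image_emb, dtype=np.float64))
    caption_embs = l2_normalize(np.asarray(caption_embs, dtype=np.float64))
    return int(np.argmax(caption_embs @ image_emb))
```

In this sketch the loss is low when matching pairs sit on the diagonal of the similarity matrix and high when pairs are misaligned. Zero-shot prediction needs no task-specific training: it only ranks candidate captions by similarity to the image embedding.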

It describes how attention originated from the need to address the bottleneck problem in sequence-to-sequence models. The key components of attention, including the encoder, decoder, and context vector, are explained. Various attention mechanisms and extensions are also covered.

A table of contents for reviews on research papers within AI. CLIP. The in-line diagrams are taken from resources mentioned in the reference section within each topic. author = {Jain, Vinija and Chadha, Aman}, title = {Paper Reviews}, howpublished = {\url{vinija.ai}}, year = {2022}.

🖼🔠 CLIP: OpenAI’s Contrastive Language-Image Pretraining model 🔗 Link for notes under: lnkd.in gcv8t2ak 👋🏼 Hey LinkedIn connections, I’ve….

What are bias and variance in a machine learning model? Explain the bias-variance trade-off.

Given the prevalence of decoder-based models in the area of generative AI, the article focuses on decoder models (such as GPT-x) rather than encoder models (such as BERT and its variants). Henceforth, the term LLMs is used interchangeably with “decoder-based models”.

He gives examples of RNN-generated text from different sources, like Paul Graham’s essays, Shakespeare’s works, articles, algebraic geometry in LaTeX, Linux source code, and baby names. These examples show how RNNs can learn complex structures, grammar, and context from raw text.

Nevertheless, it also opens doors for pioneering research in artificial intelligence and natural language processing domains. This article delves into various strategies to counteract and mitigate the effects of hallucination at various stages of the model’s pipeline.

This page contains a table of contents for my notes and reviews on machine learning topics and basic concepts that would be great to have in your toolkit as you plan to train your own model. Each section is divided by topic to help you get bite-sized information that is easy to understand!
