V1 And V2 Depth Anything Map Combination Tutorial YouTube

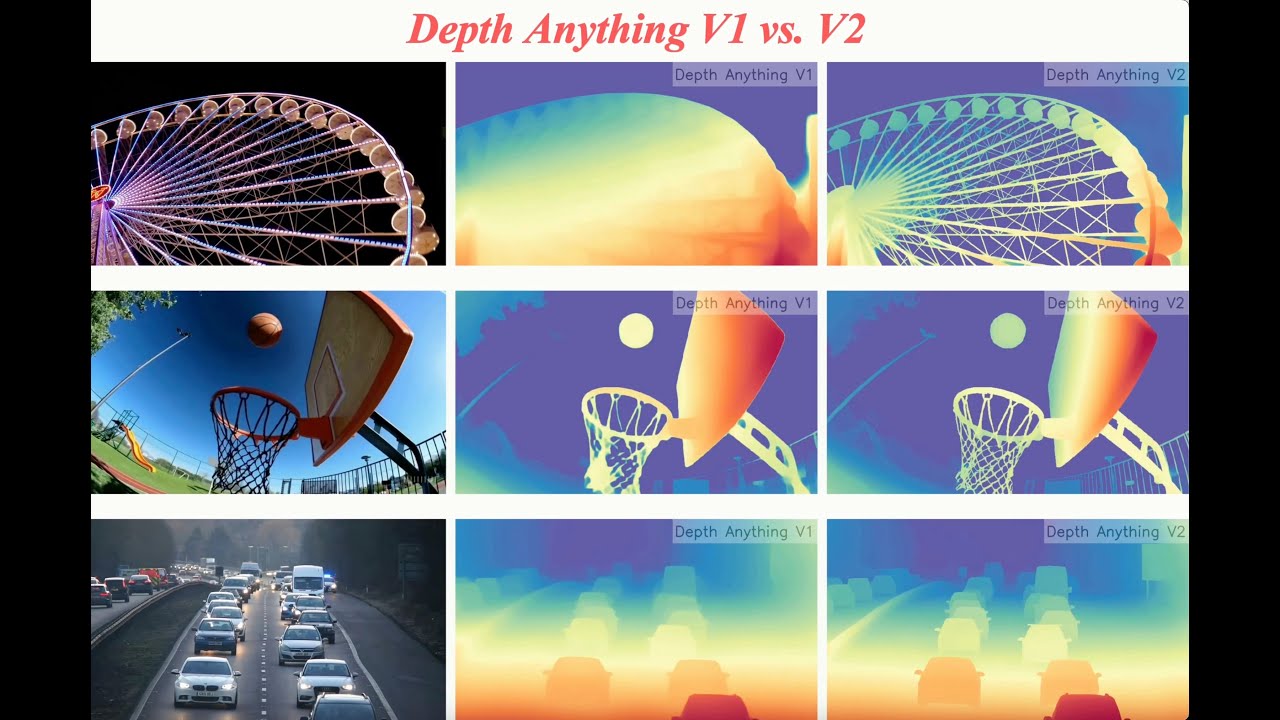

Depth Anything V2 YouTube

In this tutorial, we walk through how to use Depth Anything V2 for monocular depth estimation, focusing on understanding the model, its outputs, how to run inference, and interpreting its…
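As a rough illustration of the "run inference" step, here is a minimal sketch that assumes the Hugging Face `transformers` depth-estimation pipeline and the `depth-anything/Depth-Anything-V2-Small-hf` checkpoint. Neither is named in the tutorial itself, and the helper `estimate_depth` is a hypothetical name used only for this example.

```python
def estimate_depth(image_path, model_id="depth-anything/Depth-Anything-V2-Small-hf"):
    """Run monocular depth estimation on one image file.

    Assumption: inference goes through the Hugging Face `transformers`
    pipeline; the checkpoint id is an illustrative choice, not something
    the tutorial specifies.
    """
    # Imported lazily so that defining this function does not require
    # the heavy dependencies (transformers, torch, Pillow) to be installed.
    from PIL import Image
    from transformers import pipeline

    pipe = pipeline(task="depth-estimation", model=model_id)
    image = Image.open(image_path).convert("RGB")
    result = pipe(image)

    # The pipeline returns a dict with two entries:
    #   "predicted_depth": the raw model output as a tensor
    #   "depth": a PIL image of the depth map, rescaled for viewing
    return result
```

Calling `estimate_depth("photo.jpg")["depth"].save("depth.png")` would write a viewable depth image, where `photo.jpg` is a placeholder path. The values from this checkpoint are relative depth, so they order the scene from near to far rather than giving distances in meters.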

First Attempt At Depth Map YouTube

Upload a photo and the app will estimate how far each part of the scene is, creating a colorful depth map that you can explore with a slider. It also provides downloadable grayscale and 16-bit raw…

This document provides an overview of the different ways to use Depth Anything V2 for depth estimation. It introduces the available interfaces and their typical use cases, and guides you to the appropriate detailed documentation.

Learn how to estimate object distance from a single image using Depth Anything V2. Build depth-aware workflows in Roboflow for robotics and more.

This work presents Depth Anything V2. Without pursuing fancy techniques, we aim to reveal crucial findings to pave the way towards building a powerful monocular depth estimation model.
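The grayscale and 16-bit outputs mentioned above can be approximated with a simple min-max rescale of the raw depth array. This is a minimal sketch using NumPy; the function names are hypothetical, and the app described above may well use a different scaling.

```python
import numpy as np

def normalize_depth(depth):
    """Rescale a relative depth map to floats in [0, 1] (min-max scaling)."""
    depth = np.asarray(depth, dtype=np.float64)
    lo, hi = depth.min(), depth.max()
    if hi - lo < 1e-12:
        # A constant map carries no depth ordering; return all zeros.
        return np.zeros_like(depth)
    return (depth - lo) / (hi - lo)

def to_uint8(depth):
    """8-bit grayscale version, suitable for quick viewing."""
    return np.round(normalize_depth(depth) * 255).astype(np.uint8)

def to_uint16(depth):
    """16-bit version, which keeps far more depth levels than 8-bit."""
    return np.round(normalize_depth(depth) * 65535).astype(np.uint16)

# Tiny made-up depth map, used only to exercise the functions.
raw = np.array([[0.5, 1.0], [2.0, 4.5]], dtype=np.float32)
gray8 = to_uint8(raw)
gray16 = to_uint16(raw)
```

The 16-bit variant is usually the better choice for further processing, because 8-bit quantization leaves only 256 depth levels and can cause visible banding on smooth surfaces.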

Depth Maps For Newbie's YouTube

This repo provides a TensorRT implementation of the Depth Anything depth estimation model in both C++ and Python, enabling efficient real-time inference. Depth Anything V1. Depth Anything V2. 2024-06-20: Added support for TensorRT 10. 2024-06-17: Depth Anything V2 has been integrated.

This extension leverages the Depth Anything V2 model to provide accurate and detailed monocular depth estimation from images. For AI artists, this means you can generate depth maps that add a new dimension to your artwork, enabling more realistic and immersive visual effects.

In this notebook we will show how to perform depth estimation using Depth Anything models with OpenVINO. We will consider the DepthAnything and DepthAnythingV2 models.

This article will discuss Depth Anything V2, a practical solution for robust monocular depth estimation. The Depth Anything model aims to be a simple yet powerful foundation model that works well with any image under any conditions.
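Since both model versions are in play, one simple way to combine a V1 and a V2 depth map is to normalize each and take a weighted average. This is an assumption made for illustration, not a method described in any of the sources above; `blend_depth_maps` and `weight_v2` are hypothetical names.

```python
import numpy as np

def normalize(depth):
    """Min-max rescale to [0, 1] so maps from different models are comparable."""
    depth = np.asarray(depth, dtype=np.float64)
    lo, hi = depth.min(), depth.max()
    if hi - lo < 1e-12:
        return np.zeros_like(depth)
    return (depth - lo) / (hi - lo)

def blend_depth_maps(depth_v1, depth_v2, weight_v2=0.5):
    """Weighted average of two normalized depth maps of the same shape.

    weight_v2 = 0.0 returns the normalized V1 map,
    weight_v2 = 1.0 returns the normalized V2 map.
    """
    depth_v1 = np.asarray(depth_v1)
    depth_v2 = np.asarray(depth_v2)
    if depth_v1.shape != depth_v2.shape:
        raise ValueError("depth maps must have the same shape")
    if not 0.0 <= weight_v2 <= 1.0:
        raise ValueError("weight_v2 must be in [0, 1]")
    return (1.0 - weight_v2) * normalize(depth_v1) + weight_v2 * normalize(depth_v2)

# Made-up values standing in for the two models' outputs on one image.
v1 = np.array([[0.0, 10.0], [20.0, 40.0]])
v2 = np.array([[1.0, 3.0], [3.0, 5.0]])
blended = blend_depth_maps(v1, v2, weight_v2=0.5)
```

Normalizing first matters because relative depth maps from different models generally do not share a scale or offset, so averaging the raw values would let one model dominate.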

Depth Anything V2 Monocular Depth Estimation Explanation And Real Time
