Using Mixed Precision on RDUs

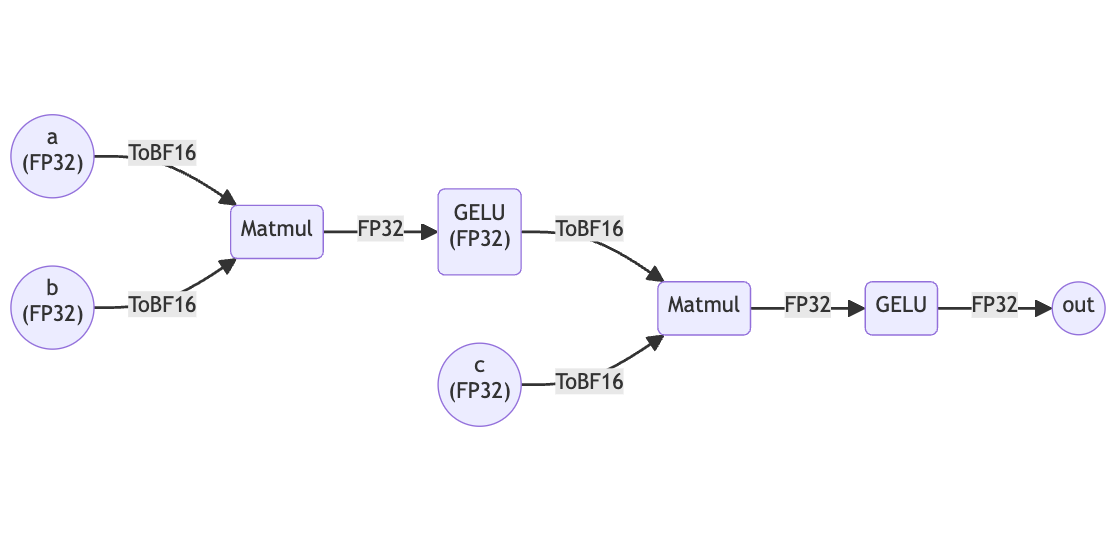

SambaFlow 1.18 introduces support for mixed precision on RDUs, streamlining the experience for model developers and overcoming framework limitations. Our novel graph-level automatic mixed precision (GraphAMP) algorithm automatically optimizes model precision, making it easier to achieve a desirable balance between accuracy and performance.

Deep neural network training has traditionally relied on the IEEE single precision format. With mixed precision, however, you can train with half precision while maintaining the network accuracy achieved with single precision. This technique of using both single and half precision representations is referred to as the mixed precision technique. Mixed precision algorithms combine low and high precision computations in order to benefit from the performance gains of reduced precision while retaining good accuracy. We’ve talked about mixed precision techniques before, and this blog post is a summary of those techniques and an introduction if you’re new to mixed precision.
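To see why some values are still kept in single precision, it can help to look at what half precision rounding does to small numbers. The sketch below uses only Python's standard `struct` module, whose `e` and `f` format codes correspond to IEEE half and single precision. The helper names (`to_fp16`, `to_fp32`) and the example values, including the scale factor of 1024, are illustrative assumptions and do not come from any of the posts quoted here.

```python
import struct

def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE half precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

def to_fp32(x: float) -> float:
    """Round a Python float to the nearest IEEE single precision value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]

# 1) A small weight update disappears when the sum is stored in half precision,
#    because the spacing between half precision values near 1.0 is about 0.001.
weight = 1.0
update = 1e-4  # illustrative value, smaller than half of that spacing
fp16_result = to_fp16(to_fp16(weight) + to_fp16(update))
fp32_result = to_fp32(to_fp32(weight) + to_fp32(update))
print("fp16 sum:", fp16_result)  # the update is rounded away
print("fp32 sum:", fp32_result)  # the update survives

# 2) A tiny gradient underflows to zero in half precision, but survives if it
#    is multiplied by a scale factor first and divided back out in higher
#    precision afterwards (the idea behind loss scaling).
tiny_grad = 1e-8  # illustrative value below the smallest half precision subnormal
scale = 1024.0    # illustrative scale factor
unscaled = to_fp16(tiny_grad)
recovered = to_fp16(tiny_grad * scale) / scale
print("fp16 gradient without scaling:", unscaled)
print("fp16 gradient with scaling:   ", recovered)
```

Both effects are the usual motivation for keeping a single precision copy of the weights and for scaling the loss before the backward pass when training in half precision.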

Mixed precision training uses lower precision data types for certain parts of the neural network while maintaining higher precision data types for others. By doing so, it…

Mixed precision computation is a promising method for substantially increasing the speed of numerical computations. However, using mixed precision data is a dou…

Mixed precision training offers significant computational speedup by performing operations in half precision format, while storing minimal information in single precision to retain as much information as possible in critical parts of the network.

One forum question on the topic: if I use AMP for mixed precision training, I also see determinism even without deterministic=True. Has anyone seen similar cases or have insights into how using AMP could remove nondeterminism? Here’s a writeup with further details on experiments and code. Thanks!
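For readers who have not used AMP in PyTorch, a single training step might look roughly like the sketch below. It uses stock PyTorch (`torch.autocast` and a gradient scaler), not SambaFlow's GraphAMP, whose API is not shown in the text above. The model, data shapes, learning rate, and the fallback to bfloat16 on CPU are all assumptions made so the example runs on any machine.

```python
import torch
from torch import nn

# Assumption: float16 on a CUDA GPU, bfloat16 as the low precision type on CPU.
use_cuda = torch.cuda.is_available()
device = "cuda" if use_cuda else "cpu"
amp_dtype = torch.float16 if use_cuda else torch.bfloat16

torch.manual_seed(0)
# Hypothetical toy model; its parameters stay in single precision.
model = nn.Sequential(nn.Linear(16, 32), nn.ReLU(), nn.Linear(32, 1)).to(device)
optimizer = torch.optim.SGD(model.parameters(), lr=0.01)

# Loss scaling only matters for float16, so the scaler is a no-op on CPU.
# Recent PyTorch releases also offer torch.amp.GradScaler as the newer spelling.
scaler = torch.cuda.amp.GradScaler(enabled=use_cuda)

# Random stand-in data.
inputs = torch.randn(8, 16, device=device)
targets = torch.randn(8, 1, device=device)

def train_step():
    optimizer.zero_grad()
    # Inside autocast, eligible ops such as Linear run in the low precision type.
    with torch.autocast(device_type=device, dtype=amp_dtype):
        outputs = model(inputs)
        # Compute the loss in single precision.
        loss = nn.functional.mse_loss(outputs.float(), targets)
    scaler.scale(loss).backward()  # scale the loss, then backpropagate
    scaler.step(optimizer)         # unscale gradients and apply the update
    scaler.update()                # adjust the scale factor for the next step
    return loss.item(), outputs.dtype

loss_value, output_dtype = train_step()
print("loss:", loss_value)
print("forward output dtype:", output_dtype)
print("parameter dtype:", model[0].weight.dtype)
```

The forward pass produces low precision activations while the parameters and the optimizer update remain in single precision, which is the split the paragraphs above describe.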
